8. Matching Methods

Mike Nguyen

2026-03-22

i_matching.Rmd

library(causalverse)

#> Registered S3 method overwritten by 'car':

#> method from

#> na.action.merMod lme4

#> -- Attaching causalverse core packages --

#> ggplot2 4.0.2 magrittr 2.0.4

#> dplyr 1.2.0 data.table 1.18.2.1

#> tidyr 1.3.2 fixest 0.13.2

#> purrr 1.2.1 rio 1.2.41. Introduction

1.1 The Fundamental Problem and Why Matching Helps

The fundamental problem of causal inference is that we never observe the same unit in both the treated and untreated state at the same time. For a unit that receives treatment (), we observe but not the counterfactual . Conversely, for an untreated unit (), we observe but not .

When treatment is not randomly assigned, treated and control groups will generally differ in their pre-treatment characteristics. A naive comparison of outcomes between the two groups conflates the treatment effect with pre-existing differences. Matching addresses this problem by constructing a comparison group that is as similar as possible to the treated group in terms of observed covariates, thereby isolating the causal effect of treatment.

The intuition is straightforward: if a treated unit and a matched control unit share the same values of all relevant pre-treatment covariates , then any difference in their outcomes can be attributed to the treatment rather than to confounders.

1.2 Selection on Observables

Matching methods rely on the selection on observables assumption (also called unconfoundedness, ignorability, or exogeneity of treatment conditional on covariates). Formally, this requires two conditions:

Conditional Independence Assumption (CIA)

This states that, conditional on the observed covariates , treatment assignment is independent of potential outcomes. In other words, after conditioning on , there are no remaining unobserved confounders that jointly affect both treatment selection and outcomes. This is a strong and fundamentally untestable assumption. The credibility of any matching analysis rests on whether the researcher has measured and included all relevant confounders.

Common Support (Overlap) Condition

This requires that for every value of the covariates, there exist both treated and untreated units. Without common support, extrapolation replaces interpolation, and causal inference becomes unreliable. In practice, violations of common support are diagnosed by examining the distribution of propensity scores across treatment groups. Regions where one group has propensity scores near 0 or 1 with no overlap from the other group indicate lack of common support, and observations in these regions should typically be trimmed from the analysis.

1.3 The Curse of Dimensionality

If the covariate vector is high-dimensional, exact matching—finding control units with identical values on every covariate—becomes infeasible. With binary covariates, there are possible strata. With continuous covariates, exact matching is essentially impossible. This is the curse of dimensionality.

Rosenbaum and Rubin (1983) provided the key theoretical breakthrough to address this problem:

Propensity Score Theorem. If the CIA holds conditional on , then it also holds conditional on the propensity score :

This reduces a potentially high-dimensional matching problem to a one-dimensional one: instead of matching on all covariates simultaneously, one can match on the scalar propensity score. However, it is important to verify that matching on the propensity score actually achieves covariate balance—it is balance on the covariates, not the propensity score itself, that matters for causal identification.

1.4 ATT vs. ATE

Matching methods can target different causal estimands:

Average Treatment Effect on the Treated (ATT):

This is the average effect of treatment for those who actually received treatment. It asks: “For units that were treated, what would have happened on average if they had not been treated?” Most matching estimators naturally target the ATT, because they search for control matches for each treated unit.

Average Treatment Effect (ATE):

This is the average effect across the entire population. It requires matching in both directions—finding control matches for treated units and treated matches for control units—or reweighting the sample to represent the full population. Full matching and propensity score weighting methods more naturally target the ATE.

Average Treatment Effect on the Controls (ATC):

The choice of estimand has practical implications. The ATT requires common support only in the region of covariate space occupied by treated units. The ATE requires common support across the entire covariate distribution, which is a stronger requirement. When treated and control groups differ substantially in their covariate distributions, the ATT is often more credible.

1.5 Mathematical Framework for Matching Estimators

Nearest-Neighbor Matching

For each treated unit , define the set of matched controls as:

where is a distance metric (Euclidean, Mahalanobis, etc.). The matching estimator for the ATT is then:

where is the number of treated units.

Inverse Probability Weighting (IPW)

Rather than matching, one can reweight observations:

This estimates the ATE. For the ATT, the weights for the control group become . IPW estimators can be sensitive to extreme propensity scores (near 0 or 1), motivating trimming or stabilization strategies.

Subclassification (Stratification)

Divide the propensity score distribution into strata and estimate within-stratum treatment effects:

where is the estimated treatment effect in stratum and is a weight (e.g., the fraction of treated units in stratum for the ATT). Cochran (1968) showed that five strata remove approximately 90% of the bias due to the stratifying variable.

2. Simulated Data

We construct a simulated dataset with realistic confounding to demonstrate matching methods. The data-generating process ensures that treatment assignment depends on observed covariates, creating selection bias that matching methods must address.

set.seed(42)

n <- 1000

# Covariates

age <- rnorm(n, mean = 40, sd = 10)

income <- rnorm(n, mean = 50000, sd = 15000)

education <- rbinom(n, size = 1, prob = 0.4)

experience <- pmax(0, age - 22 + rnorm(n, 0, 3))

region <- sample(c("North", "South", "East", "West"), n, replace = TRUE)

# Treatment assignment depends on covariates (creates confounding)

# Older, higher-income, more educated individuals are more likely to be treated

propensity_true <- plogis(

-2 + 0.03 * age + 0.00002 * income + 0.8 * education + 0.02 * experience

)

treatment <- rbinom(n, 1, propensity_true)

# Potential outcomes

# Y(0): baseline outcome depends on covariates

y0 <- 10 + 0.5 * age + 0.0001 * income + 3 * education +

0.2 * experience + rnorm(n, 0, 5)

# Y(1): treatment effect is heterogeneous

tau_i <- 5 + 0.1 * age - 0.00001 * income + 2 * education

y1 <- y0 + tau_i

# Observed outcome

outcome <- treatment * y1 + (1 - treatment) * y0

# Assemble data

sim_data <- data.frame(

id = 1:n,

age = age,

income = income,

education = education,

experience = experience,

region = region,

treatment = treatment,

outcome = outcome,

ps_true = propensity_true

)

head(sim_data)

#> id age income education experience region treatment outcome ps_true

#> 1 1 53.70958 84875.88 0 33.56159 West 1 60.56432 0.8786851

#> 2 2 34.35302 57861.83 1 12.33939 North 1 42.21534 0.7746253

#> 3 3 43.63128 64561.00 0 21.35752 South 1 51.16313 0.7363938

#> 4 4 46.32863 55654.60 1 25.52850 West 1 54.14162 0.8597869

#> 5 5 44.04268 35061.00 0 23.80939 West 1 55.28842 0.6221510

#> 6 6 38.93875 41037.76 0 16.88812 South 0 44.50161 0.5809514

# Summary statistics by treatment group

sim_data %>%

group_by(treatment) %>%

dplyr::summarise(

n = n(),

mean_age = mean(age),

mean_inc = mean(income),

pct_educ = mean(education),

mean_exp = mean(experience),

mean_y = mean(outcome),

.groups = "drop"

)

#> # A tibble: 2 × 7

#> treatment n mean_age mean_inc pct_educ mean_exp mean_y

#> <int> <int> <dbl> <dbl> <dbl> <dbl> <dbl>

#> 1 0 313 36.4 45727. 0.313 14.6 37.0

#> 2 1 687 41.3 51830. 0.464 19.4 50.5Notice that the treated group is older, has higher income, is more educated, and has more experience than the control group. A naive comparison of mean outcomes between treated and control will overstate the treatment effect because these confounders positively affect both treatment probability and outcomes.

# Naive estimate (biased)

naive_att <- mean(sim_data$outcome[sim_data$treatment == 1]) -

mean(sim_data$outcome[sim_data$treatment == 0])

cat("Naive ATT estimate:", round(naive_att, 2), "\n")

#> Naive ATT estimate: 13.42

# True ATT (from the DGP)

true_att <- mean(tau_i[treatment == 1])

cat("True ATT:", round(true_att, 2), "\n")

#> True ATT: 9.543. Balance Assessment with causalverse

Before and after matching, it is essential to assess whether the covariate distributions are balanced across treatment groups. The causalverse package provides several functions for this purpose.

3.1 balance_assessment(): SUR and Hotelling’s T-Test

The balance_assessment() function performs a joint test of covariate balance using two complementary approaches:

Seemingly Unrelated Regression (SUR): Regresses each covariate on the treatment indicator in a system of equations. The SUR framework accounts for correlations among covariates when testing whether treatment predicts any of them. A significant coefficient on treatment for any covariate indicates imbalance on that dimension.

Hotelling’s T-squared test: A multivariate generalization of the two-sample -test. It tests the null hypothesis that the mean vectors of covariates are equal across treatment and control groups. Rejection of this test indicates that the groups differ on at least one covariate (or a linear combination of covariates).

Together, these tests provide a rigorous assessment of whether the treated and control groups are comparable prior to (or after) matching.

balance_results <- causalverse::balance_assessment(

data = sim_data,

treatment_col = "treatment",

"age", "income", "education", "experience"

)

# SUR results: coefficient on treatment for each covariate

print(balance_results$SUR)

#>

#> systemfit results

#> method: SUR

#>

#> N DF SSR detRCov OLS-R2 McElroy-R2

#> system 4000 3992 2.10543e+11 48454662601 0.036648 0.029224

#>

#> N DF SSR MSE RMSE R2 Adj R2

#> ageeq 1000 998 9.52317e+04 9.54226e+01 9.76845e+00 0.051518 0.050568

#> incomeeq 1000 998 2.10543e+11 2.10965e+08 1.45246e+04 0.036648 0.035682

#> educationeq 1000 998 2.38193e+02 2.38670e-01 4.88539e-01 0.020231 0.019249

#> experienceeq 1000 998 9.62112e+04 9.64040e+01 9.81855e+00 0.049050 0.048097

#>

#> The covariance matrix of the residuals used for estimation

#> ageeq incomeeq educationeq experienceeq

#> ageeq 95.422593 -4.96787e+03 -0.346096 90.718501

#> incomeeq -4967.871142 2.10965e+08 191.124624 -4380.154839

#> educationeq -0.346096 1.91125e+02 0.238670 -0.336404

#> experienceeq 90.718501 -4.38015e+03 -0.336404 96.403979

#>

#> The covariance matrix of the residuals

#> ageeq incomeeq educationeq experienceeq

#> ageeq 95.422593 -4.96787e+03 -0.346096 90.718501

#> incomeeq -4967.871142 2.10965e+08 191.124624 -4380.154839

#> educationeq -0.346096 1.91125e+02 0.238670 -0.336404

#> experienceeq 90.718501 -4.38015e+03 -0.336404 96.403979

#>

#> The correlations of the residuals

#> ageeq incomeeq educationeq experienceeq

#> ageeq 1.0000000 -0.0350138 -0.0725224 0.9458511

#> incomeeq -0.0350138 1.0000000 0.0269347 -0.0307140

#> educationeq -0.0725224 0.0269347 1.0000000 -0.0701318

#> experienceeq 0.9458511 -0.0307140 -0.0701318 1.0000000

#>

#>

#> SUR estimates for 'ageeq' (equation 1)

#> Model Formula: age ~ treatment

#> <environment: 0x145eb68b0>

#>

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 36.372283 0.552145 65.87447 < 2.22e-16 ***

#> treatment 4.904618 0.666155 7.36258 3.7681e-13 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 9.768449 on 998 degrees of freedom

#> Number of observations: 1000 Degrees of Freedom: 998

#> SSR: 95231.747478 MSE: 95.422593 Root MSE: 9.768449

#> Multiple R-Squared: 0.051518 Adjusted R-Squared: 0.050568

#>

#>

#> SUR estimates for 'incomeeq' (equation 2)

#> Model Formula: income ~ treatment

#> <environment: 0x145ebd8a8>

#>

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 45727.405 820.981 55.69852 < 2.22e-16 ***

#> treatment 6103.093 990.500 6.16163 1.043e-09 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 14524.63084 on 998 degrees of freedom

#> Number of observations: 1000 Degrees of Freedom: 998

#> SSR: 210542971225.668 MSE: 210964901.027723 Root MSE: 14524.63084

#> Multiple R-Squared: 0.036648 Adjusted R-Squared: 0.035682

#>

#>

#> SUR estimates for 'educationeq' (equation 3)

#> Model Formula: education ~ treatment

#> <environment: 0x145ebc098>

#>

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 0.3130990 0.0276138 11.33849 < 2.22e-16 ***

#> treatment 0.1512387 0.0333157 4.53957 6.3238e-06 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 0.488539 on 998 degrees of freedom

#> Number of observations: 1000 Degrees of Freedom: 998

#> SSR: 238.192568 MSE: 0.23867 Root MSE: 0.488539

#> Multiple R-Squared: 0.020231 Adjusted R-Squared: 0.019249

#>

#>

#> SUR estimates for 'experienceeq' (equation 4)

#> Model Formula: experience ~ treatment

#> <environment: 0x145ebe798>

#>

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 14.580274 0.554977 26.27183 < 2.22e-16 ***

#> treatment 4.803989 0.669571 7.17472 1.4113e-12 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 9.818553 on 998 degrees of freedom

#> Number of observations: 1000 Degrees of Freedom: 998

#> SSR: 96211.170818 MSE: 96.403979 Root MSE: 9.818553

#> Multiple R-Squared: 0.04905 Adjusted R-Squared: 0.048097

# Hotelling's T-squared test: joint test of mean equality

print(balance_results$Hotelling)

#> Test stat: 120.18

#> Numerator df: 4

#> Denominator df: 995

#> P-value: 0The SUR results show whether treatment significantly predicts each covariate individually (within the joint system), while the Hotelling test provides an overall assessment. If the Hotelling test rejects (small p-value), the groups are not balanced, and matching or weighting is needed.

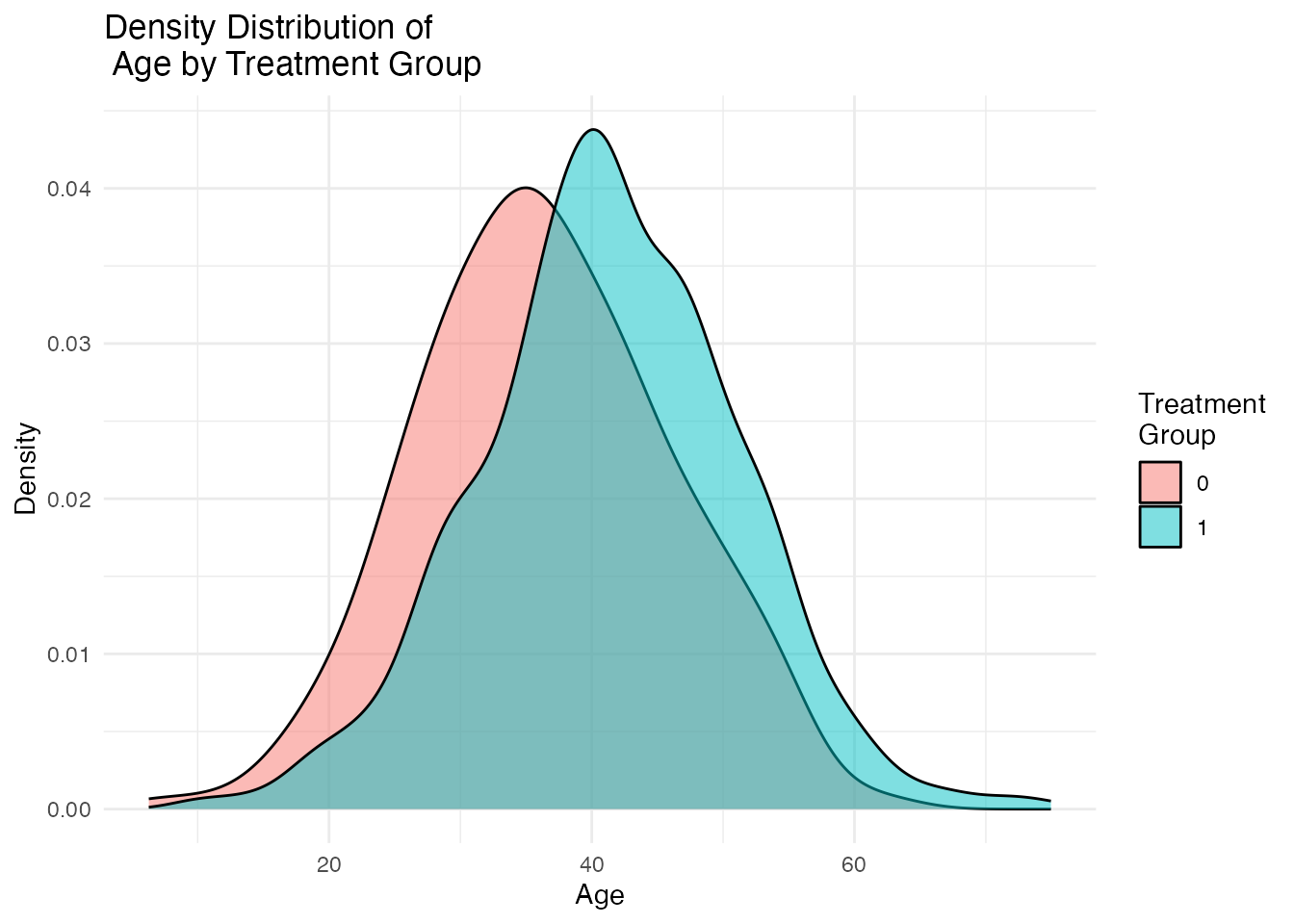

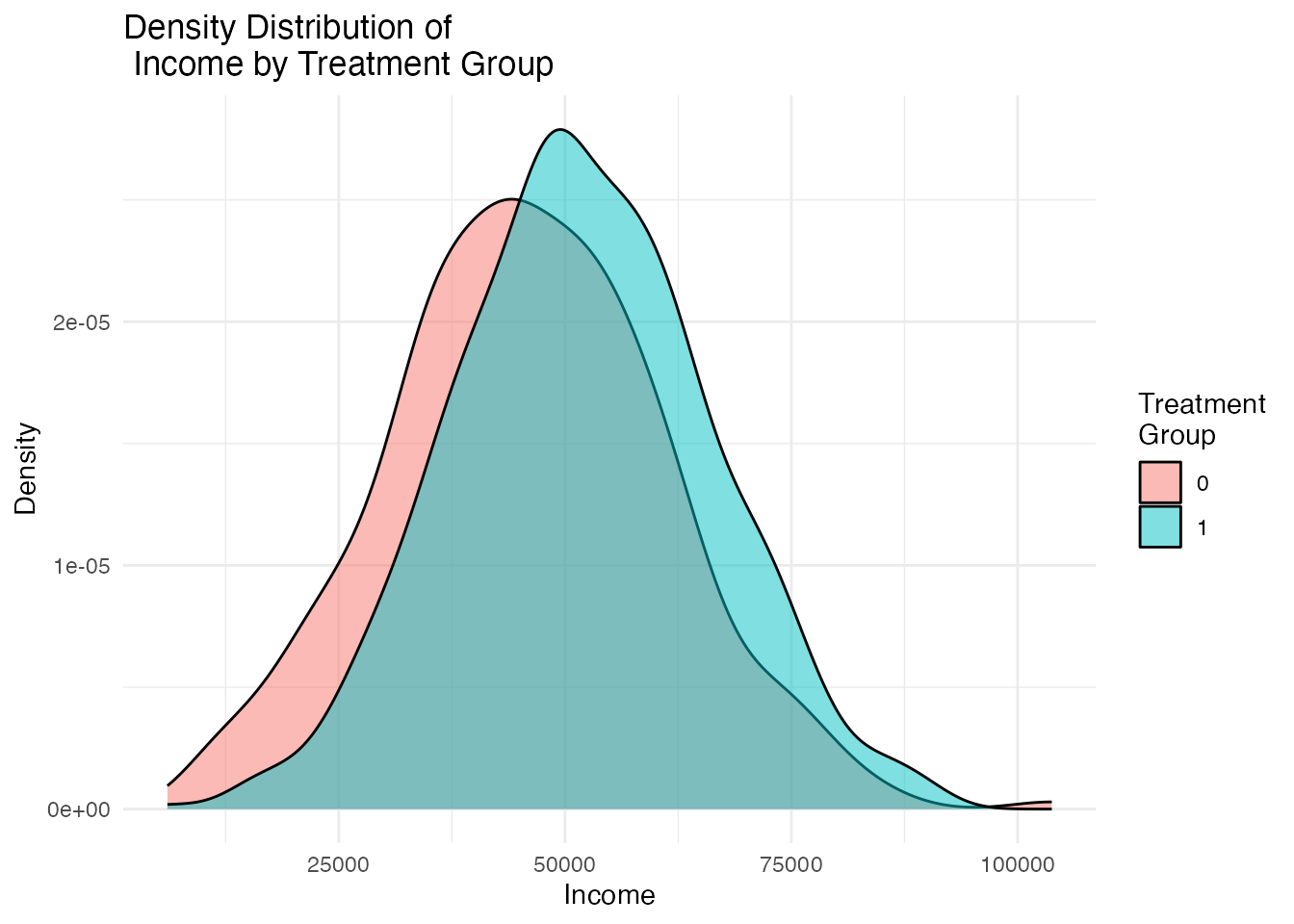

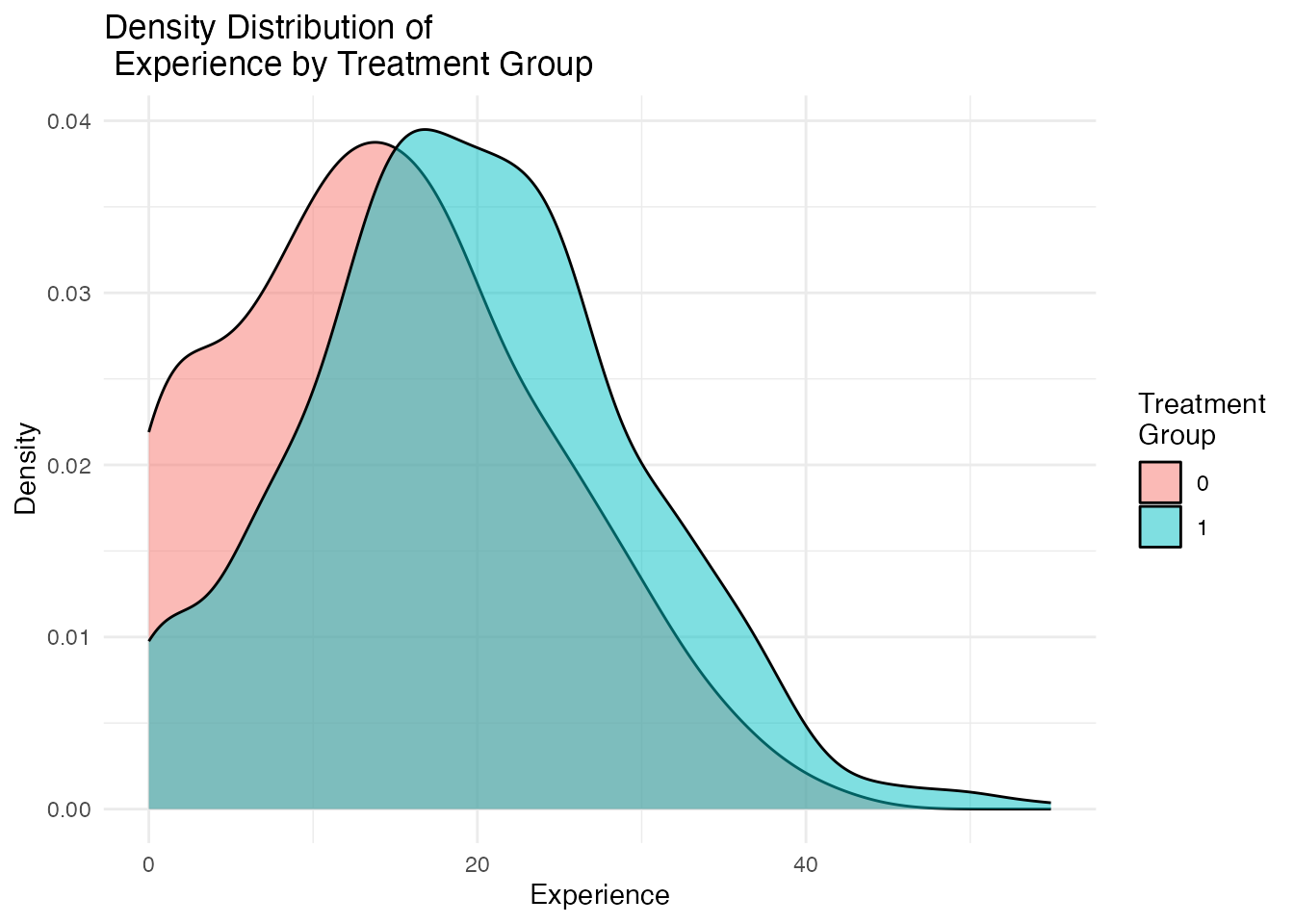

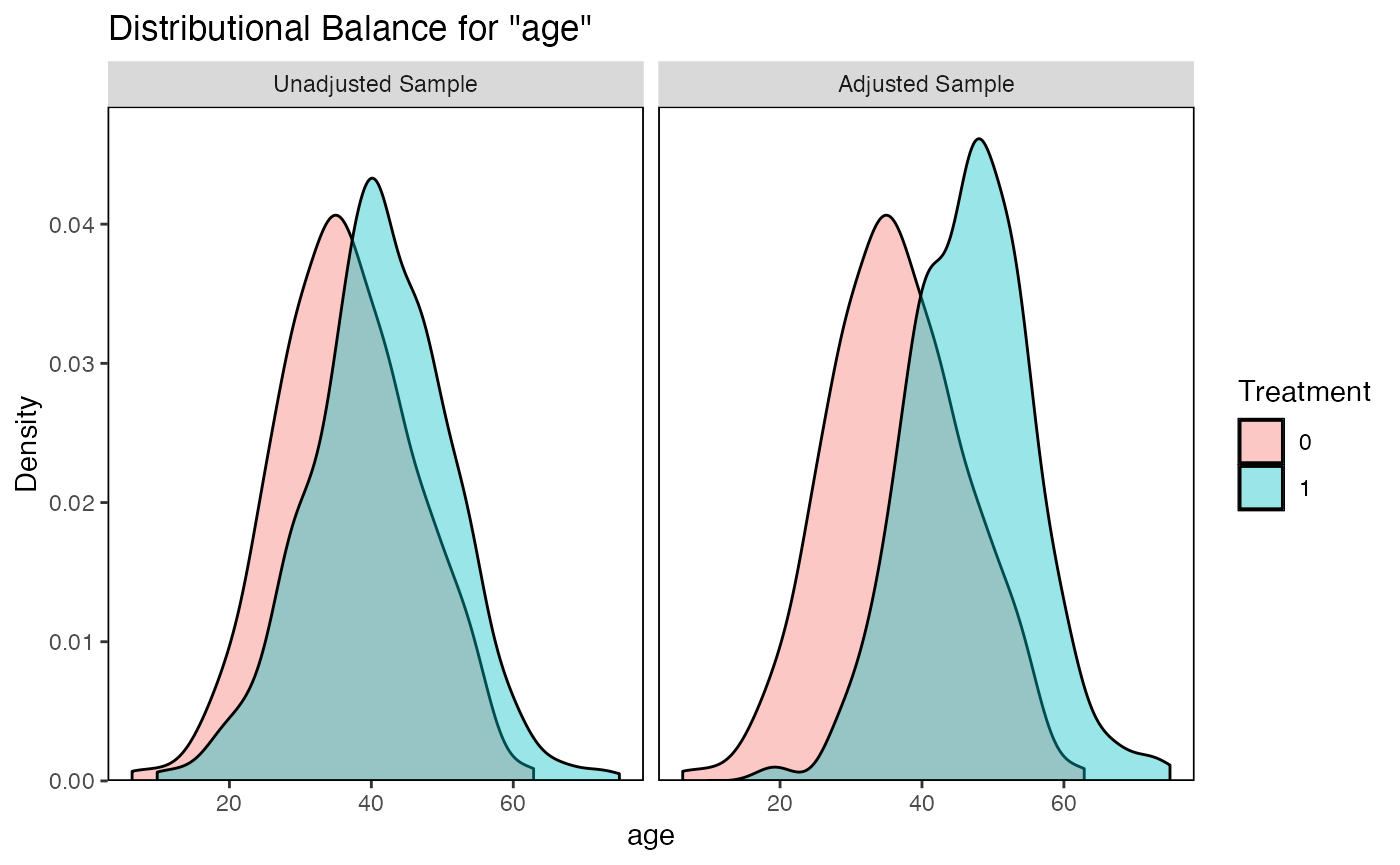

3.2 plot_density_by_treatment(): Density Plots

Visual inspection of covariate distributions is equally important. The plot_density_by_treatment() function creates overlapping density plots for each covariate, split by treatment group. Non-overlapping densities indicate covariate imbalance.

density_plots <- causalverse::plot_density_by_treatment(

data = sim_data,

var_map = list(

"age" = "Age",

"income" = "Income",

"experience" = "Experience"

),

treatment_var = c("treatment" = "Treatment\nGroup")

)

# Display individual plots

density_plots[["Age"]]

density_plots[["Income"]]

density_plots[["Experience"]]

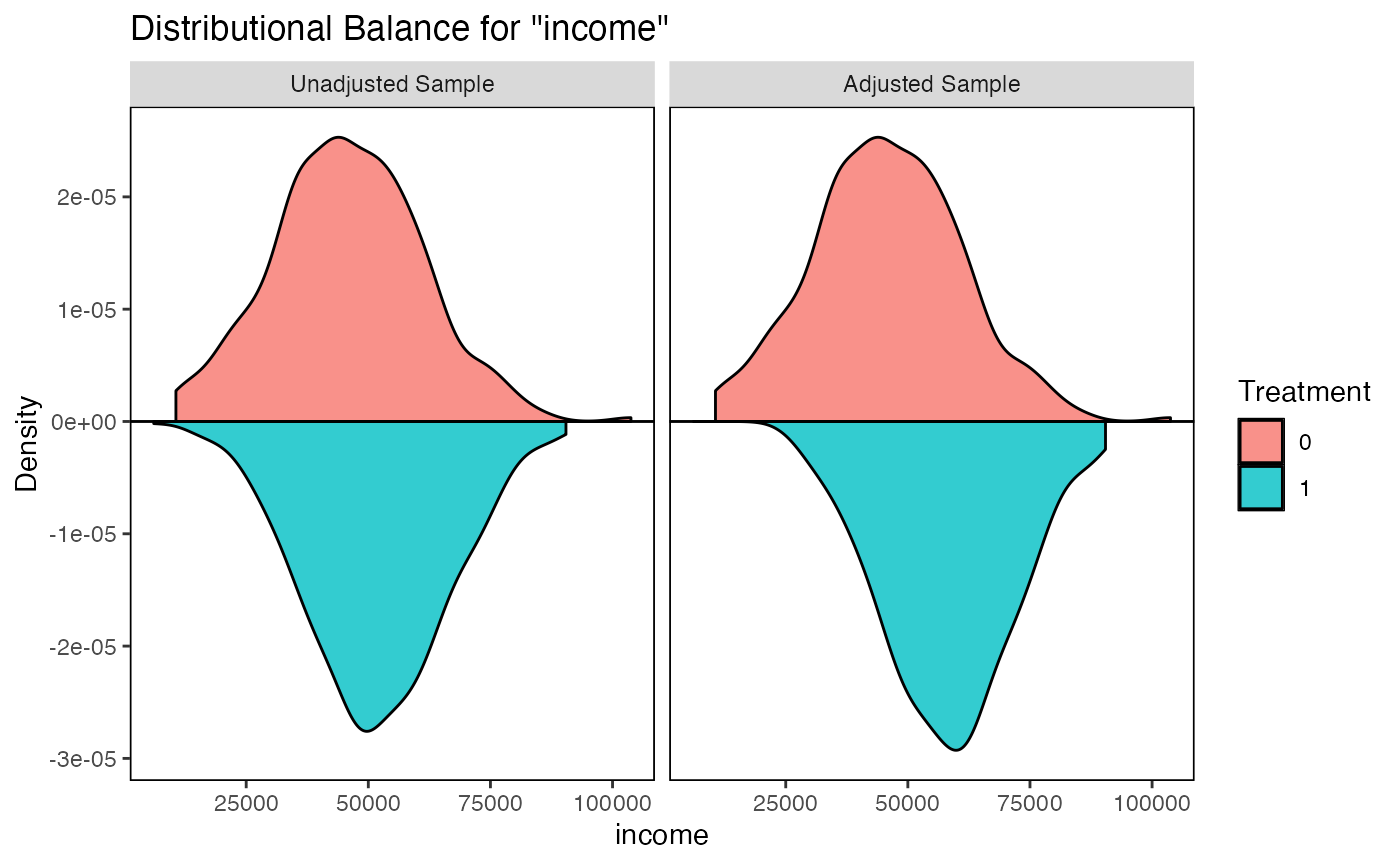

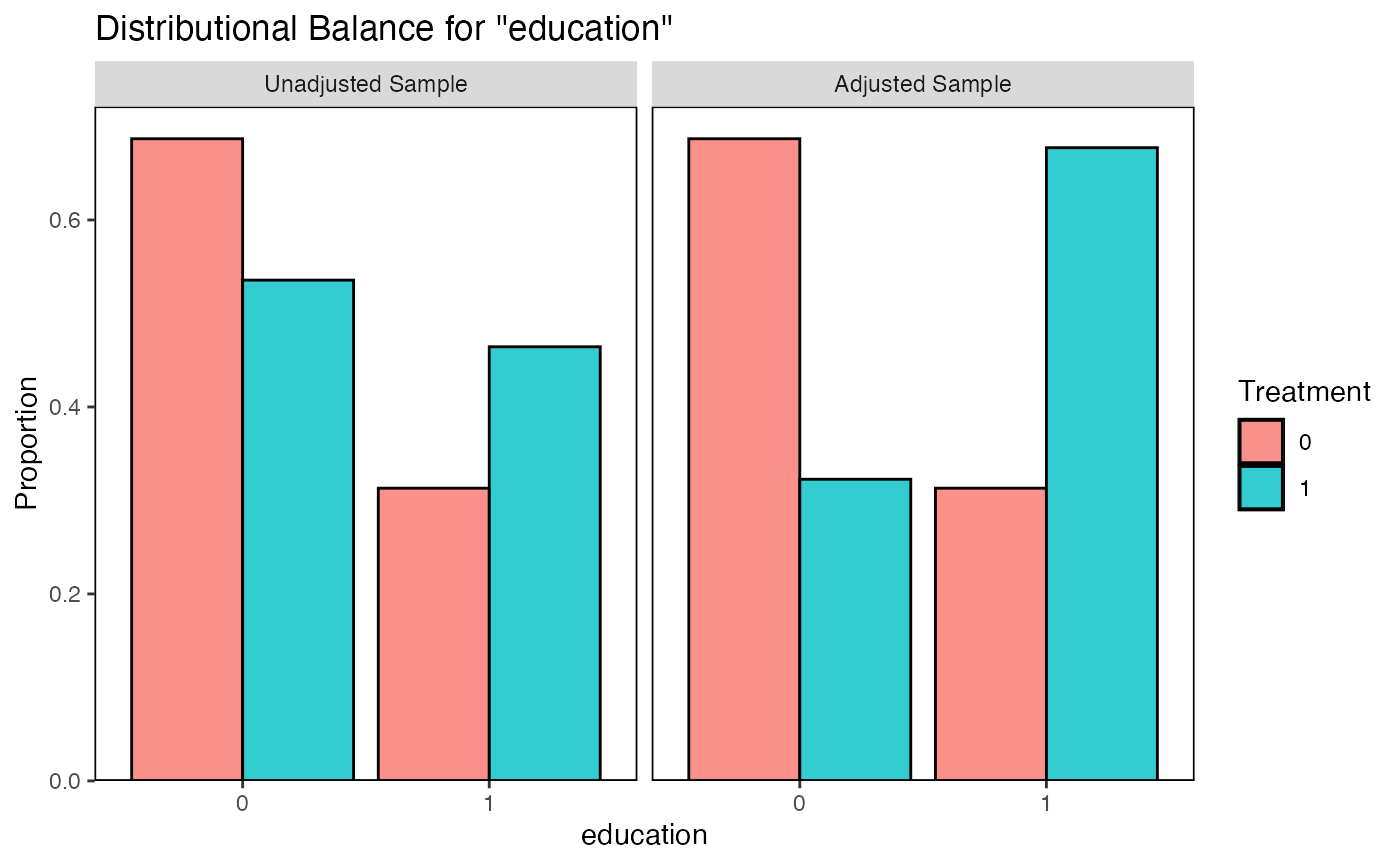

Ideal balance would show nearly identical density curves for treated and control groups. Visible separation between the curves indicates confounding that must be addressed.

3.3 balance_scatter_custom(): Standardized Mean Difference Scatter Plot

The balance_scatter_custom() function creates a scatter plot comparing covariate balance before and after matching refinement. Each point represents a covariate: the x-axis shows the standardized mean difference (SMD) before refinement and the y-axis shows the SMD after refinement. Points below the 45-degree line indicate that refinement improved balance.

This function is designed to work with PanelMatch objects and is most useful in panel data settings. See the DID vignette for a complete worked example.

# Requires PanelMatch objects - not runnable without panel data setup

# See the DID vignette (vignettes/c_did.Rmd) for a complete example

library(PanelMatch)

# Example usage (not run):

# balance_scatter_custom(

# pm_result_list = list(PM.results.5m, PM.results.10m),

# panel.data = pd,

# set.names = c("5 Matches", "10 Matches"),

# covariates = c("y", "tradewb"),

# xlim = c(0, 0.5),

# ylim = c(0, 0.5)

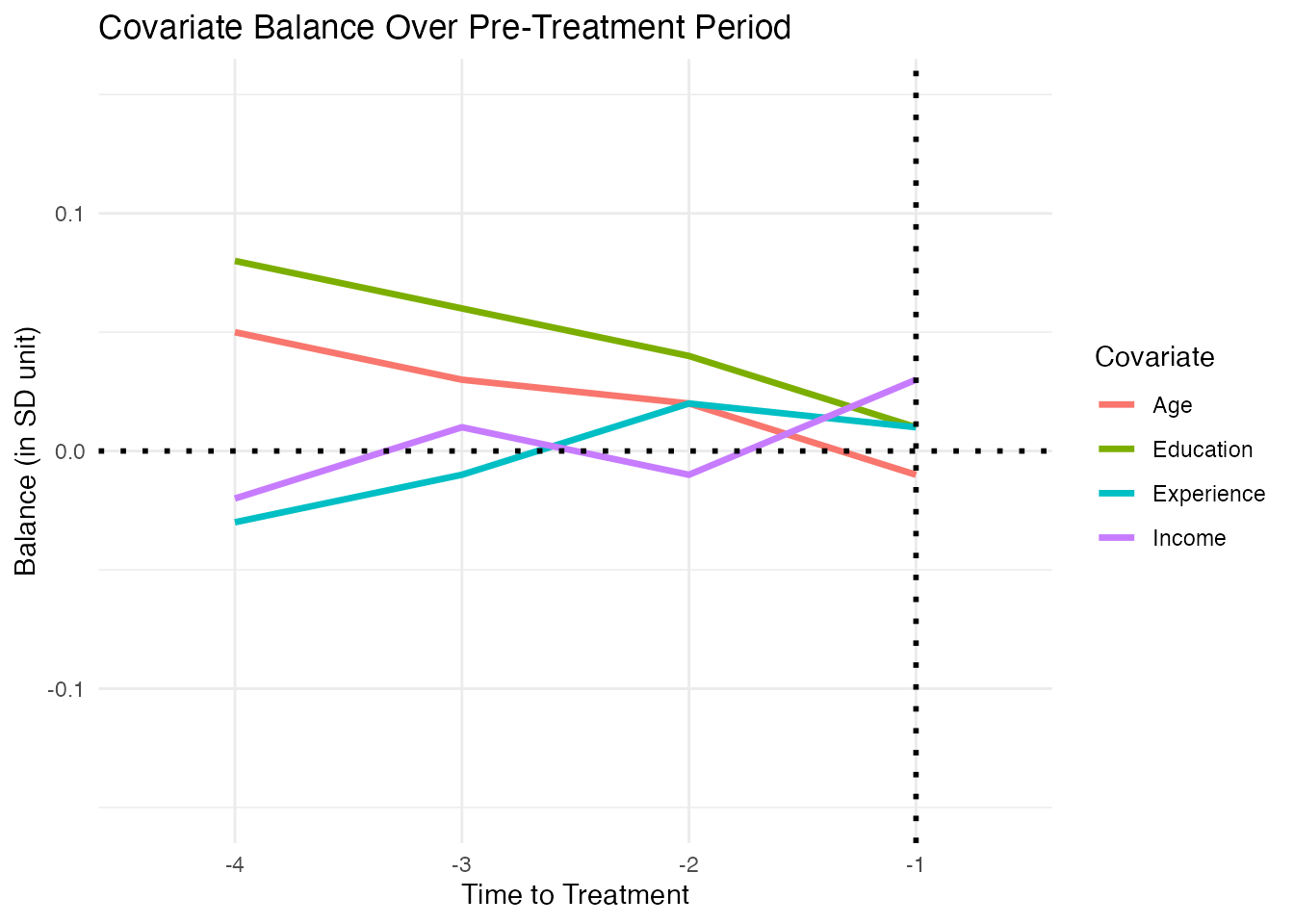

# )3.4 plot_covariate_balance_pretrend(): Balance Over Time

For panel data, it is important to check that covariate balance holds not just on average but across the entire pre-treatment period. The plot_covariate_balance_pretrend() function plots the standardized mean difference for each covariate at each pre-treatment time point.

# Create a sample balance matrix (rows = time periods, columns = covariates)

set.seed(123)

balance_matrix <- matrix(

c(

0.05, -0.02, 0.08, -0.03, # t_4

0.03, 0.01, 0.06, -0.01, # t_3

0.02, -0.01, 0.04, 0.02, # t_2

-0.01, 0.03, 0.01, 0.01 # t_1

),

nrow = 4,

byrow = TRUE

)

rownames(balance_matrix) <- paste0("t_", 4:1)

colnames(balance_matrix) <- c("Age", "Income", "Education", "Experience")

p <- causalverse::plot_covariate_balance_pretrend(

balance_data = balance_matrix,

y_limits = c(-0.15, 0.15),

main_title = "Covariate Balance Over Pre-Treatment Period",

theme_use = ggplot2::theme_minimal()

)

print(p)

All lines should hover close to zero across the pre-treatment period. A sharp divergence near the treatment date suggests an anticipation effect or a violation of the parallel trends assumption.

4. The MatchIt Package

The MatchIt package (Ho, Imai, King, and Stuart, 2011) is the gold standard for implementing matching in R. It provides a unified interface for a wide variety of matching methods and integrates seamlessly with balance diagnostics.

The workflow follows a principled three-step process:

-

Match: Use

matchit()to create matched data. -

Check: Use

summary()andplot()to assess balance. - Estimate: Use the matched data for outcome analysis.

4.1 Nearest Neighbor Matching

Nearest neighbor matching pairs each treated unit with the closest control unit based on a distance measure (default: propensity score distance estimated via logistic regression).

library(MatchIt)

# 1:1 nearest neighbor matching on the propensity score

m_nn <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "nearest",

distance = "glm", # logistic regression for PS

ratio = 1, # 1 control per treated

replace = FALSE # without replacement

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

# Summary of balance before and after matching

summary(m_nn)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "nearest", distance = "glm", replace = FALSE,

#> ratio = 1)

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7223 0.6096 0.8233 0.6936 0.1993

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> distance 0.2947

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.8411 0.6096 1.6920 0.0934 0.4562

#> age 46.7996 36.3723 1.0610 0.8120 0.3017

#> income 58374.9316 45727.4053 0.8930 0.7275 0.2452

#> education 0.6773 0.3131 0.7303 . 0.3642

#> experience 24.8716 14.5803 1.0355 0.9270 0.3033

#> eCDF Max Std. Pair Dist.

#> distance 0.8051 1.6920

#> age 0.4601 1.2414

#> income 0.3610 1.2321

#> education 0.3642 1.1275

#> experience 0.4345 1.2421

#>

#> Sample Sizes:

#> Control Treated

#> All 313 687

#> Matched 313 313

#> Unmatched 0 374

#> Discarded 0 0

# Balance improvement plot (Love plot equivalent)

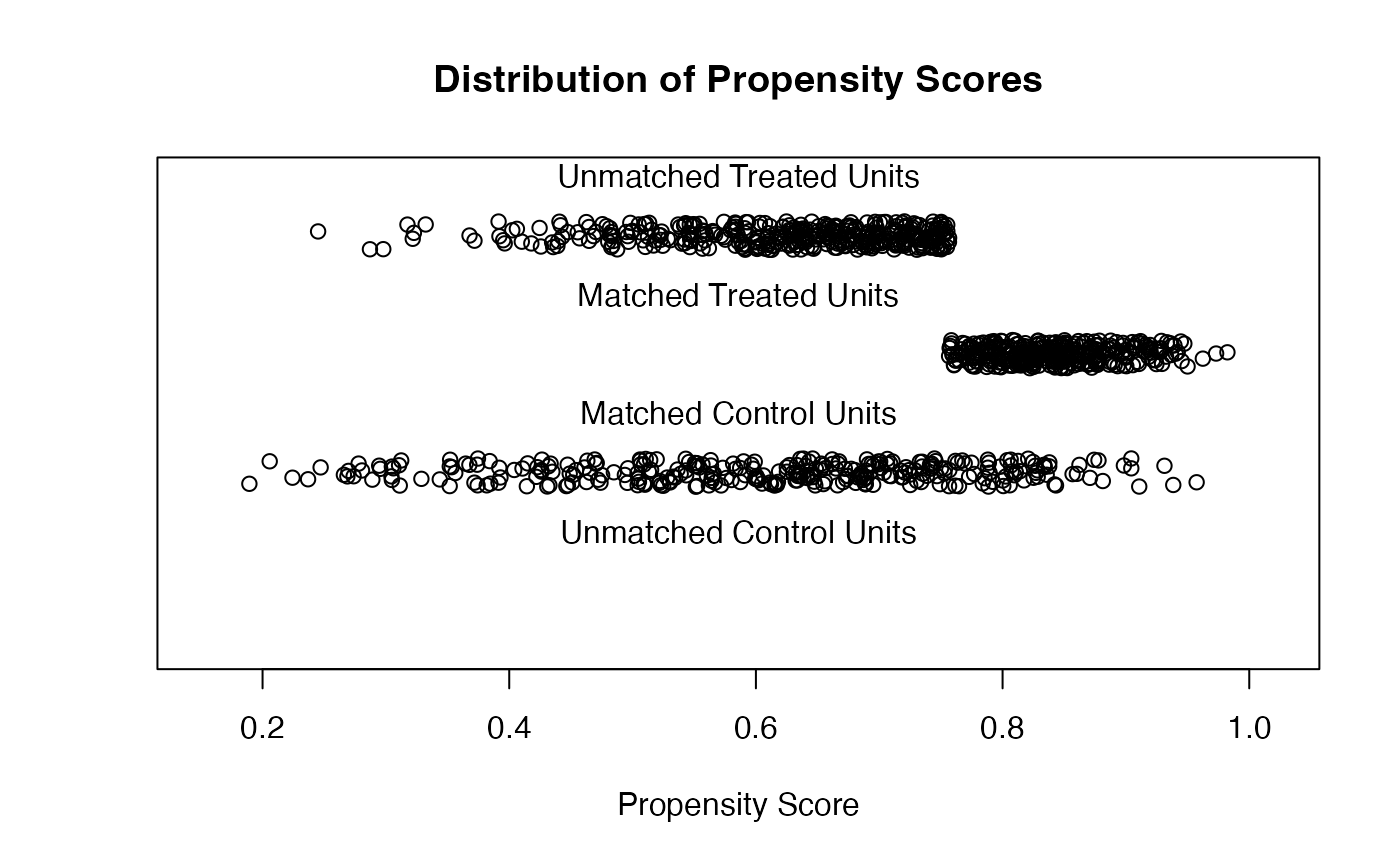

plot(m_nn, type = "jitter", interactive = FALSE)

# Extract matched data for outcome analysis

matched_nn <- match.data(m_nn)

nrow(matched_nn)

#> [1] 626Key options for nearest neighbor matching:

-

ratio: Number of controls matched to each treated unit (:1 matching). Higher ratios retain more data but may increase bias if distant matches are used. -

replace: Whether controls can be matched to multiple treated units. Matching with replacement reduces bias (each treated unit gets its best match) but increases variance (matched controls are reused). -

caliper: Maximum allowable distance between matched pairs. Treated units without a sufficiently close control are dropped. Calipers improve balance but may reduce the effective sample size.

# Nearest neighbor with caliper and 2:1 matching

m_nn_cal <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "nearest",

distance = "glm",

ratio = 2,

caliper = 0.2, # 0.2 SD of the propensity score

replace = FALSE

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

summary(m_nn_cal)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "nearest", distance = "glm", replace = FALSE,

#> caliper = 0.2, ratio = 2)

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7223 0.6096 0.8233 0.6936 0.1993

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> distance 0.2947

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.6619 0.6342 0.2024 1.0516 0.0537

#> age 39.4658 37.3612 0.2141 1.2519 0.0635

#> income 47698.4497 47201.9519 0.0351 0.8422 0.0162

#> education 0.3483 0.3293 0.0380 . 0.0190

#> experience 17.4883 15.3883 0.2113 1.1648 0.0599

#> eCDF Max Std. Pair Dist.

#> distance 0.1034 0.1982

#> age 0.1276 0.8338

#> income 0.0552 0.9160

#> education 0.0190 0.7194

#> experience 0.1103 0.8598

#>

#> Sample Sizes:

#> Control Treated

#> All 313. 687

#> Matched (ESS) 295.61 290

#> Matched 301. 290

#> Unmatched 12. 397

#> Discarded 0. 04.2 Optimal Matching

Optimal matching minimizes the total distance across all matched pairs, unlike greedy nearest-neighbor matching which minimizes each pair’s distance sequentially. This typically produces better overall balance, especially when the supply of good matches is limited.

m_opt <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "optimal",

distance = "glm"

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

summary(m_opt)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "optimal", distance = "glm")

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7223 0.6096 0.8233 0.6936 0.1993

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> distance 0.2947

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.6343 0.6096 0.1806 0.6712 0.0206

#> age 37.4032 36.3723 0.1049 0.9367 0.0326

#> income 47037.8353 45727.4053 0.0925 0.7809 0.0284

#> education 0.3259 0.3131 0.0256 . 0.0128

#> experience 15.4824 14.5803 0.0908 0.9102 0.0285

#> eCDF Max Std. Pair Dist.

#> distance 0.1182 0.1823

#> age 0.0990 0.7933

#> income 0.0895 0.9732

#> education 0.0128 0.7559

#> experience 0.0895 0.7799

#>

#> Sample Sizes:

#> Control Treated

#> All 313 687

#> Matched 313 313

#> Unmatched 0 374

#> Discarded 0 0

# Compare balance with nearest neighbor

summary(m_nn)$sum.matched

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 8.410781e-01 6.096293e-01 1.6920354 0.09339355 0.4562332

#> age 4.679961e+01 3.637228e+01 1.0610338 0.81202357 0.3017125

#> income 5.837493e+04 4.572741e+04 0.8930259 0.72748898 0.2452396

#> education 6.773163e-01 3.130990e-01 0.7302944 NA 0.3642173

#> experience 2.487157e+01 1.458027e+01 1.0355431 0.92702883 0.3032596

#> eCDF Max Std. Pair Dist.

#> distance 0.8051118 1.692035

#> age 0.4600639 1.241366

#> income 0.3610224 1.232078

#> education 0.3642173 1.127472

#> experience 0.4345048 1.242133

summary(m_opt)$sum.matched

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 6.343381e-01 6.096293e-01 0.18063677 0.6712083 0.02061661

#> age 3.740316e+01 3.637228e+01 0.10489670 0.9366823 0.03262620

#> income 4.703784e+04 4.572741e+04 0.09252781 0.7808702 0.02840895

#> education 3.258786e-01 3.130990e-01 0.02562437 NA 0.01277955

#> experience 1.548239e+01 1.458027e+01 0.09077424 0.9101679 0.02848915

#> eCDF Max Std. Pair Dist.

#> distance 0.11821086 0.1822937

#> age 0.09904153 0.7933261

#> income 0.08945687 0.9731595

#> education 0.01277955 0.7559188

#> experience 0.08945687 0.7799437Optimal matching requires the optmatch package. It is generally preferred over nearest neighbor matching for pair matching, although it can be computationally expensive for large datasets.

4.3 Full Matching

Full matching creates subclasses (strata) where each subclass contains at least one treated and one control unit. Every unit in the data is assigned to a subclass, so no data are discarded. Full matching is optimal in the sense that it minimizes the average within-subclass distance.

m_full <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "full",

distance = "glm"

)

summary(m_full)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "full", distance = "glm")

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7223 0.6096 0.8233 0.6936 0.1993

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> distance 0.2947

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7223 0.7220 0.0017 0.9916 0.0029

#> age 41.2769 41.1001 0.0180 1.0977 0.0163

#> income 51830.4982 52367.4758 -0.0379 0.9247 0.0186

#> education 0.4643 0.4464 0.0360 . 0.0179

#> experience 19.3843 19.3941 -0.0010 0.9940 0.0169

#> eCDF Max Std. Pair Dist.

#> distance 0.0247 0.0136

#> age 0.0552 0.8361

#> income 0.0585 0.9691

#> education 0.0179 0.6973

#> experience 0.0634 0.8558

#>

#> Sample Sizes:

#> Control Treated

#> All 313. 687

#> Matched (ESS) 115.05 687

#> Matched 313. 687

#> Unmatched 0. 0

#> Discarded 0. 0

# Extract matched data with subclass weights

matched_full <- match.data(m_full)

# Each observation gets a weight reflecting its role in the matched sample

table(matched_full$subclass[1:20])

#>

#> 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20

#> 1 2 0 0 0 0 0 1 0 0 0 0 0 1 0 0 0 0 1 0

#> 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40

#> 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

#> 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60

#> 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 1 0 0

#> 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80

#> 1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

#> 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100

#> 1 0 0 0 0 1 0 0 0 0 0 0 0 0 1 0 0 0 0 0

#> 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120

#> 0 0 0 0 0 0 0 0 0 0 1 0 0 0 0 0 0 0 0 0

#> 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140

#> 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

#> 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160

#> 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 1 0 0

#> 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180

#> 0 0 0 0 0 0 0 0 0 0 0 0 1 0 0 0 0 0 0 0

#> 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200

#> 0 0 0 1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

#> 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220

#> 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 1 0 0

#> 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 240

#> 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 1

#> 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255 256 257 258 259 260

#> 0 0 0 1 0 0 0 0 0 1 0 0 0 0 1 0 0 0 0 0

#> 261

#> 0

summary(matched_full$weights)

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 0.06509 1.00000 1.00000 1.00000 1.00000 9.11208Full matching naturally targets the ATE (or the ATT with appropriate arguments). Because it uses all observations, it is generally more efficient than pair matching, though the resulting weights can be highly variable.

4.4 Coarsened Exact Matching (CEM)

Coarsened Exact Matching (Iacus, King, and Porro, 2012) temporarily coarsens continuous covariates into bins, performs exact matching on the coarsened values, and then retains the original (uncoarsened) data for analysis. This ensures that matched units are within the same bin on every covariate.

m_cem <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "cem",

cutpoints = list(

age = 5, # bin width for age

income = 4, # number of bins for income

experience = 5 # bin width for experience

)

)

summary(m_cem)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "cem", cutpoints = list(age = 5,

#> income = 4, experience = 5))

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 40.7179 40.5229 0.0198 1.0157 0.0108

#> income 50568.8635 49744.0154 0.0582 0.8391 0.0288

#> education 0.4826 0.4826 0.0000 . 0.0000

#> experience 18.7683 18.6671 0.0102 0.9941 0.0106

#> eCDF Max Std. Pair Dist.

#> age 0.0386 0.3602

#> income 0.0890 0.5411

#> education 0.0000 0.0000

#> experience 0.0350 0.3341

#>

#> Sample Sizes:

#> Control Treated

#> All 313. 687

#> Matched (ESS) 156.55 632

#> Matched 301. 632

#> Unmatched 12. 55

#> Discarded 0. 0

# CEM produces strata-based weights

matched_cem <- match.data(m_cem)

summary(matched_cem$weights)

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 0.03664 1.00000 1.00000 1.00000 1.00000 7.14399Advantages of CEM:

- Guarantees that imbalance is bounded by the coarsening level.

- Does not require a propensity score model.

- Transparent: the researcher directly controls the matching criteria.

Disadvantages:

- Sensitive to the coarsening scheme. Too fine a coarsening leads to many unmatched units; too coarse sacrifices precision.

- In high dimensions, many strata will contain only treated or only control units, leading to substantial data loss.

4.5 Subclassification

Subclassification divides the propensity score distribution into a fixed number of strata and estimates treatment effects within each stratum. The overall effect is a weighted average of stratum-specific effects.

m_sub <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "subclass",

subclass = 6 # number of subclasses

)

summary(m_sub)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "subclass", subclass = 6)

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7223 0.6096 0.8233 0.6936 0.1993

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> distance 0.2947

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance Across Subclasses

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7223 0.7128 0.0694 0.8060 0.0121

#> age 41.2769 40.6680 0.0620 1.0145 0.0178

#> income 51830.4982 51852.0925 -0.0015 0.7820 0.0156

#> education 0.4643 0.4483 0.0322 . 0.0161

#> experience 19.3843 18.8408 0.0547 0.9783 0.0165

#> eCDF Max

#> distance 0.0473

#> age 0.0529

#> income 0.0586

#> education 0.0161

#> experience 0.0487

#>

#> Sample Sizes:

#> Control Treated

#> All 313. 687

#> Matched (ESS) 191.76 687

#> Matched 313. 687

#> Unmatched 0. 0

#> Discarded 0. 0

# Balance within each subclass

summary(m_sub, subclass = TRUE)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "subclass", subclass = 6)

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7223 0.6096 0.8233 0.6936 0.1993

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> distance 0.2947

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance by Subclass:

#>

#> - Subclass 1

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.4956 0.4482 0.6408 0.5346 0.1346

#> age 31.8951 30.3054 0.2046 1.0347 0.0705

#> income 40341.8121 36642.7362 0.3057 0.9317 0.0919

#> education 0.1652 0.1462 0.0513 . 0.0191

#> experience 9.7578 8.9015 0.1262 0.9097 0.0490

#> eCDF Max

#> distance 0.2505

#> age 0.1716

#> income 0.2070

#> education 0.0191

#> experience 0.0983

#>

#> - Subclass 2

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.6315 0.6320 -0.0204 1.1319 0.0322

#> age 36.1483 36.3363 -0.0244 0.9917 0.0376

#> income 48061.7145 47817.1543 0.0206 0.9731 0.0433

#> education 0.2719 0.2807 -0.0197 . 0.0088

#> experience 14.7667 14.5269 0.0301 0.9722 0.0362

#> eCDF Max

#> distance 0.1053

#> age 0.0877

#> income 0.1140

#> education 0.0088

#> experience 0.1316

#>

#> - Subclass 3

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7078 0.7053 0.1209 0.9512 0.0468

#> age 40.2060 39.5754 0.0920 0.9419 0.0524

#> income 49434.4292 50515.0115 -0.0893 1.0146 0.0448

#> education 0.3947 0.3929 0.0038 . 0.0019

#> experience 18.1601 17.1524 0.1539 0.7383 0.0556

#> eCDF Max

#> distance 0.1341

#> age 0.1319

#> income 0.1297

#> education 0.0019

#> experience 0.1711

#>

#> - Subclass 4

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7734 0.7726 0.0455 0.9228 0.0346

#> age 42.5956 42.5173 0.0102 0.7902 0.0333

#> income 52948.5963 54173.5859 -0.0915 0.8207 0.0336

#> education 0.5391 0.5000 0.0785 . 0.0391

#> experience 20.6439 20.0564 0.0720 0.7658 0.0323

#> eCDF Max

#> distance 0.0995

#> age 0.0840

#> income 0.1144

#> education 0.0391

#> experience 0.1302

#>

#> - Subclass 5

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.8295 0.8238 0.4047 1.3587 0.1188

#> age 45.1786 46.3028 -0.1572 0.9812 0.0712

#> income 57278.0522 55387.5590 0.1620 0.8703 0.0884

#> education 0.6579 0.5833 0.1572 . 0.0746

#> experience 22.9792 24.2429 -0.1662 1.0866 0.0787

#> eCDF Max

#> distance 0.2851

#> age 0.1776

#> income 0.2807

#> education 0.0746

#> experience 0.1798

#>

#> - Subclass 6

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.8956 0.8949 0.0239 0.8799 0.0349

#> age 51.6178 48.9728 0.3304 1.2535 0.0923

#> income 62912.1473 66560.5380 -0.2941 0.5868 0.0761

#> education 0.7565 0.7857 -0.0680 . 0.0292

#> experience 29.9783 28.1594 0.2105 1.2117 0.0713

#> eCDF Max

#> distance 0.0901

#> age 0.1752

#> income 0.2286

#> education 0.0292

#> experience 0.2323

#>

#> Sample Sizes by Subclass:

#> 1 2 3 4 5 6 All

#> Control 130 57 56 32 24 14 313

#> Treated 115 114 114 115 114 115 687

#> Total 245 171 170 147 138 129 1000

# Matched data with subclass indicators

matched_sub <- match.data(m_sub)

table(matched_sub$subclass)

#>

#> 1 2 3 4 5 6

#> 245 171 170 147 138 1294.6 Propensity Score Matching (Detailed)

Propensity score matching uses the estimated propensity score as the distance measure. MatchIt estimates the propensity score via logistic regression by default, but supports many alternatives.

# Logistic regression (default)

m_ps_logit <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "nearest",

distance = "glm",

link = "logit"

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

# Probit model

m_ps_probit <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "nearest",

distance = "glm",

link = "probit"

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

# Random forest (via the randomForest package)

m_ps_rf <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "nearest",

distance = "randomforest"

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

# Generalized boosted model (via the gbm package)

m_ps_gbm <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "nearest",

distance = "gbm"

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

# Compare balance across PS estimation methods

summary(m_ps_logit)$sum.matched

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 8.410781e-01 6.096293e-01 1.6920354 0.09339355 0.4562332

#> age 4.679961e+01 3.637228e+01 1.0610338 0.81202357 0.3017125

#> income 5.837493e+04 4.572741e+04 0.8930259 0.72748898 0.2452396

#> education 6.773163e-01 3.130990e-01 0.7302944 NA 0.3642173

#> experience 2.487157e+01 1.458027e+01 1.0355431 0.92702883 0.3032596

#> eCDF Max Std. Pair Dist.

#> distance 0.8051118 1.692035

#> age 0.4600639 1.241366

#> income 0.3610224 1.232078

#> education 0.3642173 1.127472

#> experience 0.4345048 1.242133

summary(m_ps_rf)$sum.matched

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 8.659405e-01 6.168592e-01 1.3560082 0.07434252 0.3684388

#> age 4.522984e+01 3.637228e+01 0.9013019 0.90706182 0.2571310

#> income 5.641049e+04 4.572741e+04 0.7543193 0.72954743 0.2100351

#> education 5.686901e-01 3.130990e-01 0.5124873 NA 0.2555911

#> experience 2.328475e+01 1.458027e+01 0.8758720 0.96258677 0.2578406

#> eCDF Max Std. Pair Dist.

#> distance 0.6869010 1.356008

#> age 0.4185304 1.247094

#> income 0.3418530 1.248224

#> education 0.2555911 1.024975

#> experience 0.3769968 1.2297164.7 Mahalanobis Distance Matching

Instead of matching on the propensity score, one can match directly on the covariate space using Mahalanobis distance:

where is the sample covariance matrix. This accounts for correlations and scaling differences among covariates.

m_maha <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "nearest",

distance = "mahalanobis"

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

summary(m_maha)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "nearest", distance = "mahalanobis")

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 41.1985 36.3723 0.4911 0.9269 0.1396

#> income 51719.4187 45727.4053 0.4231 0.8061 0.1150

#> education 0.4856 0.3131 0.3459 . 0.1725

#> experience 19.2902 14.5803 0.4739 0.9564 0.1433

#> eCDF Max Std. Pair Dist.

#> age 0.2492 0.6076

#> income 0.2013 0.5707

#> education 0.1725 0.3459

#> experience 0.2236 0.5811

#>

#> Sample Sizes:

#> Control Treated

#> All 313 687

#> Matched 313 313

#> Unmatched 0 374

#> Discarded 0 0

# Mahalanobis distance matching with a caliper on age

m_maha_cal <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "nearest",

distance = "mahalanobis",

caliper = c(age = 0.2),

std.caliper = TRUE

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

summary(m_maha_cal)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "nearest", distance = "mahalanobis",

#> caliper = c(age = 0.2), std.caliper = TRUE)

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 36.7153 36.4687 0.0251 0.9666 0.0129

#> income 52439.2107 45787.7991 0.4696 0.8165 0.1263

#> education 0.4872 0.3109 0.3535 . 0.1763

#> experience 14.9476 14.6270 0.0323 0.9194 0.0163

#> eCDF Max Std. Pair Dist.

#> age 0.0481 0.1007

#> income 0.2212 0.7018

#> education 0.1763 0.4177

#> experience 0.0545 0.1956

#>

#> Sample Sizes:

#> Control Treated

#> All 313 687

#> Matched 312 312

#> Unmatched 1 375

#> Discarded 0 04.8 Estimation After Matching

After obtaining matched data, treatment effects are estimated using regression on the matched sample. Including the matching covariates in the regression provides doubly robust estimation: the estimate is consistent if either the matching or the regression model is correctly specified.

# Get matched data

matched_data <- match.data(m_nn)

# Simple difference in means

lm_simple <- lm(outcome ~ treatment, data = matched_data, weights = weights)

summary(lm_simple)

#>

#> Call:

#> lm(formula = outcome ~ treatment, data = matched_data, weights = weights)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -24.8293 -5.5454 -0.0366 5.6464 29.4450

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 37.0384 0.4792 77.30 <2e-16 ***

#> treatment 18.8926 0.6777 27.88 <2e-16 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 8.478 on 624 degrees of freedom

#> Multiple R-squared: 0.5547, Adjusted R-squared: 0.554

#> F-statistic: 777.3 on 1 and 624 DF, p-value: < 2.2e-16

# Regression adjustment with covariates (doubly robust)

lm_adj <- lm(

outcome ~ treatment + age + income + education + experience,

data = matched_data,

weights = weights

)

summary(lm_adj)

#>

#> Call:

#> lm(formula = outcome ~ treatment + age + income + education +

#> experience, data = matched_data, weights = weights)

#>

#> Residuals:

#> Min 1Q Median 3Q Max

#> -13.820 -3.292 -0.170 3.209 18.104

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 4.742e+00 1.721e+00 2.756 0.00603 **

#> treatment 8.126e+00 5.616e-01 14.470 < 2e-16 ***

#> age 7.119e-01 6.406e-02 11.113 < 2e-16 ***

#> income 9.106e-05 1.439e-05 6.328 4.77e-10 ***

#> education 4.270e+00 4.449e-01 9.598 < 2e-16 ***

#> experience 6.184e-02 6.289e-02 0.983 0.32583

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 5.029 on 620 degrees of freedom

#> Multiple R-squared: 0.8443, Adjusted R-squared: 0.8431

#> F-statistic: 672.5 on 5 and 620 DF, p-value: < 2.2e-16

coef(lm_adj)["treatment"]

#> treatment

#> 8.125829

# Using fixest for cluster-robust standard errors

feols_fit <- fixest::feols(

outcome ~ treatment + age + income + education + experience,

data = matched_data,

weights = ~ weights,

vcov = "HC1"

)

summary(feols_fit)

#> OLS estimation, Dep. Var.: outcome

#> Observations: 626

#> Weights: weights

#> Standard-errors: Heteroskedasticity-robust

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 4.741698 1.548092 3.06293 2.2868e-03 **

#> treatment 8.125829 0.533045 15.24418 < 2.2e-16 ***

#> age 0.711915 0.059234 12.01866 < 2.2e-16 ***

#> income 0.000091 0.000014 6.49976 1.6547e-10 ***

#> education 4.270255 0.442550 9.64919 < 2.2e-16 ***

#> experience 0.061842 0.058720 1.05317 2.9267e-01

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#> RMSE: 5.00447 Adj. R2: 0.843063When matching is done with replacement or with variable numbers of controls, one should use cluster-robust standard errors (clustering on the matched pair or subclass) or use the weights produced by match.data().

# Cluster-robust SEs, clustering on the subclass (matched pair)

feols_cluster <- fixest::feols(

outcome ~ treatment + age + income + education + experience,

data = matched_data,

weights = ~ weights,

cluster = ~ subclass

)

summary(feols_cluster)

#> OLS estimation, Dep. Var.: outcome

#> Observations: 626

#> Weights: weights

#> Standard-errors: Clustered (subclass)

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 4.741698 1.568512 3.02306 2.7099e-03 **

#> treatment 8.125829 0.526873 15.42275 < 2.2e-16 ***

#> age 0.711915 0.058907 12.08549 < 2.2e-16 ***

#> income 0.000091 0.000014 6.32289 8.8749e-10 ***

#> education 4.270255 0.440074 9.70350 < 2.2e-16 ***

#> experience 0.061842 0.058060 1.06513 2.8764e-01

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#> RMSE: 5.00447 Adj. R2: 0.8430635. The cobalt Package: Balance Assessment

The cobalt package (Greifer, 2024) provides a comprehensive toolkit for assessing covariate balance after matching or weighting. It works seamlessly with objects from MatchIt, WeightIt, and many other packages.

5.1 Balance Tables with bal.tab()

library(cobalt)

#> cobalt (Version 4.6.1, Build Date: 2025-08-20)

#>

#> Attaching package: 'cobalt'

#> The following object is masked from 'package:MatchIt':

#>

#> lalonde

# From a MatchIt object

bal.tab(m_nn, thresholds = c(m = 0.1)) # flag covariates with SMD > 0.1

#> Balance Measures

#> Type Diff.Adj M.Threshold

#> distance Distance 1.6920

#> age Contin. 1.0610 Not Balanced, >0.1

#> income Contin. 0.8930 Not Balanced, >0.1

#> education Binary 0.3642 Not Balanced, >0.1

#> experience Contin. 1.0355 Not Balanced, >0.1

#>

#> Balance tally for mean differences

#> count

#> Balanced, <0.1 0

#> Not Balanced, >0.1 4

#>

#> Variable with the greatest mean difference

#> Variable Diff.Adj M.Threshold

#> age 1.061 Not Balanced, >0.1

#>

#> Sample sizes

#> Control Treated

#> All 313 687

#> Matched 313 313

#> Unmatched 0 374

# From a WeightIt object (see Section 6)

# bal.tab(w_out, thresholds = c(m = 0.1))

# From raw data with a formula

bal.tab(

treatment ~ age + income + education + experience,

data = sim_data,

thresholds = c(m = 0.1),

un = TRUE # show unadjusted balance too

)

#> Note: `s.d.denom` not specified; assuming "pooled".

#> Balance Measures

#> Type Diff.Un M.Threshold.Un

#> age Contin. 0.5039 Not Balanced, >0.1

#> income Contin. 0.4141 Not Balanced, >0.1

#> education Binary 0.1512 Not Balanced, >0.1

#> experience Contin. 0.4929 Not Balanced, >0.1

#>

#> Balance tally for mean differences

#> count

#> Balanced, <0.1 0

#> Not Balanced, >0.1 4

#>

#> Variable with the greatest mean difference

#> Variable Diff.Un M.Threshold.Un

#> age 0.5039 Not Balanced, >0.1

#>

#> Sample sizes

#> Control Treated

#> All 313 687The balance table reports the standardized mean difference (SMD), variance ratio, and other balance statistics for each covariate. The conventional threshold for acceptable balance is (Austin, 2011), though this is a guideline rather than a hard rule.

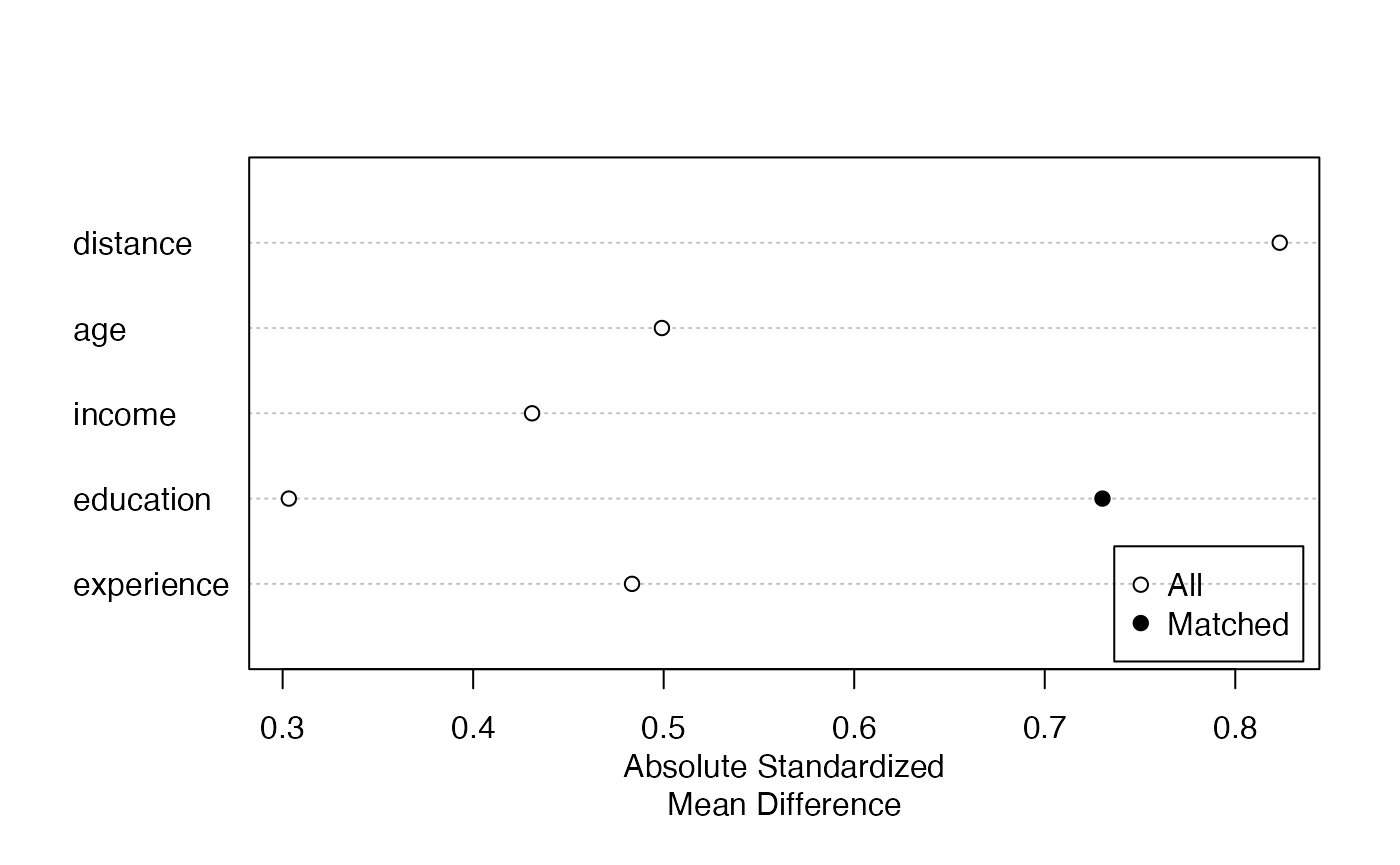

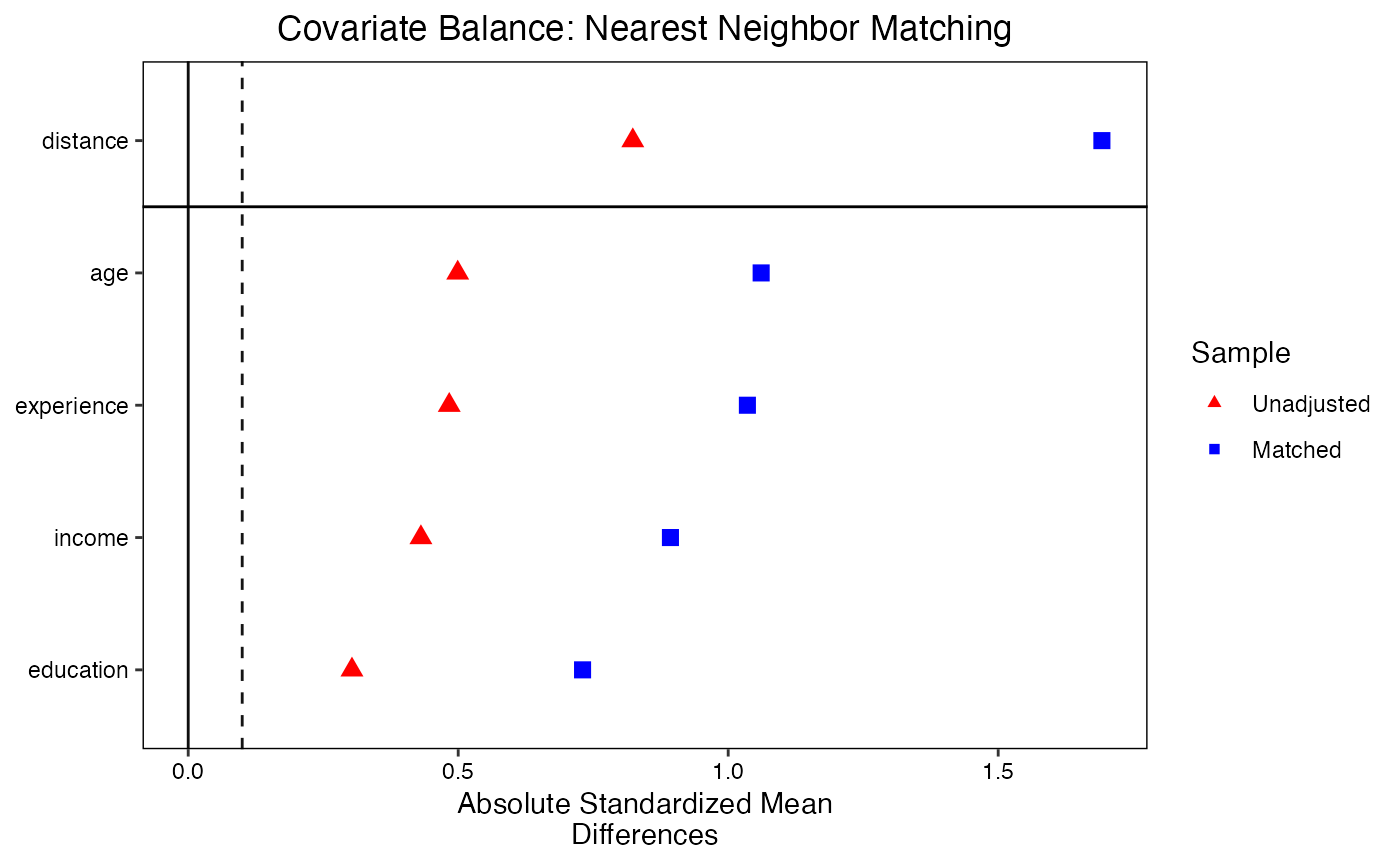

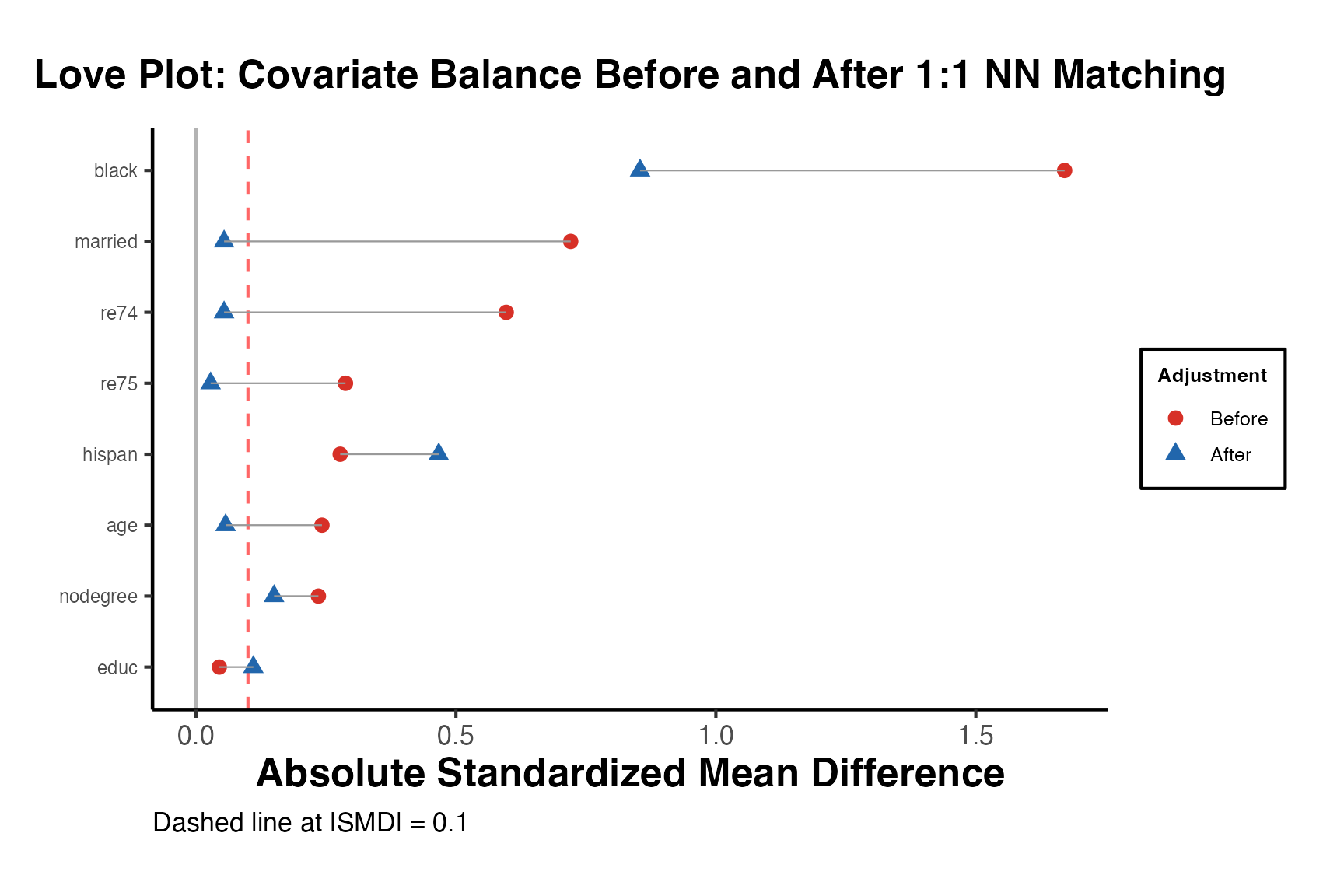

5.2 Love Plots with love.plot()

Love plots provide a visual summary of covariate balance. Each covariate is represented by a point; the x-axis shows the absolute standardized mean difference. Points are shown for both the unadjusted and adjusted samples, making it easy to see which covariates improved and whether all covariates meet the balance threshold.

# Love plot from a MatchIt object

love.plot(

m_nn,

binary = "std",

abs = TRUE,

thresholds = c(m = 0.1),

var.order = "unadjusted",

colors = c("red", "blue"),

shapes = c(17, 15),

sample.names = c("Unadjusted", "Matched"),

title = "Covariate Balance: Nearest Neighbor Matching"

)

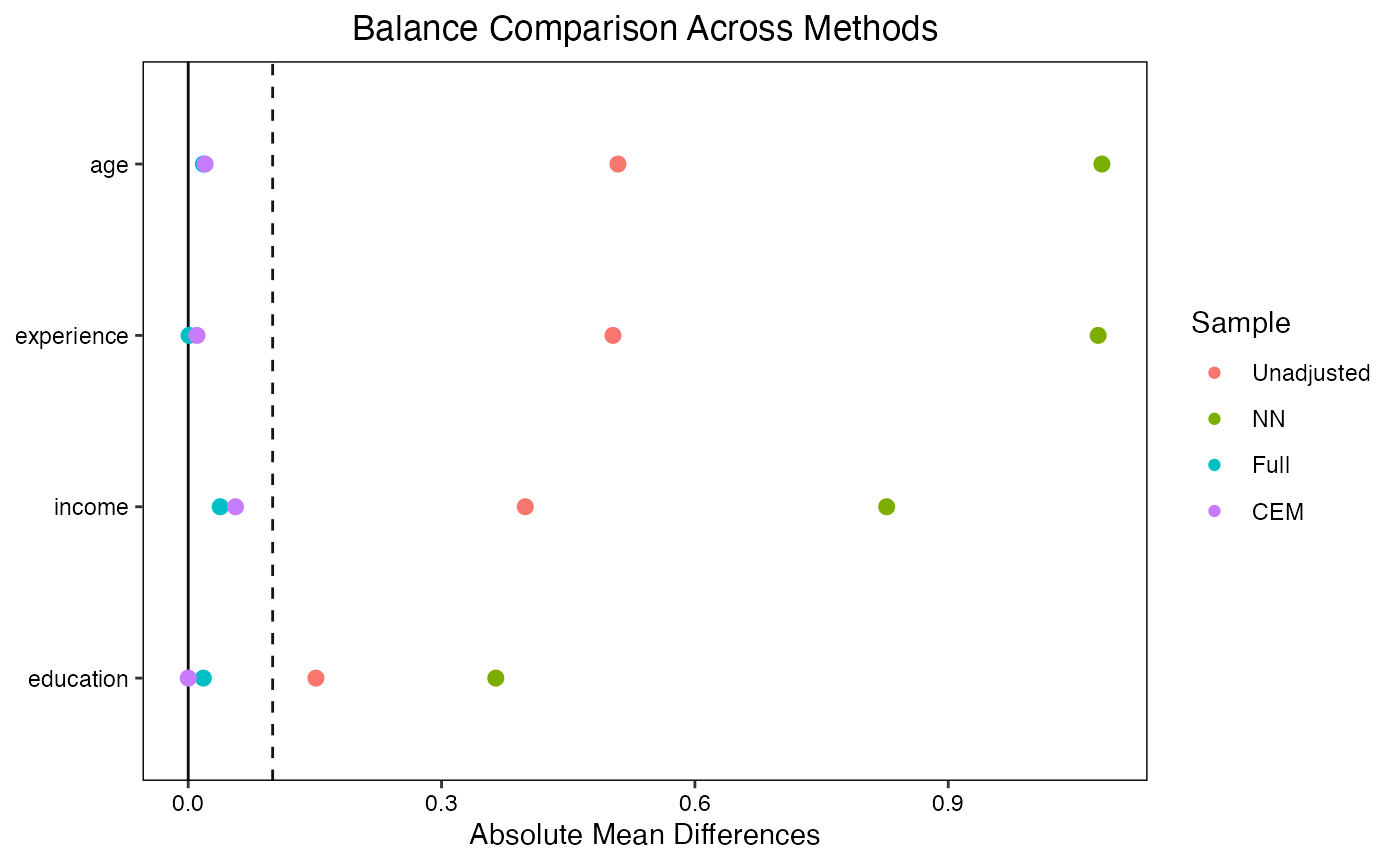

# Compare multiple matching methods

love.plot(

treatment ~ age + income + education + experience,

data = sim_data,

weights = list(

NN = m_nn,

Full = m_full,

CEM = m_cem

),

abs = TRUE,

var.order = "unadjusted",

thresholds = c(m = 0.1),

title = "Balance Comparison Across Methods"

)

#> Note: `s.d.denom` not specified; assuming "control" for NN, "treated"

#> for Full, and "pooled" for CEM.

#> Warning: Standardized mean differences and raw mean differences are present in

#> the same plot. Use the `stars` argument to distinguish between them and

#> appropriately label the x-axis. See `?love.plot` for details.

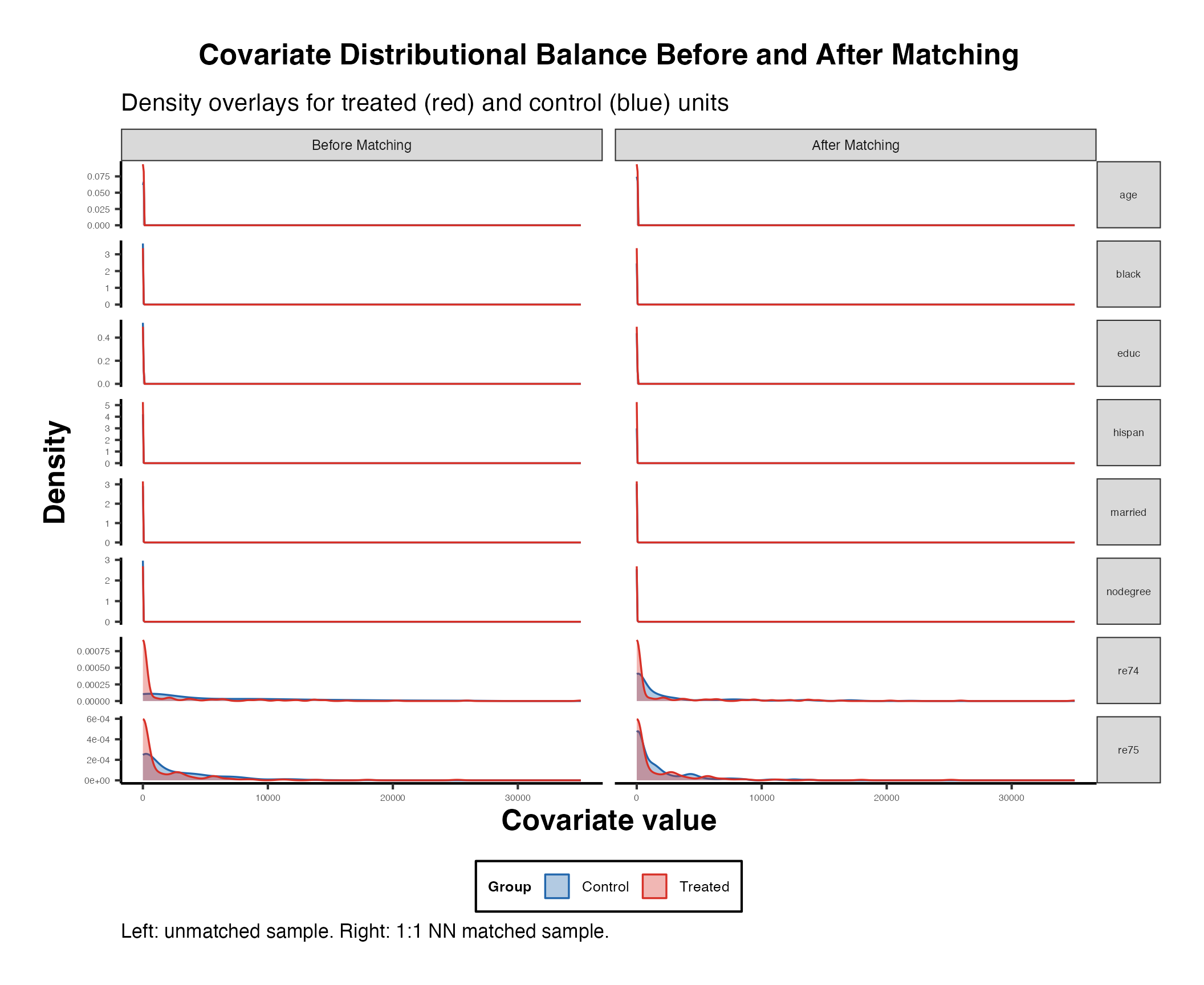

5.3 Distributional Balance with bal.plot()

While SMDs assess differences in means, bal.plot() visualizes the entire distribution of each covariate across treatment groups.

# Density plot for a continuous covariate

bal.plot(m_nn, var.name = "age", which = "both")

#> Ignoring unknown labels:

#> • colour : "Treatment"

# Mirror plot (treated density above, control below)

bal.plot(m_nn, var.name = "income", which = "both", type = "density",

mirror = TRUE)

#> Ignoring unknown labels:

#> • colour : "Treatment"

# Bar plot for a binary covariate

bal.plot(m_nn, var.name = "education", which = "both")

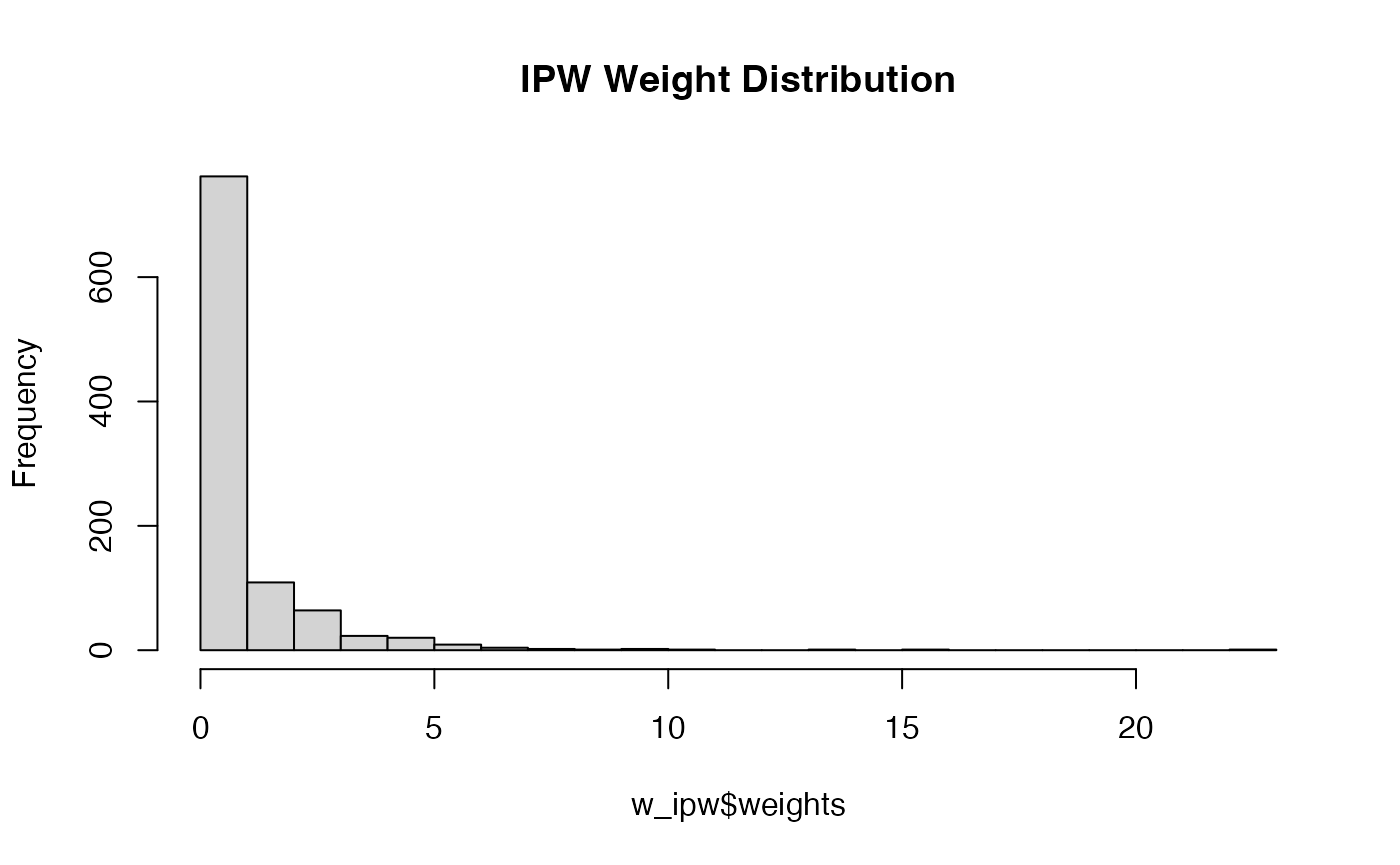

6. The WeightIt Package: Propensity Score Weighting

The WeightIt package (Greifer, 2024) provides a unified interface for estimating balancing weights. Unlike matching, weighting retains all observations and assigns weights to create a pseudo-population in which covariates are balanced.

6.1 Basic Usage

library(WeightIt)

# Standard IPW (inverse probability weighting)

w_ipw <- weightit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "ps", # propensity score weighting

estimand = "ATT"

)

summary(w_ipw)

#> Summary of weights

#>

#> - Weight ranges:

#>

#> Min Max

#> treated 1. || 1.

#> control 0.234 |---------------------------| 22.437

#>

#> - Units with the 5 most extreme weights by group:

#>

#> 1 2 3 4 5

#> treated 1 1 1 1 1

#> 271 215 200 158 43

#> control 9.456 10.21 13.543 15.23 22.437

#>

#> - Weight statistics:

#>

#> Coef of Var MAD Entropy # Zeros

#> treated 0.000 0.0 0.00 0

#> control 0.984 0.6 0.32 0

#>

#> - Effective Sample Sizes:

#>

#> Control Treated

#> Unweighted 313. 687

#> Weighted 159.28 687

# Examine the weight distribution

summary(w_ipw$weights)

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

#> 0.2335 1.0000 1.0000 1.3920 1.0000 22.4372

hist(w_ipw$weights, breaks = 30, main = "IPW Weight Distribution")

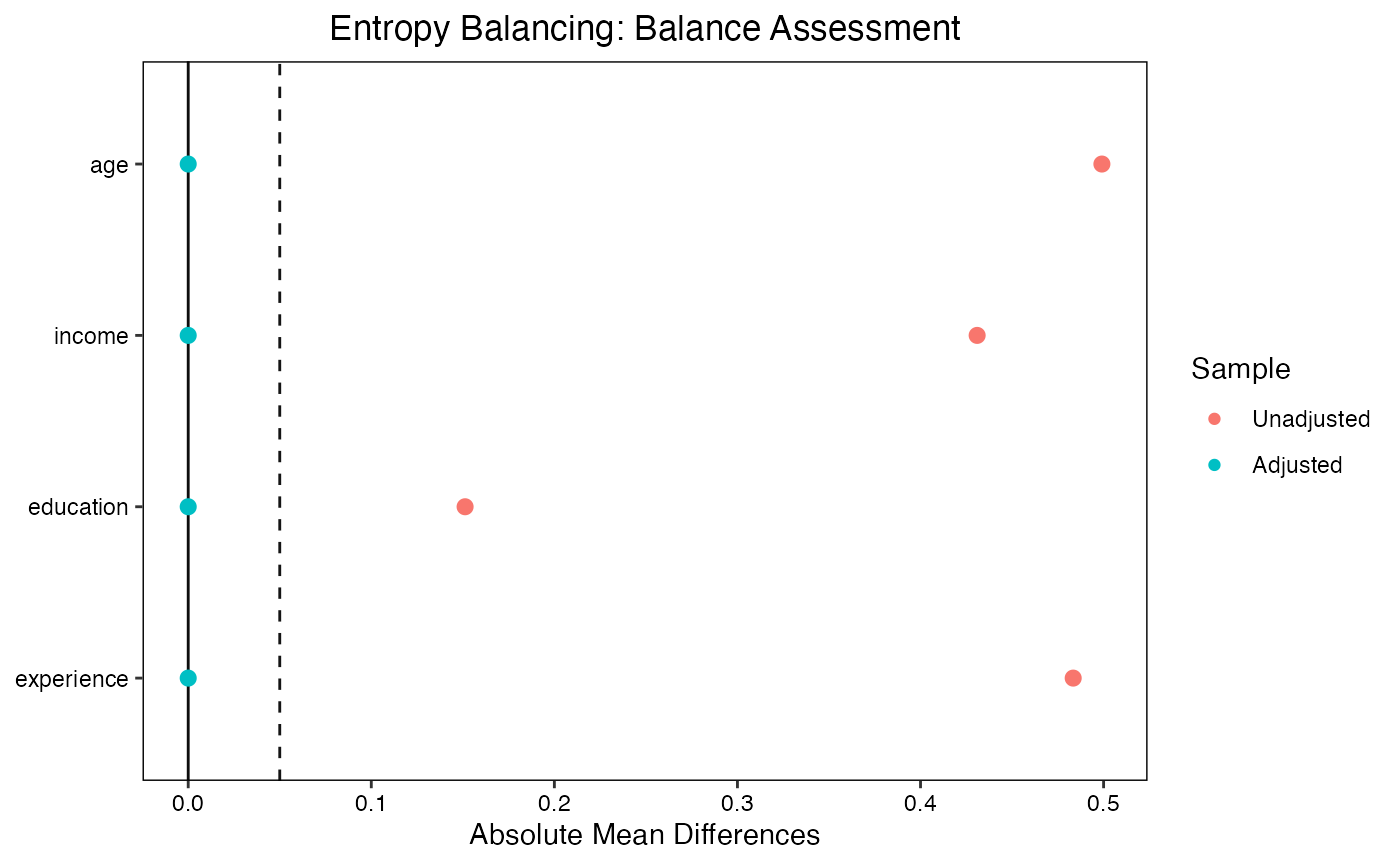

6.2 Entropy Balancing

Entropy balancing (Hainmueller, 2012) finds weights that satisfy exact moment conditions (equality of means, and optionally variances and skewness) while minimizing the entropy distance from uniform weights. This guarantees exact balance on the specified moments.

w_ebal <- weightit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "ebal", # entropy balancing

estimand = "ATT"

)

summary(w_ebal)

#> Summary of weights

#>

#> - Weight ranges:

#>

#> Min Max

#> treated 1. || 1.

#> control 0.112 |---------------------------| 6.909

#>

#> - Units with the 5 most extreme weights by group:

#>

#> 1 2 3 4 5

#> treated 1 1 1 1 1

#> 215 200 158 57 43

#> control 3.959 4.211 5.502 5.831 6.909

#>

#> - Weight statistics:

#>

#> Coef of Var MAD Entropy # Zeros

#> treated 0.000 0.00 0.000 0

#> control 0.843 0.56 0.265 0

#>

#> - Effective Sample Sizes:

#>

#> Control Treated

#> Unweighted 313. 687

#> Weighted 183.24 687

# Entropy balancing achieves near-exact balance

bal.tab(w_ebal, thresholds = c(m = 0.01))

#> Balance Measures

#> Type Diff.Adj M.Threshold

#> age Contin. 0 Balanced, <0.01

#> income Contin. 0 Balanced, <0.01

#> education Binary -0 Balanced, <0.01

#> experience Contin. 0 Balanced, <0.01

#>

#> Balance tally for mean differences

#> count

#> Balanced, <0.01 4

#> Not Balanced, >0.01 0

#>

#> Variable with the greatest mean difference

#> Variable Diff.Adj M.Threshold

#> income 0 Balanced, <0.01

#>

#> Effective sample sizes

#> Control Treated

#> Unadjusted 313. 687

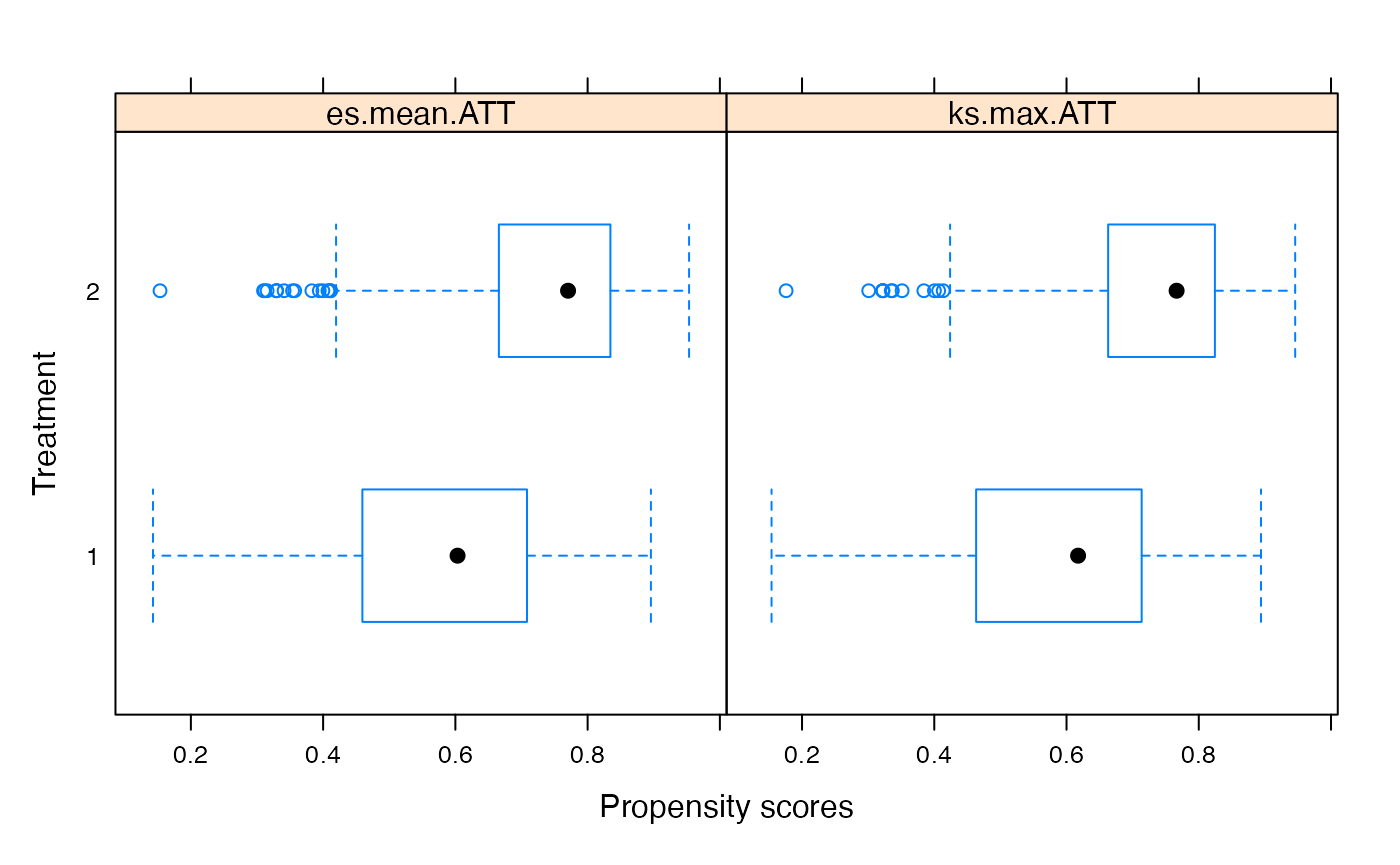

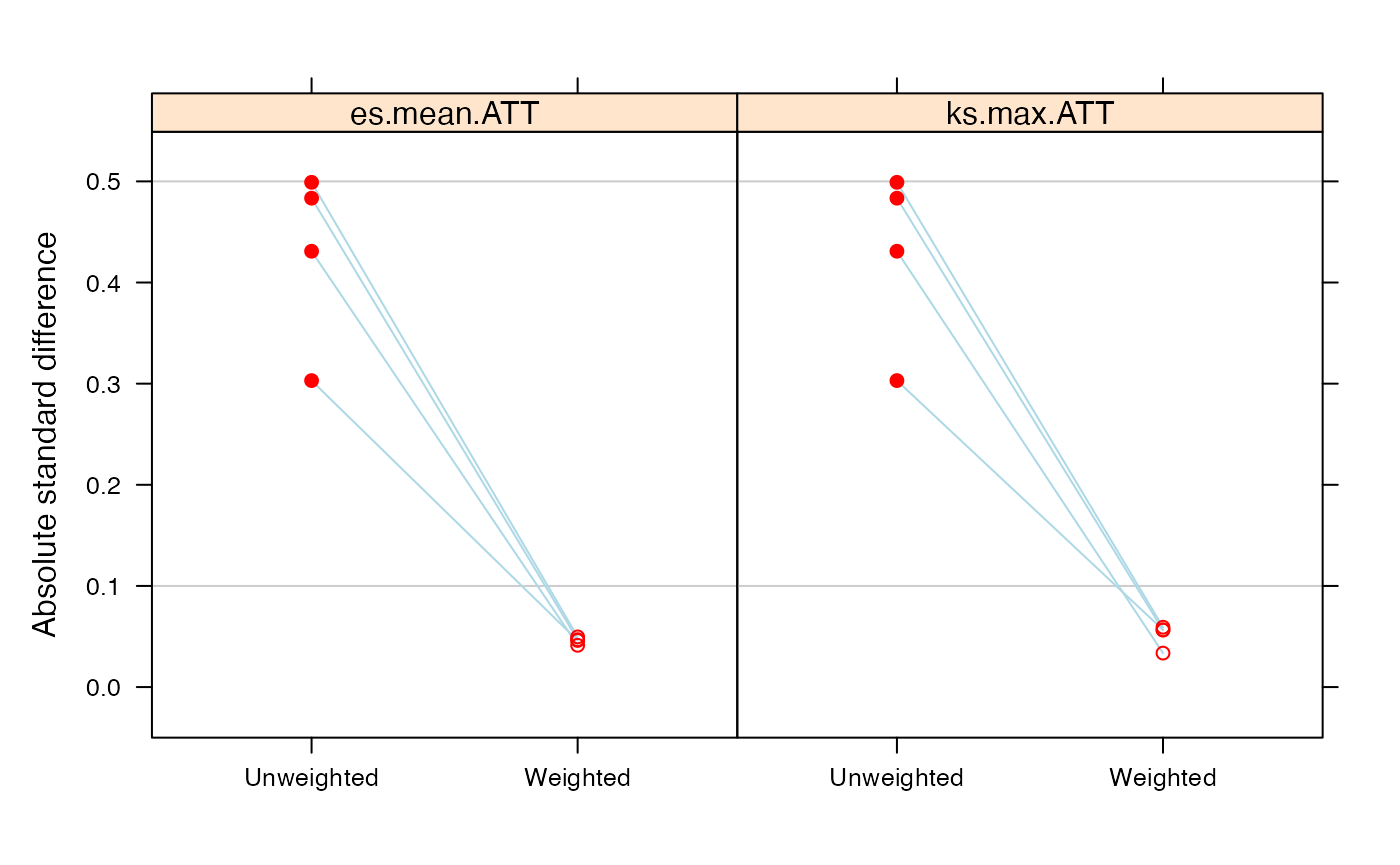

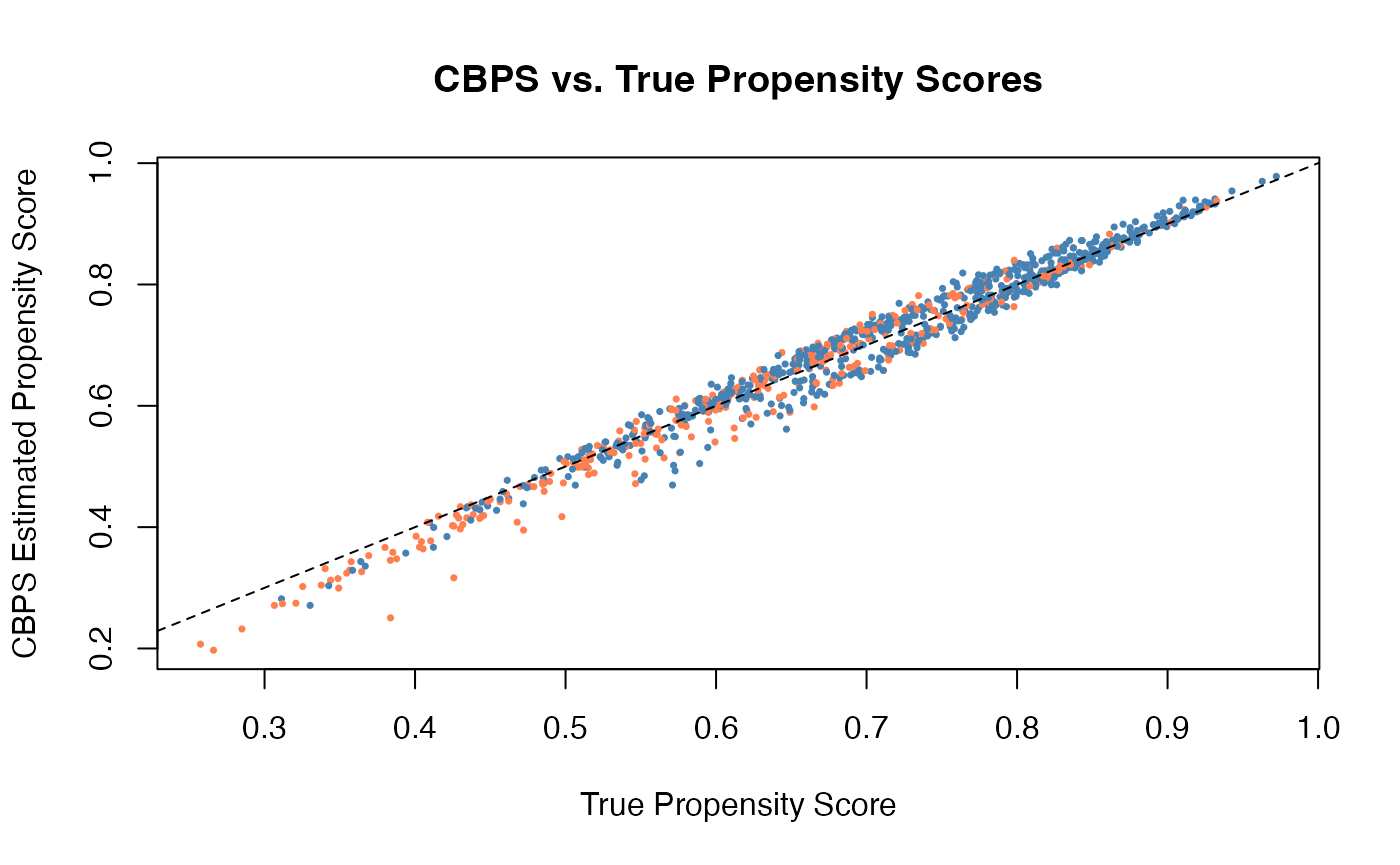

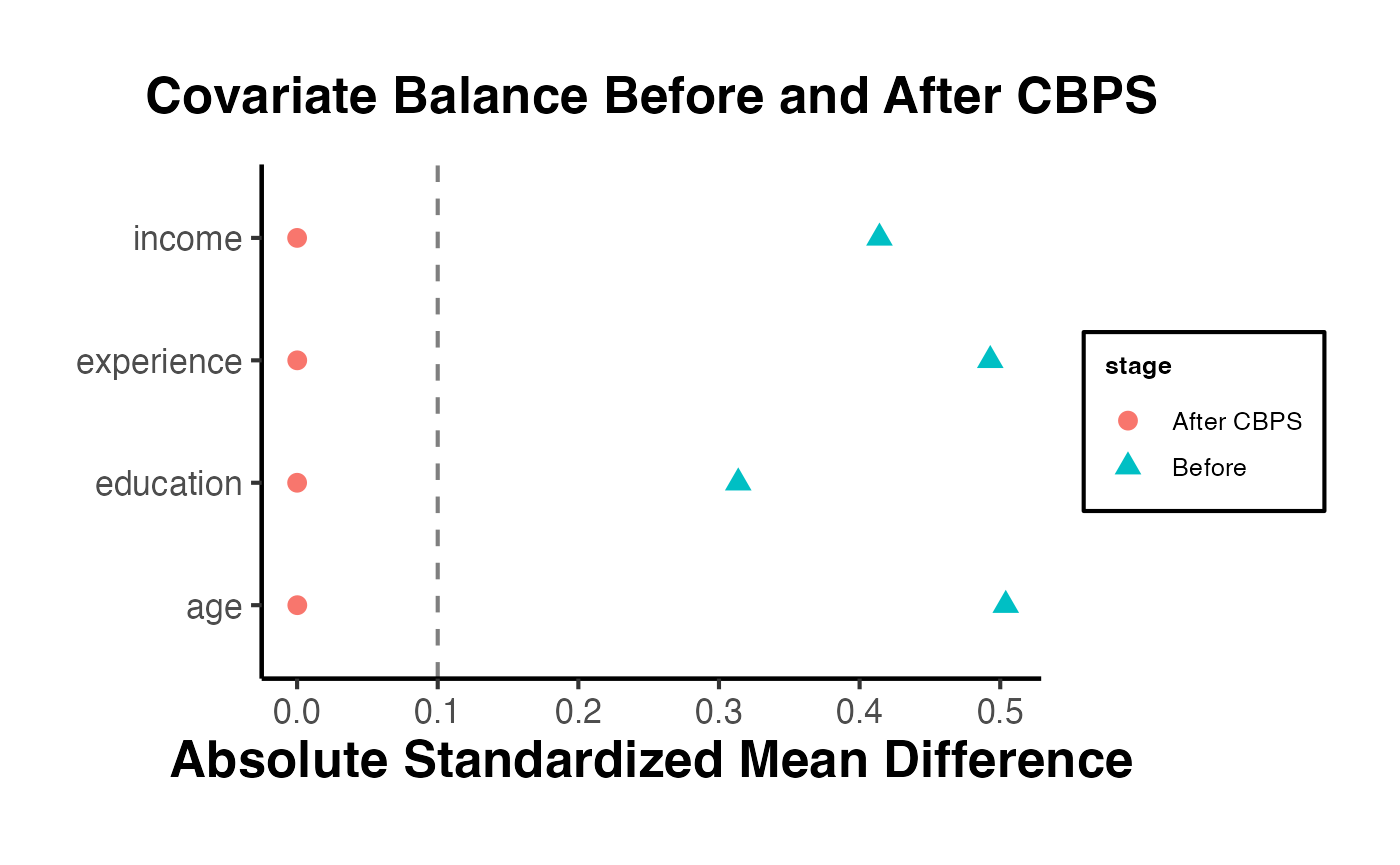

#> Adjusted 183.24 6876.3 Covariate Balancing Propensity Score (CBPS)

CBPS (Imai and Ratkovic, 2014) jointly estimates the propensity score and optimizes covariate balance. It modifies the propensity score estimation to explicitly target balance, rather than treating balance as a byproduct.

w_cbps <- weightit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "cbps",

estimand = "ATT"

)

summary(w_cbps)

#> Summary of weights

#>

#> - Weight ranges:

#>

#> Min Max

#> treated 1. || 1.

#> control 0.245 |---------------------------| 15.165

#>

#> - Units with the 5 most extreme weights by group:

#>

#> 1 2 3 4 5

#> treated 1 1 1 1 1

#> 215 200 158 57 43

#> control 8.689 9.243 12.076 12.798 15.165

#>

#> - Weight statistics:

#>

#> Coef of Var MAD Entropy # Zeros

#> treated 0.000 0.00 0.000 0

#> control 0.843 0.56 0.265 0

#>

#> - Effective Sample Sizes:

#>

#> Control Treated

#> Unweighted 313. 687

#> Weighted 183.24 687

bal.tab(w_cbps, thresholds = c(m = 0.1))

#> Balance Measures

#> Type Diff.Adj M.Threshold

#> prop.score Distance 0.0105 Balanced, <0.1

#> age Contin. -0.0000 Balanced, <0.1

#> income Contin. -0.0000 Balanced, <0.1

#> education Binary 0.0000 Balanced, <0.1

#> experience Contin. 0.0000 Balanced, <0.1

#>

#> Balance tally for mean differences

#> count

#> Balanced, <0.1 5

#> Not Balanced, >0.1 0

#>

#> Variable with the greatest mean difference

#> Variable Diff.Adj M.Threshold

#> income -0 Balanced, <0.1

#>

#> Effective sample sizes

#> Control Treated

#> Unadjusted 313. 687

#> Adjusted 183.24 6876.4 Energy Balancing

Energy balancing minimizes the energy distance between the weighted control distribution and the treated distribution. Unlike moment-based methods, it targets balance on the entire distribution, not just selected moments.

w_energy <- weightit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "energy",

estimand = "ATT"

)

summary(w_energy)

#> Summary of weights

#>

#> - Weight ranges:

#>

#> Min Max

#> treated 1 || 1.

#> control 0 |---------------------------| 7.294

#>

#> - Units with the 5 most extreme weights by group:

#>

#> 1 2 3 4 5

#> treated 1 1 1 1 1

#> 241 160 158 146 8

#> control 4.799 4.941 5.064 5.199 7.294

#>

#> - Weight statistics:

#>

#> Coef of Var MAD Entropy # Zeros

#> treated 0.000 0.000 0.000 0

#> control 1.075 0.736 0.472 0

#>

#> - Effective Sample Sizes:

#>

#> Control Treated

#> Unweighted 313. 687

#> Weighted 145.47 687

bal.tab(w_energy, thresholds = c(m = 0.1))

#> Balance Measures

#> Type Diff.Adj M.Threshold

#> age Contin. 0.0095 Balanced, <0.1

#> income Contin. 0.0046 Balanced, <0.1

#> education Binary 0.0009 Balanced, <0.1

#> experience Contin. 0.0070 Balanced, <0.1

#>

#> Balance tally for mean differences

#> count

#> Balanced, <0.1 4

#> Not Balanced, >0.1 0

#>

#> Variable with the greatest mean difference

#> Variable Diff.Adj M.Threshold

#> age 0.0095 Balanced, <0.1

#>

#> Effective sample sizes

#> Control Treated

#> Unadjusted 313. 687

#> Adjusted 145.47 6876.5 Estimation After Weighting

# Using survey-weighted regression

library(survey)

#> Loading required package: grid

#> Loading required package: Matrix

#>

#> Attaching package: 'Matrix'

#> The following objects are masked from 'package:tidyr':

#>

#> expand, pack, unpack

#> Loading required package: survival

#>

#> Attaching package: 'survey'

#> The following object is masked from 'package:WeightIt':

#>

#> calibrate

#> The following object is masked from 'package:graphics':

#>

#> dotchart

d_ipw <- svydesign(ids = ~1, weights = ~weights, data = data.frame(

sim_data, weights = w_ipw$weights

))

svyglm_fit <- svyglm(outcome ~ treatment, design = d_ipw)

summary(svyglm_fit)

#>

#> Call:

#> svyglm(formula = outcome ~ treatment, design = d_ipw)

#>

#> Survey design:

#> svydesign(ids = ~1, weights = ~weights, data = data.frame(sim_data,

#> weights = w_ipw$weights))

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 41.9302 0.6232 67.28 <2e-16 ***

#> treatment 8.5259 0.7193 11.85 <2e-16 ***

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> (Dispersion parameter for gaussian family taken to be 77.06272)

#>

#> Number of Fisher Scoring iterations: 2

# Or more simply using lm with weights

lm_weighted <- lm(

outcome ~ treatment + age + income + education + experience,

data = sim_data,

weights = w_ebal$weights

)

summary(lm_weighted)

#>

#> Call:

#> lm(formula = outcome ~ treatment + age + income + education +

#> experience, data = sim_data, weights = w_ebal$weights)

#>

#> Weighted Residuals:

#> Min 1Q Median 3Q Max

#> -18.5923 -3.3084 0.0487 3.3269 17.9825

#>

#> Coefficients:

#> Estimate Std. Error t value Pr(>|t|)

#> (Intercept) 6.160e+00 1.438e+00 4.283 2.02e-05 ***

#> treatment 8.746e+00 3.411e-01 25.645 < 2e-16 ***

#> age 6.832e-01 5.172e-02 13.210 < 2e-16 ***

#> income 7.438e-05 1.063e-05 6.995 4.87e-12 ***

#> education 4.353e+00 3.181e-01 13.684 < 2e-16 ***

#> experience 7.602e-02 5.043e-02 1.507 0.132

#> ---

#> Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

#>

#> Residual standard error: 5.001 on 994 degrees of freedom

#> Multiple R-squared: 0.7465, Adjusted R-squared: 0.7453

#> F-statistic: 585.5 on 5 and 994 DF, p-value: < 2.2e-16

coef(lm_weighted)["treatment"]

#> treatment

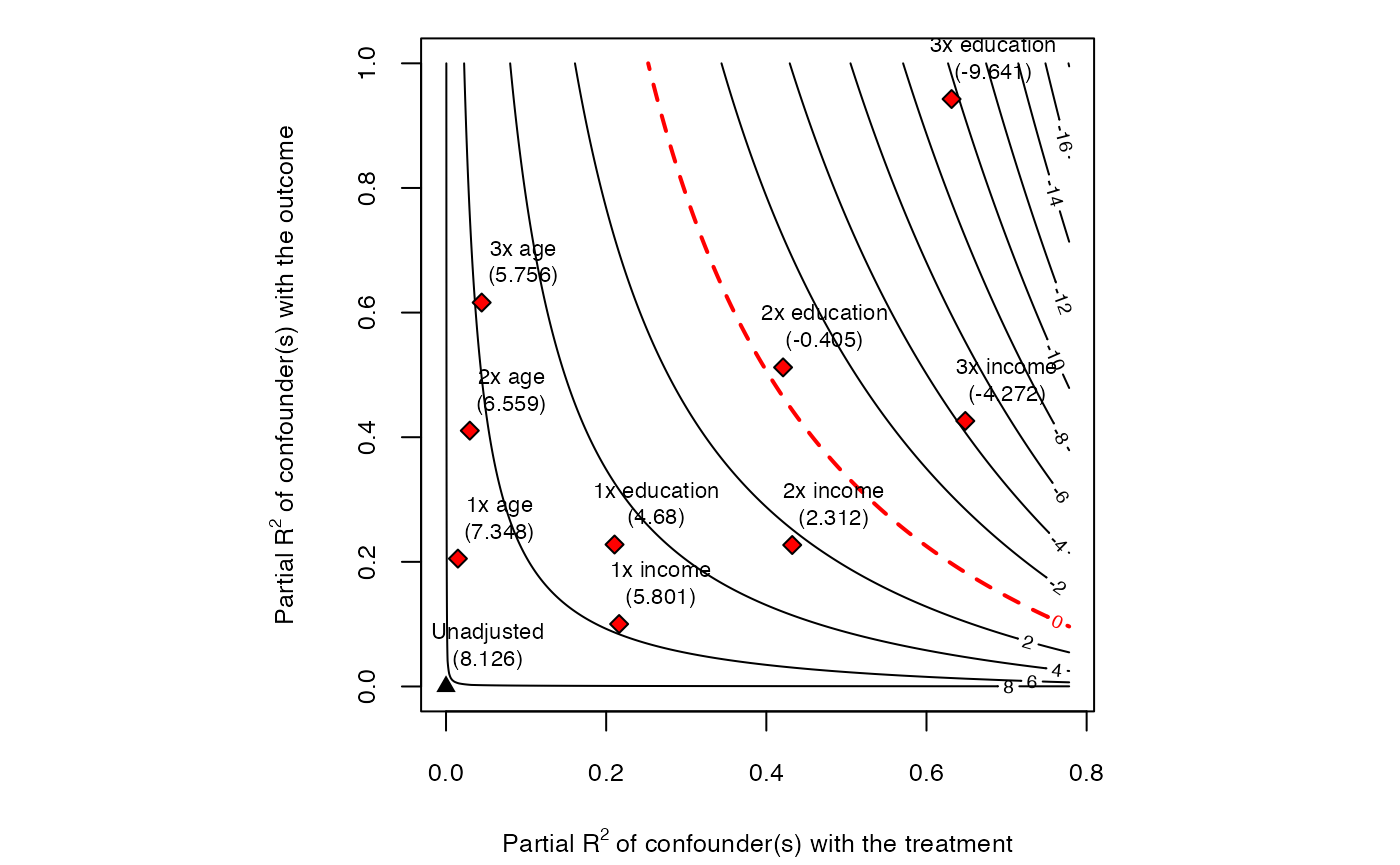

#> 8.7462817. Propensity Score Methods in Detail

7.1 Propensity Score Estimation

The propensity score is most commonly estimated via logistic regression, but flexible methods often perform better.

# Logistic regression

ps_logit <- glm(

treatment ~ age + income + education + experience + I(age^2) +

age:education + income:experience,

data = sim_data,

family = binomial(link = "logit")

)

sim_data$ps_logit <- predict(ps_logit, type = "response")

# Generalized Boosted Model (GBM)

library(gbm)

#> Loaded gbm 2.2.2

#> This version of gbm is no longer under development. Consider transitioning to gbm3, https://github.com/gbm-developers/gbm3

ps_gbm_fit <- gbm(

treatment ~ age + income + education + experience,

data = sim_data,

distribution = "bernoulli",

n.trees = 3000,

interaction.depth = 3,

shrinkage = 0.01,

cv.folds = 5,

verbose = FALSE

)

best_iter <- gbm.perf(ps_gbm_fit, method = "cv", plot.it = FALSE)

sim_data$ps_gbm <- predict(ps_gbm_fit, n.trees = best_iter, type = "response")

# Compare PS distributions

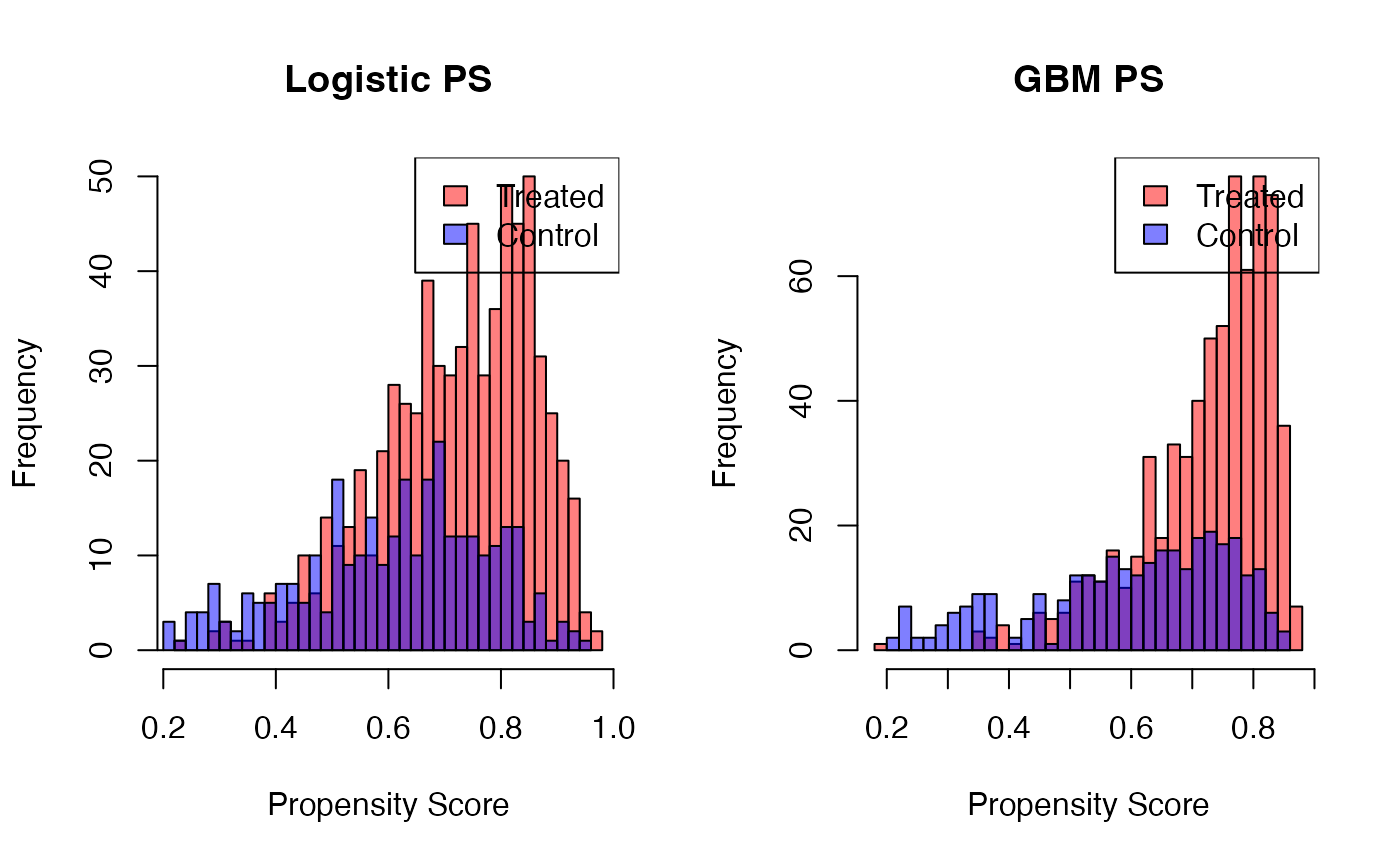

par(mfrow = c(1, 2))

hist(sim_data$ps_logit[sim_data$treatment == 1], col = rgb(1, 0, 0, 0.5),

main = "Logistic PS", xlab = "Propensity Score", breaks = 30)

hist(sim_data$ps_logit[sim_data$treatment == 0], col = rgb(0, 0, 1, 0.5),

add = TRUE, breaks = 30)

legend("topright", c("Treated", "Control"), fill = c(rgb(1,0,0,0.5), rgb(0,0,1,0.5)))

hist(sim_data$ps_gbm[sim_data$treatment == 1], col = rgb(1, 0, 0, 0.5),

main = "GBM PS", xlab = "Propensity Score", breaks = 30)

hist(sim_data$ps_gbm[sim_data$treatment == 0], col = rgb(0, 0, 1, 0.5),

add = TRUE, breaks = 30)

legend("topright", c("Treated", "Control"), fill = c(rgb(1,0,0,0.5), rgb(0,0,1,0.5)))

7.2 Propensity Score Matching

# Manual PS matching using MatchIt with a user-supplied PS

m_user_ps <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "nearest",

distance = sim_data$ps_logit, # user-supplied propensity scores

caliper = 0.1,

replace = FALSE

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

summary(m_user_ps)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "nearest", distance = sim_data$ps_logit,

#> replace = FALSE, caliper = 0.1)

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.7224 0.6092 0.8326 0.6590 0.1982

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> distance 0.2980

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> distance 0.6528 0.6407 0.0895 1.0495 0.0260

#> age 37.9173 37.4911 0.0434 1.1267 0.0197

#> income 47582.3080 47639.9007 -0.0041 0.9151 0.0133

#> education 0.3838 0.3345 0.0988 . 0.0493

#> experience 16.3531 15.5579 0.0800 1.0719 0.0249

#> eCDF Max Std. Pair Dist.

#> distance 0.0704 0.0921

#> age 0.0599 0.8005

#> income 0.0423 0.9317

#> education 0.0493 0.6637

#> experience 0.0634 0.7986

#>

#> Sample Sizes:

#> Control Treated

#> All 313 687

#> Matched 284 284

#> Unmatched 29 403

#> Discarded 0 07.3 Propensity Score Weighting (IPW / IPTW)

Inverse Probability of Treatment Weighting (IPTW) uses the propensity score to reweight the sample. The weights are:

- ATE weights:

- ATT weights:

# Manual IPTW for ATT

sim_data <- sim_data %>%

mutate(

ipw_att = ifelse(treatment == 1, 1,

ps_logit / (1 - ps_logit)),

ipw_ate = ifelse(treatment == 1, 1 / ps_logit,

1 / (1 - ps_logit))

)

# Stabilized weights (reduce variability)

sim_data <- sim_data %>%

mutate(

p_treat = mean(treatment),

sw_ate = ifelse(treatment == 1,

p_treat / ps_logit,

(1 - p_treat) / (1 - ps_logit))

)

# Weighted regression for ATT

lm_ipw_att <- lm(outcome ~ treatment, data = sim_data, weights = ipw_att)

coef(lm_ipw_att)["treatment"]

#> treatment

#> 8.6159797.4 Propensity Score Stratification

# Create 5 strata based on PS quantiles

sim_data$ps_stratum <- cut(

sim_data$ps_logit,

breaks = quantile(sim_data$ps_logit, probs = seq(0, 1, 0.2)),

include.lowest = TRUE,

labels = 1:5

)

# Within-stratum treatment effects

strat_effects <- sim_data %>%

group_by(ps_stratum) %>%

dplyr::summarise(

n_treat = sum(treatment),

n_control = sum(1 - treatment),

att_strat = mean(outcome[treatment == 1]) - mean(outcome[treatment == 0]),

.groups = "drop"

)

print(strat_effects)

#> # A tibble: 5 × 4

#> ps_stratum n_treat n_control att_strat

#> <fct> <int> <dbl> <dbl>

#> 1 1 91 109 10.0

#> 2 2 128 72 8.51

#> 3 3 139 61 8.79

#> 4 4 152 48 8.25

#> 5 5 177 23 10.9

# Weighted average (ATT weights = proportion of treated in each stratum)

strat_effects <- strat_effects %>%

mutate(weight = n_treat / sum(n_treat))

att_strat <- sum(strat_effects$att_strat * strat_effects$weight)

cat("Stratified ATT:", round(att_strat, 2), "\n")

#> Stratified ATT: 9.337.5 Trimming and Truncation

Extreme propensity scores can lead to highly variable weights and unstable estimates. Two remedies:

- Trimming: Discard observations with extreme propensity scores (e.g., outside ).

- Truncation (Winsorizing): Cap extreme weights at a percentile (e.g., the 99th percentile).

# Trimming: drop observations with extreme PS

sim_trimmed <- sim_data %>%

filter(ps_logit >= 0.05 & ps_logit <= 0.95)

cat("Observations retained after trimming:",

nrow(sim_trimmed), "of", nrow(sim_data), "\n")

#> Observations retained after trimming: 996 of 1000

# Re-estimate on trimmed sample

m_nn_trimmed <- matchit(

treatment ~ age + income + education + experience,

data = sim_trimmed,

method = "nearest"

)

#> Warning: Fewer control units than treated units; not all treated units will get

#> a match.

# Truncation: cap extreme weights

w_trunc <- w_ipw$weights

threshold <- quantile(w_trunc[w_trunc > 0], 0.99)

w_trunc[w_trunc > threshold] <- threshold

summary(w_trunc)

#> Min. 1st Qu. Median Mean 3rd Qu. Max.

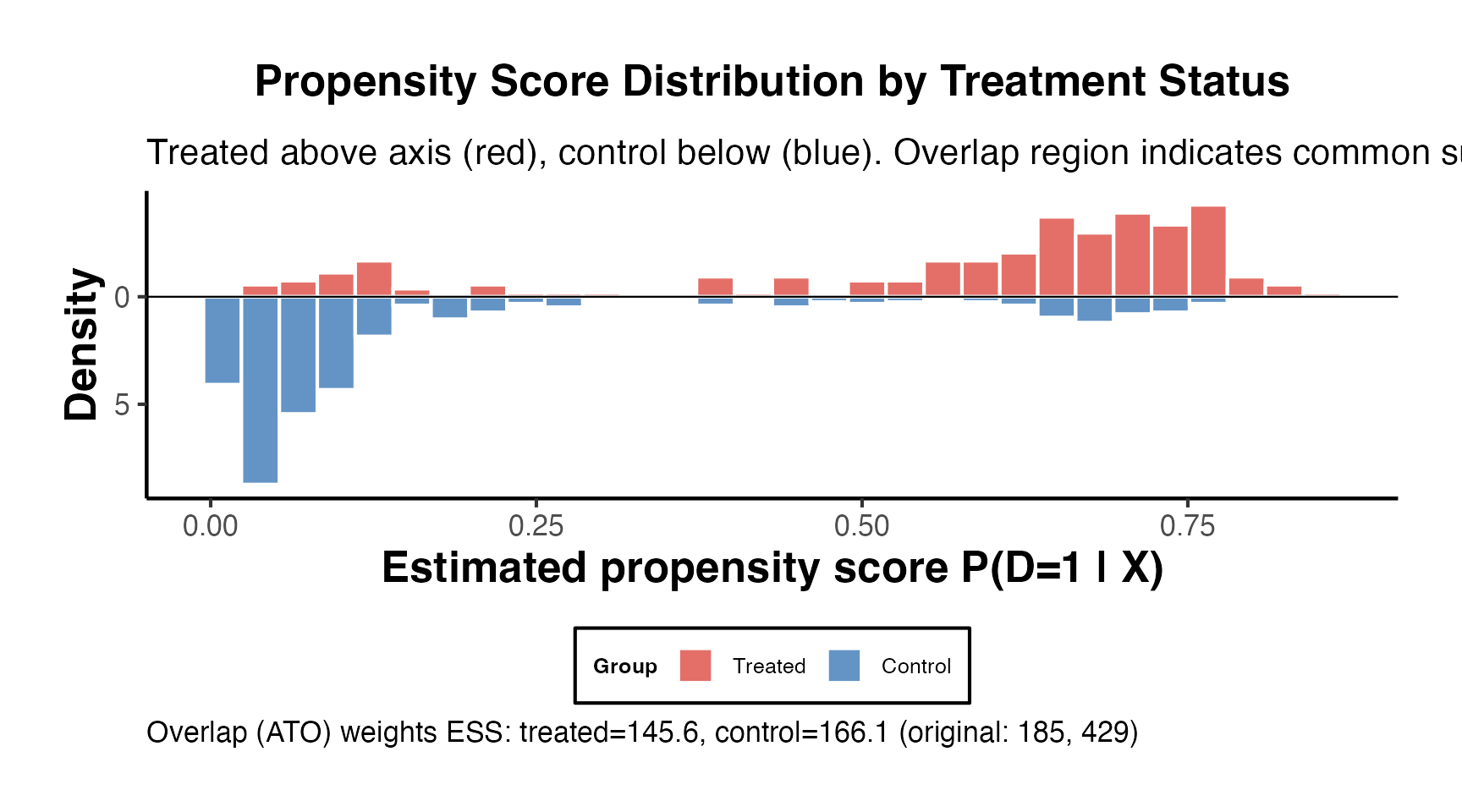

#> 0.2335 1.0000 1.0000 1.3488 1.0000 6.74677.6 Common Support Assessment

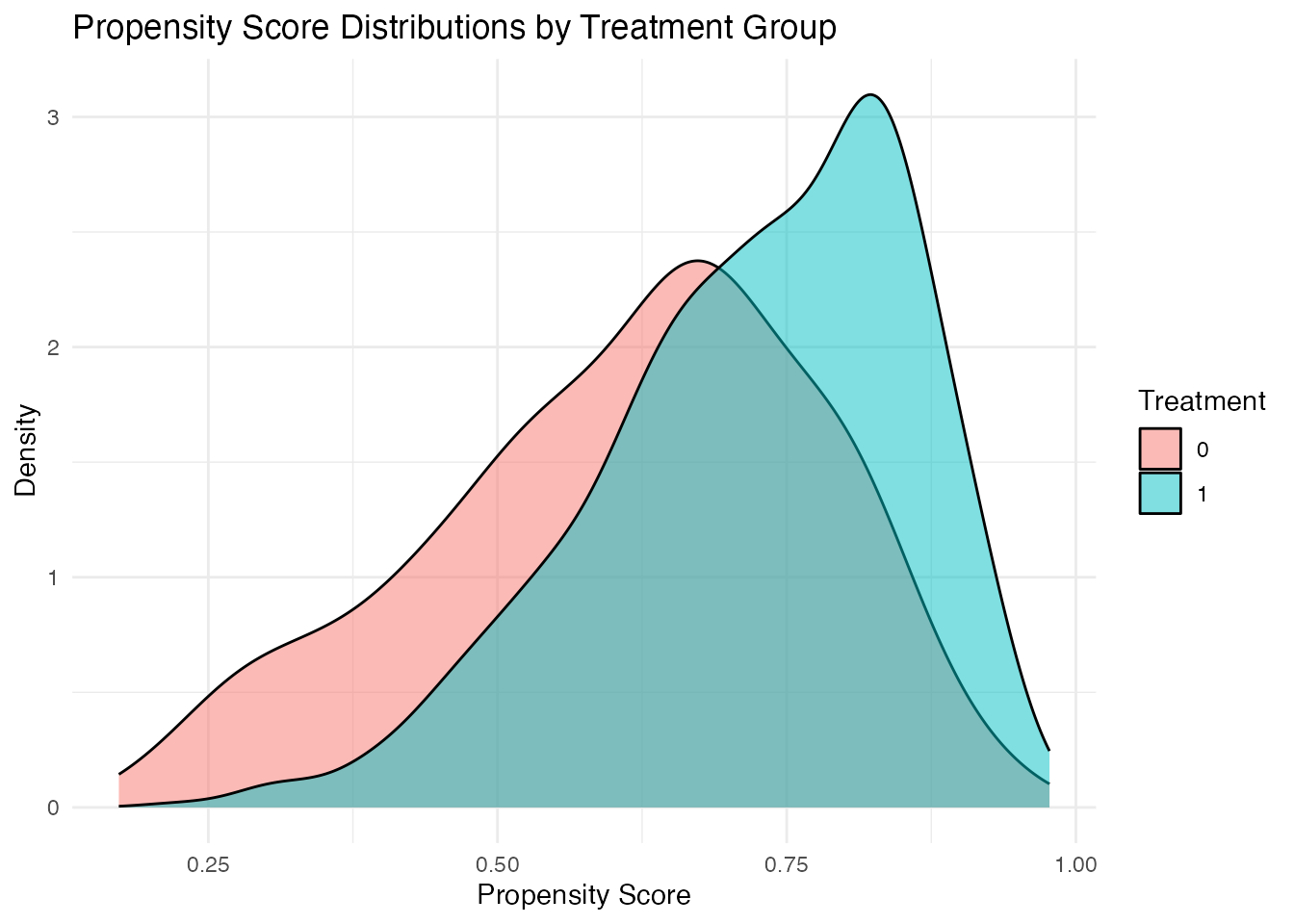

# Visual assessment of common support

ggplot(sim_data, aes(x = ps_logit, fill = factor(treatment))) +

geom_density(alpha = 0.5) +

labs(

title = "Propensity Score Distributions by Treatment Group",

x = "Propensity Score",

y = "Density",

fill = "Treatment"

) +

theme_minimal()

# Identify the region of common support

ps_range_treat <- range(sim_data$ps_logit[sim_data$treatment == 1])

ps_range_control <- range(sim_data$ps_logit[sim_data$treatment == 0])

common_support <- c(

max(ps_range_treat[1], ps_range_control[1]),

min(ps_range_treat[2], ps_range_control[2])

)

cat("Common support region:", round(common_support, 3), "\n")

#> Common support region: 0.225 0.95

# Fraction of observations within common support

in_support <- sim_data$ps_logit >= common_support[1] &

sim_data$ps_logit <= common_support[2]

cat("Fraction in common support:", mean(in_support), "\n")

#> Fraction in common support: 0.9938. Coarsened Exact Matching (CEM) in Depth

CEM (Iacus, King, and Porro, 2012) takes a fundamentally different approach from propensity score methods. Instead of modeling the treatment assignment mechanism, CEM directly imposes a bound on the maximum imbalance.

8.1 How CEM Works

The algorithm proceeds in three steps:

- Coarsen each continuous covariate into bins (strata). Binary and categorical variables are left as-is.

- Exact match on the coarsened covariates. Observations in strata containing both treated and control units are retained; others are discarded.

- Analyze the original (uncoarsened) data from matched strata, using stratum-level weights to account for different numbers of treated and control units per stratum.

The key property of CEM is the congruence principle: restricting the analysis to well-matched strata guarantees that the maximum imbalance (measured by the statistic) is bounded by the coarsening level. Finer coarsening yields better balance but more discarded observations.

library(MatchIt)

# CEM with automatic coarsening

m_cem_auto <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "cem"

)

summary(m_cem_auto)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "cem")

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 38.9883 38.9895 -0.0001 0.9613 0.0102

#> income 49848.0647 49287.1099 0.0396 1.0423 0.0162

#> education 0.4066 0.4066 -0.0000 . 0.0000

#> experience 17.1065 16.9738 0.0133 0.9979 0.0117

#> eCDF Max Std. Pair Dist.

#> age 0.0451 0.1803

#> income 0.0665 0.2008

#> education 0.0000 0.0000

#> experience 0.0453 0.1605

#>

#> Sample Sizes:

#> Control Treated

#> All 313. 687

#> Matched (ESS) 154.41 455

#> Matched 254. 455

#> Unmatched 59. 232

#> Discarded 0. 0

# CEM with user-specified cut points

m_cem_custom <- matchit(

treatment ~ age + income + education + experience,

data = sim_data,

method = "cem",

cutpoints = list(

age = seq(20, 65, by = 5),

income = seq(10000, 90000, by = 10000),

experience = seq(0, 40, by = 5)

),

grouping = list(

education = list(c("0"), c("1"))

)

)

summary(m_cem_custom)

#>

#> Call:

#> matchit(formula = treatment ~ age + income + education + experience,

#> data = sim_data, method = "cem", cutpoints = list(age = seq(20,

#> 65, by = 5), income = seq(10000, 90000, by = 10000),

#> experience = seq(0, 40, by = 5)), grouping = list(education = list(c("0"),

#> c("1"))))

#>

#> Summary of Balance for All Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 41.2769 36.3723 0.4991 1.0399 0.1424

#> income 51830.4982 45727.4053 0.4309 0.8579 0.1167

#> education 0.4643 0.3131 0.3032 . 0.1512

#> experience 19.3843 14.5803 0.4834 1.0828 0.1432

#> eCDF Max

#> age 0.2365

#> income 0.1985

#> education 0.1512

#> experience 0.2200

#>

#> Summary of Balance for Matched Data:

#> Means Treated Means Control Std. Mean Diff. Var. Ratio eCDF Mean

#> age 39.1891 39.3196 -0.0133 1.0158 0.0107

#> income 50041.2755 49781.3746 0.0184 0.9556 0.0102

#> education 0.3679 0.3679 0.0000 . 0.0000

#> experience 17.2167 17.0889 0.0129 0.9992 0.0119

#> eCDF Max Std. Pair Dist.

#> age 0.0568 0.1552

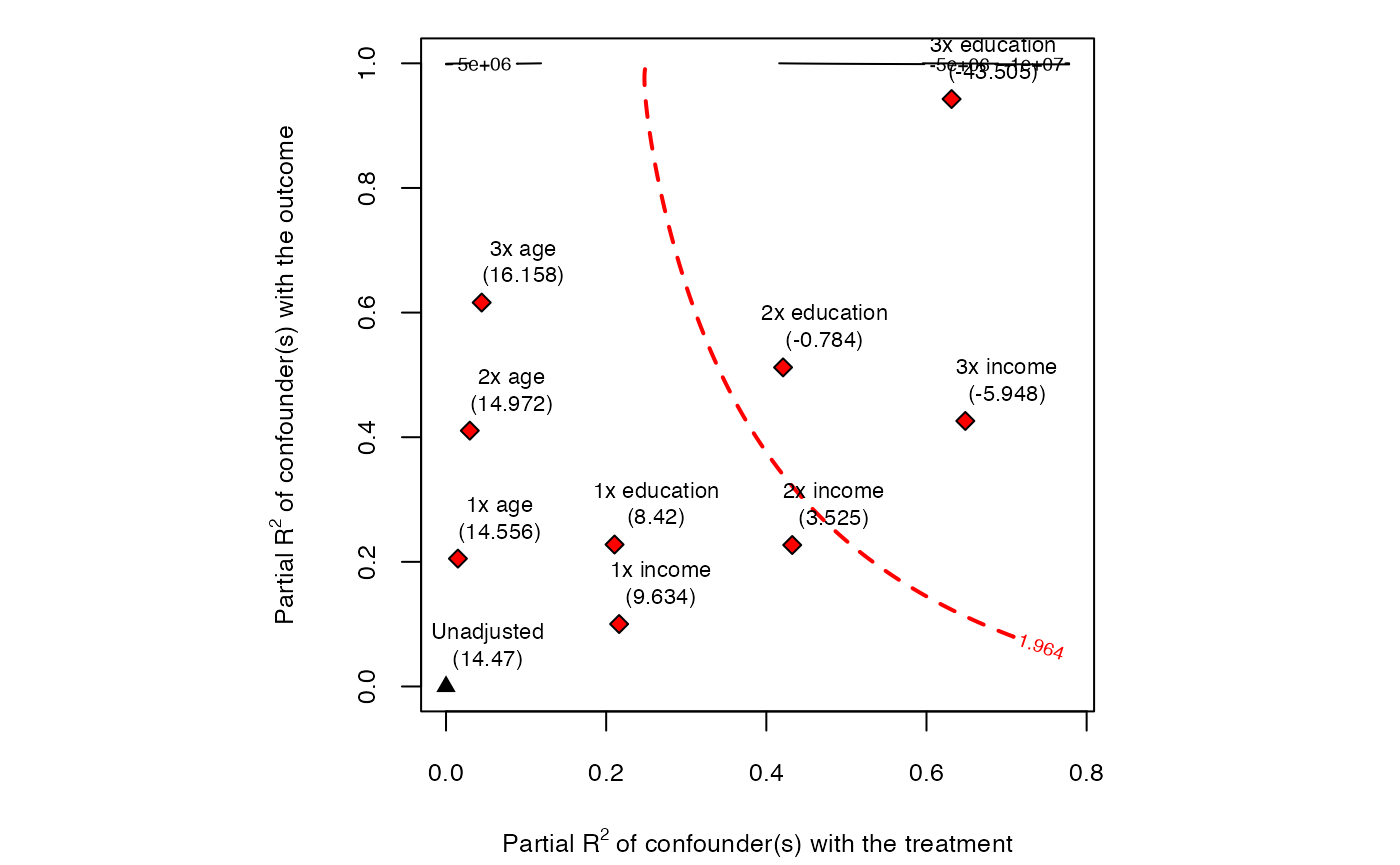

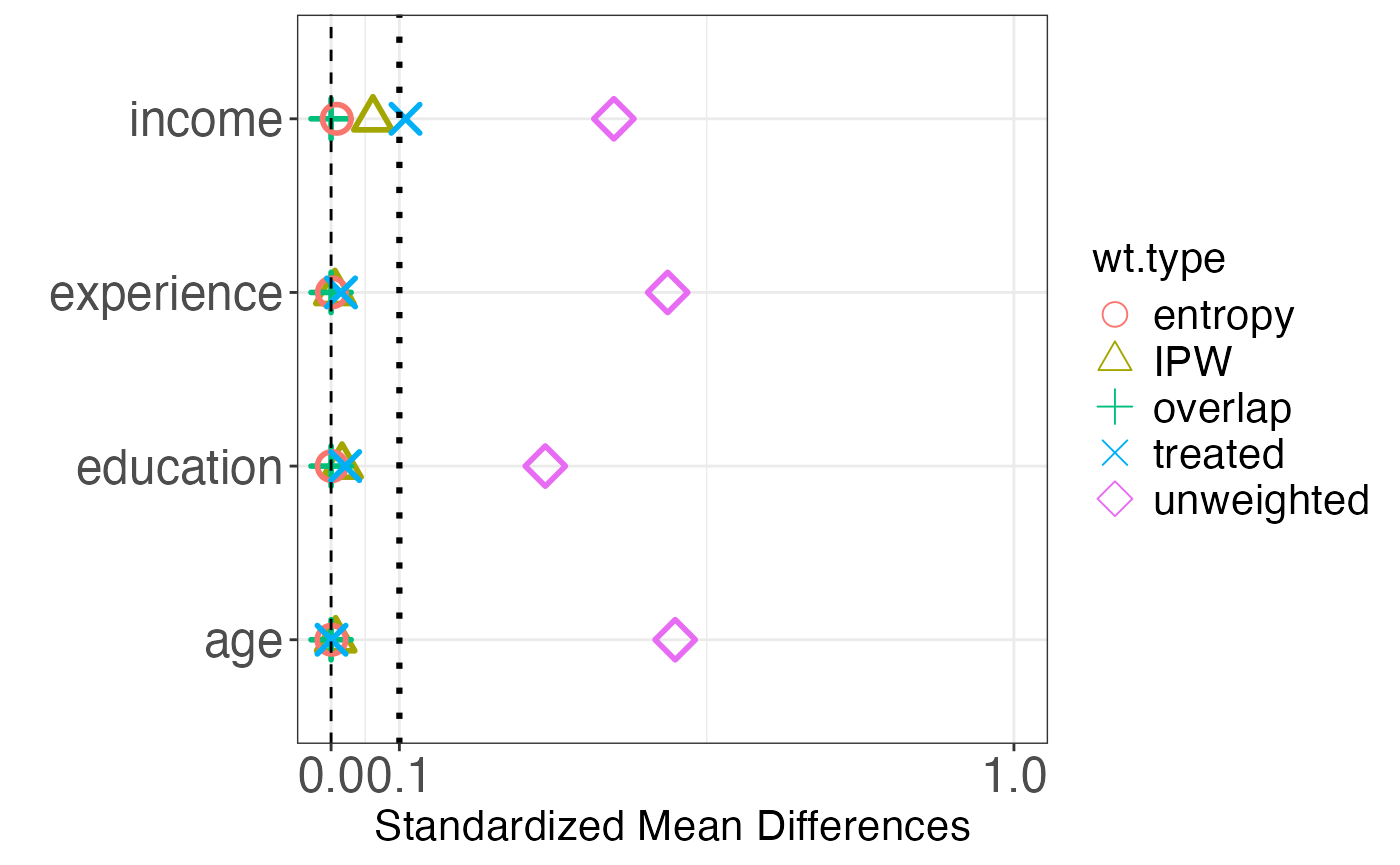

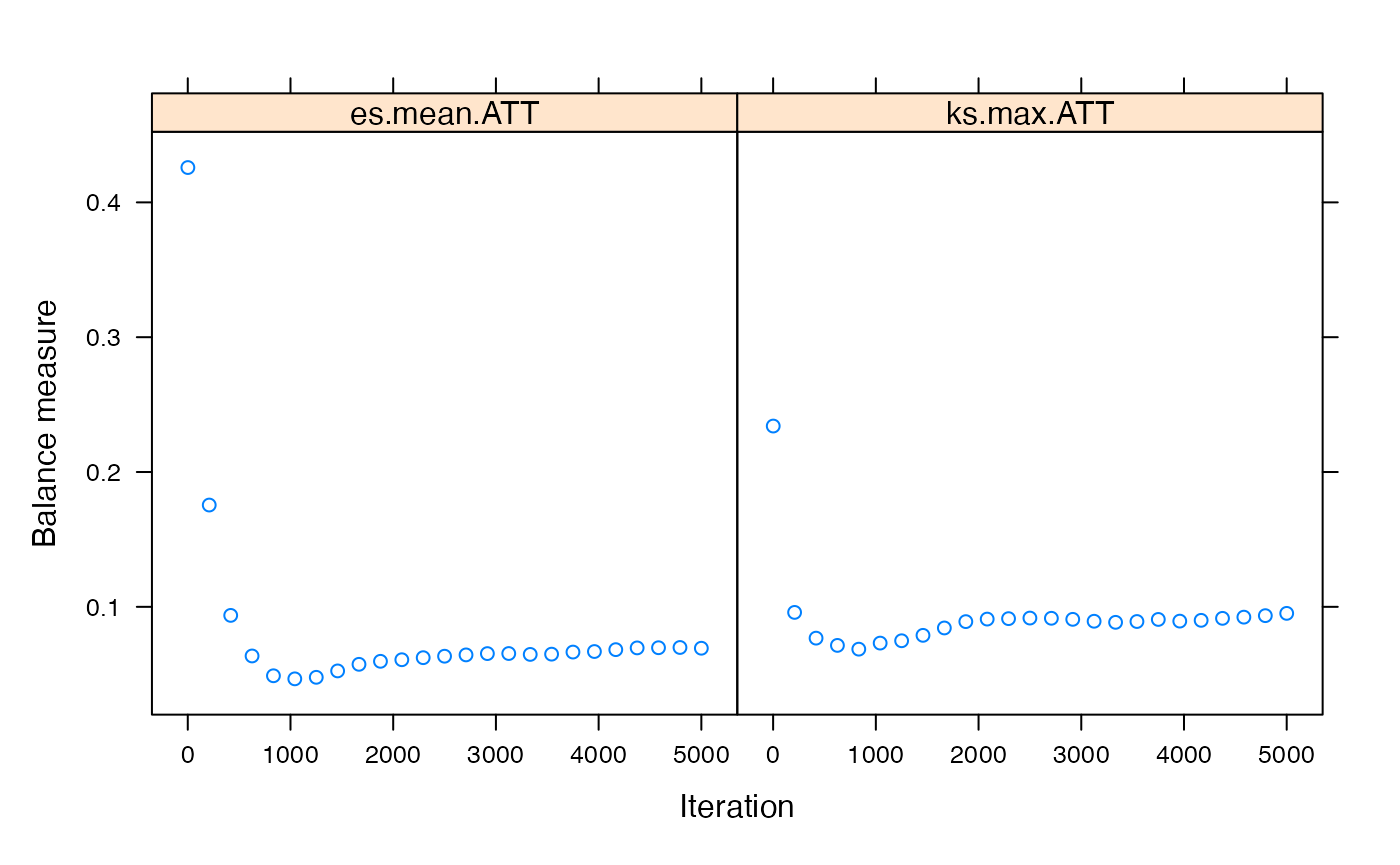

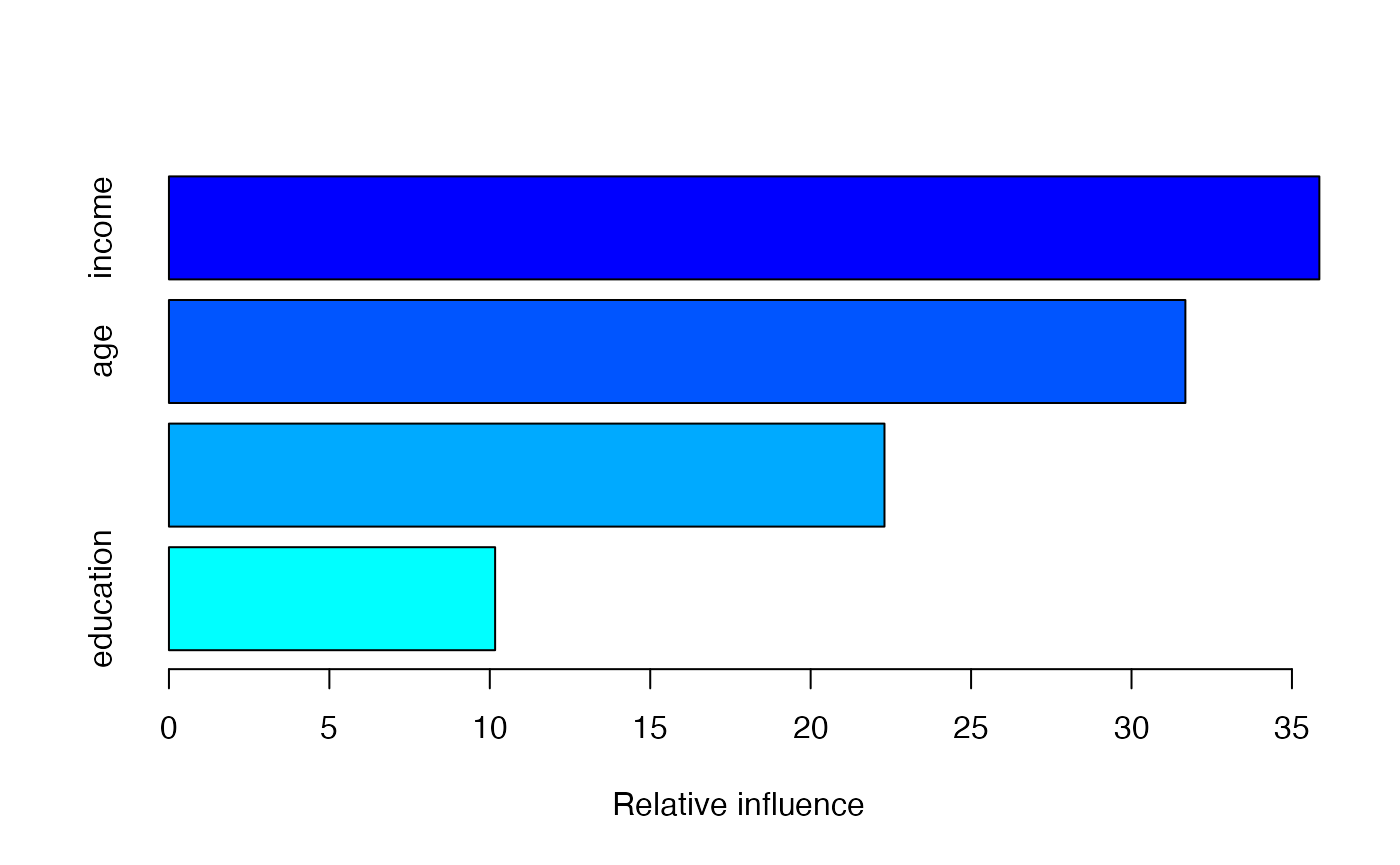

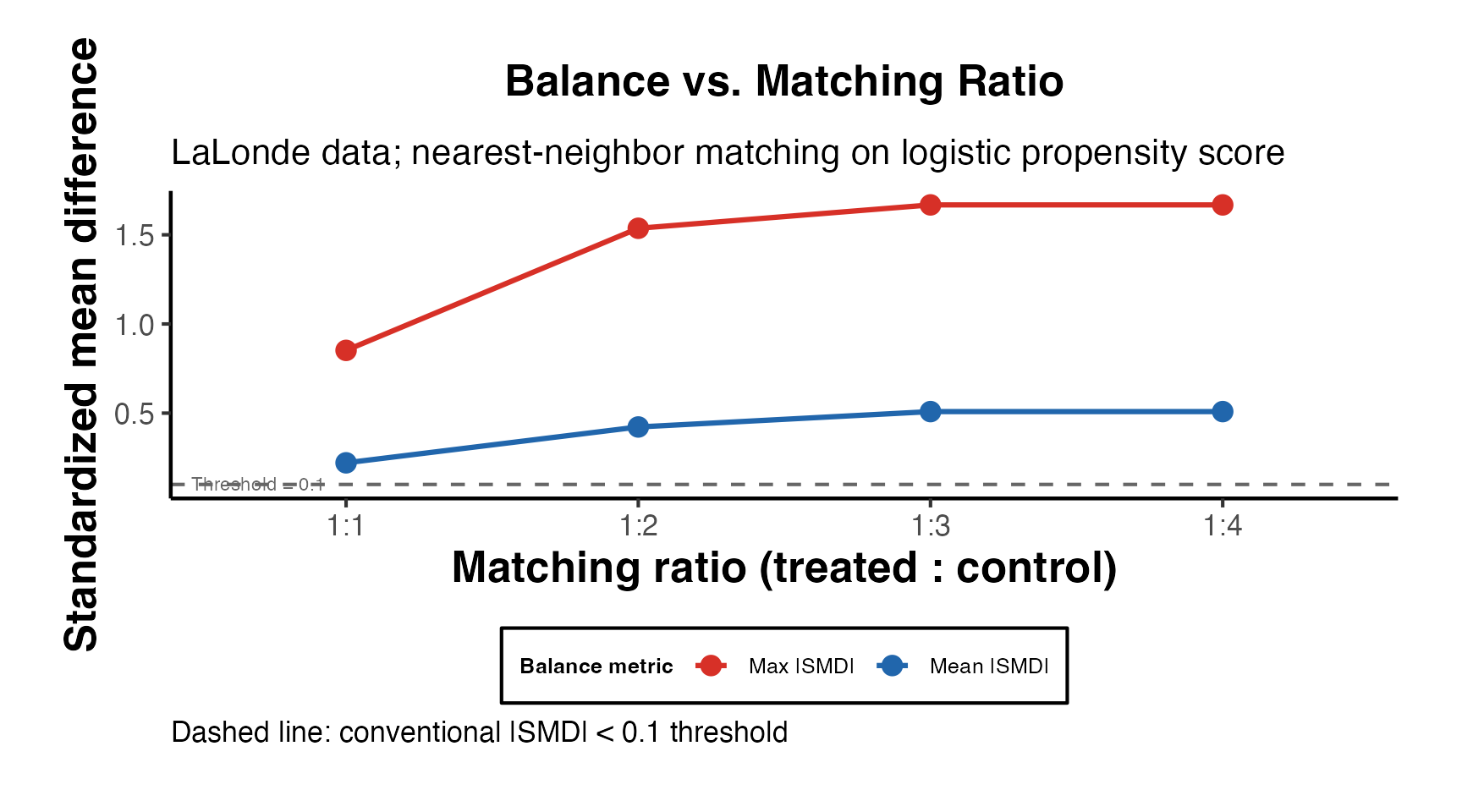

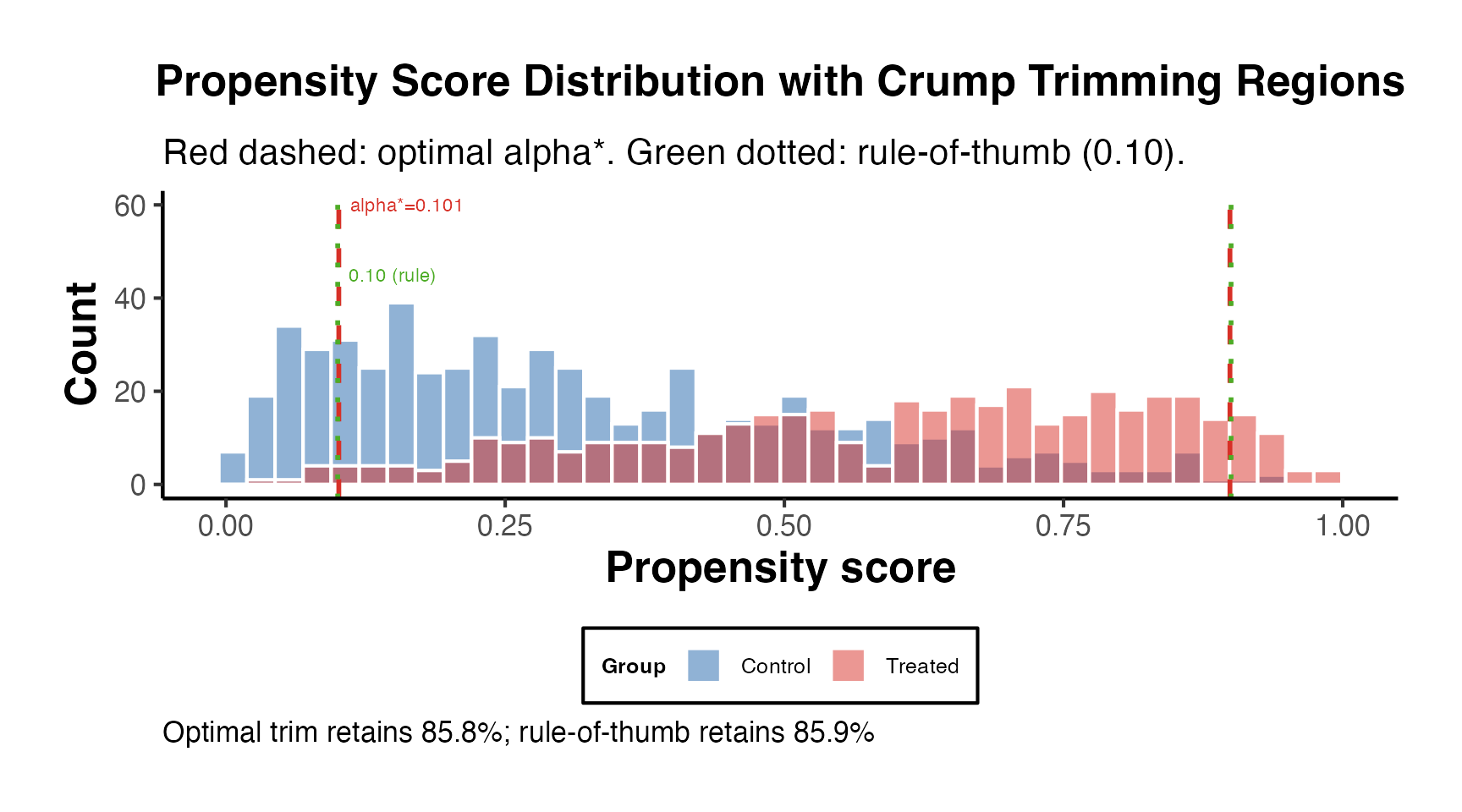

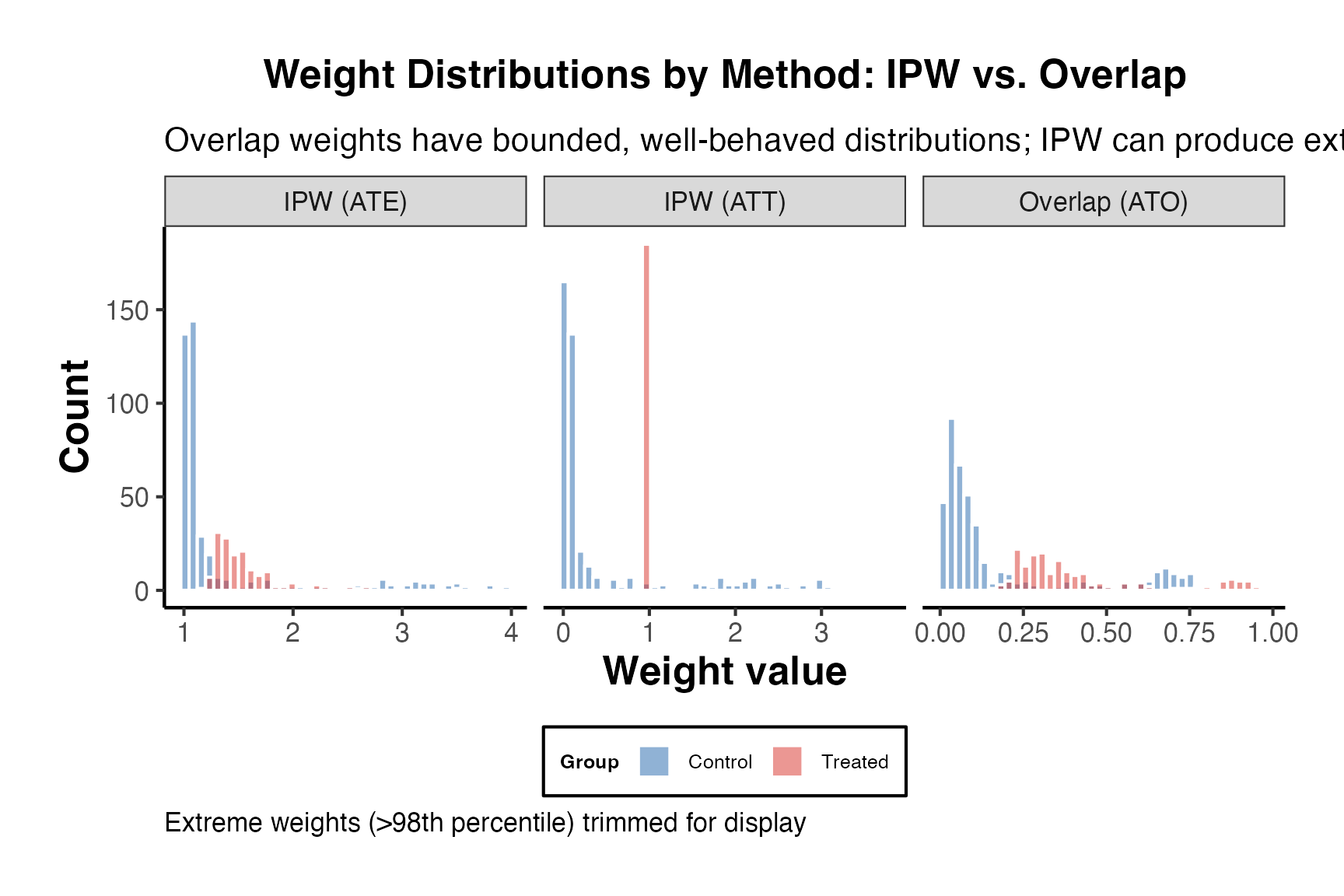

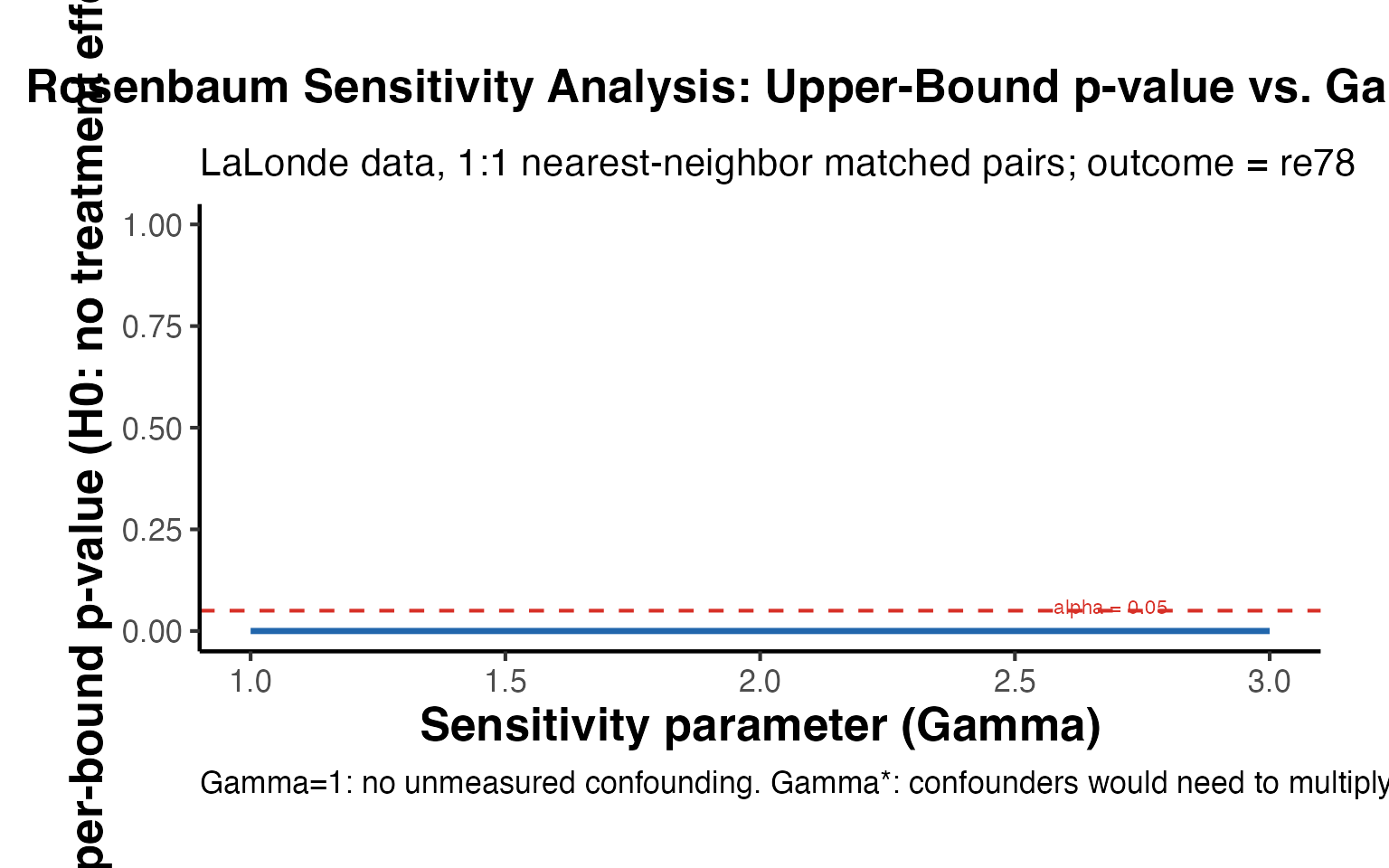

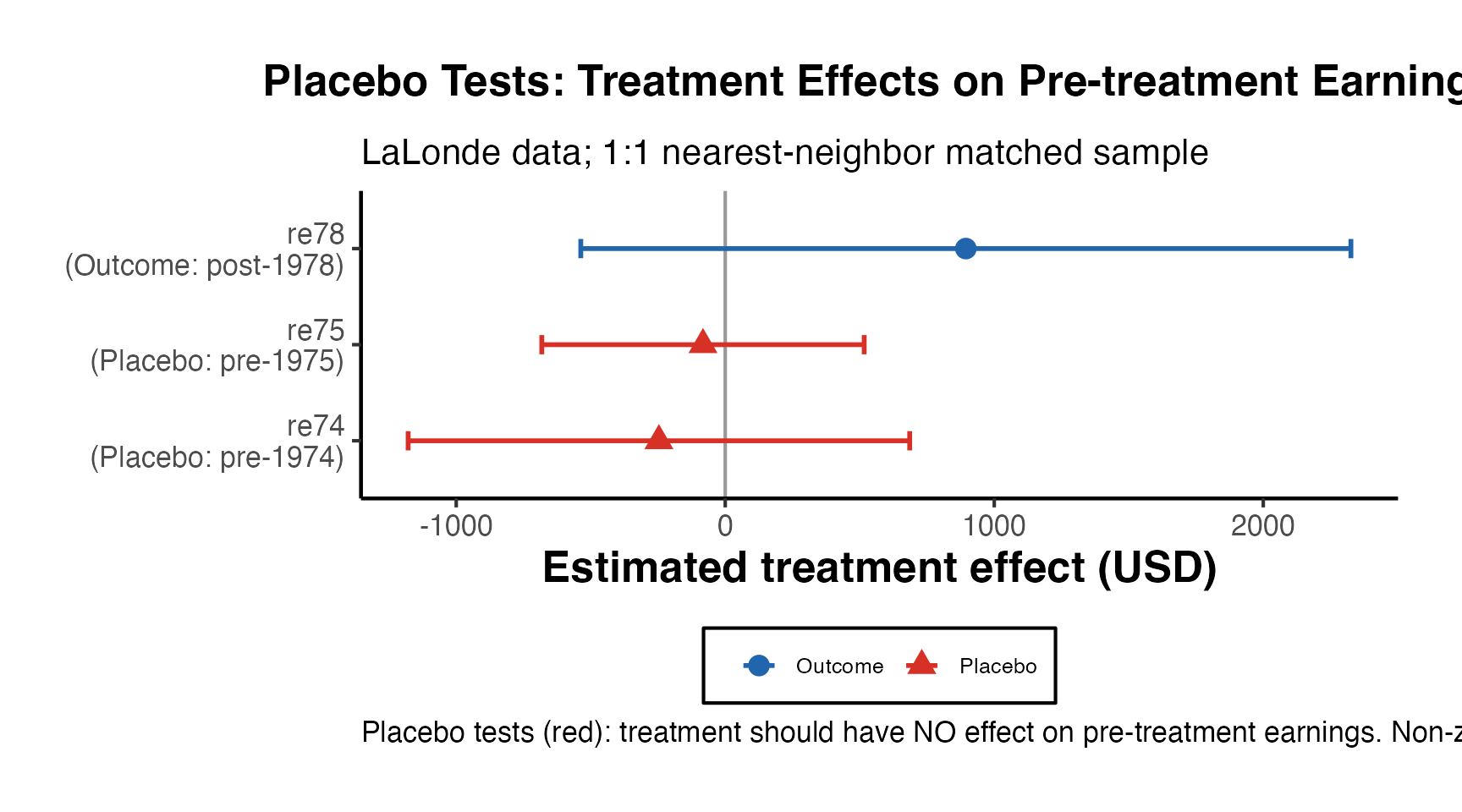

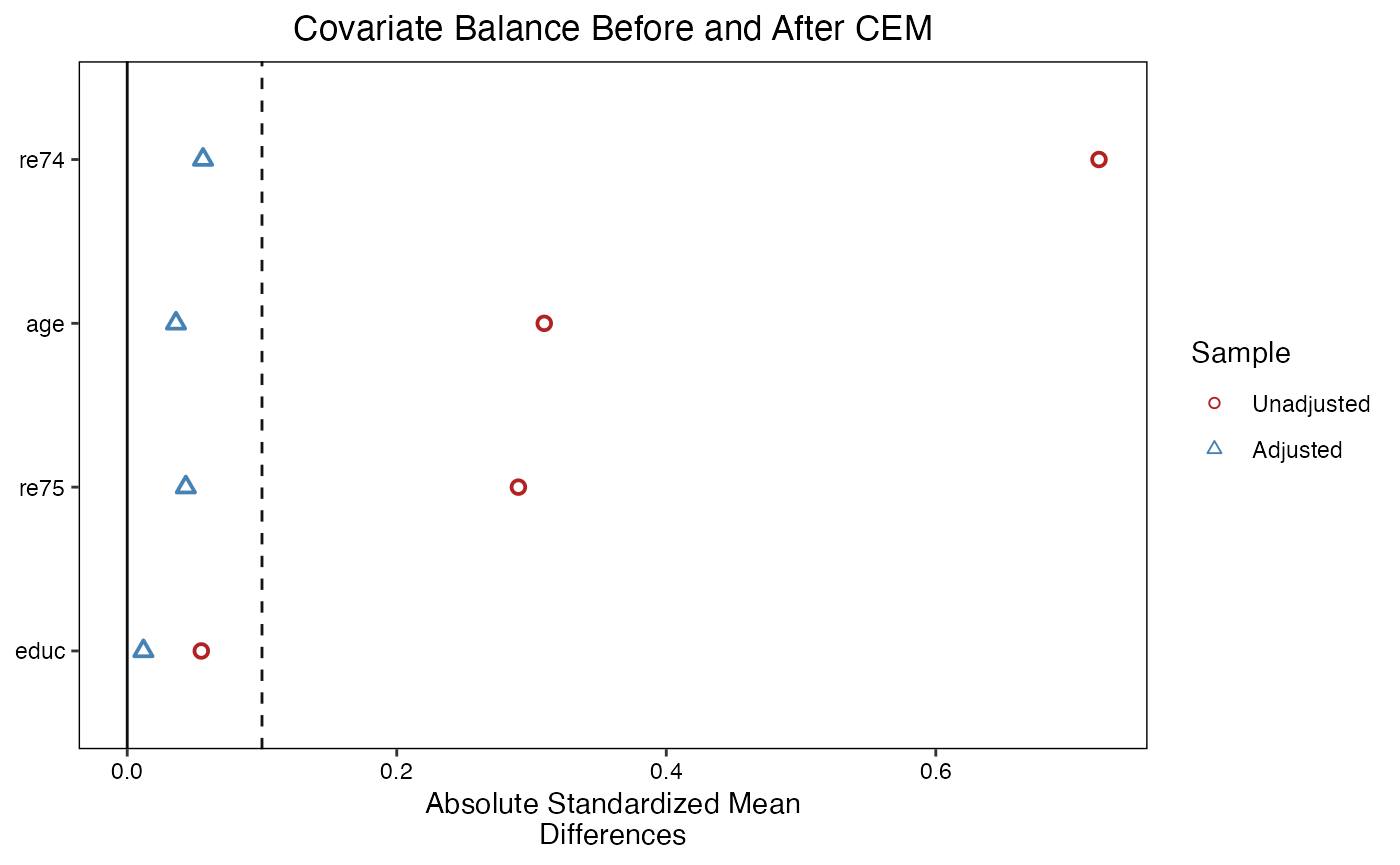

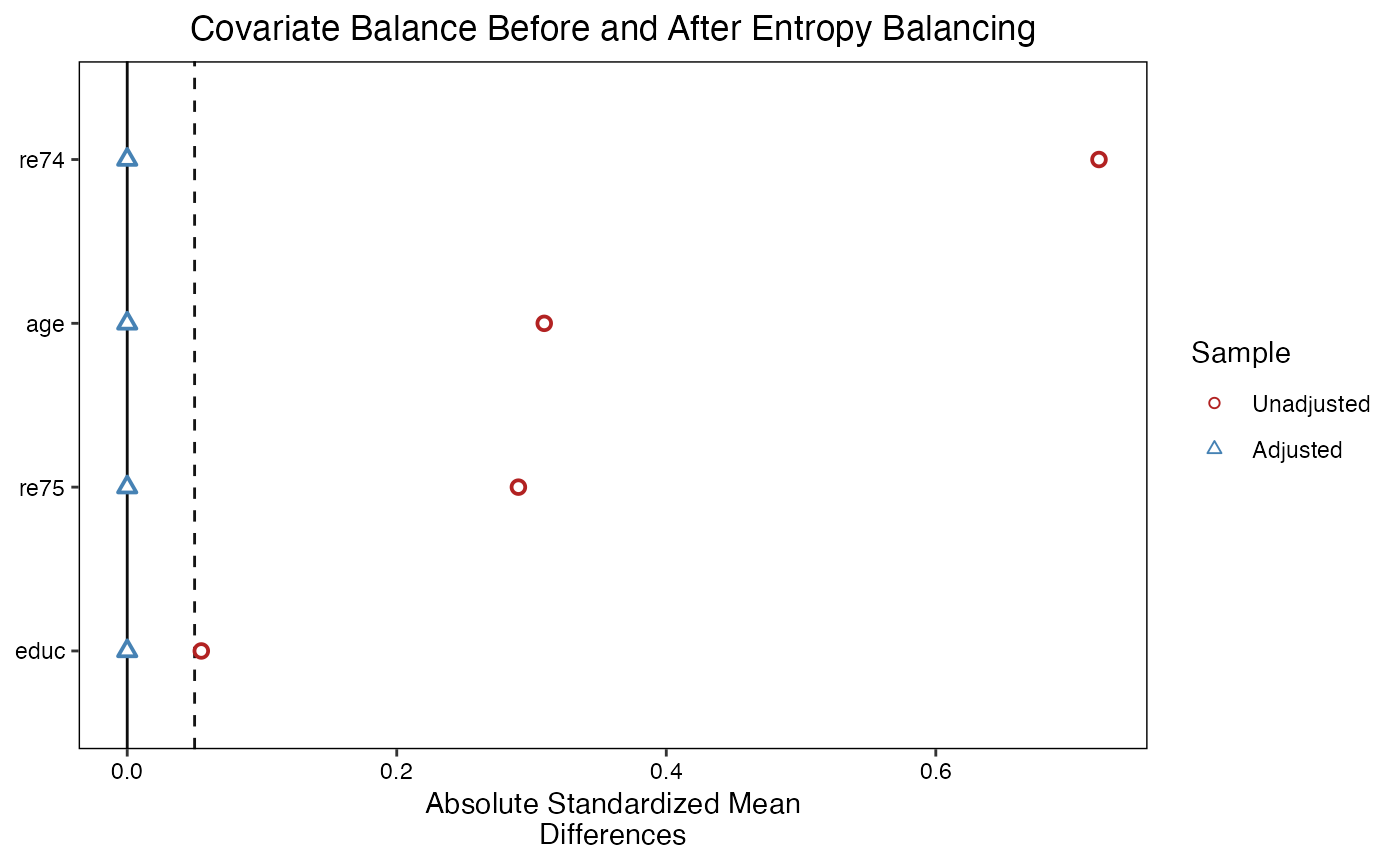

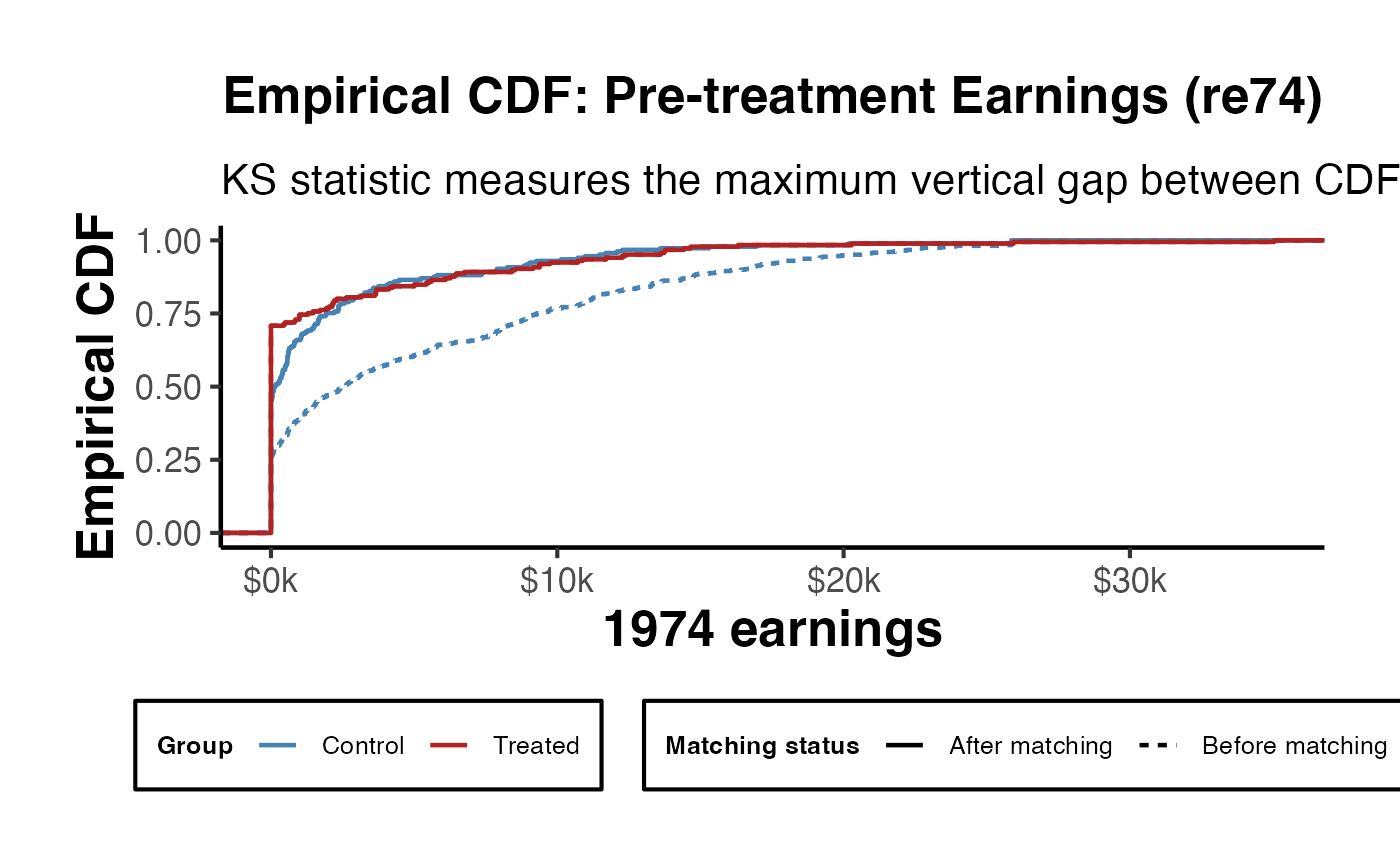

#> income 0.0417 0.2288