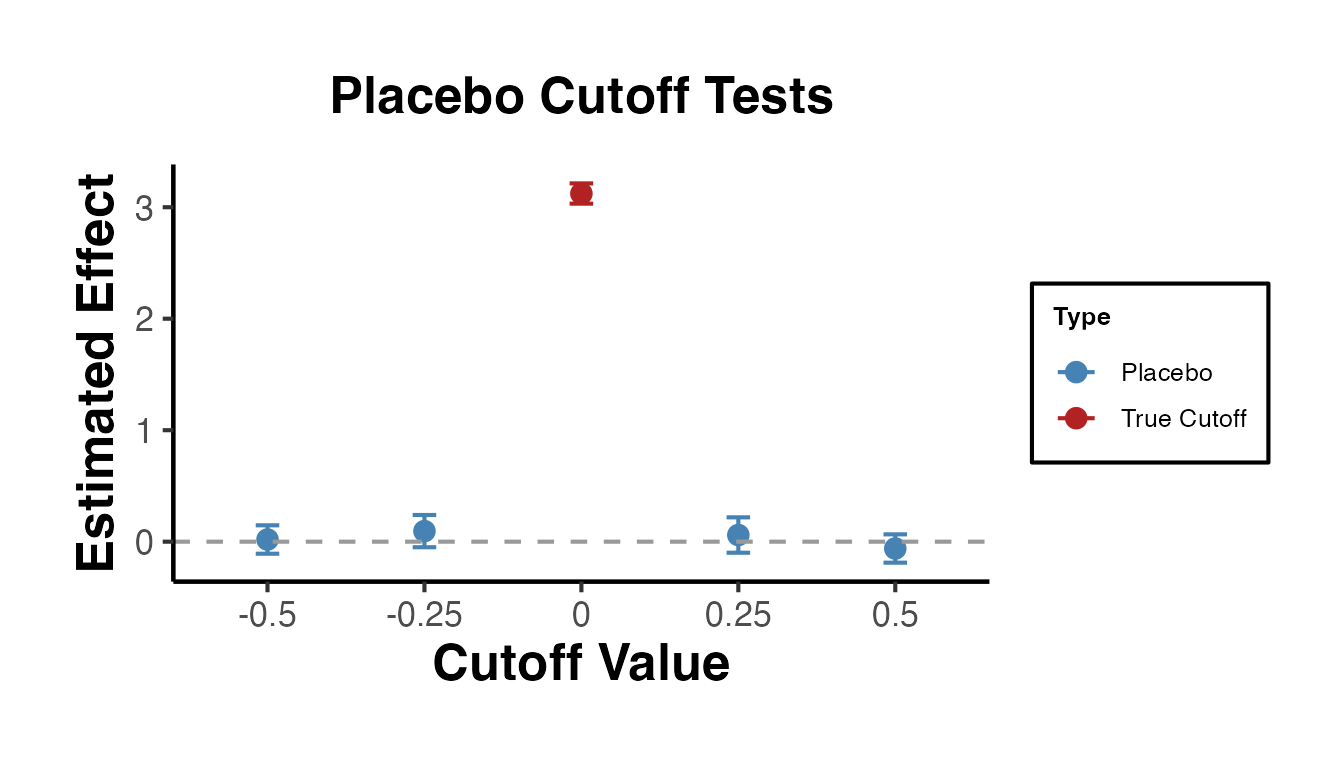

2. Regression Discontinuity

Mike Nguyen

2026-03-22

e_rd.Rmd

library(causalverse)1. Introduction to Regression Discontinuity Designs

Regression Discontinuity (RD) designs are among the most credible quasi-experimental methods for estimating causal effects. The idea is simple: when treatment assignment is determined by whether a continuous running variable (also called a forcing variable or score) crosses a known cutoff, units just above and just below the cutoff are nearly identical in all observable and unobservable characteristics. Any discrete jump in the outcome at the cutoff can therefore be attributed to the treatment.

1.1 Potential Outcomes Framework for RD

Let denote the running variable and the cutoff. Define a treatment indicator:

Each unit has two potential outcomes: (outcome if treated) and (outcome if untreated). The observed outcome is:

The Sharp RD treatment effect at the cutoff is defined as:

Under the continuity assumption (see below), this equals:

This is the average treatment effect at the cutoff, a local estimand.

1.2 Key Identifying Assumptions

The validity of an RD design rests on several assumptions:

- Continuity of conditional expectations: The conditional expectation functions and are continuous at . This is the core assumption: the potential outcomes vary smoothly through the cutoff, so the only thing that changes discontinuously is treatment status. Formally:

No precise manipulation: Units cannot precisely control their value of the running variable to sort themselves above or below the cutoff. If individuals can manipulate their score to receive (or avoid) treatment, the continuity assumption fails because units just above and below the cutoff are no longer comparable. This assumption is testable via density tests (see Section 5 on

rddensity).SUTVA (Stable Unit Treatment Value Assumption): The treatment status of unit does not affect the outcome of unit , and there is a single version of treatment. In the RD context, this means that the cutoff-based rule creates a well-defined treatment with no spillovers across units near the cutoff.

Running variable is not affected by treatment: The running variable itself should be determined before (or independently of) treatment. The running variable is a pre-treatment characteristic.

1.3 Sharp vs. Fuzzy RD

Sharp RD: Treatment is a deterministic function of the running variable. Every unit above the cutoff is treated; every unit below is not:

The compliance is perfect. The treatment effect is identified by the jump in at .

Fuzzy RD: The probability of treatment changes discontinuously at the cutoff but not from 0 to 1. Some units above the cutoff are untreated (never-takers or defiers), and some below are treated (always-takers):

The Fuzzy RD estimand is a ratio, analogous to a Wald/IV estimand:

Under a monotonicity (no defiers) assumption, identifies the local average treatment effect (LATE) for compliers at the cutoff.

1.4 Continuity-Based vs. Local Randomization Approaches

There are two main frameworks for inference in RD designs:

Continuity-based approach: This is the standard framework. It relies on the continuity of conditional expectation functions and uses local polynomial regression to estimate the treatment effect. Inference is based on large-sample approximations. The rdrobust package implements this approach with optimal bandwidth selection and bias-corrected confidence intervals.

Local randomization approach: Near the cutoff, assignment to treatment is “as good as random.” Within a narrow window around the cutoff, the running variable is essentially noise, and treatment assignment is effectively randomized. This framework uses randomization inference (permutation tests) rather than large-sample asymptotics. The rdlocrand package implements this approach.

The choice between frameworks depends on the application. The continuity-based approach is more general, while the local randomization approach can provide more robust inference when a sufficiently narrow window of “as-if random” assignment exists.

2. Simulated Data

We create a rich simulated dataset that we will use throughout this vignette. The data-generating process features a running variable, treatment assignment at a cutoff of zero, a nonlinear outcome function, and several pre-determined covariates.

set.seed(42)

n <- 2000

rd_data <- tibble(

# Running variable: centered at 0

x = runif(n, -1, 1),

# Treatment: sharp assignment at cutoff = 0

treated = as.integer(x >= 0),

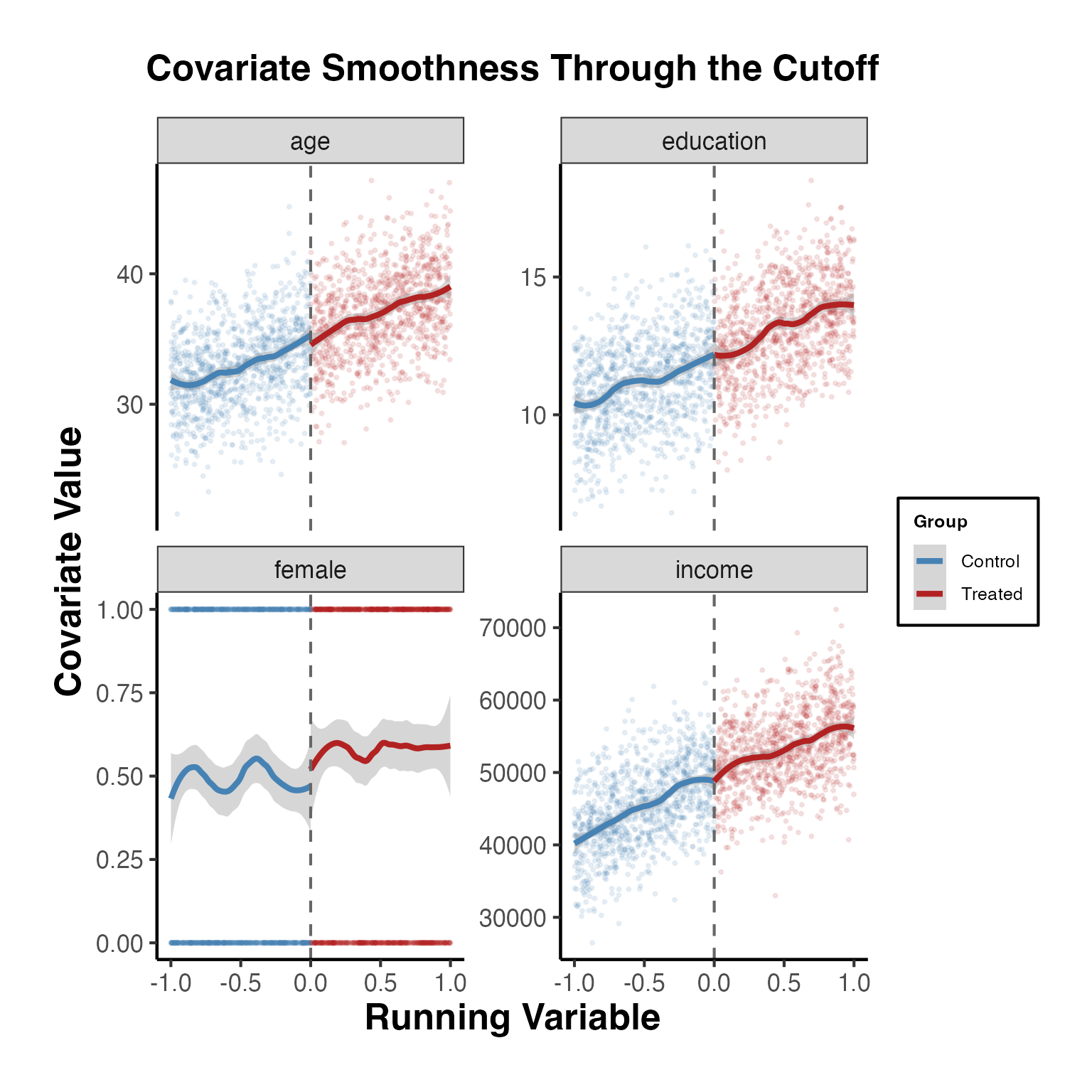

# Pre-determined covariates (should NOT jump at the cutoff)

age = 35 + 4 * x + rnorm(n, sd = 3),

female = rbinom(n, 1, prob = plogis(0.1 + 0.2 * x)),

education = 12 + 2 * x + 0.5 * x^2 + rnorm(n, sd = 1.5),

income = 50000 + 8000 * x - 1500 * x^2 + rnorm(n, sd = 5000),

# Potential outcomes

y0 = 2 + 1.5 * x + 0.8 * x^2 - 0.3 * x^3 + 0.5 * age / 35 + rnorm(n, sd = 0.5),

y1 = y0 + 3 + 0.5 * x, # Treatment effect = 3 at cutoff, varies with x

# Observed outcome

y = ifelse(treated == 1, y1, y0)

) |>

# Interaction of running variable with treatment (for separate slopes)

mutate(x_treated = x * treated)

# True treatment effect at the cutoff (x = 0)

# tau = 3 + 0.5 * 0 = 3

cat("True treatment effect at cutoff:", 3, "\n")

#> True treatment effect at cutoff: 3

cat("Sample size:", n, "\n")

#> Sample size: 2000

cat("Treated:", sum(rd_data$treated), "| Control:", sum(1 - rd_data$treated), "\n")

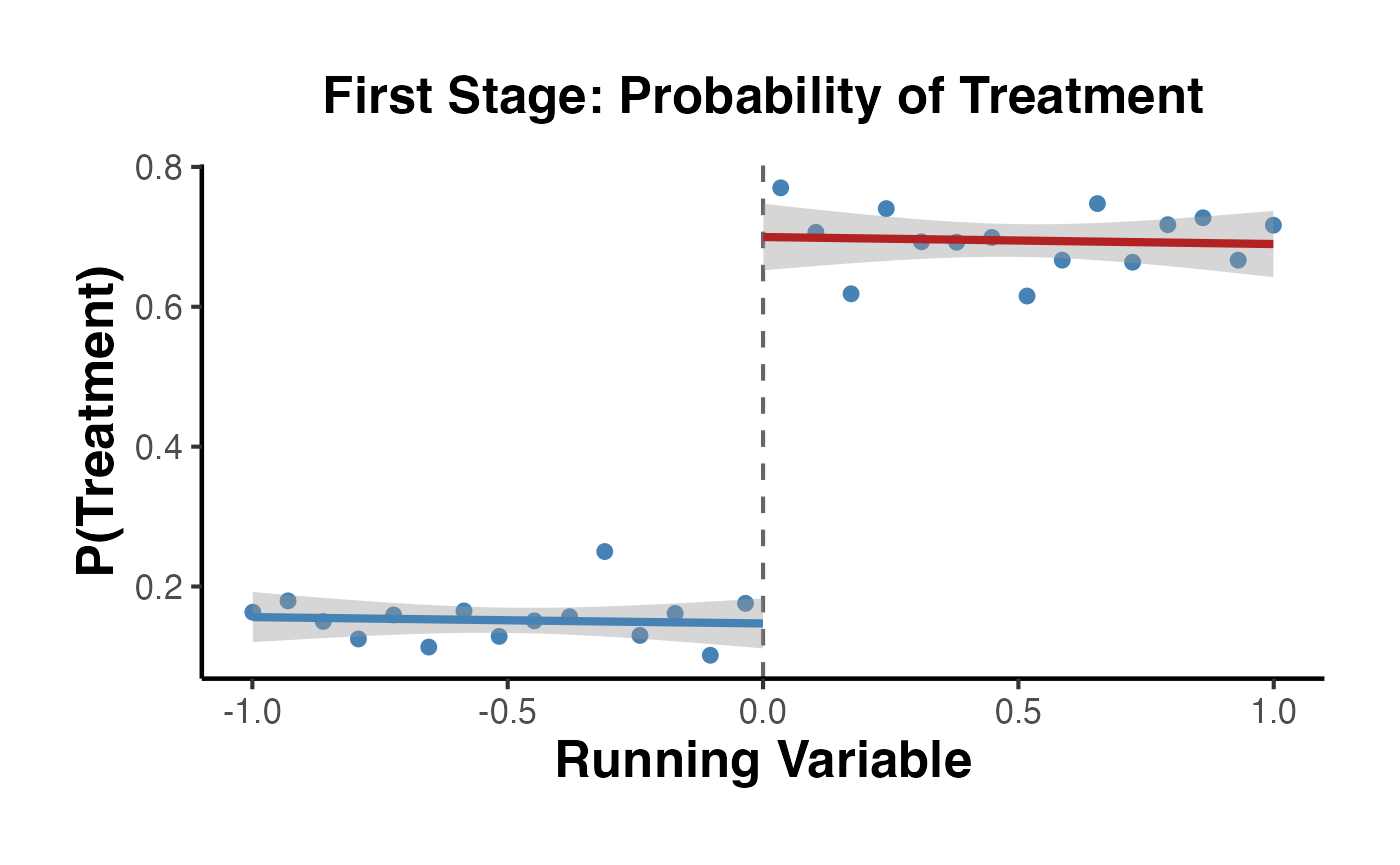

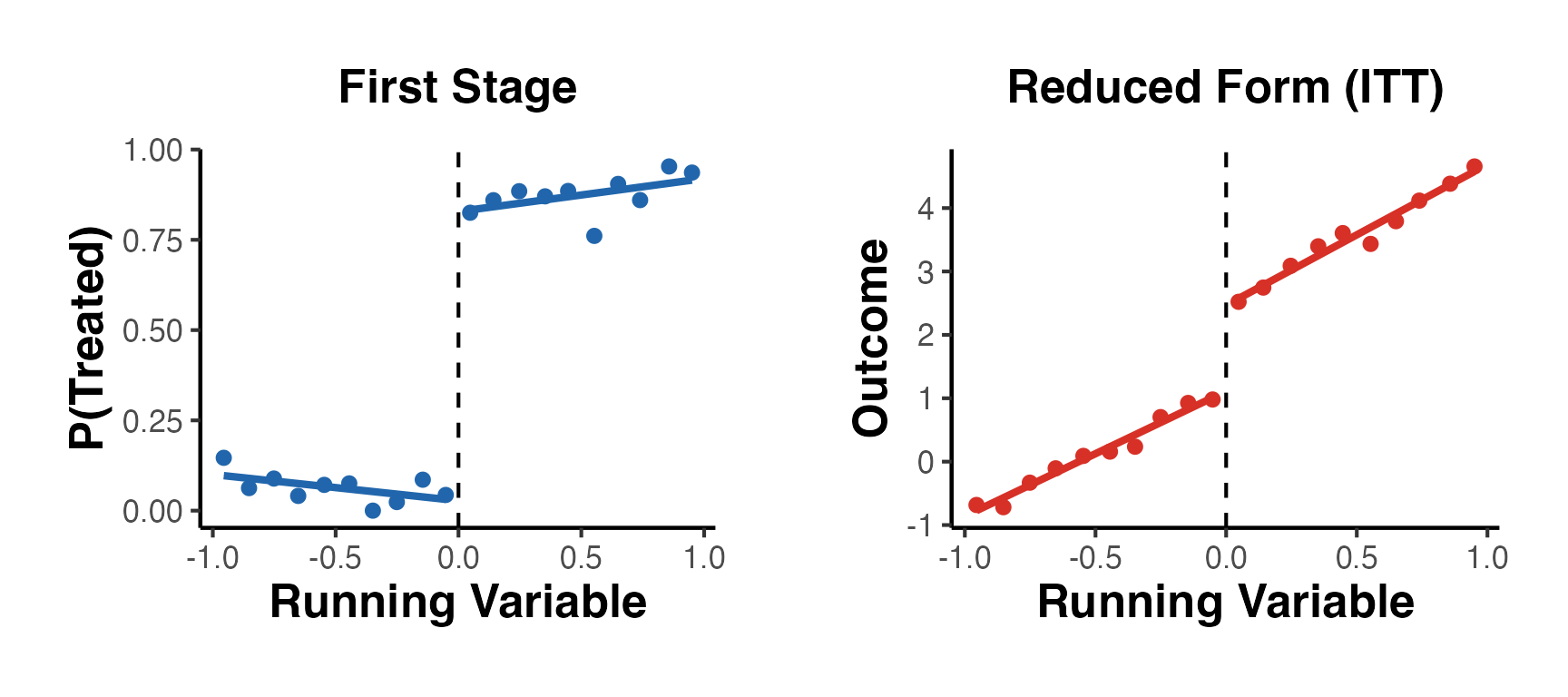

#> Treated: 977 | Control: 1023We also create a separate fuzzy RD dataset where compliance is imperfect:

set.seed(123)

n_fuzzy <- 3000

fuzzy_data <- tibble(

x = runif(n_fuzzy, -1, 1),

above = as.integer(x >= 0),

# Compliance is imperfect:

# Below cutoff: 15% treated (always-takers)

# Above cutoff: 70% treated (compliers + always-takers)

treated = rbinom(n_fuzzy, 1, prob = 0.15 + 0.55 * above),

# Pre-determined covariates

age = 40 + 3 * x + rnorm(n_fuzzy, sd = 4),

female = rbinom(n_fuzzy, 1, prob = 0.5),

# Outcome: true LATE for compliers = 4

y = 1 + 4 * treated + 1.2 * x + 0.6 * x^2 + rnorm(n_fuzzy, sd = 1)

) |>

mutate(x_above = x * above)

cat("Fuzzy RD: First stage jump ~",

round(mean(fuzzy_data$treated[fuzzy_data$above == 1]) -

mean(fuzzy_data$treated[fuzzy_data$above == 0]), 3), "\n")

#> Fuzzy RD: First stage jump ~ 0.5433. Visual RD Analysis

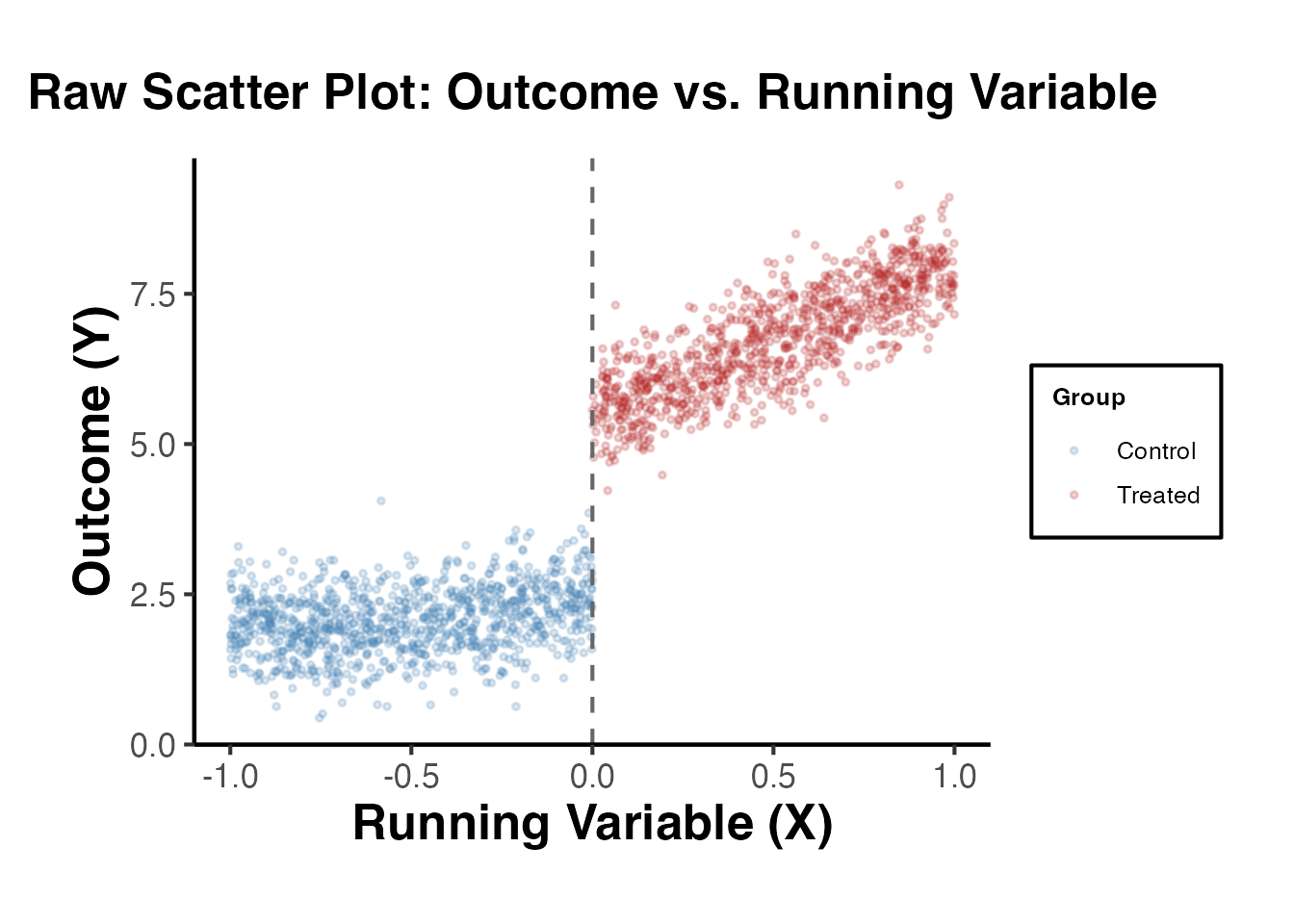

Visualization is the first and most important step in any RD analysis. Before estimating anything, the researcher should inspect the data graphically.

3.1 Raw Scatter Plot

A scatter plot of the outcome against the running variable reveals the discontinuity (or lack thereof) at the cutoff.

ggplot(rd_data, aes(x = x, y = y, color = factor(treated))) +

geom_point(alpha = 0.2, size = 0.8) +

geom_vline(xintercept = 0, linetype = "dashed", color = "grey40") +

scale_color_manual(

values = c("0" = "steelblue", "1" = "firebrick"),

labels = c("Control", "Treated")

) +

labs(

x = "Running Variable (X)",

y = "Outcome (Y)",

color = "Group",

title = "Raw Scatter Plot: Outcome vs. Running Variable"

) +

causalverse::ama_theme()

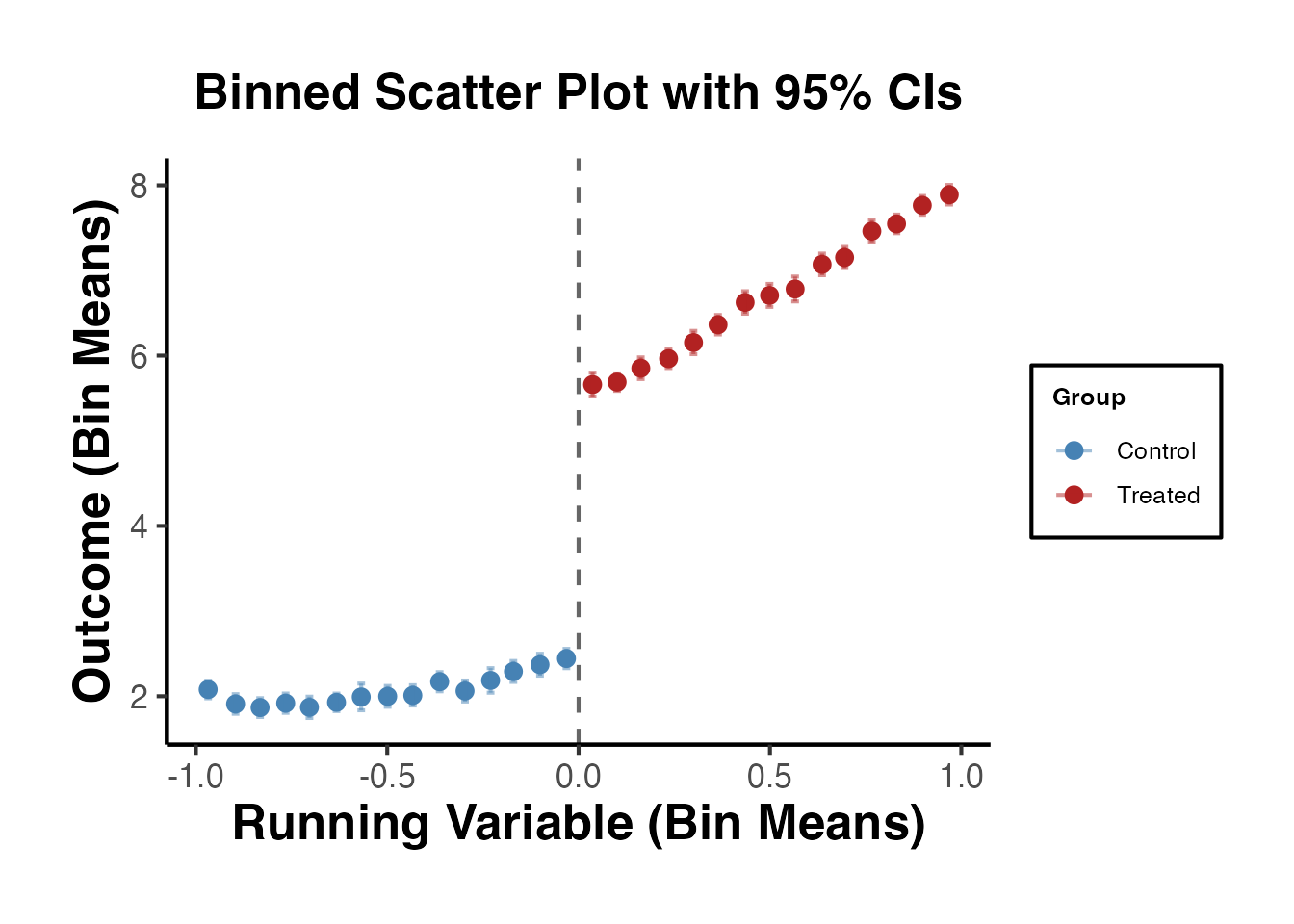

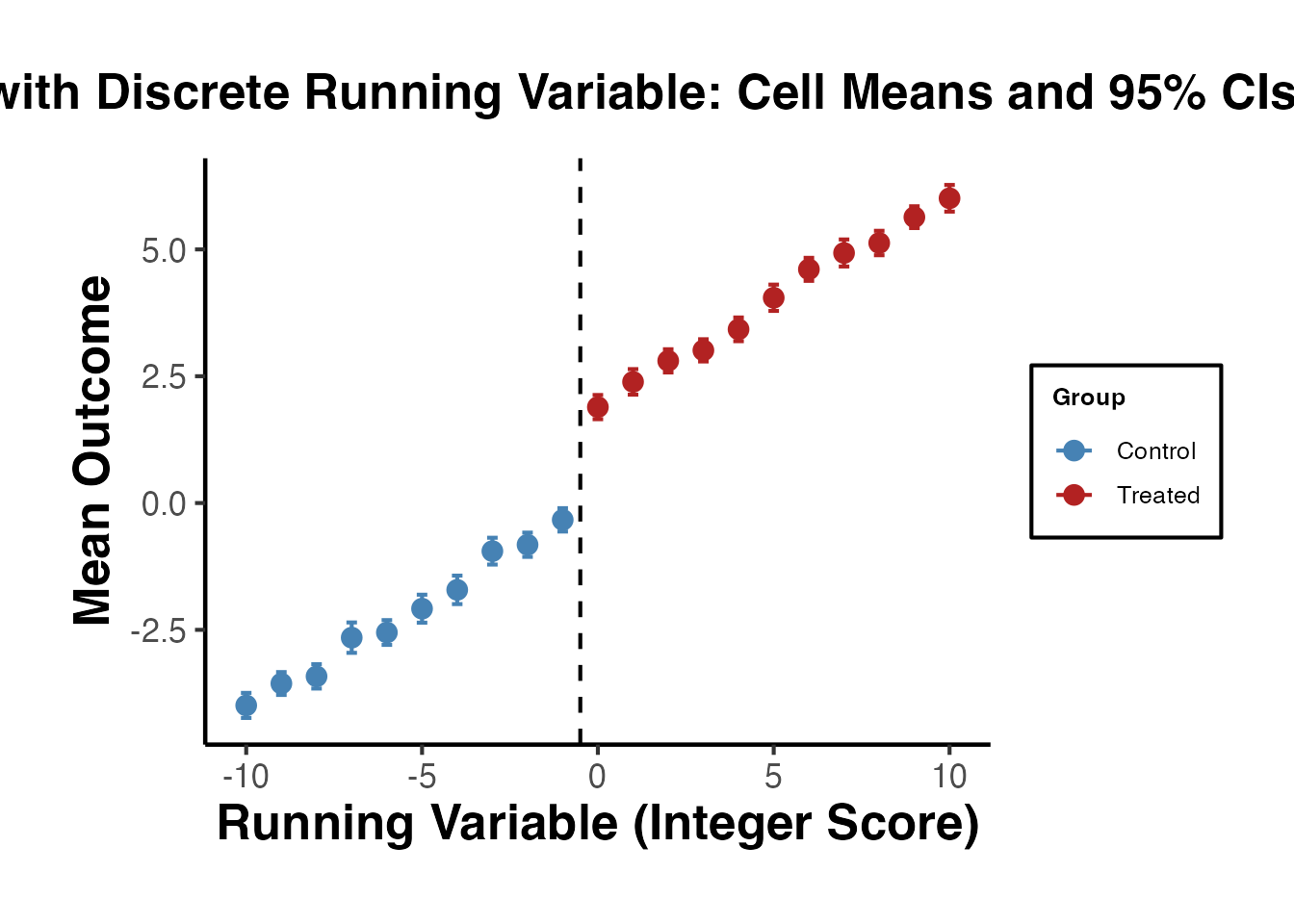

3.2 Binned Scatter Plot

With many observations, a binned scatter plot is more informative. We divide the running variable into equal-width bins and plot the mean outcome within each bin.

n_bins <- 30

binned_data <- rd_data |>

mutate(

bin = cut(x, breaks = n_bins, labels = FALSE),

side = ifelse(x >= 0, "Treated", "Control")

) |>

group_by(bin, side) |>

dplyr::summarise(

x_mean = mean(x),

y_mean = mean(y),

y_se = sd(y) / sqrt(n()),

.groups = "drop"

)

ggplot(binned_data, aes(x = x_mean, y = y_mean, color = side)) +

geom_point(size = 2.5) +

geom_errorbar(

aes(ymin = y_mean - 1.96 * y_se, ymax = y_mean + 1.96 * y_se),

width = 0.02, alpha = 0.5

) +

geom_vline(xintercept = 0, linetype = "dashed", color = "grey40") +

scale_color_manual(values = c("Control" = "steelblue", "Treated" = "firebrick")) +

labs(

x = "Running Variable (Bin Means)",

y = "Outcome (Bin Means)",

color = "Group",

title = "Binned Scatter Plot with 95% CIs"

) +

causalverse::ama_theme()

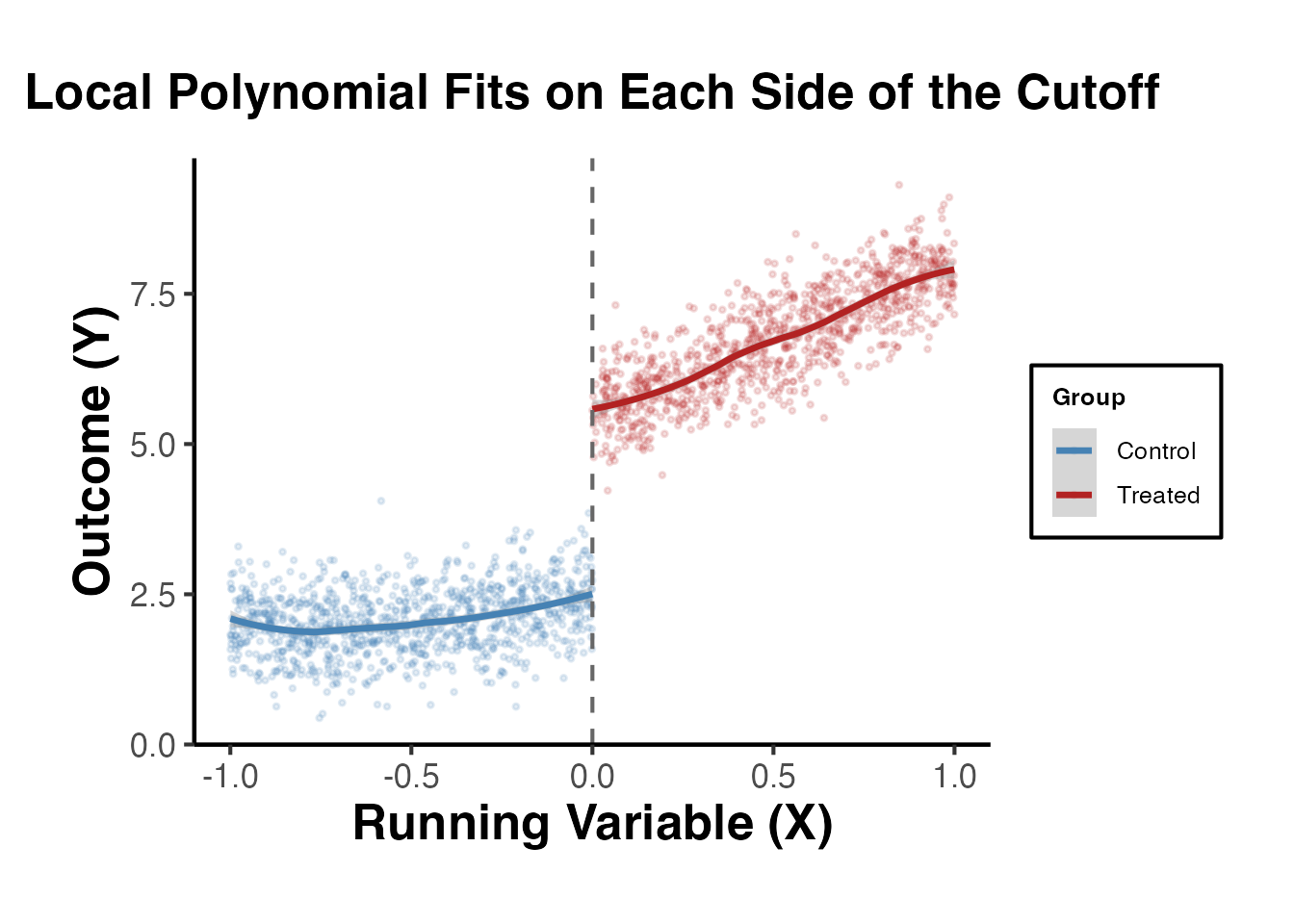

3.3 Local Polynomial Fits

We overlay local polynomial (loess) fits on each side of the cutoff. The gap between the two fitted curves at the cutoff is a visual estimate of the treatment effect.

ggplot(rd_data, aes(x = x, y = y, color = factor(treated))) +

geom_point(alpha = 0.15, size = 0.6) +

geom_smooth(

method = "loess", span = 0.5, se = TRUE, linewidth = 1.2

) +

geom_vline(xintercept = 0, linetype = "dashed", color = "grey40") +

scale_color_manual(

values = c("0" = "steelblue", "1" = "firebrick"),

labels = c("Control", "Treated")

) +

labs(

x = "Running Variable (X)",

y = "Outcome (Y)",

color = "Group",

title = "Local Polynomial Fits on Each Side of the Cutoff"

) +

causalverse::ama_theme()

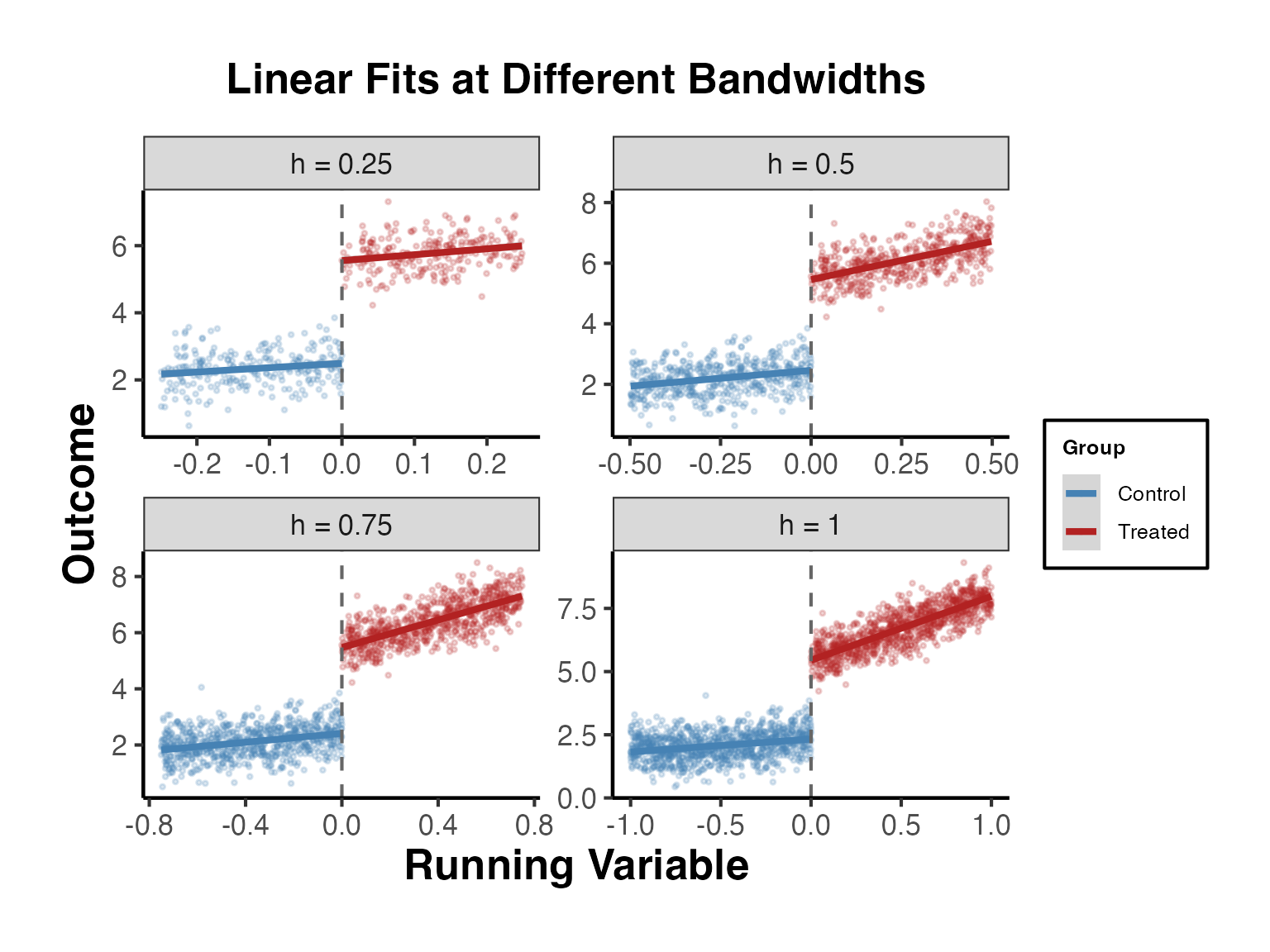

3.4 Linear Fits with Varying Bandwidths

It is useful to see how the visual discontinuity changes with the bandwidth around the cutoff.

bw_list <- c(0.25, 0.5, 0.75, 1.0)

bw_plot_data <- lapply(bw_list, function(h) {

rd_data |>

filter(abs(x) <= h) |>

mutate(bandwidth = paste0("h = ", h))

}) |>

bind_rows()

ggplot(bw_plot_data, aes(x = x, y = y, color = factor(treated))) +

geom_point(alpha = 0.2, size = 0.6) +

geom_smooth(method = "lm", se = TRUE) +

geom_vline(xintercept = 0, linetype = "dashed", color = "grey40") +

facet_wrap(~bandwidth, scales = "free") +

scale_color_manual(

values = c("0" = "steelblue", "1" = "firebrick"),

labels = c("Control", "Treated")

) +

labs(

x = "Running Variable",

y = "Outcome",

color = "Group",

title = "Linear Fits at Different Bandwidths"

) +

causalverse::ama_theme()

4. The rdrobust Package (Calonico, Cattaneo, Titiunik 2014)

The rdrobust package is the gold standard for RD estimation in applied research. It implements:

- MSE-optimal bandwidth selection (Imbens and Kalyanaraman, 2012; Calonico, Cattaneo, and Titiunik, 2014)

- Bias-corrected local polynomial estimators

- Robust confidence intervals that account for bandwidth selection

- RD-specific plotting routines

The key insight is that conventional confidence intervals based on the MSE-optimal bandwidth are invalid because they do not account for the smoothing bias inherent in nonparametric estimation. The rdrobust package corrects for this bias and constructs valid confidence intervals.

4.1 Basic Estimation with rdrobust()

The main function rdrobust() estimates the RD treatment effect using local polynomial regression with optimal bandwidth selection.

library(rdrobust)

# Basic RD estimation with default settings

# - Local linear regression (p = 1)

# - Triangular kernel

# - MSE-optimal bandwidth

rd_est <- rdrobust(y = rd_data$y, x = rd_data$x, c = 0)

summary(rd_est)

#> Sharp RD estimates using local polynomial regression.

#>

#> Number of Obs. 2000

#> BW type mserd

#> Kernel Triangular

#> VCE method NN

#>

#> Number of Obs. 1023 977

#> Eff. Number of Obs. 281 264

#> Order est. (p) 1 1

#> Order bias (q) 2 2

#> BW est. (h) 0.281 0.281

#> BW bias (b) 0.416 0.416

#> rho (h/b) 0.675 0.675

#> Unique Obs. 1023 977

#>

#> =====================================================================

#> Point Robust Inference

#> Estimate z P>|z| [ 95% C.I. ]

#> ---------------------------------------------------------------------

#> RD Effect 3.062 27.830 0.000 [2.855 , 3.288]

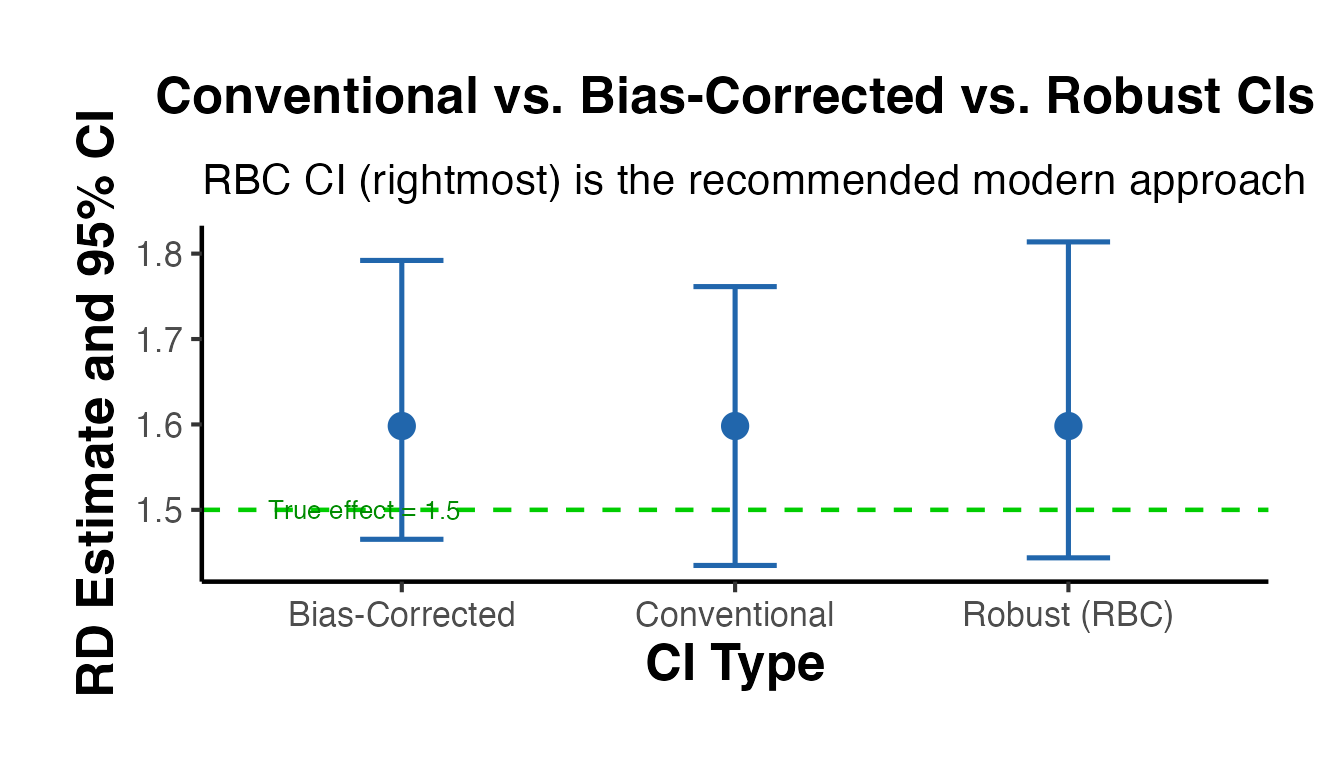

#> =====================================================================The output reports:

- Conventional: the point estimate and confidence interval using the MSE-optimal bandwidth

- Bias-corrected: the point estimate after explicit bias correction

- Robust: the recommended confidence interval that accounts for both bias correction and bandwidth selection

Always report the robust confidence interval. The conventional CI is known to have poor coverage properties.

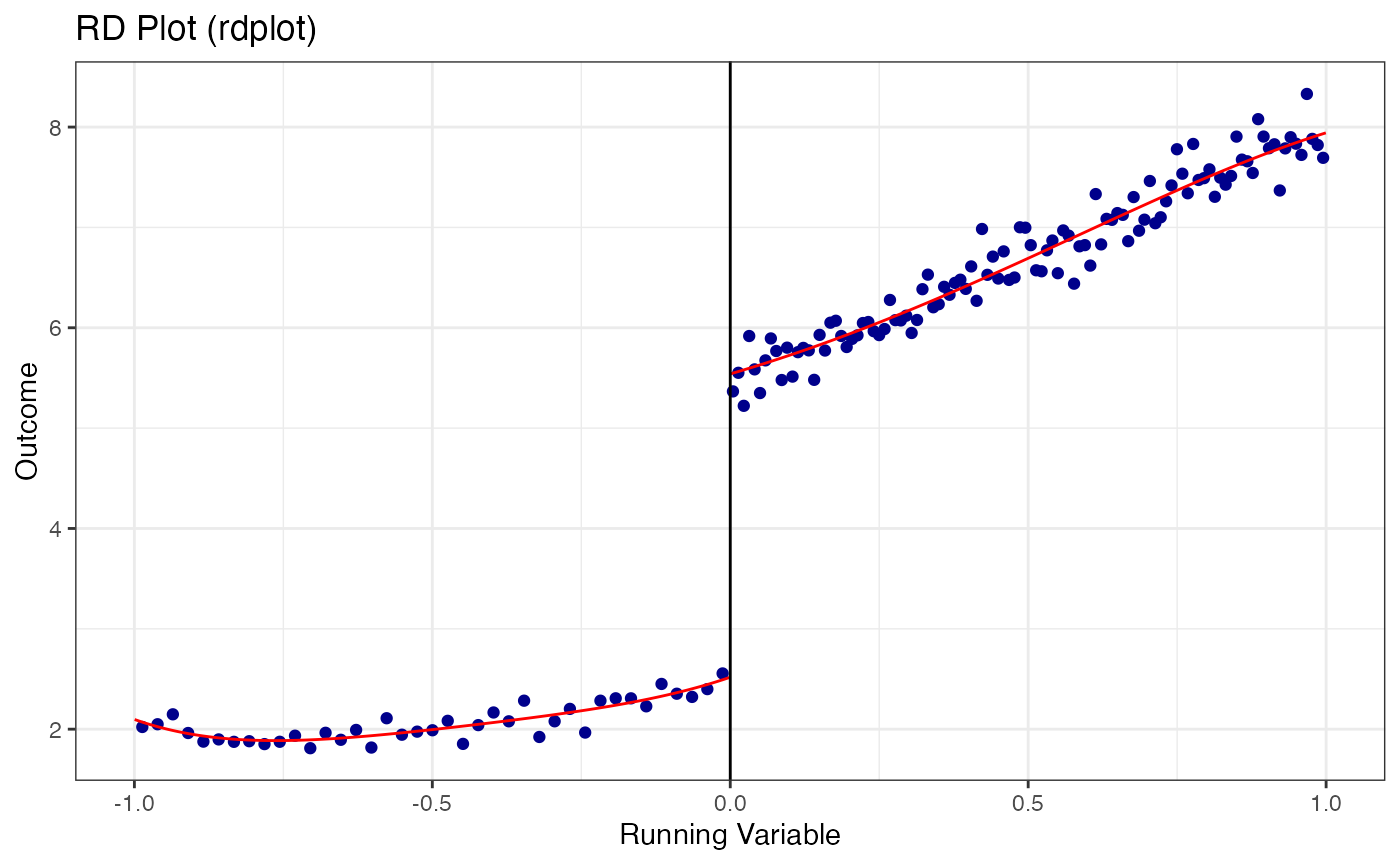

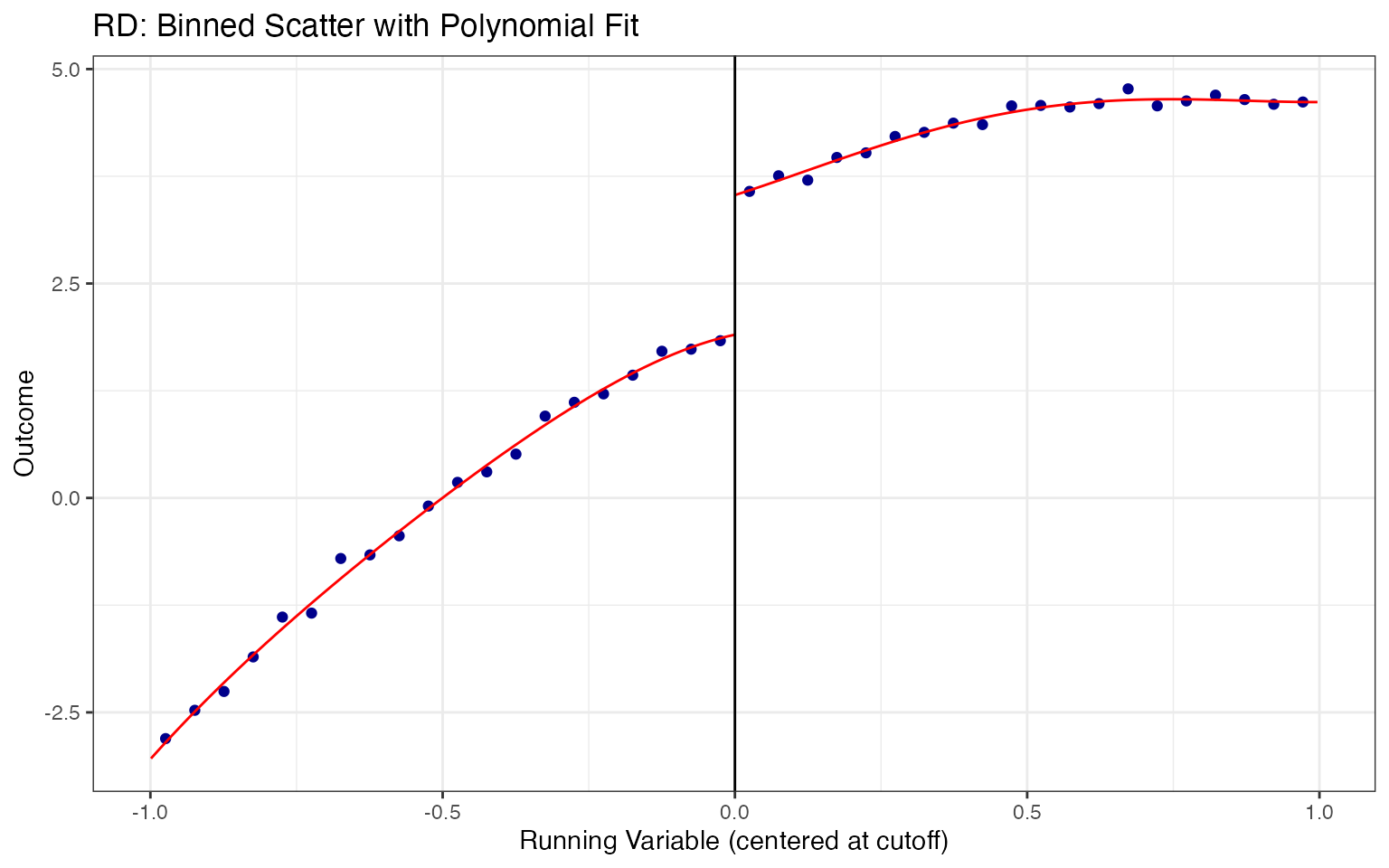

4.2 RD Plots with rdplot()

The rdplot() function creates publication-quality RD plots with evenly-spaced or quantile-spaced bins and fitted polynomials on each side of the cutoff.

# Default RD plot: evenly-spaced bins with 4th-order polynomial

rdplot(

y = rd_data$y,

x = rd_data$x,

c = 0,

title = "RD Plot (rdplot)",

x.label = "Running Variable",

y.label = "Outcome"

)

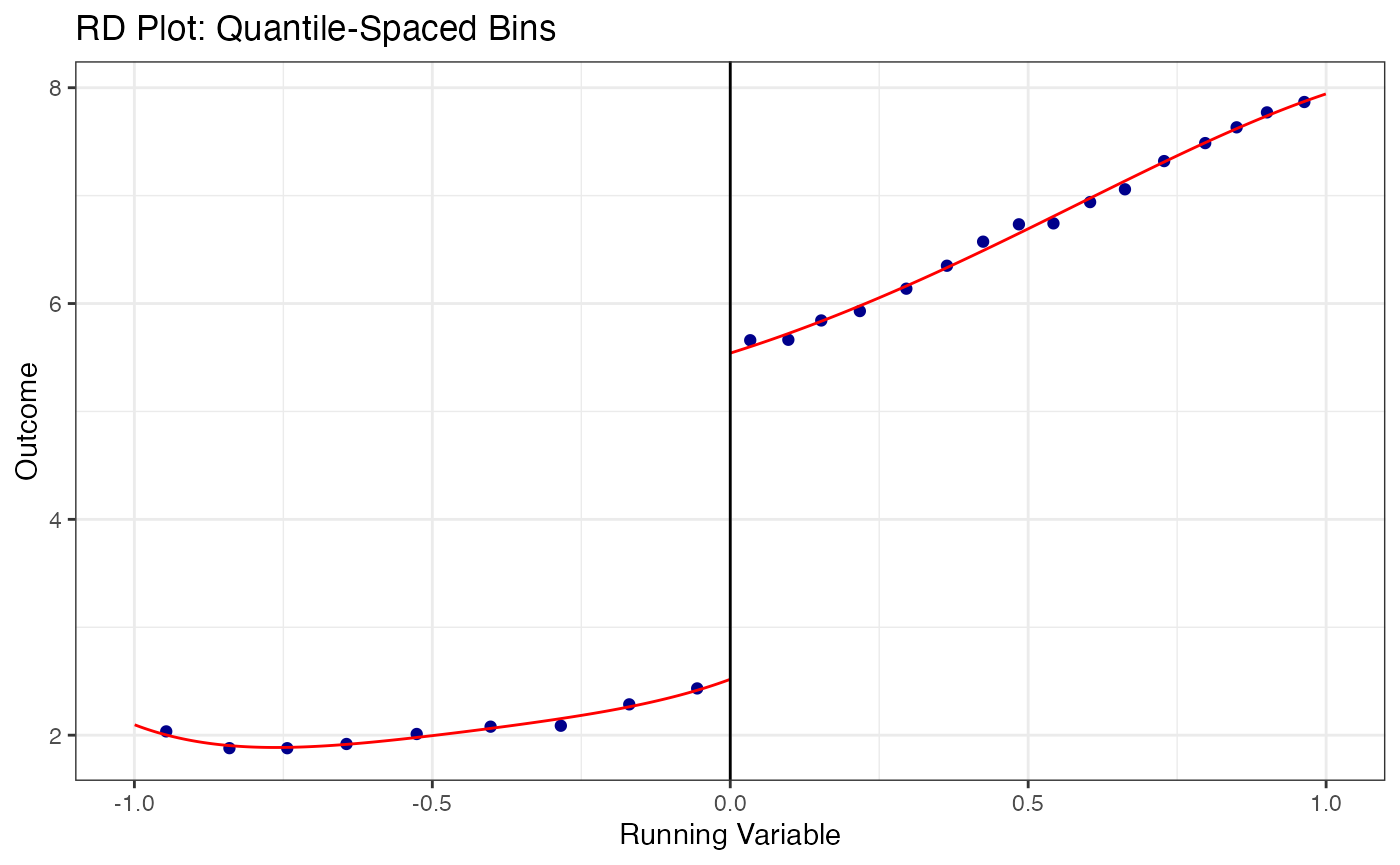

# Quantile-spaced bins (equal number of observations per bin)

rdplot(

y = rd_data$y,

x = rd_data$x,

c = 0,

binselect = "qs",

title = "RD Plot: Quantile-Spaced Bins",

x.label = "Running Variable",

y.label = "Outcome"

)

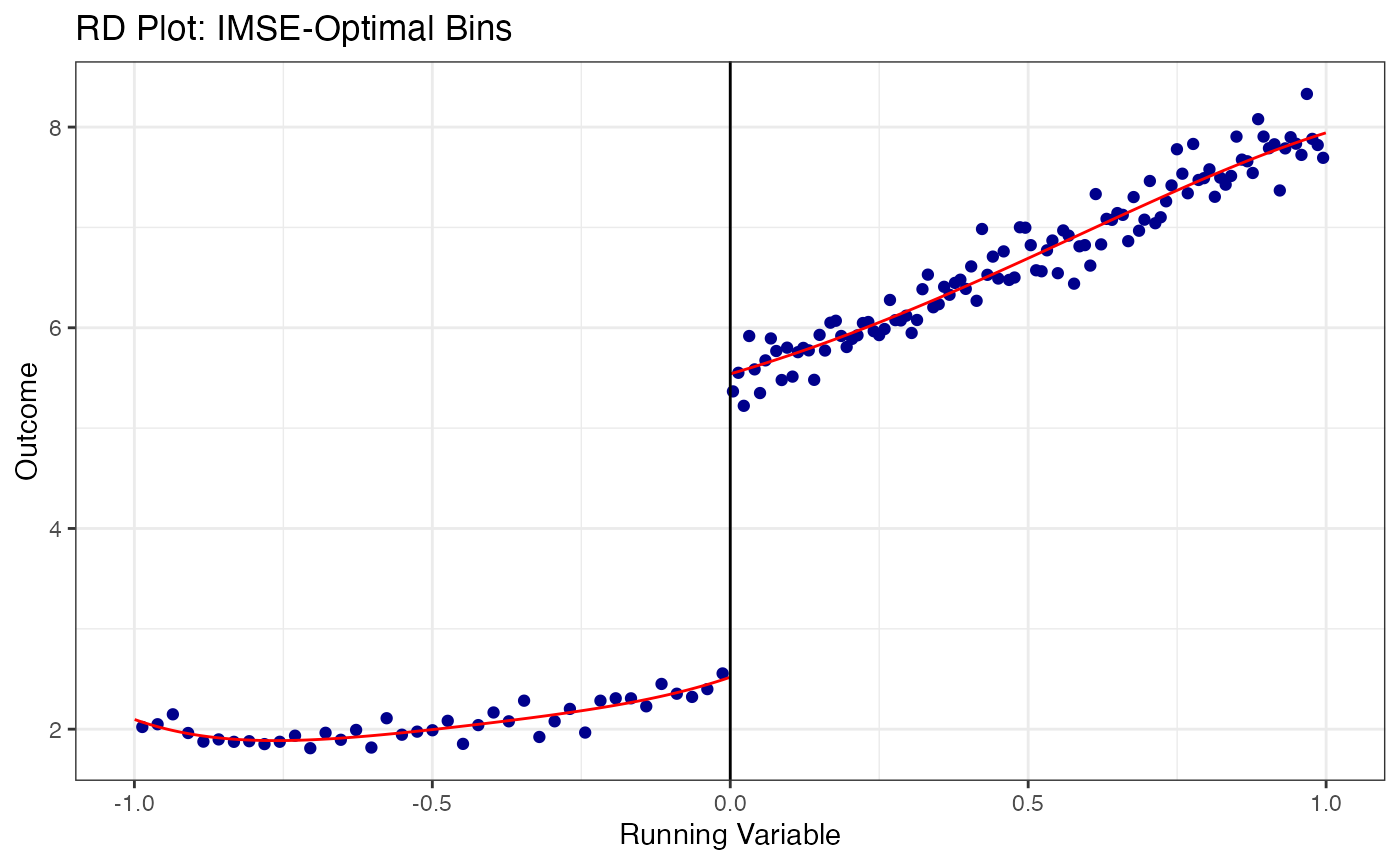

# Evenly-spaced bins with mimicking variance (IMSE-optimal)

rdplot(

y = rd_data$y,

x = rd_data$x,

c = 0,

binselect = "esmv",

title = "RD Plot: IMSE-Optimal Bins",

x.label = "Running Variable",

y.label = "Outcome"

)

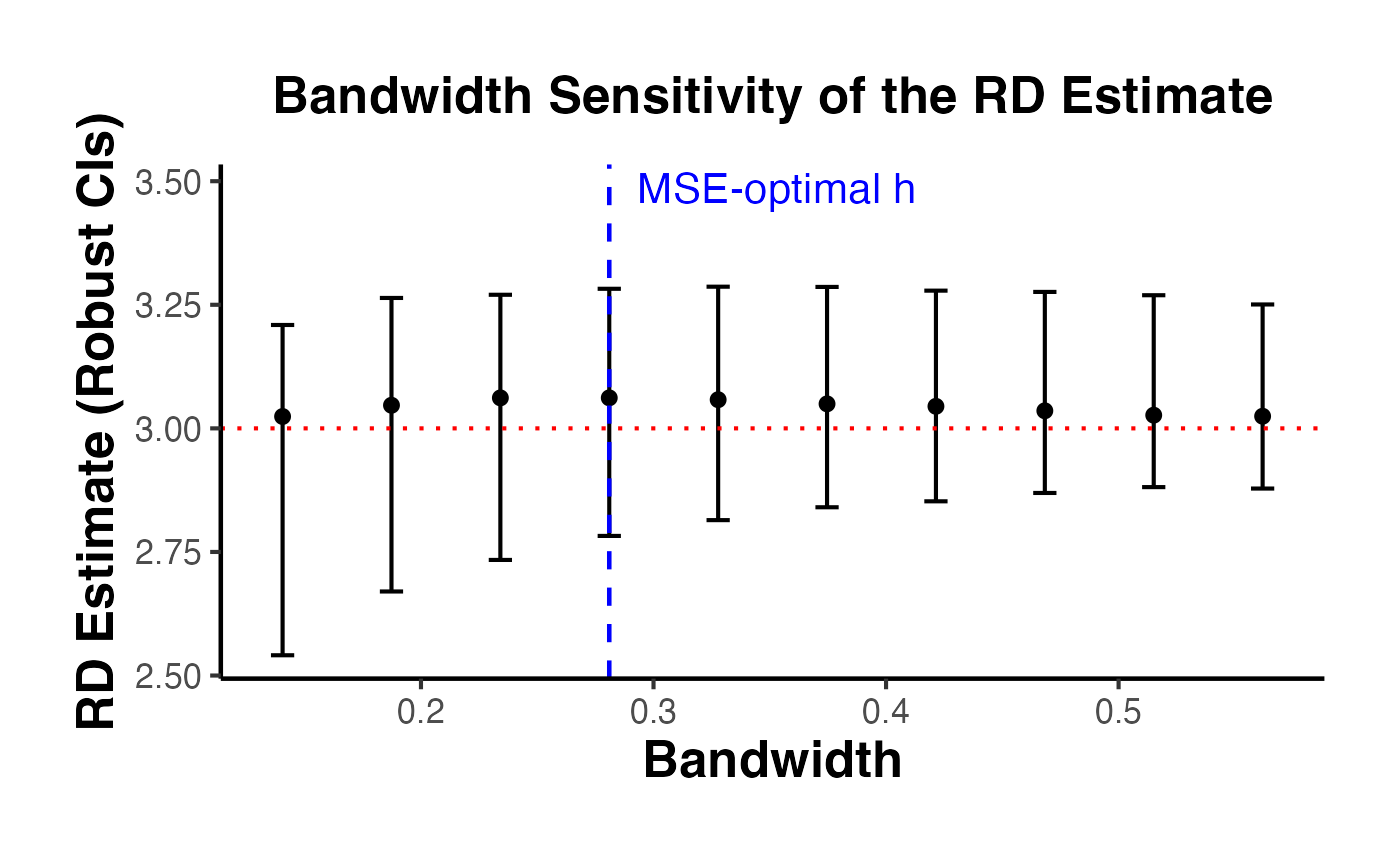

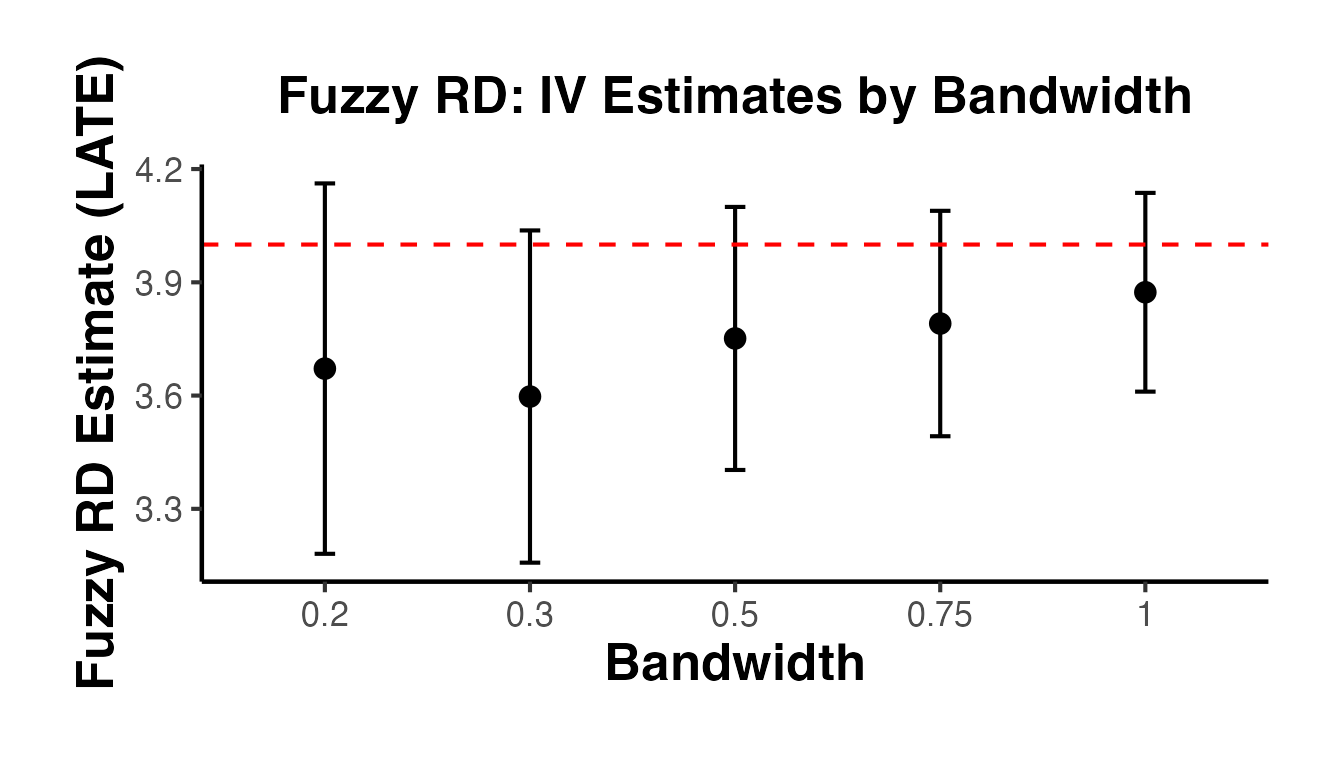

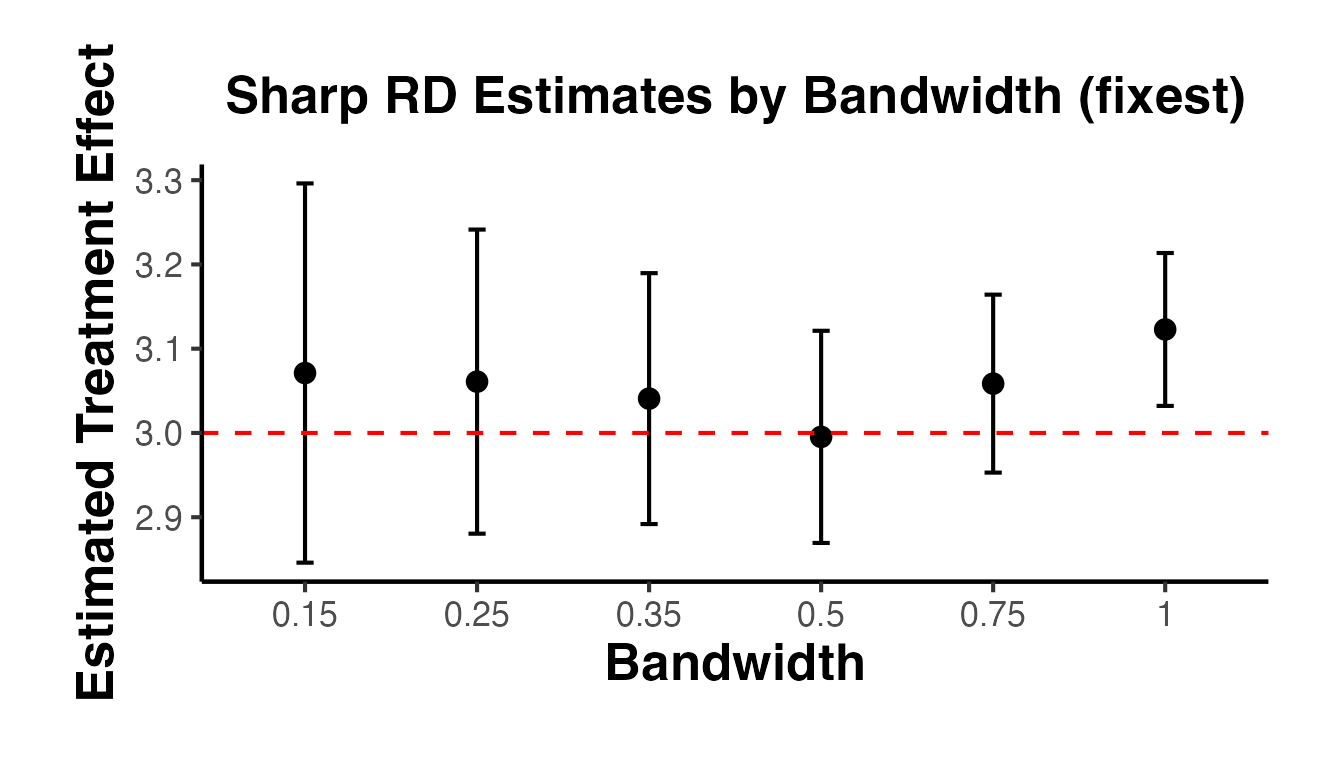

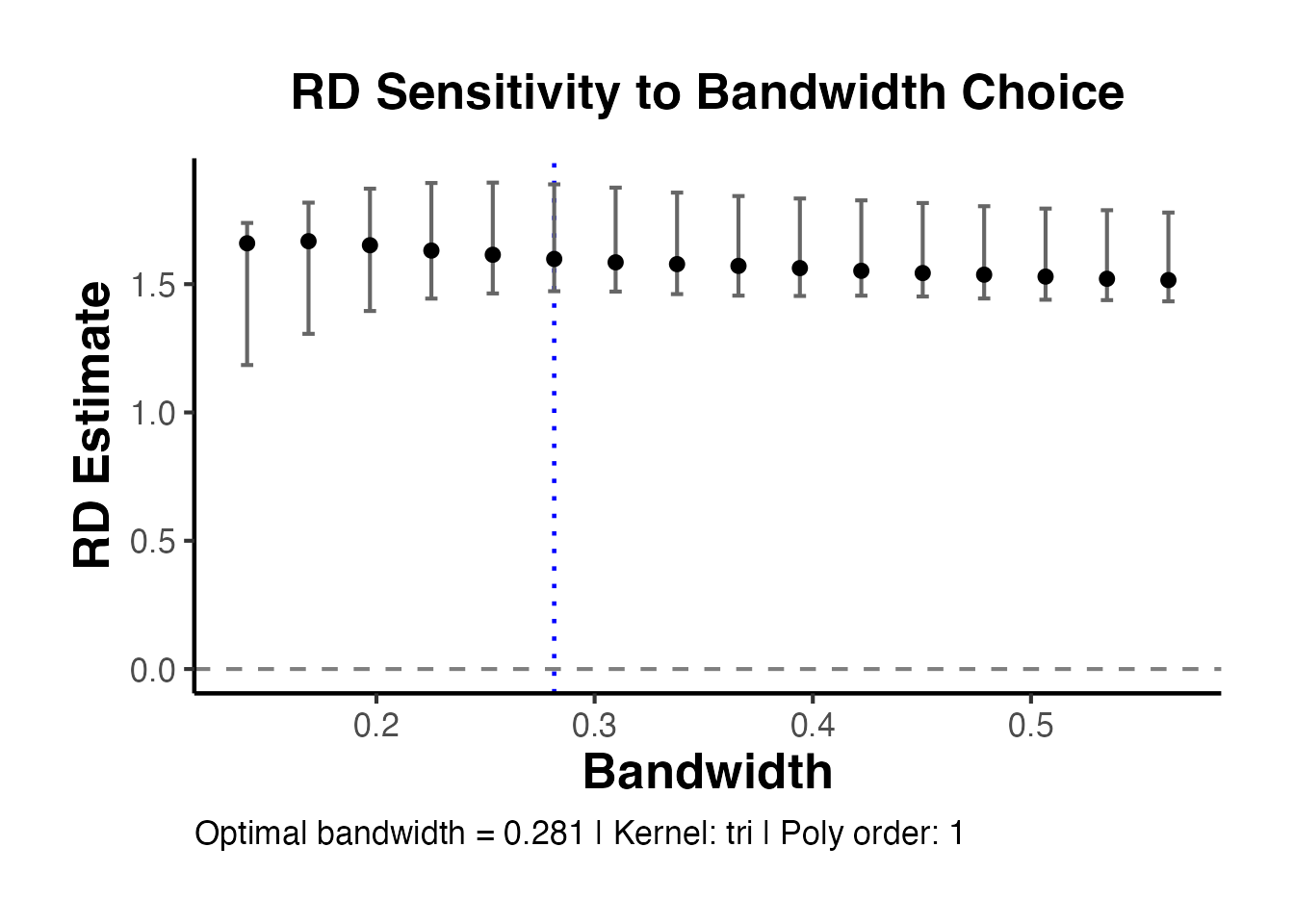

4.3 Bandwidth Sensitivity Analysis

A crucial robustness check is to show how the RD estimate changes with the bandwidth. The estimate should be relatively stable across a range of bandwidths around the optimal one.

# Get the MSE-optimal bandwidth first

rd_opt <- rdrobust(y = rd_data$y, x = rd_data$x, c = 0)

h_opt <- rd_opt$bws[1, 1] # MSE-optimal bandwidth

cat("MSE-optimal bandwidth:", round(h_opt, 3), "\n")

#> MSE-optimal bandwidth: 0.281

# Estimate at a grid of bandwidths

bw_grid <- seq(0.5 * h_opt, 2 * h_opt, length.out = 10)

bw_sensitivity <- lapply(bw_grid, function(h) {

est <- rdrobust(y = rd_data$y, x = rd_data$x, c = 0, h = h)

tibble(

bandwidth = h,

estimate = est$coef["Conventional", ],

ci_lower = est$ci["Robust", 1],

ci_upper = est$ci["Robust", 2],

n_eff_l = est$N_h[1],

n_eff_r = est$N_h[2]

)

}) |>

bind_rows()

# Plot bandwidth sensitivity

ggplot(bw_sensitivity, aes(x = bandwidth, y = estimate)) +

geom_point(size = 2) +

geom_errorbar(aes(ymin = ci_lower, ymax = ci_upper), width = 0.01) +

geom_vline(xintercept = h_opt, linetype = "dashed", color = "blue",

linewidth = 0.8) +

geom_hline(yintercept = 3, linetype = "dotted", color = "red") +

annotate("text", x = h_opt, y = max(bw_sensitivity$ci_upper) + 0.2,

label = "MSE-optimal h", color = "blue", hjust = -0.1) +

labs(

x = "Bandwidth",

y = "RD Estimate (Robust CIs)",

title = "Bandwidth Sensitivity of the RD Estimate"

) +

causalverse::ama_theme()

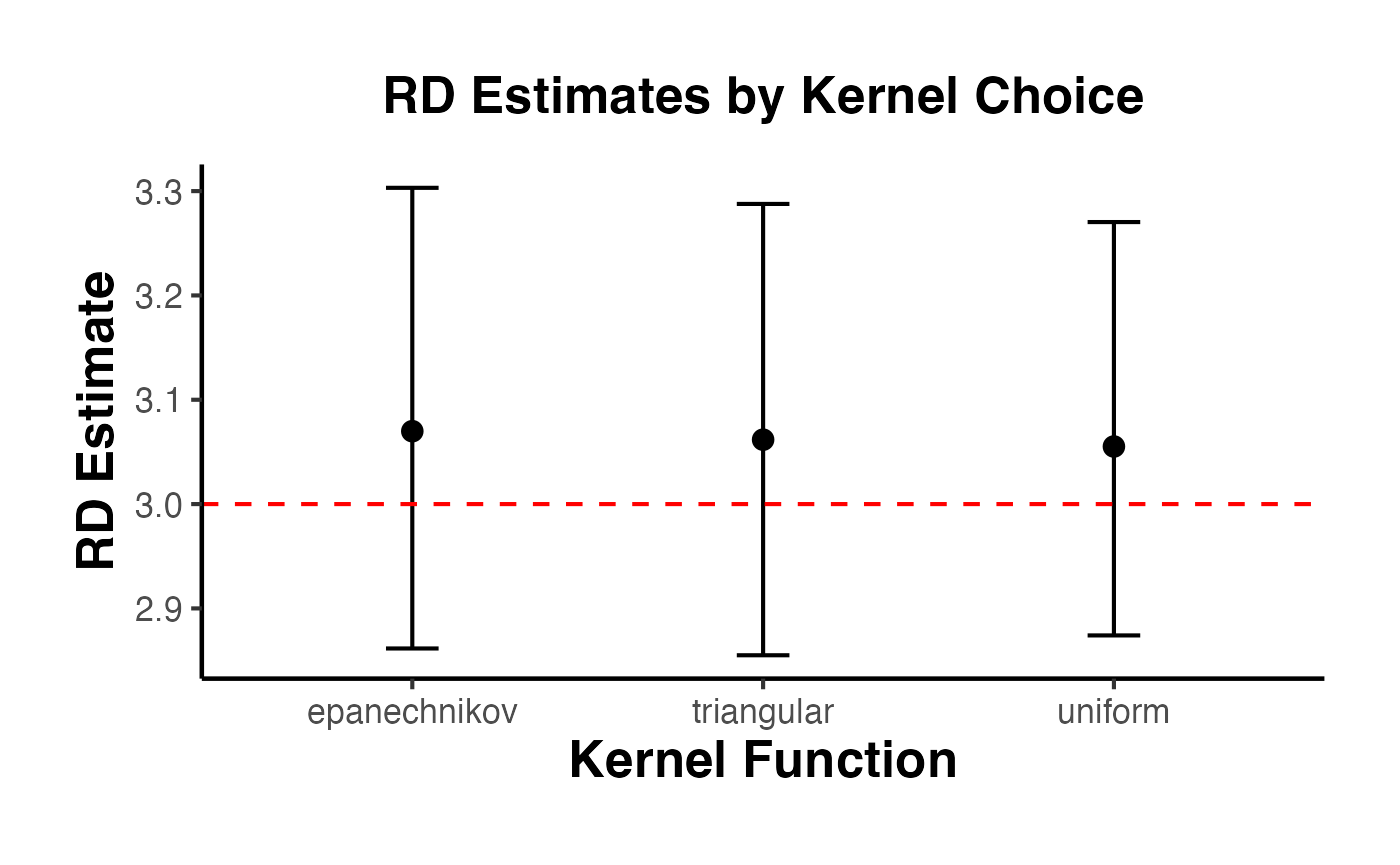

4.4 Different Kernel Choices

The kernel function weights observations by their distance from the cutoff. rdrobust supports three kernels:

- Triangular (default): . Places most weight on observations closest to the cutoff.

- Epanechnikov: . Similar to triangular but smoother.

- Uniform: . Equal weight to all observations within the bandwidth.

kernels <- c("triangular", "epanechnikov", "uniform")

kernel_results <- lapply(kernels, function(k) {

est <- rdrobust(y = rd_data$y, x = rd_data$x, c = 0, kernel = k)

tibble(

kernel = k,

estimate = est$coef["Conventional", ],

robust_ci_lower = est$ci["Robust", 1],

robust_ci_upper = est$ci["Robust", 2],

bandwidth = est$bws[1, 1]

)

}) |>

bind_rows()

kernel_results

#> # A tibble: 3 × 5

#> kernel estimate robust_ci_lower robust_ci_upper bandwidth

#> <chr> <dbl> <dbl> <dbl> <dbl>

#> 1 triangular 3.06 2.86 3.29 0.281

#> 2 epanechnikov 3.07 2.86 3.30 0.256

#> 3 uniform 3.06 2.87 3.27 0.271

# Plot kernel comparison

ggplot(kernel_results, aes(x = kernel, y = estimate)) +

geom_point(size = 3) +

geom_errorbar(

aes(ymin = robust_ci_lower, ymax = robust_ci_upper),

width = 0.15

) +

geom_hline(yintercept = 3, linetype = "dashed", color = "red") +

labs(

x = "Kernel Function",

y = "RD Estimate",

title = "RD Estimates by Kernel Choice"

) +

causalverse::ama_theme()

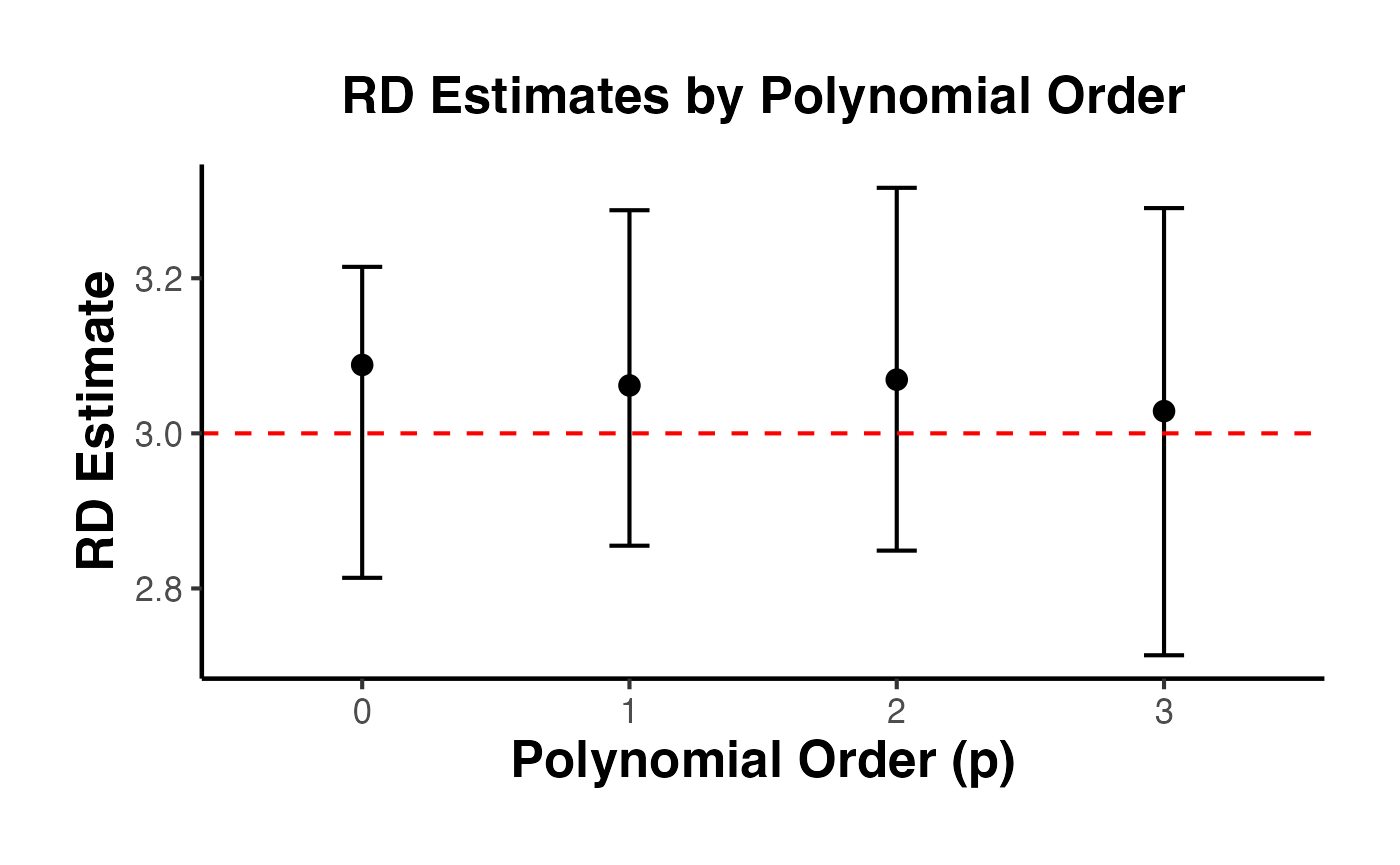

4.5 Different Polynomial Orders

The polynomial order controls the flexibility of the local polynomial fit. The most common choices are:

- : Local constant (Nadaraya-Watson)

-

: Local linear (default in

rdrobust, recommended in most cases) - : Local quadratic

Higher-order polynomials reduce bias but increase variance. Calonico, Cattaneo, and Titiunik (2014) recommend as the default.

poly_orders <- 0:3

poly_results <- lapply(poly_orders, function(p) {

est <- rdrobust(y = rd_data$y, x = rd_data$x, c = 0, p = p)

tibble(

poly_order = p,

estimate = est$coef["Conventional", ],

robust_ci_lower = est$ci["Robust", 1],

robust_ci_upper = est$ci["Robust", 2],

bandwidth = est$bws[1, 1]

)

}) |>

bind_rows()

poly_results

#> # A tibble: 4 × 5

#> poly_order estimate robust_ci_lower robust_ci_upper bandwidth

#> <int> <dbl> <dbl> <dbl> <dbl>

#> 1 0 3.09 2.81 3.21 0.0668

#> 2 1 3.06 2.86 3.29 0.281

#> 3 2 3.07 2.85 3.32 0.451

#> 4 3 3.03 2.71 3.29 0.427

ggplot(poly_results, aes(x = factor(poly_order), y = estimate)) +

geom_point(size = 3) +

geom_errorbar(

aes(ymin = robust_ci_lower, ymax = robust_ci_upper),

width = 0.15

) +

geom_hline(yintercept = 3, linetype = "dashed", color = "red") +

labs(

x = "Polynomial Order (p)",

y = "RD Estimate",

title = "RD Estimates by Polynomial Order"

) +

causalverse::ama_theme()

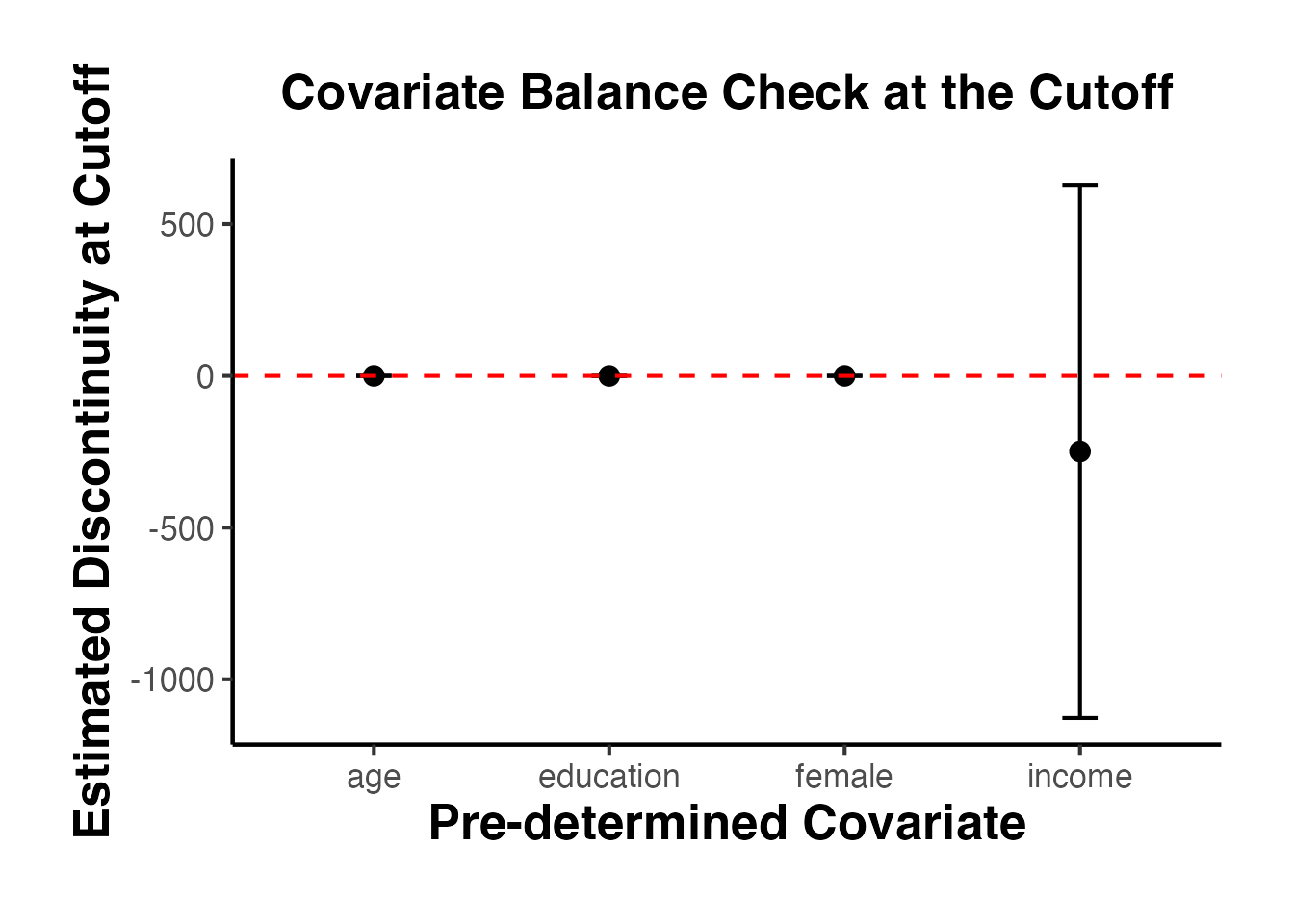

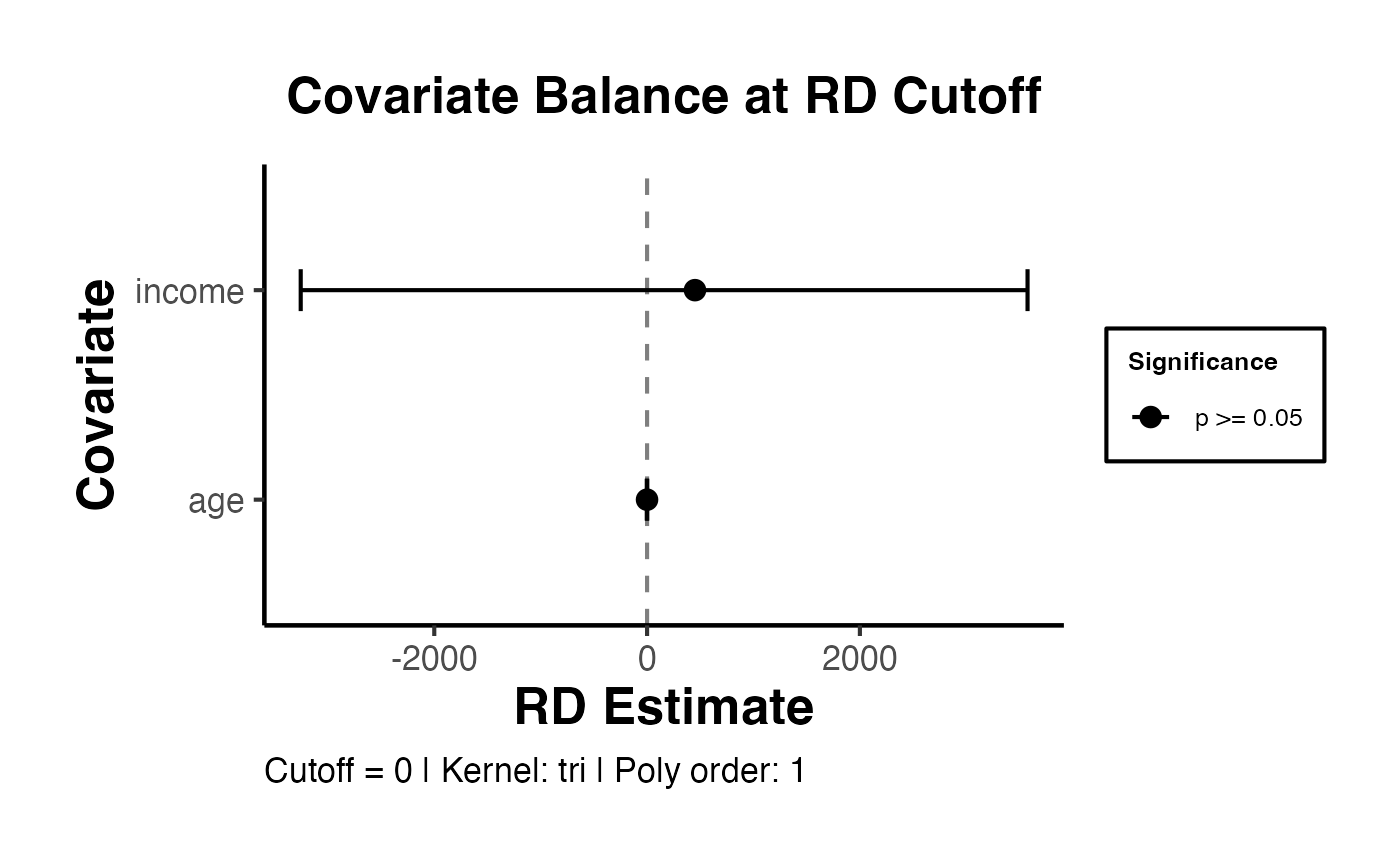

4.6 Including Covariates in rdrobust

Pre-determined covariates can be included in the RD estimation to improve precision. Covariates that predict the outcome reduce residual variance and narrow confidence intervals. Importantly, the point estimate should not change substantially when covariates are included (if it does, this suggests a problem with the design).

# Prepare covariate matrix

covs <- as.matrix(rd_data[, c("age", "female", "education", "income")])

# Estimation without covariates

rd_no_covs <- rdrobust(y = rd_data$y, x = rd_data$x, c = 0)

# Estimation with covariates

rd_with_covs <- rdrobust(y = rd_data$y, x = rd_data$x, c = 0, covs = covs)

cat("=== Without Covariates ===\n")

#> === Without Covariates ===

cat("Estimate:", round(rd_no_covs$coef["Conventional", ], 3), "\n")

#> Estimate: 3.062

cat("Robust CI: [", round(rd_no_covs$ci["Robust", 1], 3), ",",

round(rd_no_covs$ci["Robust", 2], 3), "]\n\n")

#> Robust CI: [ 2.855 , 3.288 ]

cat("=== With Covariates ===\n")

#> === With Covariates ===

cat("Estimate:", round(rd_with_covs$coef["Conventional", ], 3), "\n")

#> Estimate: 3.078

cat("Robust CI: [", round(rd_with_covs$ci["Robust", 1], 3), ",",

round(rd_with_covs$ci["Robust", 2], 3), "]\n")

#> Robust CI: [ 2.875 , 3.3 ]4.7 Clustered Standard Errors

When the running variable takes on discrete values (e.g., test scores with integer values) or when there are groups of related observations (e.g., students within schools), clustered standard errors may be needed. The cluster argument in rdrobust implements this.

# Add a cluster variable (e.g., school ID)

rd_data_clustered <- rd_data |>

mutate(school_id = sample(1:50, n(), replace = TRUE))

# Estimation with clustered standard errors

rd_clustered <- rdrobust(

y = rd_data_clustered$y,

x = rd_data_clustered$x,

c = 0,

cluster = rd_data_clustered$school_id

)

summary(rd_clustered)

#> Sharp RD estimates using local polynomial regression.

#>

#> Number of Obs. 2000

#> BW type mserd

#> Kernel Triangular

#> VCE method NN

#>

#> Number of Obs. 1023 977

#> Eff. Number of Obs. 272 258

#> Order est. (p) 1 1

#> Order bias (q) 2 2

#> BW est. (h) 0.273 0.273

#> BW bias (b) 0.396 0.396

#> rho (h/b) 0.689 0.689

#> Unique Obs. 1023 977

#>

#> =====================================================================

#> Point Robust Inference

#> Estimate z P>|z| [ 95% C.I. ]

#> ---------------------------------------------------------------------

#> RD Effect 3.062 26.239 0.000 [2.841 , 3.300]

#> =====================================================================4.8 Additional rdrobust Options

# Using CER-optimal bandwidth (coverage error rate optimal)

# Narrower than MSE-optimal; better coverage properties

rd_cer <- rdrobust(y = rd_data$y, x = rd_data$x, c = 0, bwselect = "cerrd")

summary(rd_cer)

#> Sharp RD estimates using local polynomial regression.

#>

#> Number of Obs. 2000

#> BW type cerrd

#> Kernel Triangular

#> VCE method NN

#>

#> Number of Obs. 1023 977

#> Eff. Number of Obs. 180 191

#> Order est. (p) 1 1

#> Order bias (q) 2 2

#> BW est. (h) 0.192 0.192

#> BW bias (b) 0.416 0.416

#> rho (h/b) 0.462 0.462

#> Unique Obs. 1023 977

#>

#> =====================================================================

#> Point Robust Inference

#> Estimate z P>|z| [ 95% C.I. ]

#> ---------------------------------------------------------------------

#> RD Effect 3.047 25.976 0.000 [2.822 , 3.282]

#> =====================================================================

# Specifying different bandwidths on each side

rd_asym <- rdrobust(

y = rd_data$y,

x = rd_data$x,

c = 0,

h = c(0.4, 0.6) # left bandwidth = 0.4, right bandwidth = 0.6

)

summary(rd_asym)

#> Sharp RD estimates using local polynomial regression.

#>

#> Number of Obs. 2000

#> BW type Manual

#> Kernel Triangular

#> VCE method NN

#>

#> Number of Obs. 1023 977

#> Eff. Number of Obs. 395 576

#> Order est. (p) 1 1

#> Order bias (q) 2 2

#> BW est. (h) 0.400 0.600

#> BW bias (b) 0.400 0.600

#> rho (h/b) 1.000 1.000

#> Unique Obs. 1023 977

#>

#> =====================================================================

#> Point Robust Inference

#> Estimate z P>|z| [ 95% C.I. ]

#> ---------------------------------------------------------------------

#> RD Effect 3.003 29.719 0.000 [2.850 , 3.253]

#> =====================================================================

# Using a different bias bandwidth (b) relative to main bandwidth (h)

rd_rho <- rdrobust(y = rd_data$y, x = rd_data$x, c = 0, rho = 0.8)

summary(rd_rho)

#> Sharp RD estimates using local polynomial regression.

#>

#> Number of Obs. 2000

#> BW type mserd

#> Kernel Triangular

#> VCE method NN

#>

#> Number of Obs. 1023 977

#> Eff. Number of Obs. 281 264

#> Order est. (p) 1 1

#> Order bias (q) 2 2

#> BW est. (h) 0.281 0.281

#> BW bias (b) 0.351 0.351

#> rho (h/b) 0.800 0.800

#> Unique Obs. 1023 977

#>

#> =====================================================================

#> Point Robust Inference

#> Estimate z P>|z| [ 95% C.I. ]

#> ---------------------------------------------------------------------

#> RD Effect 3.062 26.364 0.000 [2.840 , 3.296]

#> =====================================================================

# All available bandwidth selectors

bw_selectors <- c("mserd", "msetwo", "msesum", "msecomb1", "msecomb2",

"cerrd", "certwo", "cersum", "cercomb1", "cercomb2")

bw_comparison <- lapply(bw_selectors, function(bw) {

est <- tryCatch(

rdrobust(y = rd_data$y, x = rd_data$x, c = 0, bwselect = bw),

error = function(e) NULL

)

if (!is.null(est)) {

tibble(

selector = bw,

h_left = est$bws[1, 1],

h_right = est$bws[1, 2],

estimate = est$coef["Conventional", ],

robust_ci = paste0("[", round(est$ci["Robust", 1], 3), ", ",

round(est$ci["Robust", 2], 3), "]")

)

}

}) |>

bind_rows()

bw_comparison

#> # A tibble: 10 × 5

#> selector h_left h_right estimate robust_ci

#> <chr> <dbl> <dbl> <dbl> <chr>

#> 1 mserd 0.281 0.281 3.06 [2.855, 3.288]

#> 2 msetwo 0.331 0.254 3.06 [2.852, 3.273]

#> 3 msesum 0.331 0.331 3.06 [2.878, 3.258]

#> 4 msecomb1 0.281 0.281 3.06 [2.855, 3.288]

#> 5 msecomb2 0.331 0.281 3.06 [2.859, 3.275]

#> 6 cerrd 0.192 0.192 3.05 [2.822, 3.282]

#> 7 certwo 0.226 0.174 3.07 [2.848, 3.293]

#> 8 cersum 0.227 0.227 3.06 [2.855, 3.274]

#> 9 cercomb1 0.192 0.192 3.05 [2.822, 3.282]

#> 10 cercomb2 0.226 0.192 3.06 [2.846, 3.287]5. The rddensity Package (Cattaneo, Jansson, Ma 2020)

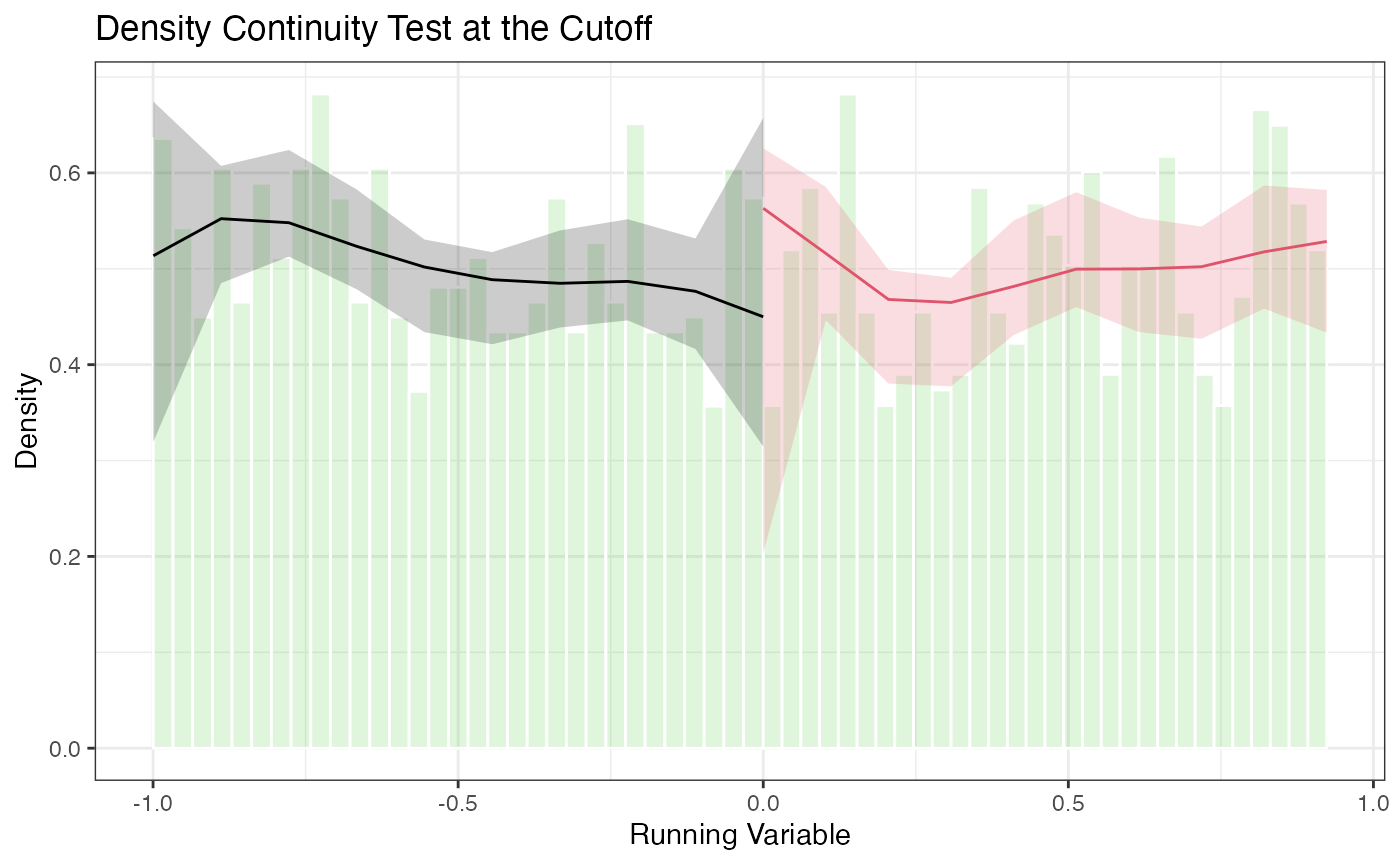

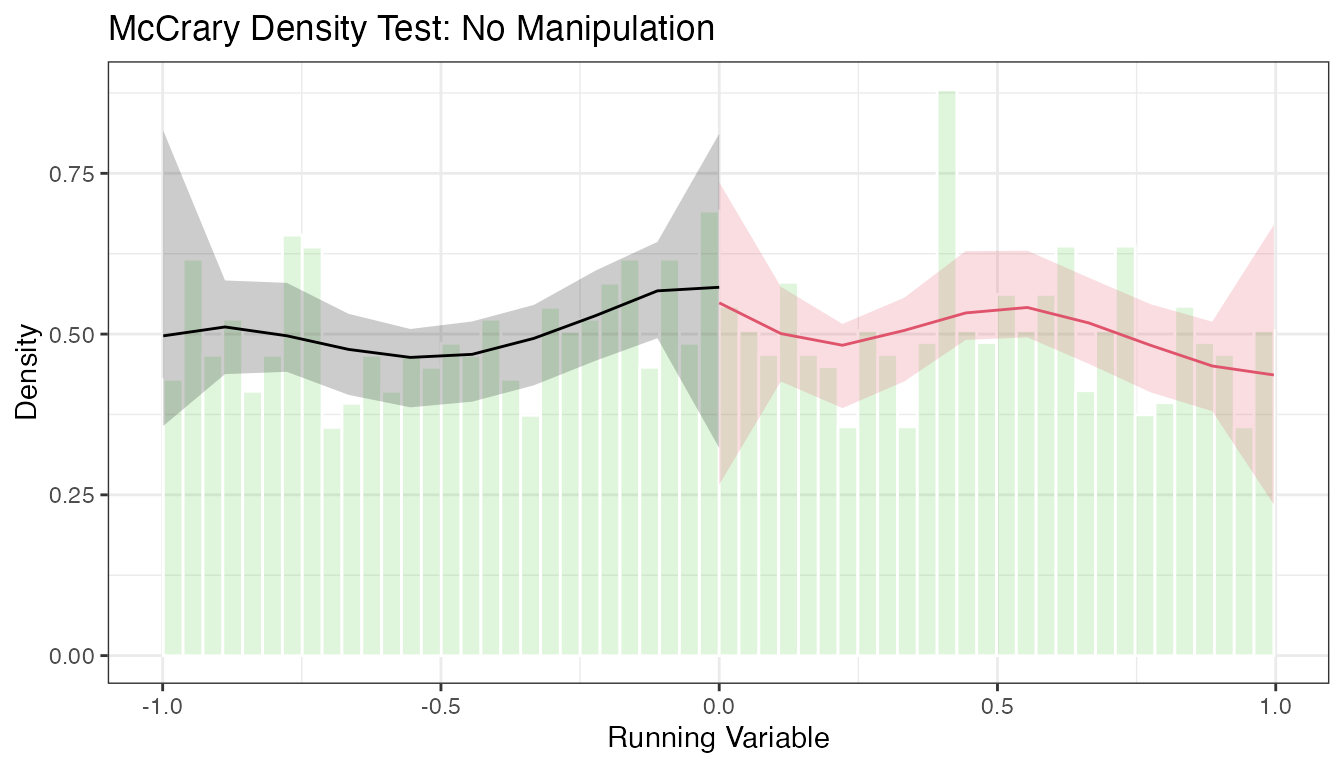

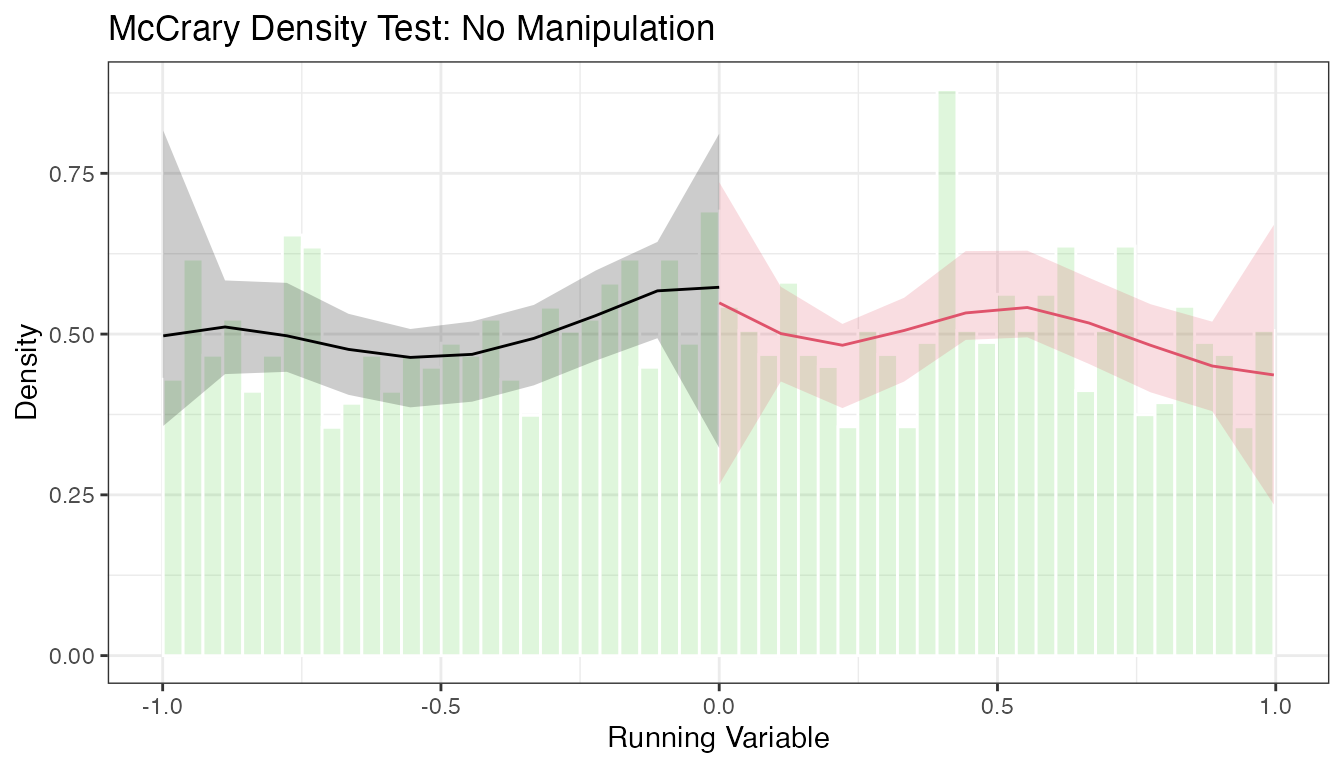

The rddensity package implements formal tests for manipulation of the running variable. The test examines whether the density of the running variable is continuous at the cutoff. A discontinuity in the density is evidence that units are sorting (bunching) around the cutoff, which would violate the no-manipulation assumption.

The test is based on local polynomial density estimation. It is a refinement of the earlier McCrary (2008) test, using bias-corrected inference and robust confidence intervals analogous to rdrobust.

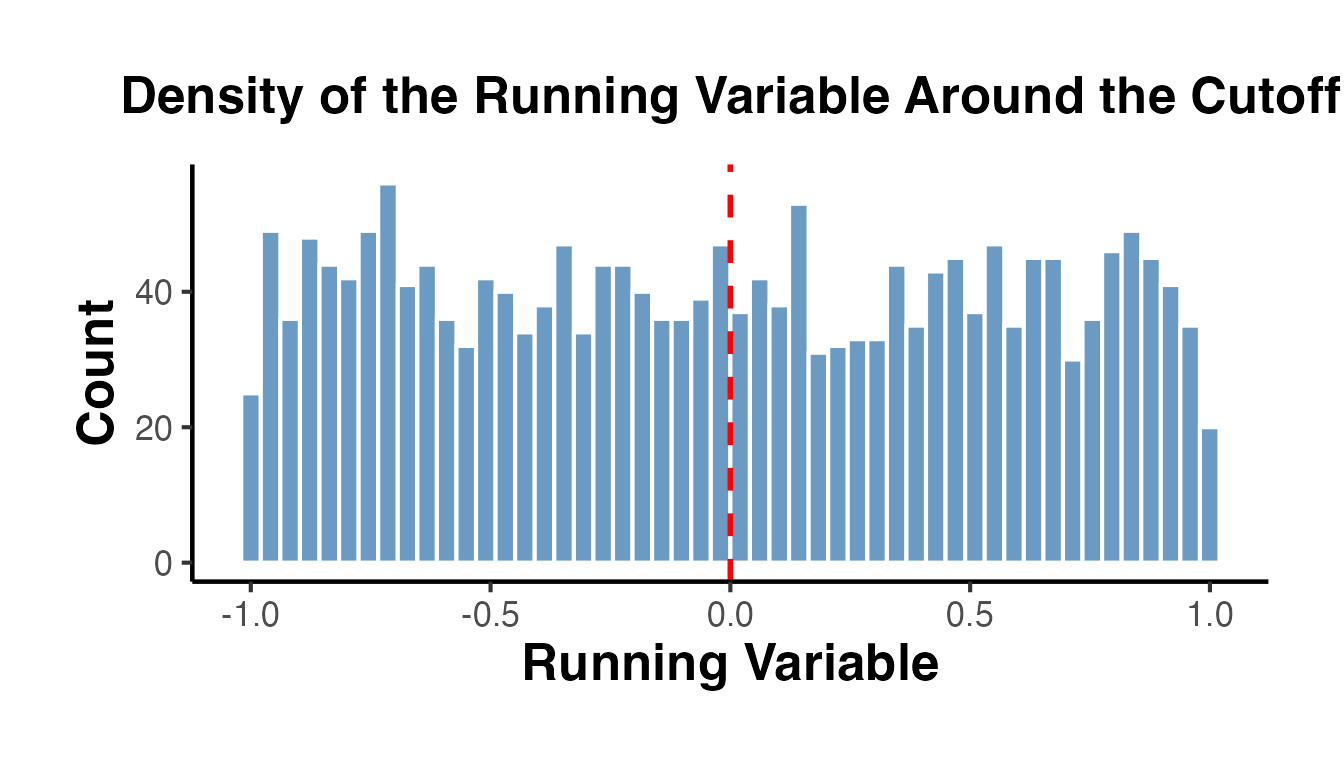

5.1 Histogram Check

Before running a formal test, a histogram provides a visual check:

ggplot(rd_data, aes(x = x)) +

geom_histogram(

bins = 50, fill = "steelblue", color = "white", alpha = 0.8

) +

geom_vline(xintercept = 0, linetype = "dashed", color = "red", linewidth = 1) +

labs(

x = "Running Variable",

y = "Count",

title = "Density of the Running Variable Around the Cutoff"

) +

causalverse::ama_theme()

Since our running variable is drawn from a uniform distribution, we observe no bunching. In real applications, the formal test is essential.

5.2 Formal Density Test with rddensity()

library(rddensity)

# Basic manipulation test

density_test <- rddensity(X = rd_data$x, c = 0)

summary(density_test)

#>

#> Manipulation testing using local polynomial density estimation.

#>

#> Number of obs = 2000

#> Model = unrestricted

#> Kernel = triangular

#> BW method = estimated

#> VCE method = jackknife

#>

#> c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. Number of obs 411 285

#> Order est. (p) 2 2

#> Order bias (q) 3 3

#> BW est. (h) 0.416 0.308

#>

#> Method T P > |T|

#> Robust -0.508 0.6114

#>

#>

#> P-values of binomial tests (H0: p=0.5).

#>

#> Window Length / 2 <c >=c P>|T|

#> 0.030 34 20 0.0759

#> 0.060 70 51 0.1014

#> 0.090 94 86 0.6020

#> 0.119 119 112 0.6931

#> 0.149 141 151 0.5985

#> 0.179 169 184 0.4562

#> 0.209 201 205 0.8817

#> 0.239 237 230 0.7813

#> 0.269 267 254 0.5991

#> 0.298 299 276 0.3589The output reports:

- The estimated density on the left and right of the cutoff

- The T-statistic and p-value for the null hypothesis of continuity

- Both conventional and robust p-values

A large p-value (e.g., > 0.05) is consistent with no manipulation. A small p-value indicates evidence of manipulation.

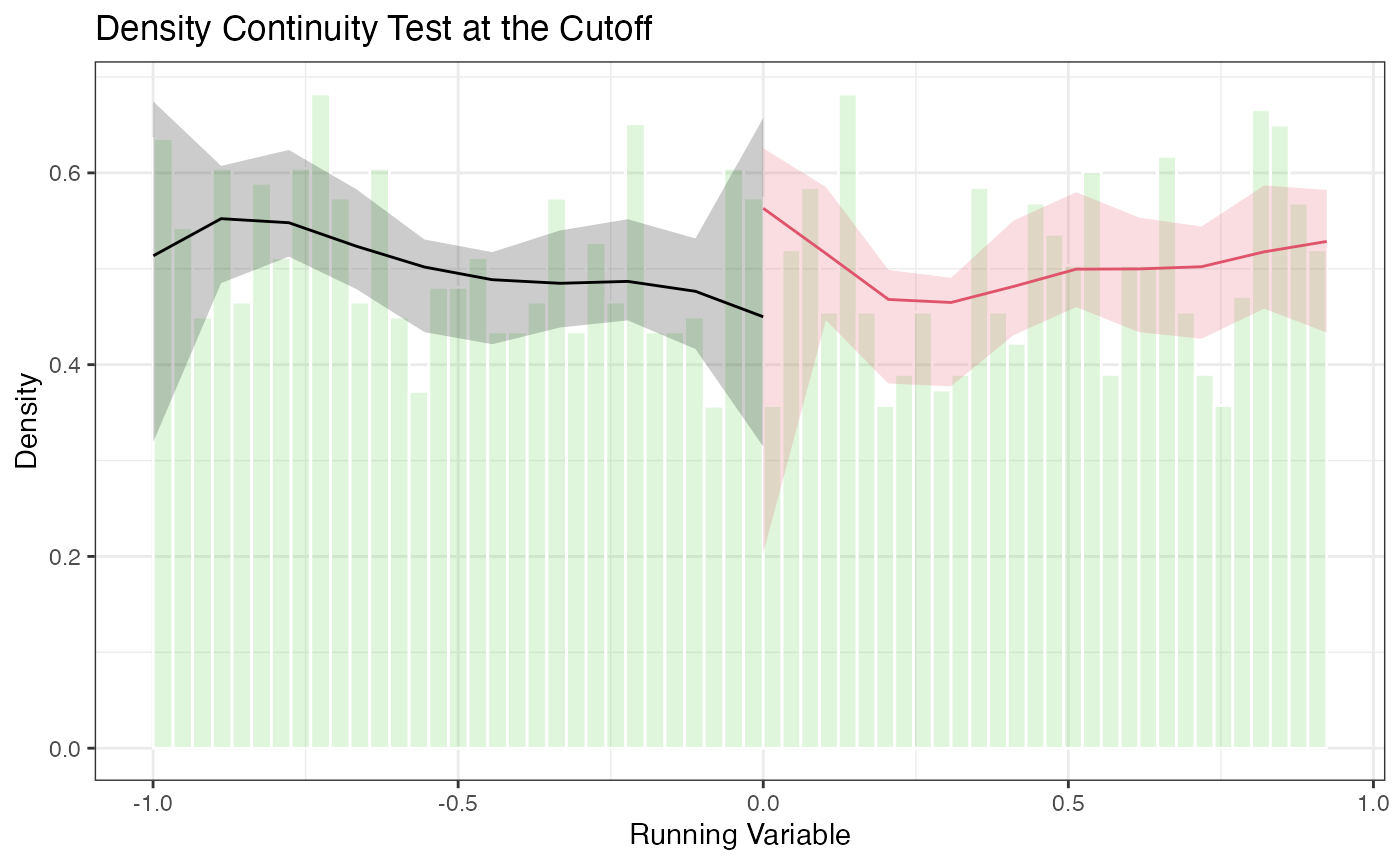

5.3 Density Plots with rdplotdensity()

# Density plot with confidence intervals

# density_test is created in the rddensity-test chunk above

rdplotdensity(

rdd = density_test,

X = rd_data$x,

title = "Density Continuity Test at the Cutoff",

xlabel = "Running Variable",

ylabel = "Density"

)

#> $Estl

#> Call: lpdensity

#>

#> Sample size 1023

#> Polynomial order for point estimation (p=) 2

#> Order of derivative estimated (v=) 1

#> Polynomial order for confidence interval (q=) 3

#> Kernel function triangular

#> Scaling factor 0.51175587793897

#> Bandwidth method user provided

#>

#> Use summary(...) to show estimates.

#>

#> $Estr

#> Call: lpdensity

#>

#> Sample size 977

#> Polynomial order for point estimation (p=) 2

#> Order of derivative estimated (v=) 1

#> Polynomial order for confidence interval (q=) 3

#> Kernel function triangular

#> Scaling factor 0.488744372186093

#> Bandwidth method user provided

#>

#> Use summary(...) to show estimates.

#>

#> $Estplot

The plot shows the estimated density on each side of the cutoff with pointwise confidence intervals. Overlapping confidence intervals at the cutoff are consistent with no manipulation.

5.4 Advanced Options for rddensity

# Different polynomial orders for the density estimator

density_p2 <- rddensity(X = rd_data$x, c = 0, p = 2)

summary(density_p2)

#>

#> Manipulation testing using local polynomial density estimation.

#>

#> Number of obs = 2000

#> Model = unrestricted

#> Kernel = triangular

#> BW method = estimated

#> VCE method = jackknife

#>

#> c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. Number of obs 411 285

#> Order est. (p) 2 2

#> Order bias (q) 3 3

#> BW est. (h) 0.416 0.308

#>

#> Method T P > |T|

#> Robust -0.508 0.6114

#>

#>

#> P-values of binomial tests (H0: p=0.5).

#>

#> Window Length / 2 <c >=c P>|T|

#> 0.030 34 20 0.0759

#> 0.060 70 51 0.1014

#> 0.090 94 86 0.6020

#> 0.119 119 112 0.6931

#> 0.149 141 151 0.5985

#> 0.179 169 184 0.4562

#> 0.209 201 205 0.8817

#> 0.239 237 230 0.7813

#> 0.269 267 254 0.5991

#> 0.298 299 276 0.3589

# Specifying bandwidths manually

density_manual_bw <- rddensity(X = rd_data$x, c = 0, h = c(0.3, 0.3))

summary(density_manual_bw)

#>

#> Manipulation testing using local polynomial density estimation.

#>

#> Number of obs = 2000

#> Model = unrestricted

#> Kernel = triangular

#> BW method = mannual

#> VCE method = jackknife

#>

#> c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. Number of obs 300 278

#> Order est. (p) 2 2

#> Order bias (q) 3 3

#> BW est. (h) 0.3 0.3

#>

#> Method T P > |T|

#> Robust -1.2975 0.1945

#>

#>

#> P-values of binomial tests (H0: p=0.5).

#>

#> Window Length / 2 <c >=c P>|T|

#> 0.030 34 20 0.0759

#> 0.060 70 51 0.1014

#> 0.090 94 86 0.6020

#> 0.119 119 112 0.6931

#> 0.149 141 151 0.5985

#> 0.179 169 184 0.4562

#> 0.209 201 205 0.8817

#> 0.239 237 230 0.7813

#> 0.269 267 254 0.5991

#> 0.298 299 276 0.3589

# Using different kernel

density_uni <- rddensity(X = rd_data$x, c = 0, kernel = "uniform")

summary(density_uni)

#>

#> Manipulation testing using local polynomial density estimation.

#>

#> Number of obs = 2000

#> Model = unrestricted

#> Kernel = uniform

#> BW method = estimated

#> VCE method = jackknife

#>

#> c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. Number of obs 399 311

#> Order est. (p) 2 2

#> Order bias (q) 3 3

#> BW est. (h) 0.402 0.341

#>

#> Method T P > |T|

#> Robust 0.6521 0.5144

#>

#>

#> P-values of binomial tests (H0: p=0.5).

#>

#> Window Length / 2 <c >=c P>|T|

#> 0.030 34 20 0.0759

#> 0.060 70 51 0.1014

#> 0.090 94 86 0.6020

#> 0.119 119 112 0.6931

#> 0.149 141 151 0.5985

#> 0.179 169 184 0.4562

#> 0.209 201 205 0.8817

#> 0.239 237 230 0.7813

#> 0.269 267 254 0.5991

#> 0.298 299 276 0.3589

# Binomial test (useful with discrete running variables)

# When the running variable is discrete, the density test may not apply directly.

# A binomial test checks whether the share of observations just above the cutoff

# is consistent with random assignment.

n_just_below <- sum(rd_data$x >= -0.05 & rd_data$x < 0)

n_just_above <- sum(rd_data$x >= 0 & rd_data$x < 0.05)

binom.test(n_just_above, n_just_below + n_just_above, p = 0.5)

#>

#> Exact binomial test

#>

#> data: n_just_above and n_just_below + n_just_above

#> number of successes = 47, number of trials = 105, p-value = 0.3291

#> alternative hypothesis: true probability of success is not equal to 0.5

#> 95 percent confidence interval:

#> 0.3504736 0.5477955

#> sample estimates:

#> probability of success

#> 0.4476196. The rdpower Package (Cattaneo, Titiunik, Vazquez-Bare 2019)

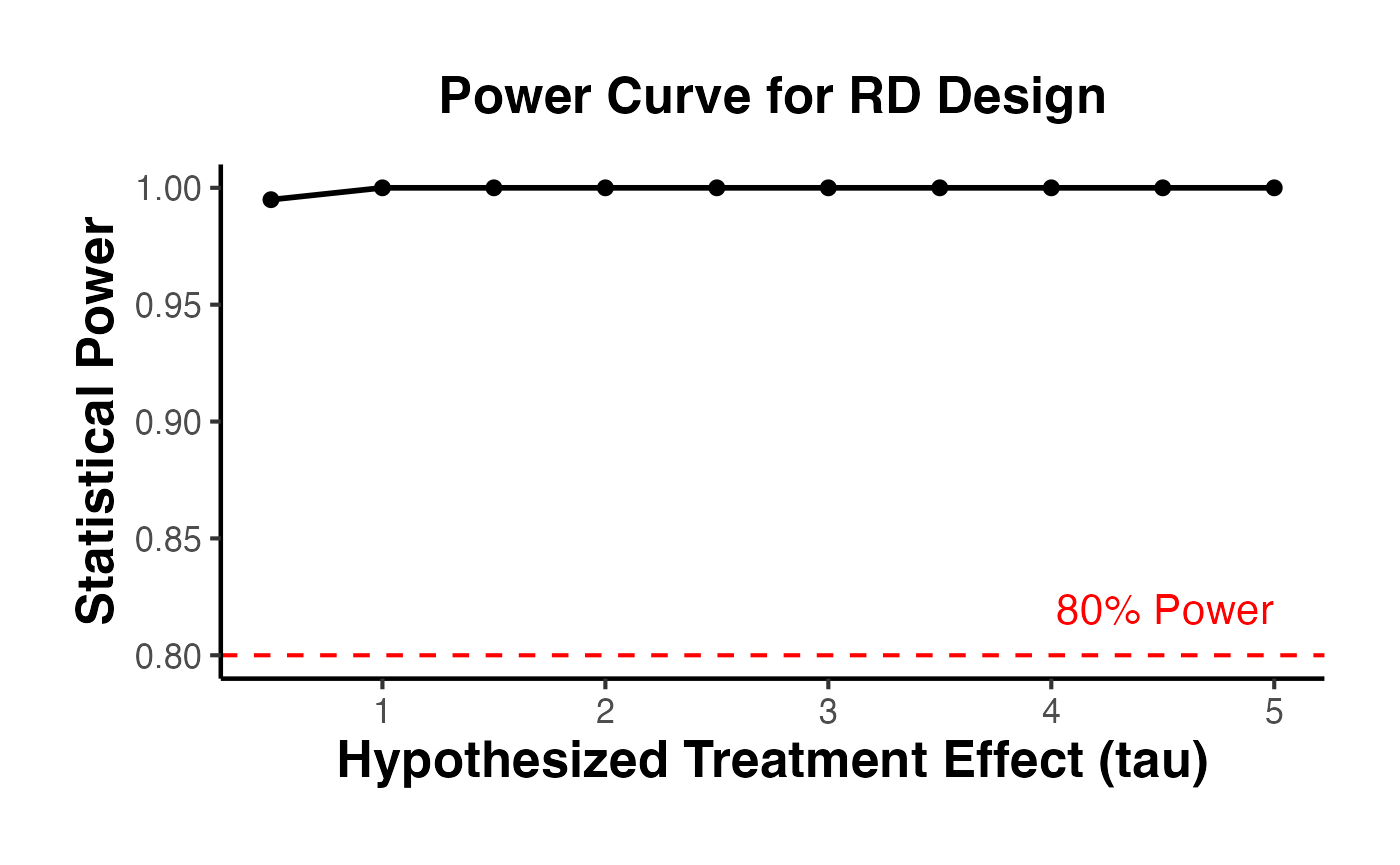

The rdpower package provides tools for power calculations and sample size determination in RD designs. Power analysis is critical for planning new studies and for assessing whether existing studies had adequate power to detect effects of a given size.

6.1 Power Calculations with rdpower()

Given data (or summary statistics) and a hypothesized effect size, rdpower() computes the power of the RD test.

library(rdpower)

# Power calculation using the data directly

# tau = 3 is the hypothesized treatment effect

power_result <- rdpower(

data = data.frame(y = rd_data$y, x = rd_data$x),

cutoff = 0,

tau = 3

)

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 3

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.6 1.5 2.4 3

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 1 1 1 1

#> =========================================================================================

print(power_result)

#> $power.rbc

#> [1] 1

#>

#> $se.rbc

#> [1] 0.1103627

#>

#> $sampsi.r

#> [1] 264

#>

#> $sampsi.l

#> [1] 281

#>

#> $samph.r

#> [1] 0.2809692

#>

#> $samph.l

#> [1] 0.2809692

#>

#> $N.r

#> [1] 977

#>

#> $N.l

#> [1] 1023

#>

#> $Nh.l

#> [1] 281

#>

#> $Nh.r

#> [1] 264

#>

#> $tau

#> [1] 3

#>

#> $bias.r

#> [1] -0.2511478

#>

#> $bias.l

#> [1] -0.1288031

#>

#> $Vr.rb

#> [1] 3.238033

#>

#> $Vl.rb

#> [1] 3.606328

#>

#> $alpha

#> [1] 0.056.2 Power for Different Effect Sizes

# How does power change with the effect size?

effect_sizes <- seq(0.5, 5, by = 0.5)

power_by_tau <- lapply(effect_sizes, function(tau) {

pw <- rdpower(

data = data.frame(y = rd_data$y, x = rd_data$x),

cutoff = 0,

tau = tau

)

tibble(

tau = tau,

power = pw$power

)

}) |>

bind_rows()

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 0.5

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.1 0.25 0.4 0.5

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 0.148 0.62 0.952 0.995

#> =========================================================================================

#>

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 1

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.2 0.5 0.8 1

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 0.441 0.995 1 1

#> =========================================================================================

#>

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 1.5

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.3 0.75 1.2 1.5

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 0.776 1 1 1

#> =========================================================================================

#>

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 2

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.4 1 1.6 2

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 0.952 1 1 1

#> =========================================================================================

#>

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 2.5

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.5 1.25 2 2.5

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 0.995 1 1 1

#> =========================================================================================

#>

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 3

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.6 1.5 2.4 3

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 1 1 1 1

#> =========================================================================================

#>

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 3.5

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.7 1.75 2.8 3.5

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 1 1 1 1

#> =========================================================================================

#>

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 4

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.8 2 3.2 4

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 1 1 1 1

#> =========================================================================================

#>

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 4.5

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.9 2.25 3.6 4.5

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 1 1 1 1

#> =========================================================================================

#>

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 5

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#> New sample 281 264

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 1 2.5 4 5

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 1 1 1 1

#> =========================================================================================

ggplot(power_by_tau, aes(x = tau, y = power)) +

geom_line(linewidth = 1) +

geom_point(size = 2) +

geom_hline(yintercept = 0.8, linetype = "dashed", color = "red") +

annotate("text", x = max(effect_sizes), y = 0.82, label = "80% Power",

color = "red", hjust = 1) +

labs(

x = "Hypothesized Treatment Effect (tau)",

y = "Statistical Power",

title = "Power Curve for RD Design"

) +

causalverse::ama_theme()

6.3 Sample Size Calculations with rdsampsi()

Given a desired power level and hypothesized effect size, rdsampsi() determines the required sample size.

# Required sample size for 80% power to detect tau = 3

sampsi_result <- rdsampsi(

data = data.frame(y = rd_data$y, x = rd_data$x),

cutoff = 0,

tau = 3

)

#> Calculating sample size...Sample size obtained.

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 3

#> Power = 0.8

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#>

#>

#> =========================================================================================

#> Number of obs in window Proportion

#> [c-h,c) [c,c+h] Total [c,c+h]

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 4 3 7 0.487

#> =========================================================================================

print(sampsi_result)

#> $sampsi.h.tot

#> [1] 7

#>

#> $sampsi.h.r

#> [1] 3

#>

#> $sampsi.h.l

#> [1] 4

#>

#> $sampsi.tot

#> [1] 22

#>

#> $N.r

#> [1] 977

#>

#> $N.l

#> [1] 1023

#>

#> $Nh.r

#> [1] 264

#>

#> $Nh.l

#> [1] 281

#>

#> $bias.r

#> [1] -0.2511478

#>

#> $bias.l

#> [1] -0.2511478

#>

#> $var.r

#> [1] 3.238033

#>

#> $var.l

#> [1] 3.606328

#>

#> $samph.r

#> [1] 0.2809692

#>

#> $samph.l

#> [1] 0.2809692

#>

#> $tau

#> [1] 3

#>

#> $beta

#> [1] 0.8

#>

#> $alpha

#> [1] 0.05

#>

#> $init.cond

#> [1] 2000

#>

#> $no.iter

#> [1] 28

# Required sample size for 80% power to detect tau = 1.5

# (smaller effect requires more data)

sampsi_small <- rdsampsi(

data = data.frame(y = rd_data$y, x = rd_data$x),

cutoff = 0,

tau = 1.5

)

#> Calculating sample size...Sample size obtained.

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 1.5

#> Power = 0.8

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#>

#>

#> =========================================================================================

#> Number of obs in window Proportion

#> [c-h,c) [c,c+h] Total [c,c+h]

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 12 12 24 0.487

#> =========================================================================================

print(sampsi_small)

#> $sampsi.h.tot

#> [1] 24

#>

#> $sampsi.h.r

#> [1] 12

#>

#> $sampsi.h.l

#> [1] 12

#>

#> $sampsi.tot

#> [1] 85

#>

#> $N.r

#> [1] 977

#>

#> $N.l

#> [1] 1023

#>

#> $Nh.r

#> [1] 264

#>

#> $Nh.l

#> [1] 281

#>

#> $bias.r

#> [1] -0.2511478

#>

#> $bias.l

#> [1] -0.2511478

#>

#> $var.r

#> [1] 3.238033

#>

#> $var.l

#> [1] 3.606328

#>

#> $samph.r

#> [1] 0.2809692

#>

#> $samph.l

#> [1] 0.2809692

#>

#> $tau

#> [1] 1.5

#>

#> $beta

#> [1] 0.8

#>

#> $alpha

#> [1] 0.05

#>

#> $init.cond

#> [1] 2000

#>

#> $no.iter

#> [1] 21

# Using data-based approach with different tau

sampsi_summary <- rdsampsi(

data = data.frame(y = rd_data$y, x = rd_data$x),

cutoff = 0,

tau = 2

)

#> Calculating sample size...Sample size obtained.

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 2

#> Power = 0.8

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 281 264

#> BW loc. poly. 0.281 0.281

#> Order loc. poly. 1 1

#> Sampling BW 0.281 0.281

#>

#>

#> =========================================================================================

#> Number of obs in window Proportion

#> [c-h,c) [c,c+h] Total [c,c+h]

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 7 7 14 0.487

#> =========================================================================================

print(sampsi_summary)

#> $sampsi.h.tot

#> [1] 14

#>

#> $sampsi.h.r

#> [1] 7

#>

#> $sampsi.h.l

#> [1] 7

#>

#> $sampsi.tot

#> [1] 48

#>

#> $N.r

#> [1] 977

#>

#> $N.l

#> [1] 1023

#>

#> $Nh.r

#> [1] 264

#>

#> $Nh.l

#> [1] 281

#>

#> $bias.r

#> [1] -0.2511478

#>

#> $bias.l

#> [1] -0.2511478

#>

#> $var.r

#> [1] 3.238033

#>

#> $var.l

#> [1] 3.606328

#>

#> $samph.r

#> [1] 0.2809692

#>

#> $samph.l

#> [1] 0.2809692

#>

#> $tau

#> [1] 2

#>

#> $beta

#> [1] 0.8

#>

#> $alpha

#> [1] 0.05

#>

#> $init.cond

#> [1] 2000

#>

#> $no.iter

#> [1] 246.4 Power with Covariates

# Covariates that predict the outcome can increase power

covs <- as.matrix(rd_data[, c("age", "education")])

power_with_covs <- rdpower(

data = data.frame(y = rd_data$y, x = rd_data$x),

cutoff = 0,

tau = 3,

covs = covs

)

#>

#> Number of obs = 2000

#> BW type = mserd

#> Kernel type = Triangular

#> VCE method = NN

#> Derivative = 0

#> HA: tau = 3

#>

#>

#> Cutoff c = 0 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 275 262

#> BW loc. poly. 0.276 0.276

#> Order loc. poly. 1 1

#> Sampling BW 0.276 0.276

#> New sample 275 262

#>

#>

#> =========================================================================================

#> Power against: H0: tau = 0.2*tau = 0.5*tau = 0.8*tau = tau =

#> 0 0.6 1.5 2.4 3

#> -----------------------------------------------------------------------------------------

#> Robust bias-corrected 0.05 1 1 1 1

#> =========================================================================================

print(power_with_covs)

#> $power.rbc

#> [1] 1

#>

#> $se.rbc

#> [1] 0.1094834

#>

#> $sampsi.r

#> [1] 262

#>

#> $sampsi.l

#> [1] 275

#>

#> $samph.r

#> [1] 0.2760553

#>

#> $samph.l

#> [1] 0.2760553

#>

#> $N.r

#> [1] 977

#>

#> $N.l

#> [1] 1023

#>

#> $Nh.l

#> [1] 275

#>

#> $Nh.r

#> [1] 262

#>

#> $tau

#> [1] 3

#>

#> $bias.r

#> [1] -0.2399738

#>

#> $bias.l

#> [1] -0.1315514

#>

#> $Vr.rb

#> [1] 3.236204

#>

#> $Vl.rb

#> [1] 3.381728

#>

#> $alpha

#> [1] 0.057. The rdlocrand Package (Cattaneo, Frandsen, Titiunik 2015)

The rdlocrand package implements the local randomization approach to RD inference. Instead of relying on continuity and local polynomial estimation, this approach treats the RD design as a local experiment within a narrow window around the cutoff. Within this window, treatment assignment is assumed to be as good as random.

The key advantages of the local randomization approach are:

- Valid inference even with very few observations near the cutoff

- No need for bandwidth selection for estimation (the window is selected based on balance, not MSE)

- Finite-sample exact inference via permutation tests

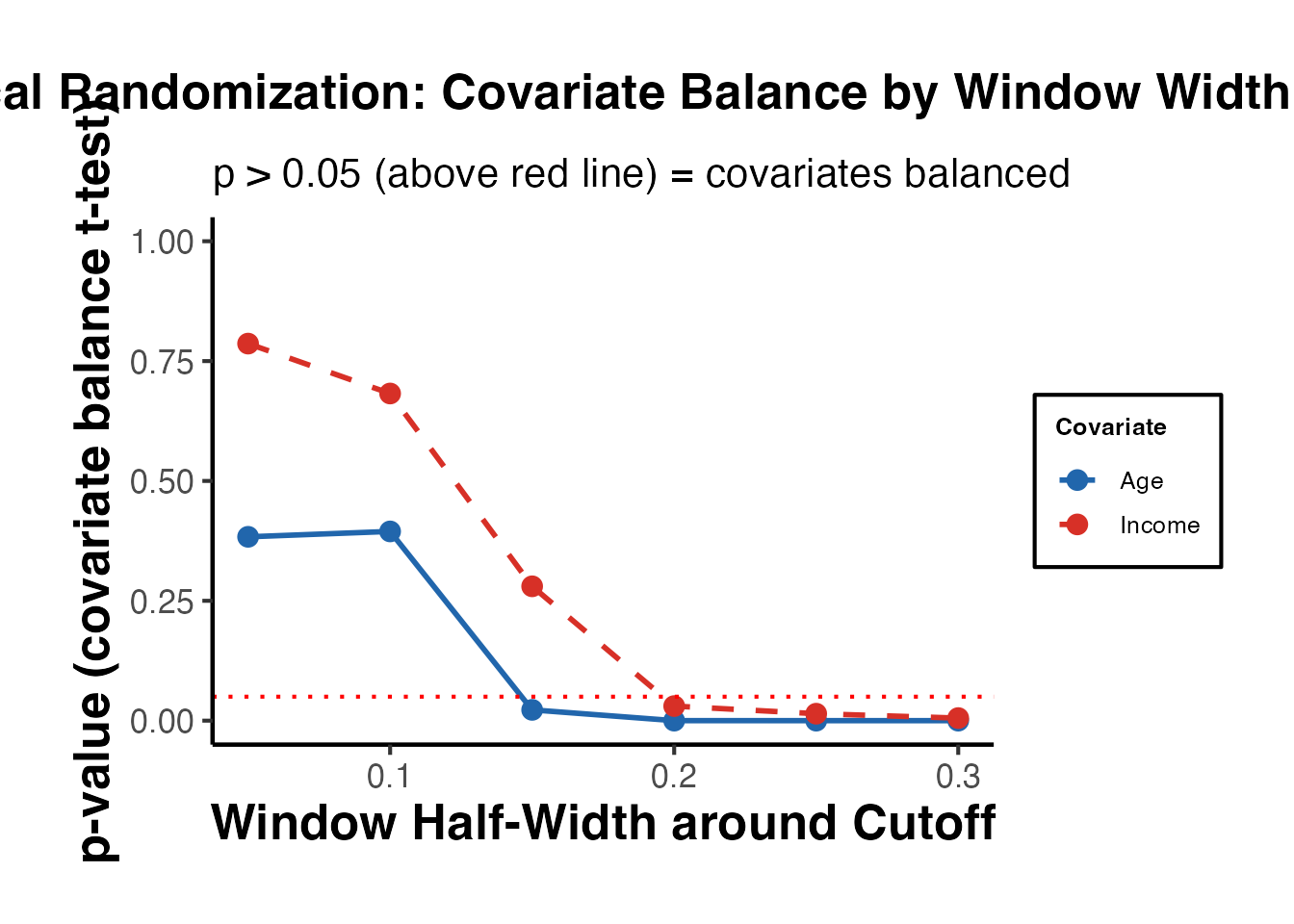

7.1 Window Selection with rdwinselect()

The first step is to select the window within which the local randomization assumption holds. rdwinselect() tests whether pre-determined covariates are balanced across the cutoff within progressively wider windows.

library(rdlocrand)

# Prepare covariates for balance testing

# In rdlocrand v1.1+: R = running variable (first arg), X = covariate matrix (second arg)

covariates <- as.matrix(rd_data[, c("age", "female", "education", "income")])

window_result <- rdwinselect(

R = rd_data$x, # running variable

X = covariates, # pre-treatment covariates

cutoff = 0,

wmin = 0.10,

wstep = 0.05,

nwindows = 8,

seed = 42

)

#>

#>

#> Window selection for RD under local randomization

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Testing method = rdrandinf

#> Balance test = diffmeans

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> 1st percentile 10 10

#> 5th percentile 51 48

#> 10th percentile 103 98

#> 20th percentile 204 195

#>

#> ================================================================================

#> Window p-value Var. name Bin.test Obs<c Obs>=c

#> ================================================================================

#> -0.1000 0.1000 0.138 income 0.778 103 98

#> -0.1500 0.1500 0.004 income 0.601 143 153

#> -0.2000 0.2000 0.006 income 0.840 194 199

#> -0.2500 0.2500 0.000 income 0.716 246 237

#> -0.3000 0.3000 0.000 age 0.382 300 278

#> -0.3500 0.3500 0.000 age 0.376 350 326

#> -0.4000 0.4000 0.000 age 0.449 395 373

#> -0.4500 0.4500 0.000 age 0.609 439 423

#> ================================================================================

#> Smallest window does not pass covariate test.

#> Decrease smallest window or reduce level.

summary(window_result)

#> Length Class Mode

#> w_left 1 -none- logical

#> w_right 1 -none- logical

#> wlist_left 8 -none- numeric

#> wlist_right 8 -none- numeric

#> results 56 -none- numeric

#> summary 10 -none- numericThe function returns a sequence of windows and p-values from balance tests. The recommended window is the largest window for which covariates remain balanced.

7.2 Randomization Inference with rdrandinf()

Once the window is selected, rdrandinf() performs randomization-based inference for the treatment effect.

# Randomization inference with the selected window

# Suppose the selected window is [-0.1, 0.1]

ri_result <- rdrandinf(

Y = rd_data$y,

R = rd_data$x,

cutoff = 0,

wl = -0.1, # left endpoint of window

wr = 0.1, # right endpoint of window

seed = 42

)

#>

#> Selected window = [-0.1;0.1]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = fixed margins

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 103 98

#> Mean of outcome 2.411 5.663

#> S.d. of outcome 0.519 0.515

#> Window -0.100 0.100

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.260

#> ================================================================================

#> Diff. in means 3.252 0.000 0.000 0.945

#> ================================================================================

summary(ri_result)

#> Length Class Mode

#> sumstats 10 -none- numeric

#> obs.stat 1 -none- numeric

#> p.value 1 -none- numeric

#> asy.pvalue 1 -none- numeric

#> window 2 -none- numeric7.3 Bernoulli vs. Fixed Margins Randomization

rdrandinf supports different randomization mechanisms:

# Bernoulli randomization (default)

# Each unit is independently assigned to treatment with probability p

ri_bernoulli <- rdrandinf(

Y = rd_data$y,

R = rd_data$x,

cutoff = 0,

wl = -0.1,

wr = 0.1,

bernoulli = TRUE,

seed = 42

)

#>

#> Selected window = [-0.1;0.1]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = Bernoulli

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 103 98

#> Mean of outcome 2.411 5.663

#> S.d. of outcome 0.519 0.515

#> Window -0.100 0.100

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.260

#> ================================================================================

#> Diff. in means 3.252 NaN 0.000 0.945

#> ================================================================================

# Fixed margins randomization

# The number treated and control is fixed at the observed values

ri_fixed <- rdrandinf(

Y = rd_data$y,

R = rd_data$x,

cutoff = 0,

wl = -0.1,

wr = 0.1,

bernoulli = FALSE,

seed = 42

)

#>

#> Selected window = [-0.1;0.1]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = Bernoulli

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 103 98

#> Mean of outcome 2.411 5.663

#> S.d. of outcome 0.519 0.515

#> Window -0.100 0.100

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.260

#> ================================================================================

#> Diff. in means 3.252 NaN 0.000 0.945

#> ================================================================================

cat("Bernoulli p-value:", ri_bernoulli$p.value, "\n")

#> Bernoulli p-value: NaN

cat("Fixed margins p-value:", ri_fixed$p.value, "\n")

#> Fixed margins p-value: NaN7.4 Sensitivity to Window Choice

# Estimate across a range of windows

windows <- seq(0.05, 0.30, by = 0.05)

ri_sensitivity <- lapply(windows, function(w) {

est <- rdrandinf(

Y = rd_data$y,

R = rd_data$x,

cutoff = 0,

wl = -w,

wr = w,

seed = 42

)

tibble(

window_half = w,

estimate = est$obs.stat,

p_value = est$p.value,

n_window = sum(abs(rd_data$x) <= w)

)

}) |>

bind_rows()

#>

#> Selected window = [-0.05;0.05]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = fixed margins

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 58 47

#> Mean of outcome 2.498 5.607

#> S.d. of outcome 0.499 0.484

#> Window -0.050 0.050

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.249

#> ================================================================================

#> Diff. in means 3.109 0.000 0.000 0.736

#> ================================================================================

#>

#> Selected window = [-0.1;0.1]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = fixed margins

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 103 98

#> Mean of outcome 2.411 5.663

#> S.d. of outcome 0.519 0.515

#> Window -0.100 0.100

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.260

#> ================================================================================

#> Diff. in means 3.252 0.000 0.000 0.945

#> ================================================================================

#>

#> Selected window = [-0.15;0.15]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = fixed margins

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 143 153

#> Mean of outcome 2.394 5.684

#> S.d. of outcome 0.490 0.507

#> Window -0.150 0.150

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.245

#> ================================================================================

#> Diff. in means 3.290 0.000 0.000 0.988

#> ================================================================================

#>

#> Selected window = [-0.2;0.2]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = fixed margins

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 194 199

#> Mean of outcome 2.373 5.735

#> S.d. of outcome 0.499 0.513

#> Window -0.200 0.200

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.249

#> ================================================================================

#> Diff. in means 3.361 0.000 0.000 0.998

#> ================================================================================

#>

#> Selected window = [-0.25;0.25]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = fixed margins

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 246 237

#> Mean of outcome 2.335 5.769

#> S.d. of outcome 0.534 0.502

#> Window -0.250 0.250

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.267

#> ================================================================================

#> Diff. in means 3.434 0.000 0.000 1.000

#> ================================================================================

#>

#> Selected window = [-0.3;0.3]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = fixed margins

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 300 278

#> Mean of outcome 2.299 5.816

#> S.d. of outcome 0.540 0.515

#> Window -0.300 0.300

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.270

#> ================================================================================

#> Diff. in means 3.517 0.000 0.000 1.000

#> ================================================================================

ri_sensitivity

#> # A tibble: 6 × 4

#> window_half estimate p_value n_window

#> <dbl> <dbl> <dbl> <int>

#> 1 0.05 3.11 0 105

#> 2 0.1 3.25 0 201

#> 3 0.15 3.29 0 296

#> 4 0.2 3.36 0 393

#> 5 0.25 3.43 0 483

#> 6 0.3 3.52 0 5787.5 Randomization Inference with Covariates

# Including covariates for precision

ri_covs <- rdrandinf(

Y = rd_data$y,

R = rd_data$x,

cutoff = 0,

wl = -0.1,

wr = 0.1,

covariates = as.matrix(rd_data[, c("age", "education")]),

seed = 42

)

#>

#> Selected window = [-0.1;0.1]

#>

#> Running randomization-based test...

#> Randomization-based test complete.

#>

#>

#> Number of obs = 2000

#> Order of poly = 0

#> Kernel type = uniform

#> Reps = 1000

#> Window = set by user

#> H0: tau = 0.000

#> Randomization = fixed margins

#>

#> Cutoff c = 0.000 Left of c Right of c

#> Number of obs 1023 977

#> Eff. number of obs 103 98

#> Mean of outcome 2.411 5.663

#> S.d. of outcome 0.519 0.515

#> Window -0.100 0.100

#>

#> ================================================================================

#> Finite sample Large sample

#> ------------------ -----------------------------

#> Statistic T P>|T| P>|T| Power vs d = 0.260

#> ================================================================================

#> Diff. in means 3.252 0.000 0.000 0.945

#> ================================================================================

summary(ri_covs)

#> Length Class Mode

#> sumstats 10 -none- numeric

#> obs.stat 1 -none- numeric

#> p.value 1 -none- numeric

#> asy.pvalue 1 -none- numeric

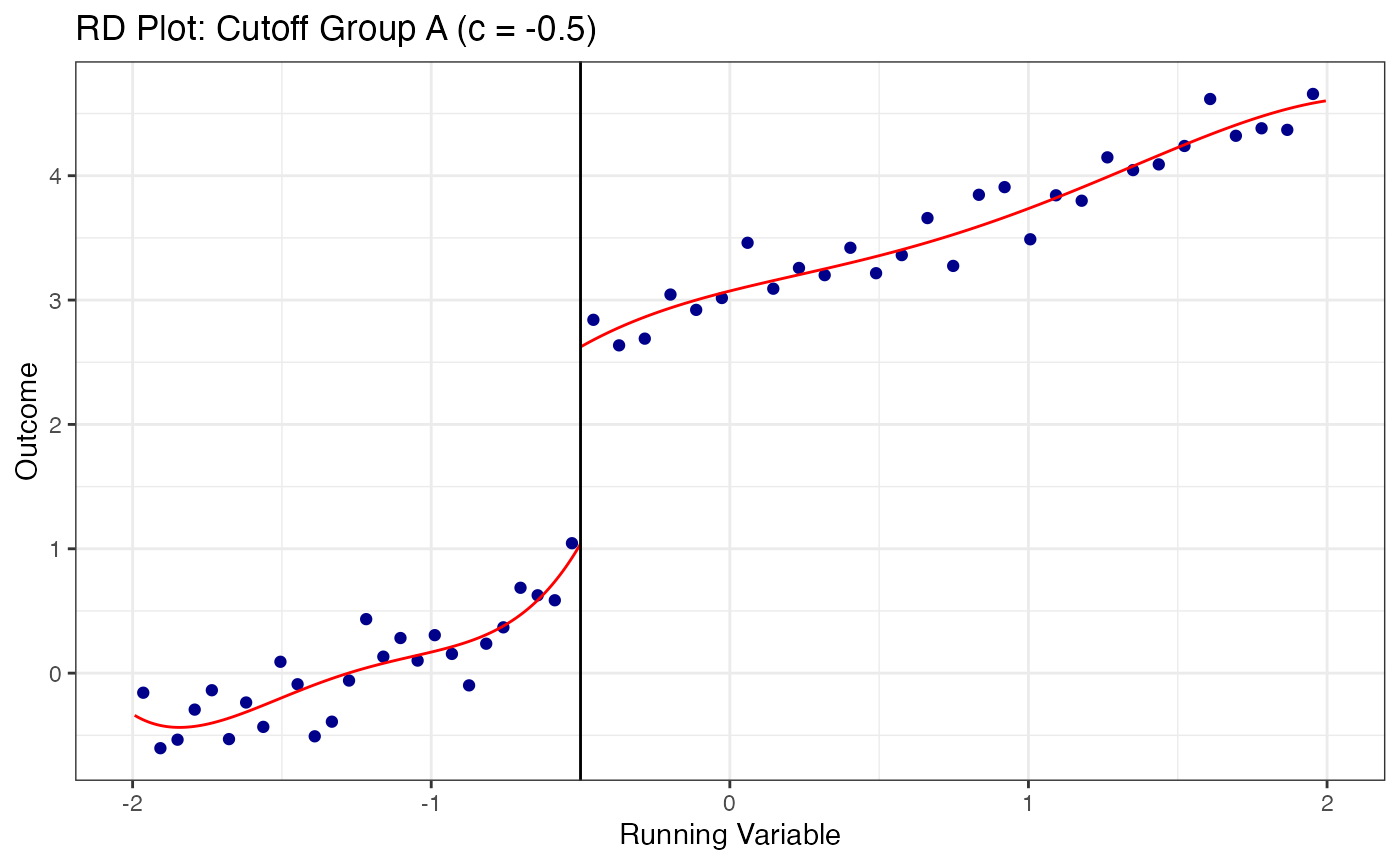

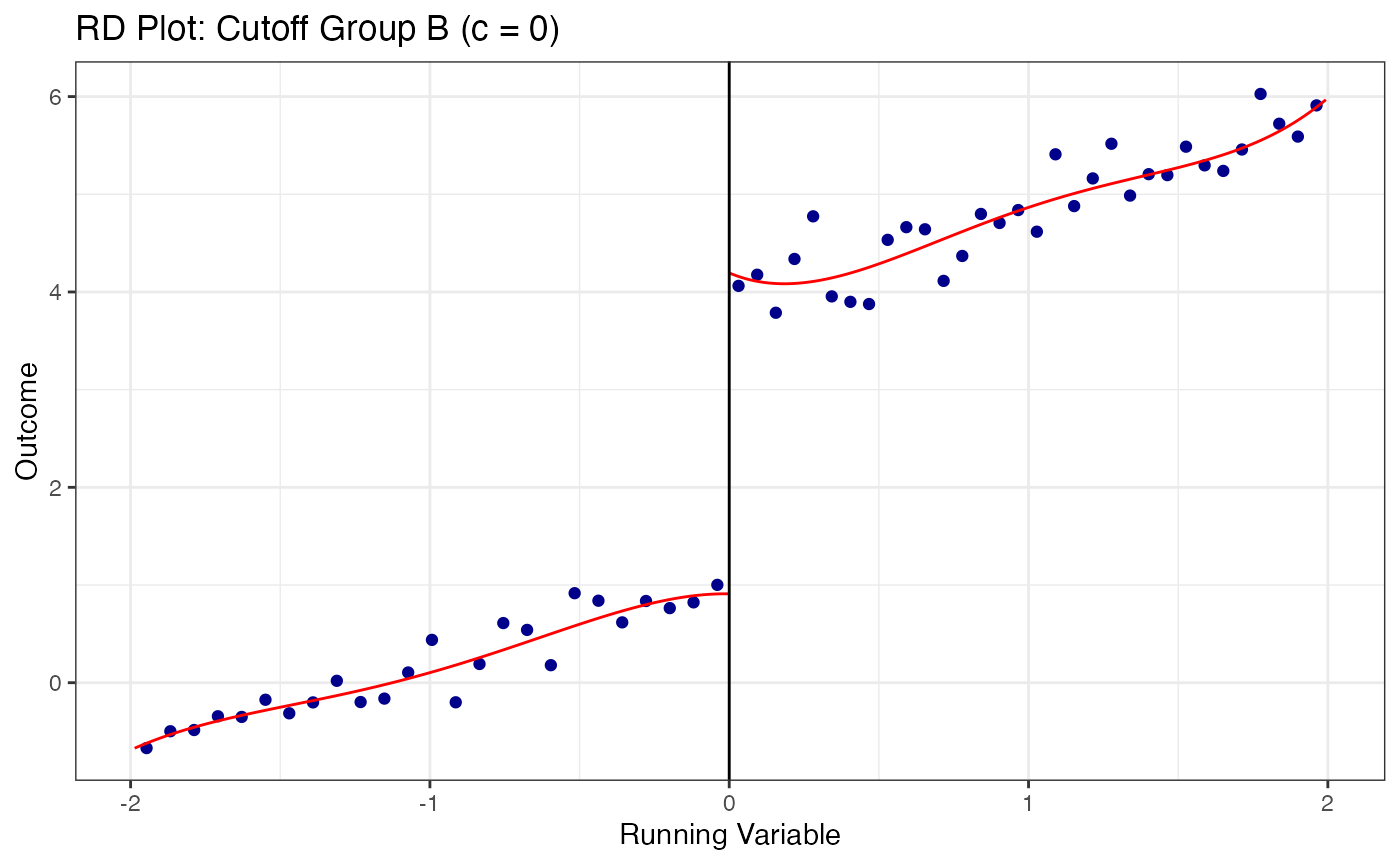

#> window 2 -none- numeric8. The rdmulti Package (Cattaneo, Keele, Titiunik, Vazquez-Bare 2021)

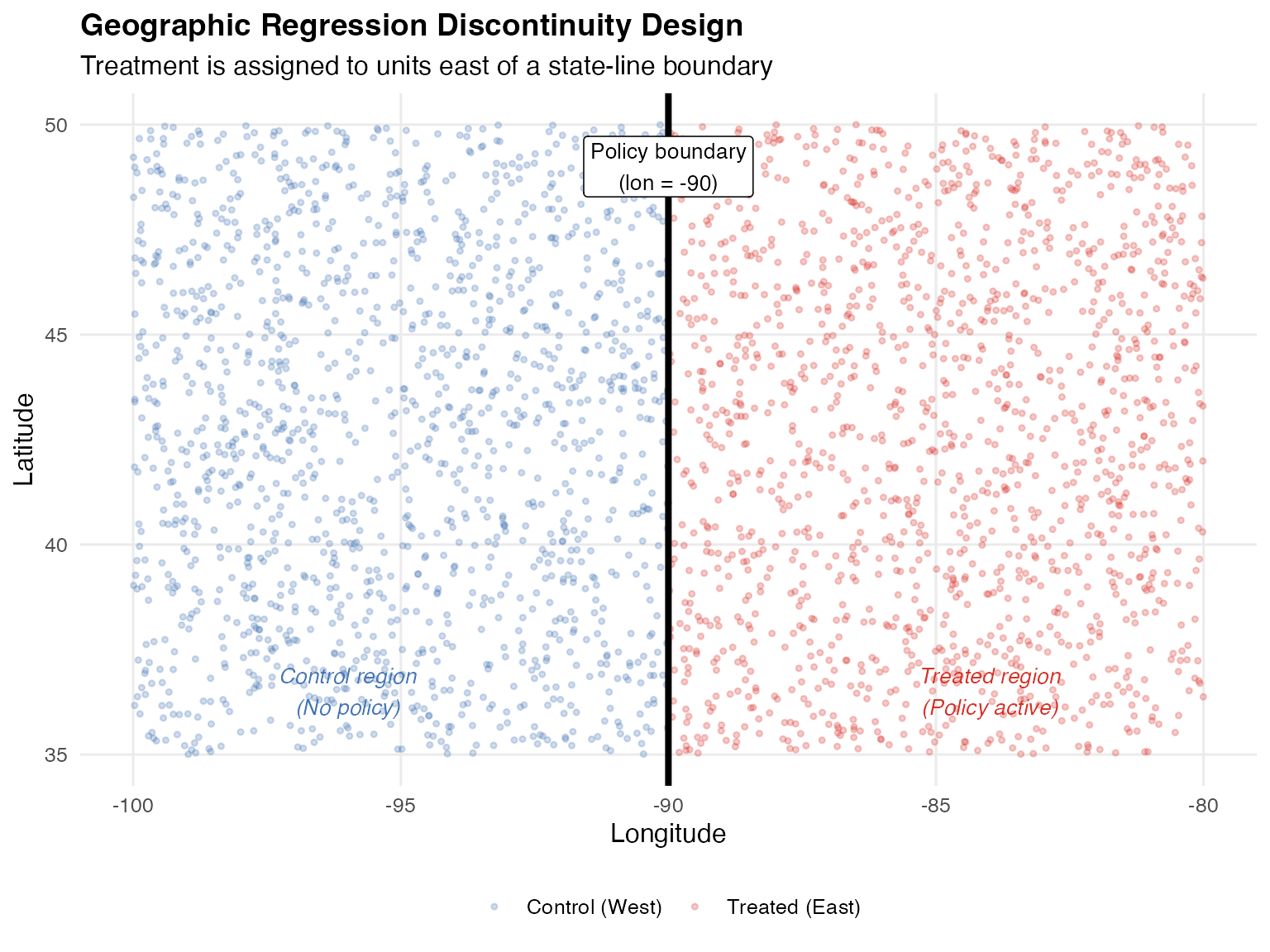

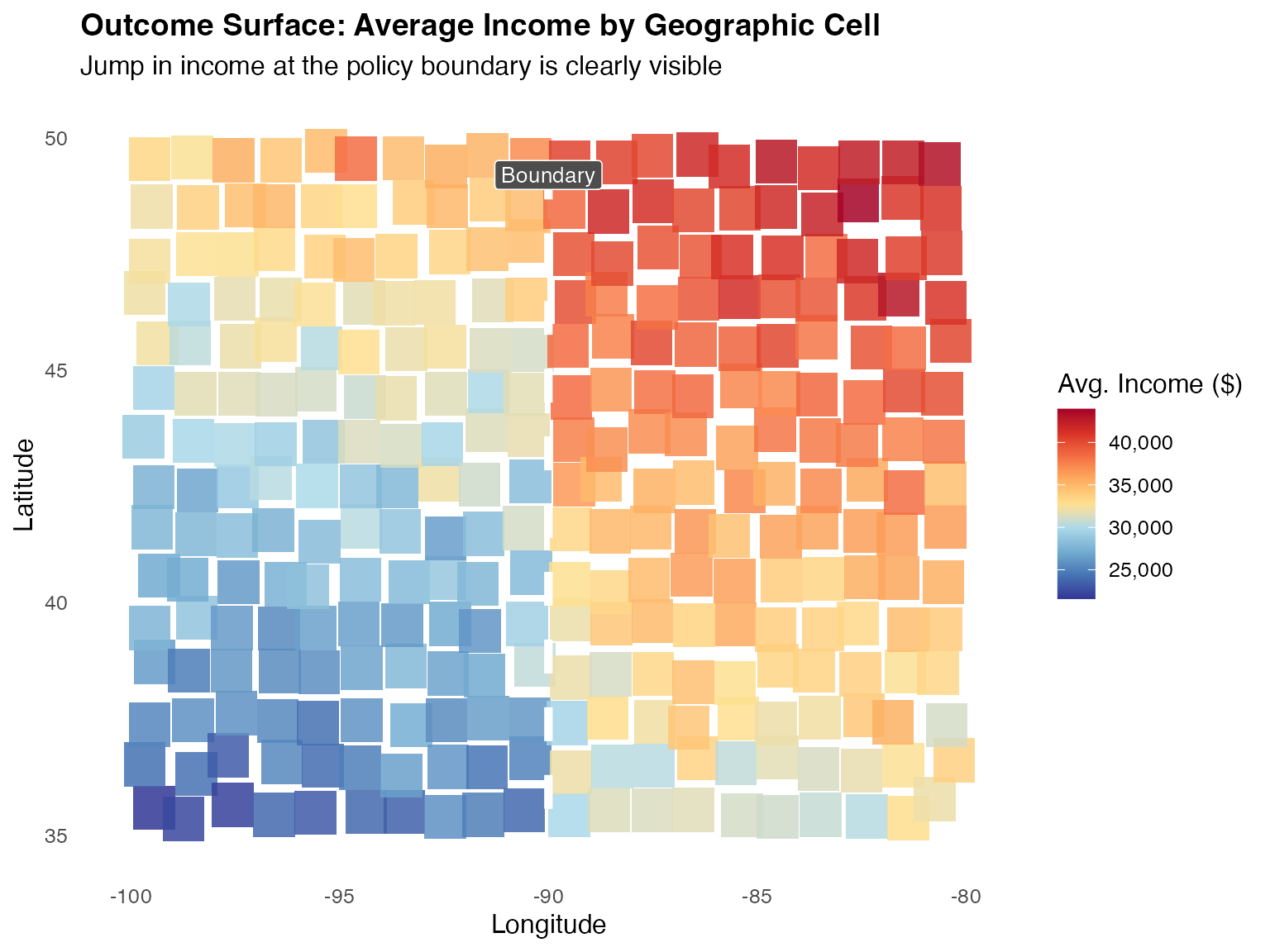

The rdmulti package extends the RD framework to settings with multiple cutoffs or multiple running variables. These settings are common in practice:

- Multiple cutoffs: Different states set different eligibility thresholds for the same program

- Multiple scores: Eligibility depends on multiple test scores simultaneously (e.g., math and reading)

8.1 Simulated Multi-Cutoff Data

library(rdmulti)

# Simulate data with multiple cutoffs

set.seed(2024)

n_multi <- 3000

multi_data <- tibble(

# Running variable

x = runif(n_multi, -2, 2),

# Three different cutoffs (e.g., different states)

cutoff_group = sample(c("A", "B", "C"), n_multi, replace = TRUE),

cutoff_value = case_when(

cutoff_group == "A" ~ -0.5,

cutoff_group == "B" ~ 0.0,

cutoff_group == "C" ~ 0.5

),

# Treatment: above group-specific cutoff

treated = as.integer(x >= cutoff_value),

# Outcome: different effects at different cutoffs

effect = case_when(

cutoff_group == "A" ~ 2.0,

cutoff_group == "B" ~ 3.0,

cutoff_group == "C" ~ 1.5

),

y = 1 + effect * treated + 0.8 * x + rnorm(n_multi, sd = 0.8)

)8.2 Multi-Cutoff RD with rdmc()

The rdmc() function estimates treatment effects at each cutoff separately and then combines them into an overall estimate.

# Multi-cutoff RD estimation

mc_result <- rdmc(

Y = multi_data$y,

X = multi_data$x,

C = multi_data$cutoff_value

)

#>

#> Cutoff-specific RD estimation with robust bias-corrected inference

#> ================================================================================

#> Cutoff Coef. P-value 95% CI hl hr Nh Weight

#> ================================================================================

#> -0.500 1.741 0.000 1.124 2.270 0.457 0.457 267 0.337

#> 0.000 3.180 0.000 2.790 3.666 0.566 0.566 265 0.334

#> 0.500 1.335 0.000 0.808 1.827 0.502 0.502 261 0.329

#> --------------------------------------------------------------------------------

#> Weighted 2.088 0.000 1.789 2.378 . . 793 .

#> Pooled 1.980 0.000 1.718 2.248 0.680 0.680 1050 .

#> ================================================================================

summary(mc_result)

#> Length Class Mode

#> tau 1 -none- numeric

#> se.rb 1 -none- numeric

#> pv.rb 1 -none- numeric

#> ci.rb.l 1 -none- numeric

#> ci.rb.r 1 -none- numeric

#> hl 1 -none- numeric

#> hr 1 -none- numeric

#> Nhl 1 -none- numeric

#> Nhr 1 -none- numeric

#> B 5 -none- numeric

#> V 5 -none- numeric

#> Coefs 5 -none- numeric

#> V_cl 5 -none- numeric

#> W 3 -none- numeric

#> Nh 10 -none- numeric

#> CI 10 -none- numeric

#> CI_cl 10 -none- numeric

#> H 10 -none- numeric

#> Bbw 10 -none- numeric

#> Pv 5 -none- numeric

#> Pv_cl 5 -none- numeric

#> rdrobust.results 33 rdrobust list

#> cfail 0 -none- numericThe function reports:

- Cutoff-specific estimates with confidence intervals

- A pooled estimate (weighted average across cutoffs)

- Effective sample sizes at each cutoff

8.3 Multi-Score RD with rdms()

When treatment depends on multiple running variables simultaneously, rdms() handles the multi-dimensional boundary.

# rdms: Multiple cutoffs along a single running variable

# Classic example: multiple school district thresholds

set.seed(42)

n_ms <- 3000

# Running variable: test score (e.g., 0-100)

x_score <- runif(n_ms, 40, 100)

# Three cutoffs: 60, 70, 80 determine three different program levels

# Each unit is associated with its nearest cutoff

C_vec <- c(60, 70, 80)

cutoff_assign <- cut(x_score, breaks = c(-Inf, 65, 75, Inf),

labels = c(1, 2, 3)) |> as.integer()

C_assigned <- C_vec[cutoff_assign]

# Treatment: above own cutoff

treated_ms <- as.integer(x_score >= C_assigned)

y_ms <- 5 + 3 * treated_ms + 0.05 * x_score + rnorm(n_ms, sd = 1.5)

# Estimate RD at each cutoff separately using rdrobust

cat("=== RD Estimates at Three Cutoffs ===\n")

#> === RD Estimates at Three Cutoffs ===

for (cv in C_vec) {

idx <- abs(x_score - cv) <= 15 # local window

fit <- rdrobust::rdrobust(y = y_ms[idx], x = x_score[idx], c = cv)

cat(sprintf("Cutoff = %d: tau = %.3f (SE = %.3f, p = %.3f, N = %d)\n",

cv, fit$coef[3], fit$se[3], fit$pv[3], sum(idx)))

}

#> Cutoff = 60: tau = 3.359 (SE = 0.467, p = 0.000, N = 1509)

#> Cutoff = 70: tau = 3.507 (SE = 0.635, p = 0.000, N = 1457)

#> Cutoff = 80: tau = 3.033 (SE = 0.496, p = 0.000, N = 1471)8.4 Visualization for Multi-Cutoff Designs

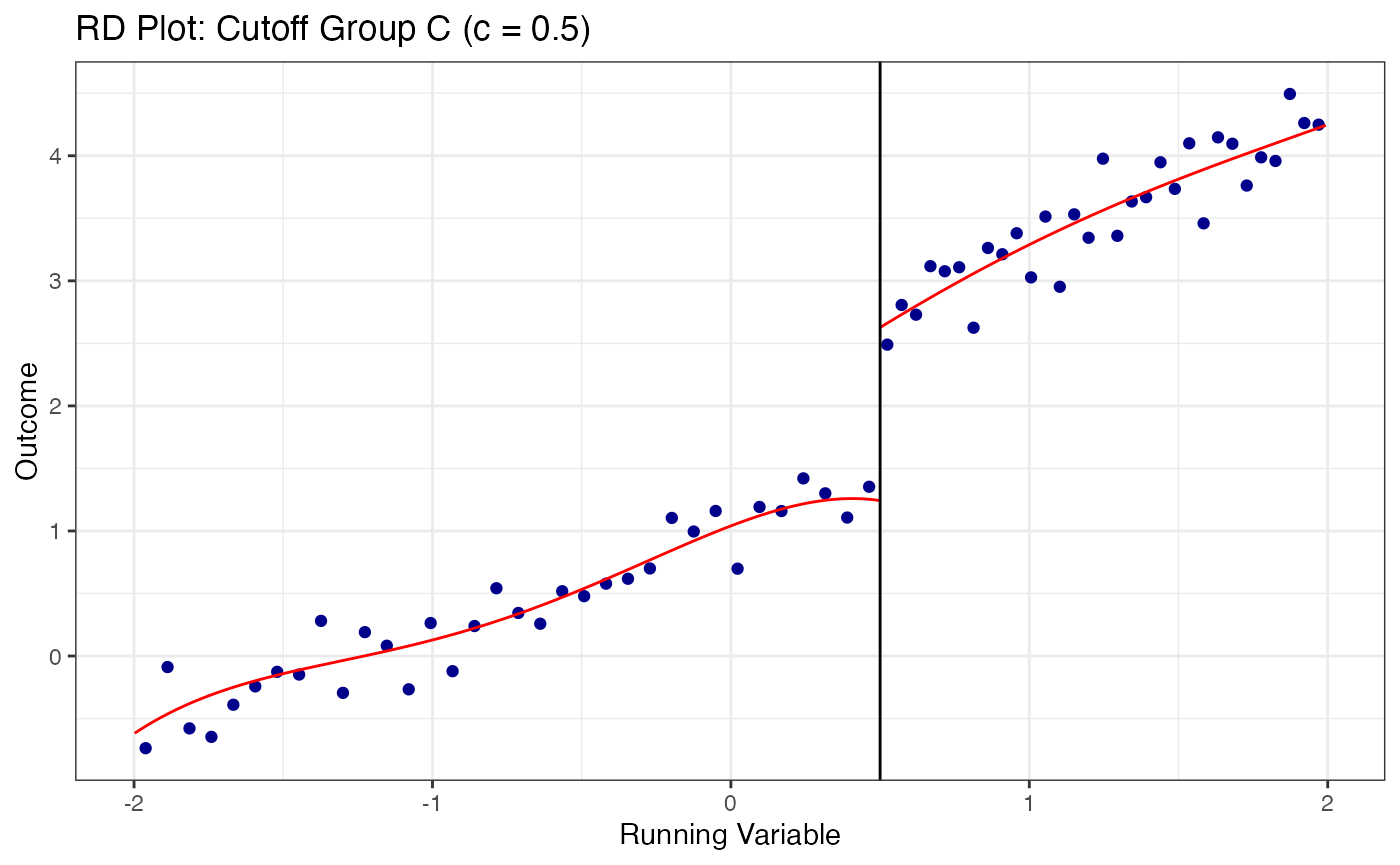

# Plot RD at each cutoff separately

cutoff_labels <- c("A" = -0.5, "B" = 0.0, "C" = 0.5)

for (grp in names(cutoff_labels)) {

sub_data <- multi_data |> filter(cutoff_group == grp)

c_val <- cutoff_labels[grp]

rdplot(

y = sub_data$y,

x = sub_data$x,

c = c_val,

title = paste0("RD Plot: Cutoff Group ", grp, " (c = ", c_val, ")"),

x.label = "Running Variable",

y.label = "Outcome"

)

}

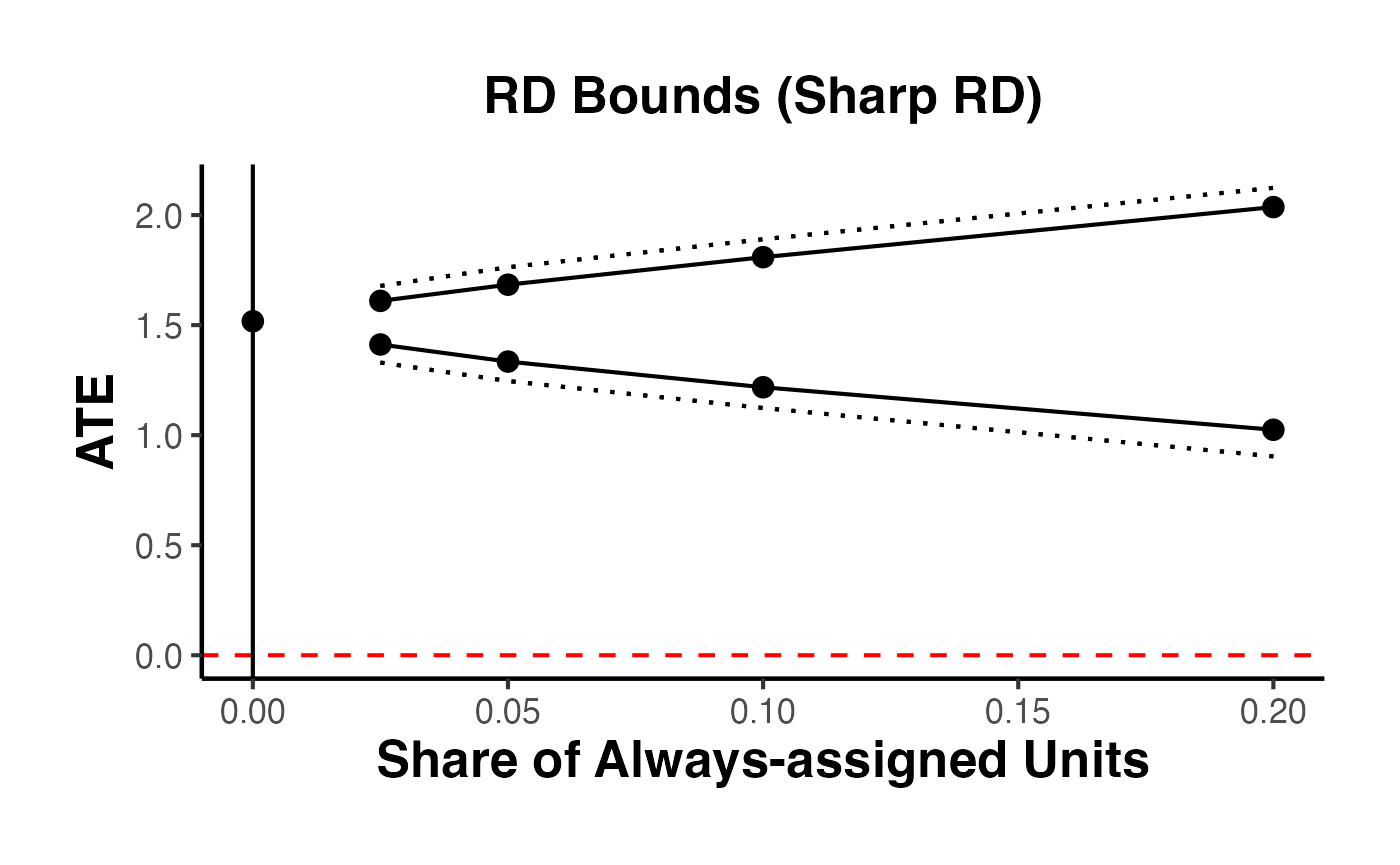

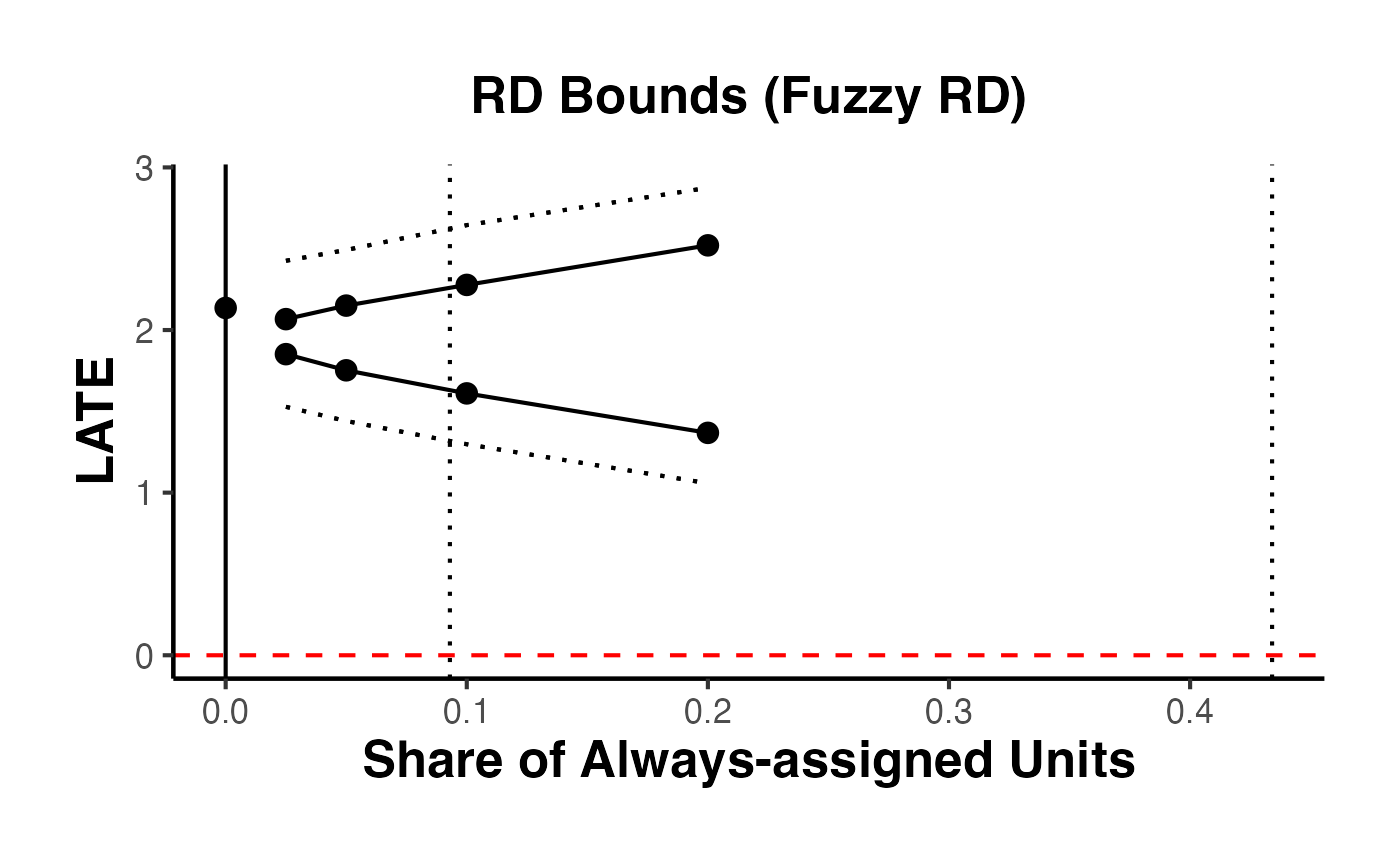

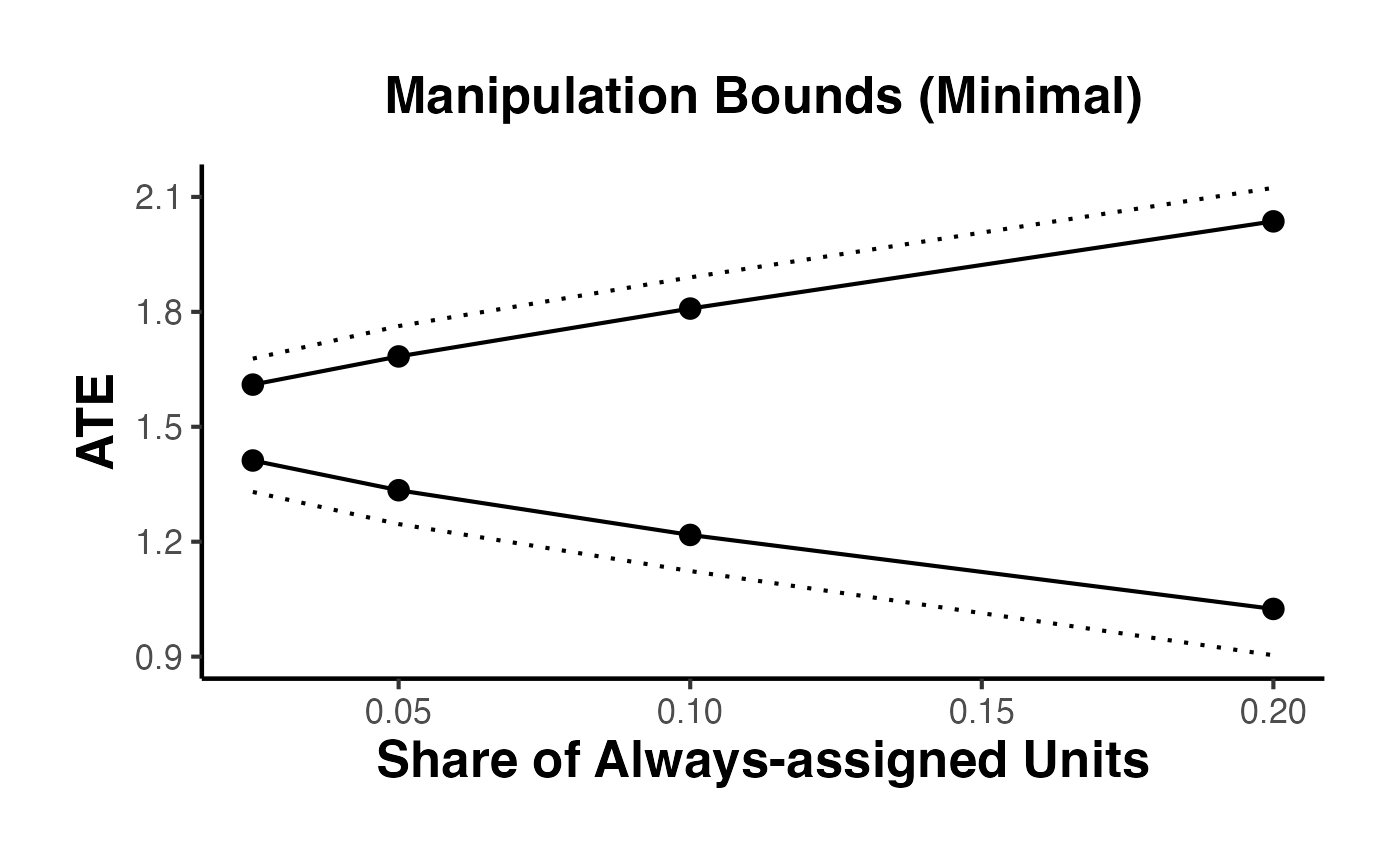

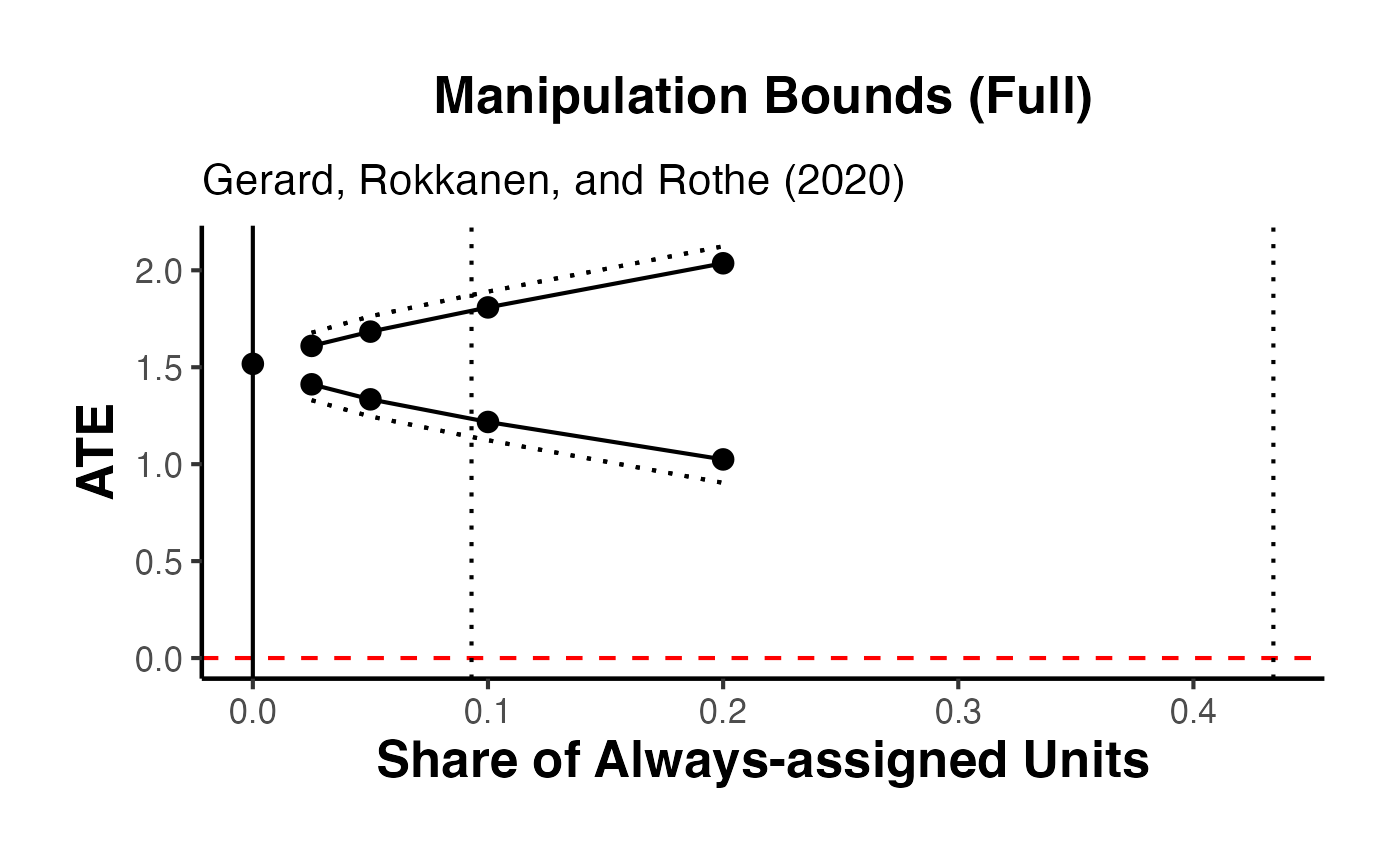

9. RD Bounds with the rdbounds Package and causalverse

When manipulation of the running variable is present or cannot be ruled out, standard RD estimates may be biased. Gerard, Rokkanen, and Rothe (2020) develop a framework for bounding the treatment effect under varying assumptions about the share of “always-assigned” units – those who manipulate their running variable to receive treatment.

The rdbounds package computes these bounds, and causalverse::plot_rd_aa_share() provides a clean visualization.

9.1 Conceptual Framework

The key idea is:

- Let denote the share of always-assigned (manipulating) units

- For each assumed value of , the treatment effect is partially identified (bounded)

- As increases (more manipulation assumed), the bounds widen

- At (no manipulation), we recover the standard RD point estimate

The data itself provides an estimate of the manipulation share (from the density discontinuity).

9.2 Full rdbounds Workflow for Sharp RD

library(rdbounds)

# Step 1: Generate sample data from rdbounds

set.seed(1)

rd_bound_data <- rdbounds::rdbounds_sampledata(10000, covs = FALSE)

#> [1] "True tau: 0.117999815082062"

#> [1] "True treatment effect on potentially-assigned: 2"

#> [1] "True treatment effect on right side of cutoff: 2.35399944524618"

# Step 2: Estimate bounds

rdbounds_est <- rdbounds::rdbounds(

y = rd_bound_data$y,

x = rd_bound_data$x,

treatment = rd_bound_data$treatment,

c = 0,

discrete_x = FALSE,

discrete_y = FALSE,

bwsx = c(.2, .5),

bwy = 1,

kernel = "epanechnikov",

orders = 1,

evaluation_ys = seq(from = 0, to = 15, by = 1),

refinement_A = TRUE,

refinement_B = TRUE,

right_effects = TRUE,

potential_taus = c(.025, .05, .1, .2),

yextremes = c(0, 15),

num_bootstraps = 5

)

#> [1] "The proportion of always-assigned units just to the right of the cutoff is estimated to be 0"

#> [1] "2026-03-22 00:21:12.434436 Estimating CDFs for point estimates"

#> [1] "2026-03-22 00:21:12.771561 .....Estimating CDFs for units just to the right of the cutoff"

#> [1] "2026-03-22 00:21:15.910835 Estimating CDFs with nudged tau (tau_star)"

#> [1] "2026-03-22 00:21:15.966282 .....Estimating CDFs for units just to the right of the cutoff"

#> [1] "2026-03-22 00:21:19.602653 Beginning parallelized output by bootstrap.."

#> [1] "2026-03-22 00:21:24.459971 Estimating CDFs with fixed tau value of: 0.025"

#> [1] "2026-03-22 00:21:24.528206 Estimating CDFs with fixed tau value of: 0.05"

#> [1] "2026-03-22 00:21:24.698436 Estimating CDFs with fixed tau value of: 0.1"