3. Synthetic Difference-in-Differences

Mike Nguyen

2026-03-22

b_synthdid.Rmd

library(causalverse)

knitr::opts_chunk$set(

warning = FALSE,

message = FALSE,cache = TRUE)Theory and Background

Overview

Synthetic Difference-in-Differences (SynthDID) is a causal inference method introduced by Arkhangelsky et al. (2021) that combines the strengths of two well-established approaches: Synthetic Control (SC) and Difference-in-Differences (DID). The key insight is that by combining unit weights (from SC) with time weights (a new addition), SynthDID achieves double robustness properties and improved efficiency over either method alone.

Mathematical Formulation

Consider a balanced panel with units observed over time periods. Among these, units are controls (never treated) and units receive treatment starting at period . Let denote the observed outcome for unit at time .

The SynthDID estimator solves the following optimization problem:

where:

- is the treatment indicator (equals 1 if unit is treated at time , 0 otherwise),

- are unit weights that make the weighted average of control units approximate the treated units in the pre-treatment period,

- are time weights that make the weighted average of pre-treatment periods approximate the post-treatment periods for control units,

- and are unit and time fixed effects.

In practice, the estimator can be written in a more compact “double-differencing” form:

where is the average outcome of treated units in the post-treatment period, and the -superscript denotes a time-weighted average over pre-treatment periods.

Unit Weights ()

The unit weights are chosen to minimize the discrepancy between the weighted combination of control units and the treated units in the pre-treatment period. Specifically, they solve:

where is the simplex (non-negative weights that sum to one), and is a regularization parameter. The intercept is a key difference from standard SC—it allows for a level shift, which connects SynthDID to DID.

Time Weights ()

Time weights are unique to SynthDID and not present in standard SC or DID. They solve:

These time weights focus the comparison on pre-treatment periods that are most informative for predicting post-treatment outcomes among control units. Periods that are more similar to post-treatment periods receive higher weight.

Comparison with SC and DID

The SynthDID framework nests both SC and DID as special cases:

| Method | Unit Weights () | Time Weights () | Intercept |

|---|---|---|---|

| DID | Uniform () | Uniform () | Yes (implicit) |

| SC | Optimized (no intercept) | Zero (only uses levels) | No |

| SynthDID | Optimized (with intercept) | Optimized | Yes |

- DID assumes parallel trends and weights all control units and pre-treatment periods equally. It is valid when the parallel trends assumption holds but can be biased otherwise.

- SC constructs a synthetic control by weighting donor units to match the treated unit’s pre-treatment trajectory in levels. It does not difference, so it relies on a good level match.

- SynthDID combines both approaches. By using optimized unit weights (like SC) and optimized time weights plus an intercept (like DID), it achieves a form of “double robustness”: it remains consistent if either the SC-style level match or the DID-style parallel trends assumption approximately holds.

Assumptions

The formal assumptions underlying SynthDID include:

No anticipation: Treatment has no effect before the treatment period. Units do not change behavior in anticipation of treatment.

SUTVA (Stable Unit Treatment Value Assumption): The potential outcomes for any unit are unaffected by the treatment assignment of other units (no spillovers), and there is only one version of treatment.

Approximate factor model: Outcomes follow an approximate factor model structure: where are time fixed effects, are unit-specific factor loadings, are common factors, and are idiosyncratic errors.

Regularity conditions: The number of pre-treatment periods and control units must grow, and the error terms must satisfy certain mixing and moment conditions for asymptotic inference.

Overlap in pre-treatment outcomes: The pre-treatment trajectory of treated units must lie approximately within the convex hull of control units’ trajectories (similar to SC).

A key advantage of SynthDID over pure DID is that it relaxes the strict parallel trends assumption. Instead, it requires only that the weighted combination of control units (after double-differencing) approximates the treated units’ counterfactual trajectory.

Synthetic DID

Block Adoption (Assignment)

This section contains the code from the synthdid package (Arkhangelsky et al. 2021), which can handle block adoption (i.e., when all units are treated at the same time).

library(synthdid)

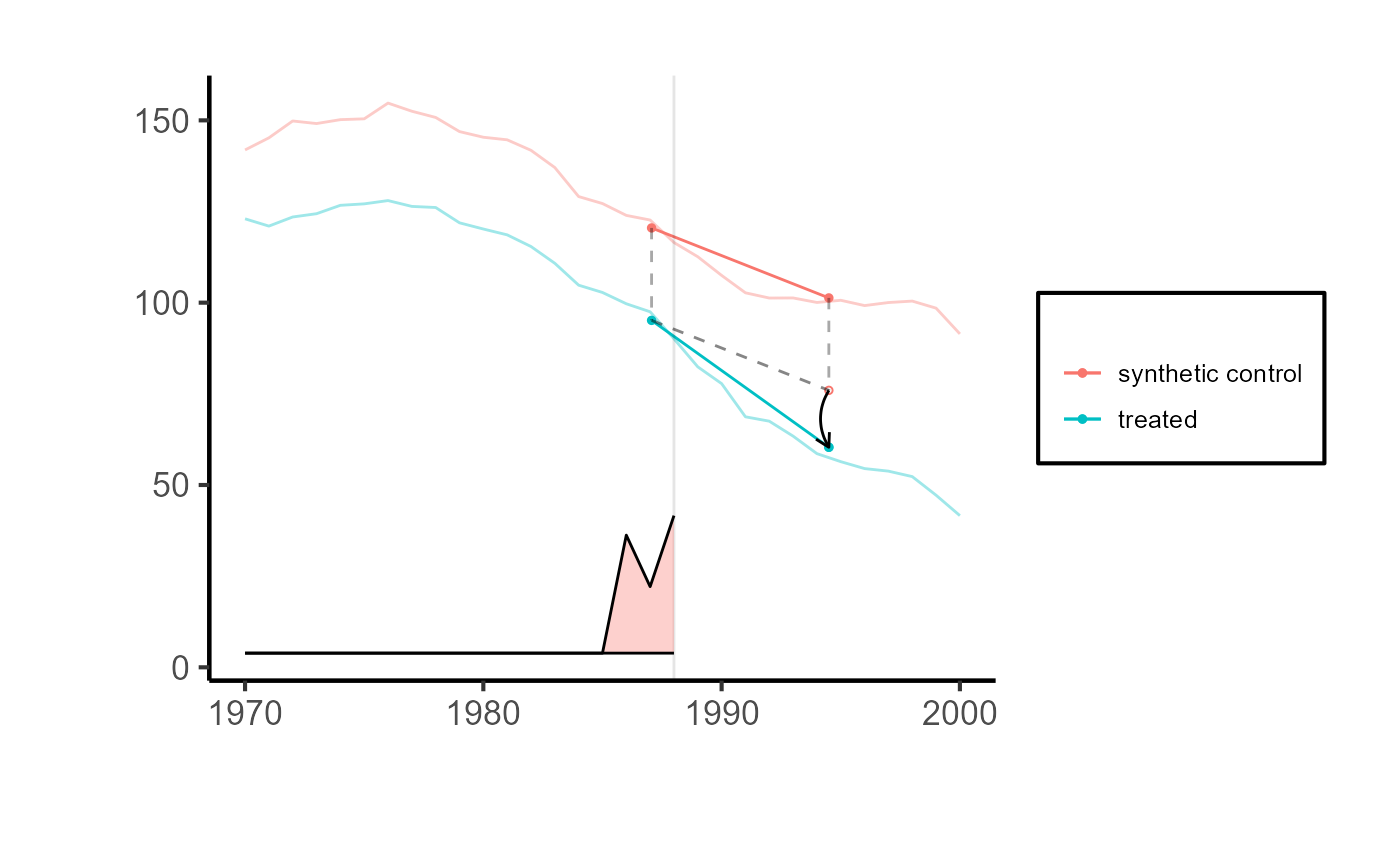

# Estimate the effect of California Proposition 99 on cigarette consumption

data('california_prop99')

setup = synthdid::panel.matrices(synthdid::california_prop99)

tau.hat = synthdid::synthdid_estimate(setup$Y, setup$N0, setup$T0)

print(summary(tau.hat))

#> $estimate

#> [1] -15.60383

#>

#> $se

#> [,1]

#> [1,] NA

#>

#> $controls

#> estimate 1

#> Nevada 0.124

#> New Hampshire 0.105

#> Connecticut 0.078

#> Delaware 0.070

#> Colorado 0.058

#> Illinois 0.053

#> Nebraska 0.048

#> Montana 0.045

#> Utah 0.042

#> New Mexico 0.041

#> Minnesota 0.039

#> Wisconsin 0.037

#> West Virginia 0.034

#> North Carolina 0.033

#> Idaho 0.031

#> Ohio 0.031

#> Maine 0.028

#> Iowa 0.026

#>

#> $periods

#> estimate 1

#> 1988 0.427

#> 1986 0.366

#> 1987 0.206

#>

#> $dimensions

#> N1 N0 N0.effective T1 T0 T0.effective

#> 1.000 38.000 16.388 12.000 19.000 2.783

# for only one treated unit, we can only use the placebo method

# se = sqrt(vcov(tau.hat, method='placebo'))

# sqrt(vcov(tau.hat, method='bootstrap'))

# sqrt(vcov(tau.hat, method='jackknife'))

# sprintf('point estimate: %1.2f', tau.hat)

# sprintf('95%% CI (%1.2f, %1.2f)', tau.hat - 1.96 * se, tau.hat + 1.96 * se)

synthdid::synthdid_plot(tau.hat) + ama_theme()

Using fixest dataset

If you want to use the base_stagg dataset, you have to choose just 1 period, because function synthdid_estimate cannot handle staggered adoption.

df_stagg <- fixest::base_stagg |>

dplyr::filter(year_treated %in% c(10000, 8)) |>

dplyr::transmute(

id = as.factor(id),

year = as.integer(year),

y = as.double(y),

treated = as.integer(if_else(time_to_treatment >= 0, 1, 0))

)

head(df_stagg)

#> id year y treated

#> 1 84 1 -1.5837825 0

#> 2 81 1 1.7723144 0

#> 3 80 1 -0.7819864 0

#> 4 79 1 -4.7819034 0

#> 5 78 1 -8.6996118 0

#> 6 77 1 1.6714719 0

setup_stagg = df_stagg |>

# specify column names

synthdid::panel.matrices(

unit = "id",

time = "year",

outcome = "y",

treatment = "treated"

)

head(fixest::base_did)

#> y x1 id period post treat

#> 1 2.87530627 0.5365377 1 1 0 1

#> 2 1.86065272 -3.0431894 1 2 0 1

#> 3 0.09416524 5.5768439 1 3 0 1

#> 4 3.78147485 -2.8300587 1 4 0 1

#> 5 -2.55819959 -5.0443544 1 5 0 1

#> 6 1.72873240 -0.6363849 1 6 1 1

df = fixest::base_did |>

mutate(

id = as.factor(id),

period = as.integer(period),

y = as.double(y),

post = as.integer(post)

) |>

# correct treatment

mutate(treatment = as.integer(if_else(treat == 0, 0, post)))

setup <- df |>

synthdid::panel.matrices(

unit = "id",

time = "period",

outcome = "y",

treatment = "treatment"

)

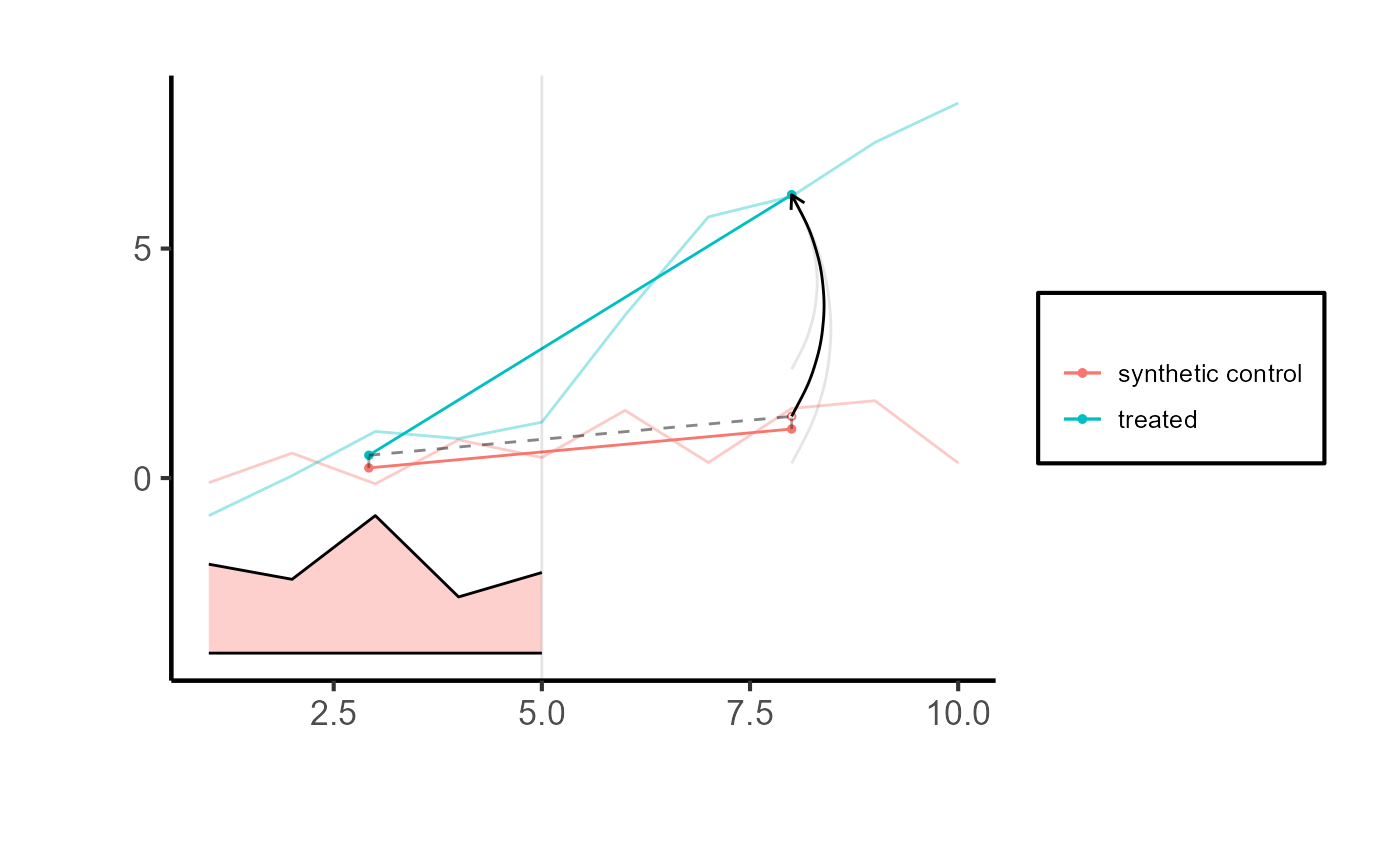

tau.hat = synthdid::synthdid_estimate(setup$Y, setup$N0, setup$T0)

tau.hat

#> synthdid: 4.828 +- 1.025. Effective N0/N0 = 52.4/53~1.0. Effective T0/T0 = 4.6/5~0.9. N1,T1 = 55,5.

# sqrt(vcov(tau.hat, method='bootstrap'))

sqrt(vcov(tau.hat, method='jackknife'))

#> [,1]

#> [1,] 0.5228316

# to use this SE method, must have more controls than treated units

# can try the base_stagg dataset from above

# sqrt(vcov(tau.hat, method='placebo'))

# Comparing counterfactual estimate to real-world observation

synthdid::synthdid_plot(tau.hat

# , se.method = "placebo"

) + ama_theme()

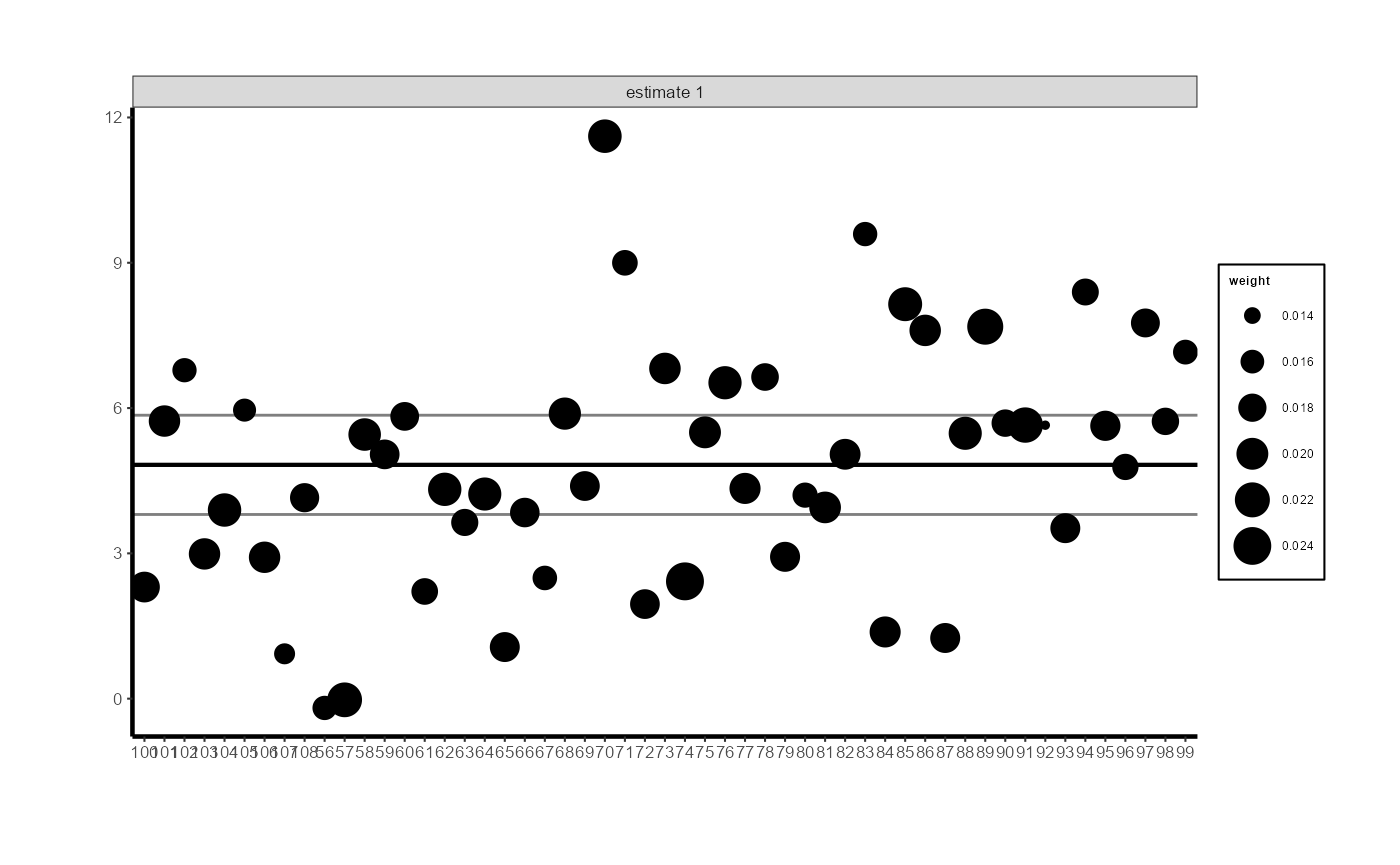

# Control unit contribution plot (black is the ATE, grey lines are CIs)

synthdid::synthdid_units_plot(tau.hat) +

ama_theme(base_size = 8) +

ggplot2::theme(axis.text.x = ggplot2::element_text(angle = 45, hjust = 1, size = 7))

# pre-treatment parallel trends

# synthdid::synthdid_plot(tau.hat, overlay = 1)

# synthdid::synthdid_plot(tau.hat, overlay = .8)

# synthdid::synthdid_placebo_plot(tau.hat)

# synthdid::synthdid_rmse_plot(tau.hat)Incorporating Covariates (Residualization)

The standard SynthDID estimator operates on a single outcome variable and does not directly accept covariates. However, time-varying covariates can be incorporated through a residualization approach: first regress the outcome on covariates, then apply SynthDID to the residuals.

Why Use Covariates?

Covariates can improve SynthDID estimation in several ways:

- Improved pre-treatment fit: By removing variation explained by observables, the residualized outcome may be easier to match across treated and control units.

- Reduced bias: If covariates are correlated with both treatment assignment and outcomes, including them reduces omitted variable bias.

- Increased precision: Removing covariate-driven variation reduces noise, leading to tighter standard errors.

The Residualization Approach

To incorporate time-varying elements into the unit and time weights of the synthdid, consider using residuals obtained from regressing observed outcomes against time-varying variables. This can be expressed as:

where is derived from the regression equation .

The steps are:

- Regress the outcome on covariates (pooling all units and time periods).

- Extract the residuals.

- Apply SynthDID to the residuals as the new outcome.

Single Covariate Example

setup2 <- df |>

dplyr::mutate(y_res = residuals(lm(y ~ x1))) |>

synthdid::panel.matrices(

unit = "id",

time = "period",

outcome = "y_res",

treatment = "treatment"

)

tau.hat2 = synthdid::synthdid_estimate(setup2$Y, setup2$N0, setup2$T0)

tau.hat2

#> synthdid: 4.850 +- 0.923. Effective N0/N0 = 52.5/53~1.0. Effective T0/T0 = 4.6/5~0.9. N1,T1 = 55,5.Multiple Covariates

When multiple covariates are available, simply include them in the regression:

# Example with multiple covariates

# base_did only has x1, so we demonstrate with x1 (extend similarly for x2, x3, etc.)

df_multi <- df |>

dplyr::mutate(y_res = residuals(lm(y ~ x1)))

setup_multi <- df_multi |>

synthdid::panel.matrices(

unit = "id",

time = "period",

outcome = "y_res",

treatment = "treatment"

)

tau_multi = synthdid::synthdid_estimate(setup_multi$Y, setup_multi$N0, setup_multi$T0)

tau_multi

#> synthdid: 4.850 +- 0.923. Effective N0/N0 = 52.5/53~1.0. Effective T0/T0 = 4.6/5~0.9. N1,T1 = 55,5.Important Considerations for Residualization

When using the residualization approach, keep the following in mind:

- Use pre-treatment data only for the regression (ideally). Running the regression on all data including post-treatment periods may introduce post-treatment bias if the treatment affects the covariates. A conservative approach is:

# Conservative approach: estimate beta from pre-treatment data only

beta_pre <- lm(y ~ x1, data = df |> dplyr::filter(post == 0))

df$y_res_conservative <- df$y - predict(beta_pre, newdata = df)

head(df[, c("id", "period", "y", "y_res_conservative")])

#> id period y y_res_conservative

#> 1 1 1 2.87530627 2.060713

#> 2 1 2 1.86065272 4.562316

#> 3 1 3 0.09416524 -5.671367

#> 4 1 4 3.78147485 6.273786

#> 5 1 5 -2.55819959 2.109147

#> 6 1 6 1.72873240 2.066265Do not include treatment-affected covariates: If a covariate is itself affected by the treatment (a “bad control” or mediator), including it will bias the treatment effect estimate.

Unit and time fixed effects: If the covariates include unit-specific or time-specific means, consider whether these overlap with the fixed effects already implicit in SynthDID.

Comparing with and without covariates: It is good practice to report results both with and without covariate adjustment. If results are similar, this provides additional confidence in robustness.

tau.sc = synthdid::sc_estimate(setup$Y, setup$N0, setup$T0)

tau.did = synthdid::did_estimate(setup$Y, setup$N0, setup$T0)

estimates = list(tau.did, tau.sc, tau.hat)

names(estimates) = c('Diff-in-Diff', 'Synthetic Control', 'Synthetic Diff-in-Diff')

print(unlist(estimates))

#> Diff-in-Diff Synthetic Control Synthetic Diff-in-Diff

#> 4.993390 4.475815 4.827761

synthdid::synthdid_plot(estimates) +

ama_theme()

Spaghetti plots

top.controls = synthdid::synthdid_controls(tau.hat)[1:10, , drop=FALSE]

synthdid::synthdid_plot(tau.hat, spaghetti.units=rownames(top.controls)) + ama_theme()

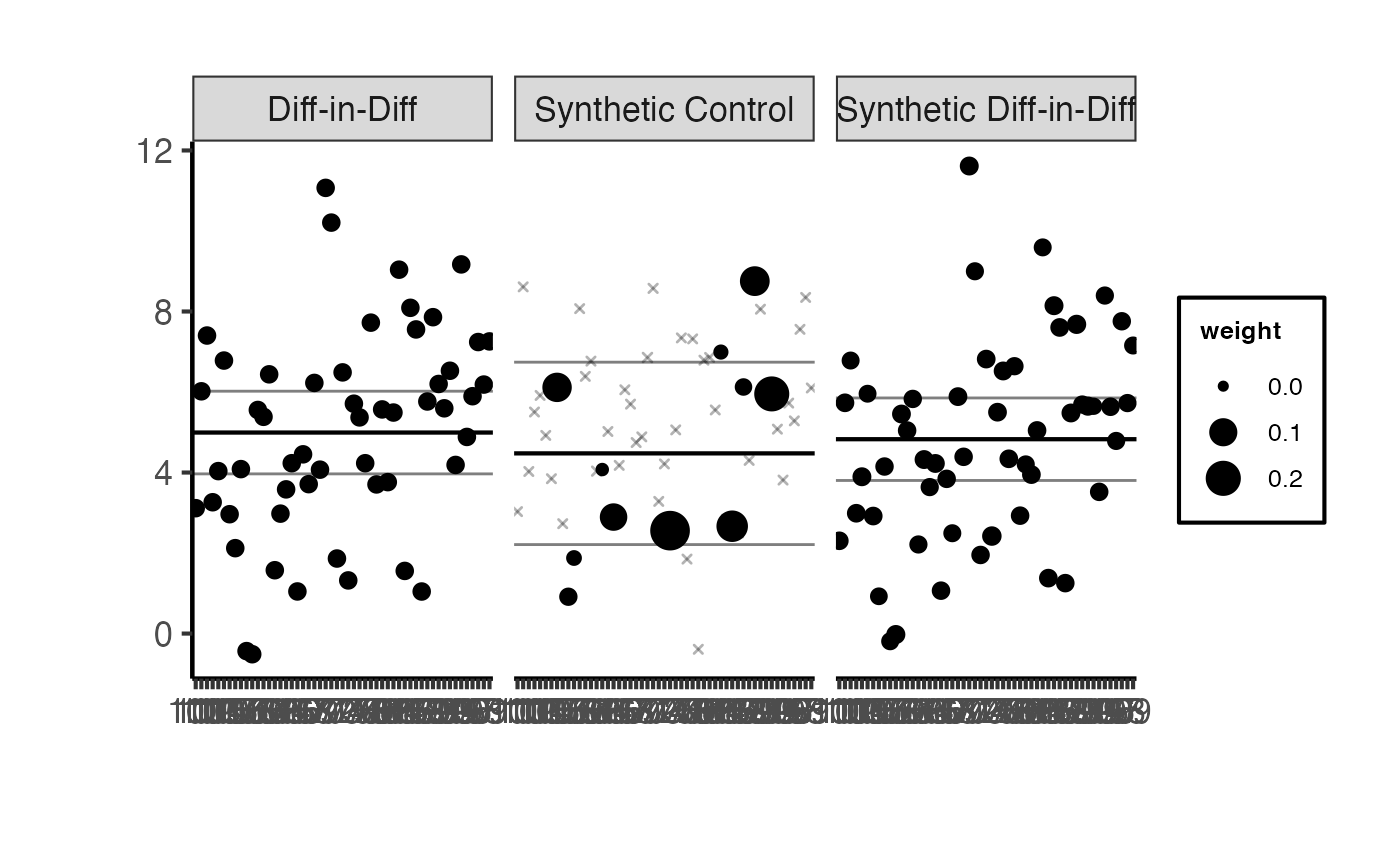

synthdid::synthdid_units_plot(estimates) +

ama_theme() +

ggplot2::theme(axis.text.x = ggplot2::element_text(angle = 45, hjust = 1, size = 7))

synthdid::synthdid_units_plot(tau.hat, units = rownames(top.controls)) +

ama_theme() +

ggplot2::theme(axis.text.x = ggplot2::element_text(angle = 45, hjust = 1, size = 8))

Diagnostics

Good practice requires inspecting the quality of the synthetic control fit before interpreting treatment effect estimates. This section demonstrates several diagnostic tools available in the synthdid package.

Inspecting Unit Weights

The synthdid_controls() function returns the unit weights assigned to each control unit. Examining these weights helps understand which control units contribute most to the synthetic control.

# Get all unit weights

unit_weights <- synthdid::synthdid_controls(tau.hat)

# Display top contributing control units

head(unit_weights, 10)

#> estimate 1

#> 74 0.02410098

#> 89 0.02270290

#> 91 0.02225061

#> 57 0.02183605

#> 85 0.02128654

#> 70 0.02102706

#> 104 0.02095126

#> 62 0.02090213

#> 76 0.02082322

#> 64 0.02076289

# How many controls have non-negligible weight?

cat("Number of controls with weight > 0.01:",

sum(unit_weights > 0.01), "\n")

#> Number of controls with weight > 0.01: 47

cat("Total weight on top 5 controls:",

sum(sort(unit_weights, decreasing = TRUE)[1:5]), "\n")

#> Total weight on top 5 controls: 0.1121771A well-behaved SynthDID estimate typically places non-trivial weight on a relatively small number of control units, producing a sparse and interpretable synthetic control.

Pre-Treatment Fit Assessment

Evaluating how well the synthetic control matches the treated unit(s) in the pre-treatment period is essential. A poor pre-treatment fit suggests the synthetic control may not provide a reliable counterfactual.

# Overlay plot: shows treated vs synthetic in pre-treatment

# overlay = 1 means full overlap in display

synthdid::synthdid_plot(tau.hat, overlay = 1) +

ama_theme() +

ggplot2::ggtitle("Pre-treatment Fit: Treated vs. Synthetic Control")

The overlay parameter controls the degree of visual overlap between treated and synthetic trajectories. A value of 1 maximizes overlap, making it easier to assess pre-treatment fit. Values between 0 and 1 provide partial overlay.

# Partial overlay for alternative visualization

synthdid::synthdid_plot(tau.hat, overlay = 0.8) +

ama_theme() +

ggplot2::ggtitle("Partial Overlay (0.8)")

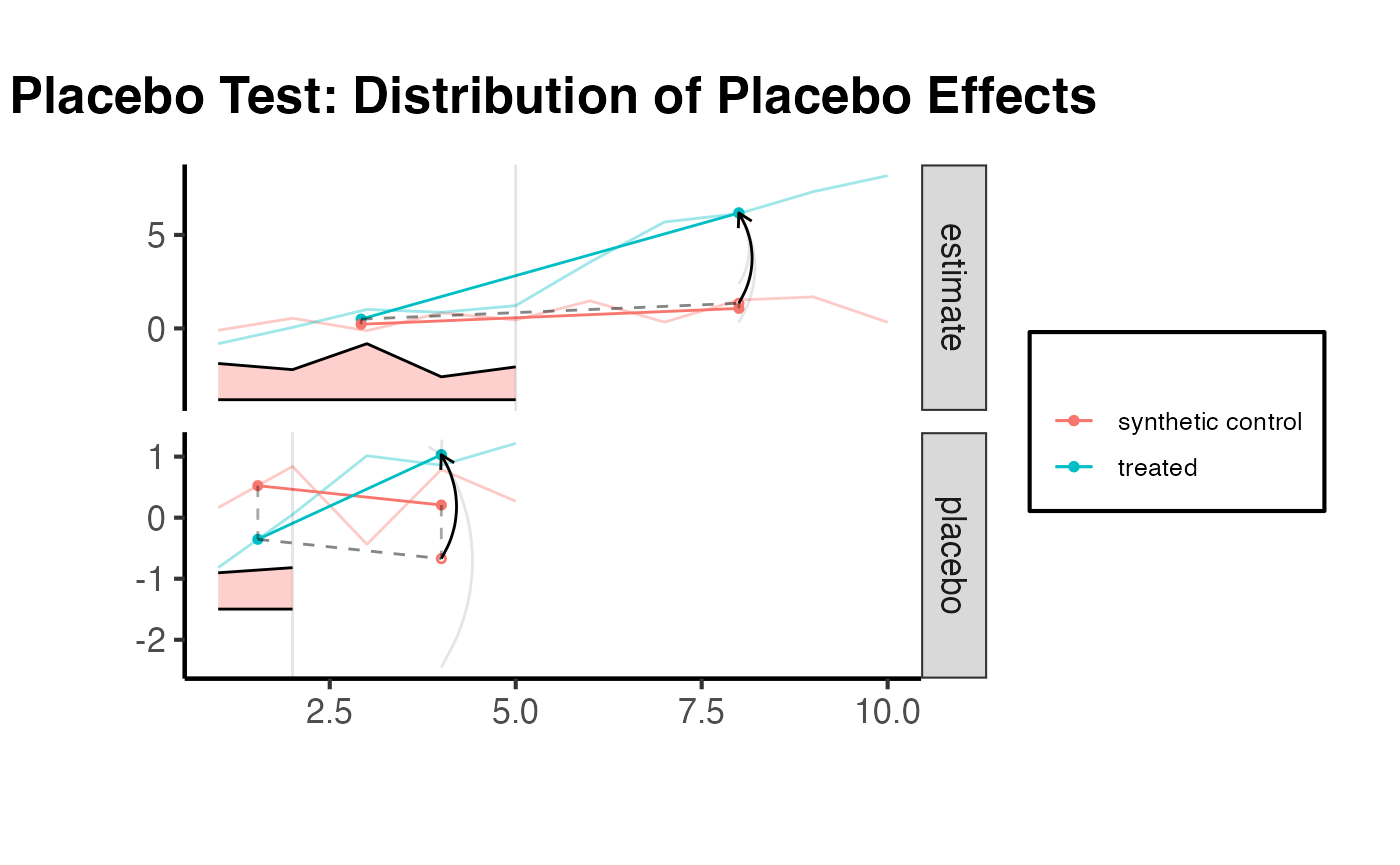

Placebo and RMSE Diagnostics

The synthdid_placebo_plot() function performs placebo tests by iteratively treating each control unit as if it were treated. If the method is working well, the placebo effects should be centered around zero, and the true treatment effect should stand out.

synthdid::synthdid_placebo_plot(tau.hat) +

ama_theme() +

ggplot2::ggtitle("Placebo Test: Distribution of Placebo Effects")

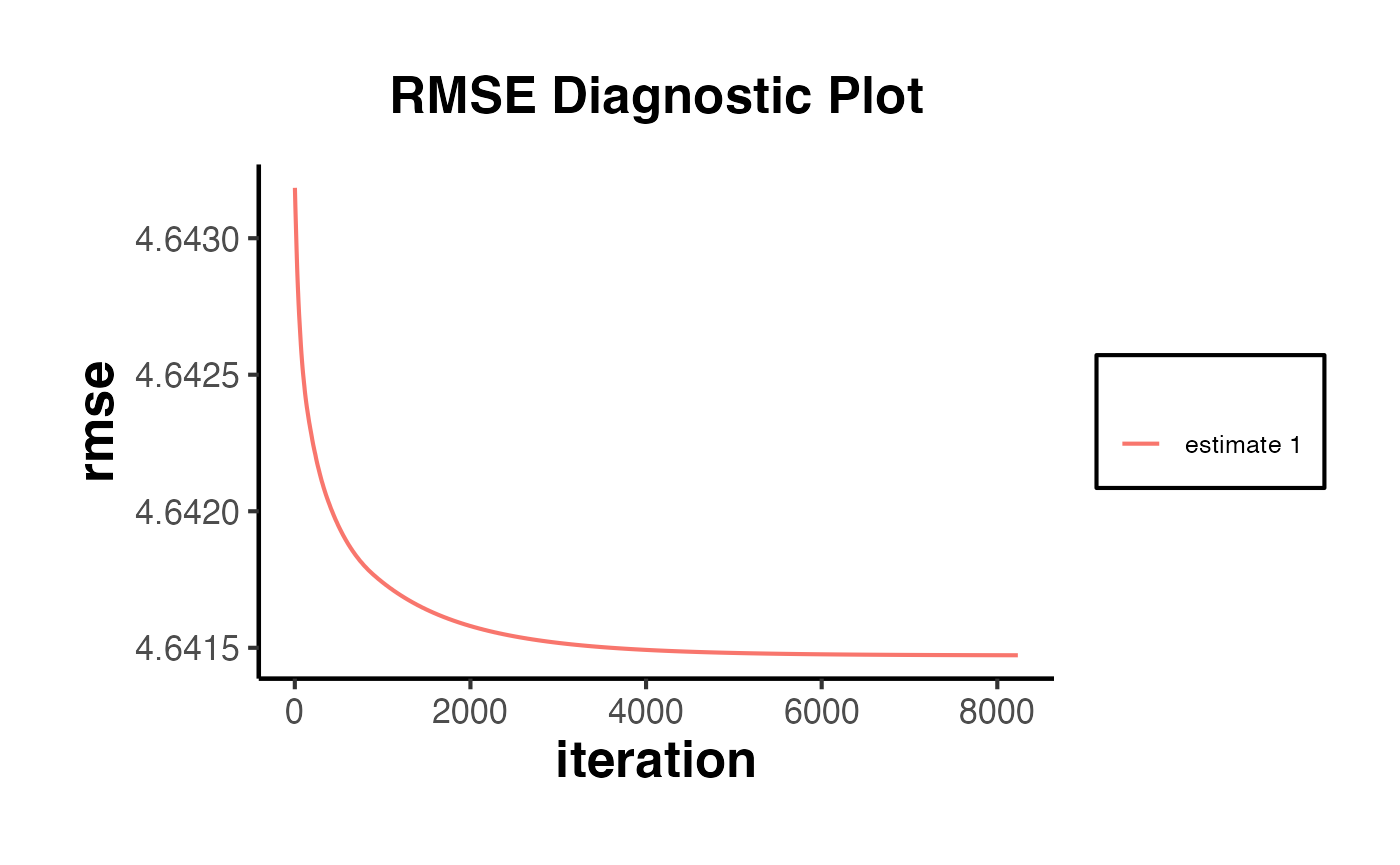

The synthdid_rmse_plot() function displays the root mean squared error of the pre-treatment fit for the treated unit relative to placebo fits for control units.

synthdid::synthdid_rmse_plot(tau.hat) +

ama_theme() +

ggplot2::ggtitle("RMSE Diagnostic Plot")

If the treated unit’s pre-treatment RMSE is substantially larger than the placebo units’ RMSE values, this indicates a poor pre-treatment fit and the results should be interpreted with caution.

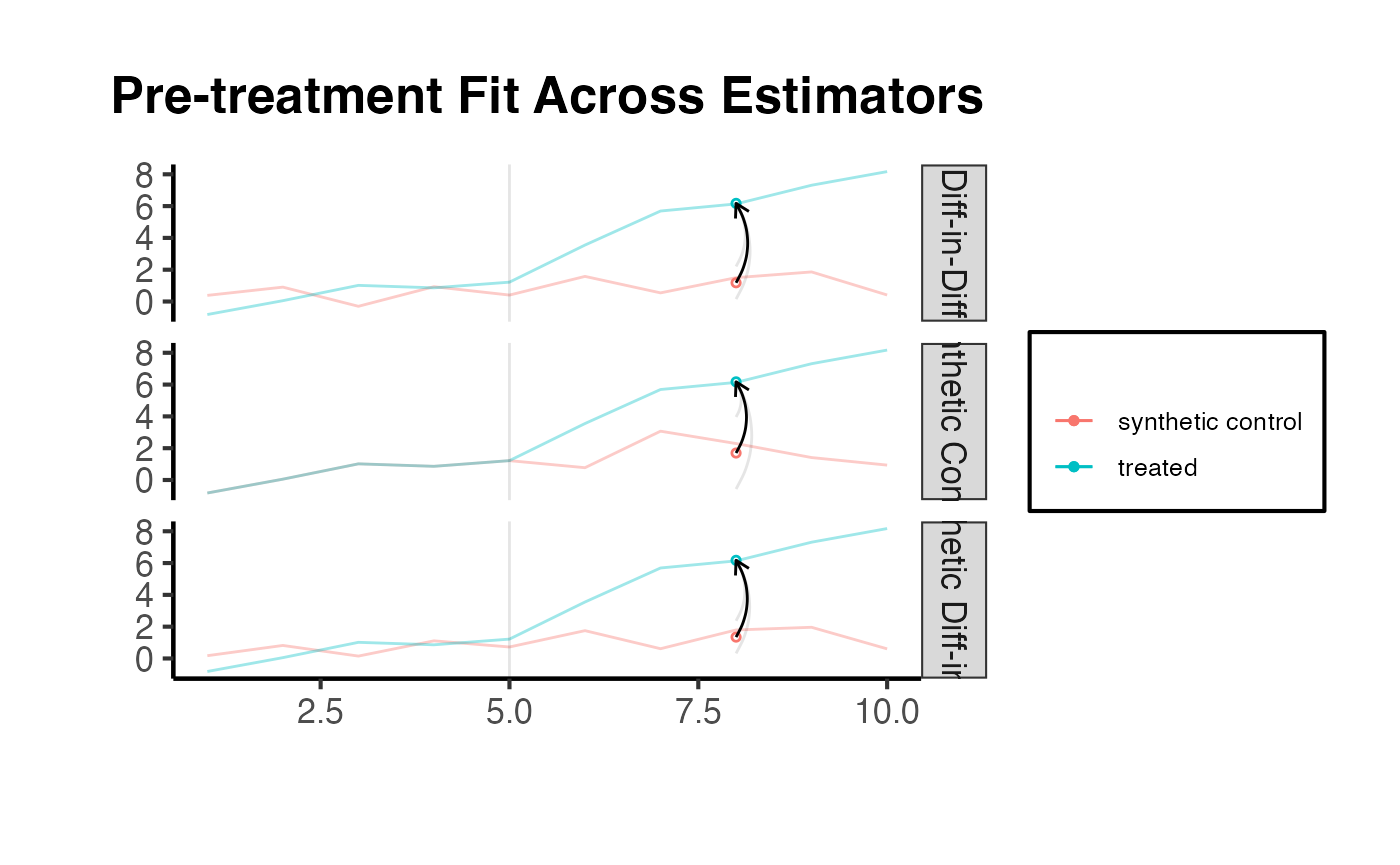

Comparing Diagnostics Across Estimators

It can also be informative to compare diagnostic plots across DID, SC, and SynthDID to understand how each method constructs its counterfactual.

# Recall: estimates list was created earlier

# estimates = list(tau.did, tau.sc, tau.hat)

synthdid::synthdid_plot(estimates, overlay = 1) +

ama_theme() +

ggplot2::ggtitle("Pre-treatment Fit Across Estimators")

Standard Errors and Inference

Inference for SynthDID requires special methods because the weights are estimated from the data. The synthdid package provides three approaches for computing standard errors, each with different properties and applicability.

Placebo Method

The placebo method computes standard errors by iteratively reassigning treatment to control units. For each placebo iteration, a control unit is treated as if it received the treatment, and the SynthDID estimator is recomputed on the remaining controls. The standard deviation of these placebo estimates provides the standard error.

When to use: The placebo method is preferred when there is only one treated unit (as in the California Proposition 99 example). It requires that the number of control units exceeds the number of treated units ().

setup_ca = synthdid::panel.matrices(synthdid::california_prop99)

tau_ca = synthdid::synthdid_estimate(setup_ca$Y, setup_ca$N0, setup_ca$T0)

# Placebo SE (works with single treated unit)

se_placebo = sqrt(vcov(tau_ca, method = 'placebo'))

cat("Placebo SE:", round(se_placebo, 3), "\n")

#> Placebo SE: 10.988

cat("95% CI: (", round(tau_ca - 1.96 * se_placebo, 2), ",",

round(tau_ca + 1.96 * se_placebo, 2), ")\n")

#> 95% CI: ( -37.14 , 5.93 )The causalverse package provides synthdid_se_placebo(), which extends the placebo method to compute per-period standard errors, useful for examining how uncertainty varies across post-treatment periods.

# Per-period placebo SE using causalverse

se_per_placebo <- causalverse::synthdid_se_placebo(tau_ca, replications = 500)

print(se_per_placebo)

#> [1] 0.0000000 0.0000000 0.0000000 0.0000000 0.0000000 0.0000000

#> [7] 0.0000000 0.0000000 0.0000000 0.0000000 0.0000000 0.0000000

#> [13] 0.0000000 0.0000000 0.0000000 0.0000000 0.9753081 0.6982304

#> [19] 1.1515451 3.6942604 8.1181143 8.9459650 9.9971758 10.1986216

#> [25] 11.5297336 12.4457975 12.7908651 13.6441388 12.6979526 13.4358033

#> [31] 13.9389997 3.6942604 5.4551748 6.2090102 6.9327953 7.4077444

#> [37] 7.8063416 8.2300288 8.5699643 8.8712930 9.0372998 9.2210960

#> [43] 9.4440224Jackknife Method

The jackknife method estimates variance by systematically leaving out one unit at a time and recomputing the estimate. This is the leave-one-out approach commonly used in statistics.

When to use: The jackknife method requires more than one treated unit. When there is only one treated unit, causalverse::synthdid_se_jacknife() automatically falls back to the placebo method.

# Jackknife SE (requires multiple treated units)

# Using base_did which has multiple treated units

se_jack = sqrt(vcov(tau.hat, method = 'jackknife'))

cat("Jackknife SE:", round(se_jack, 3), "\n")

#> Jackknife SE: 0.523The causalverse package provides synthdid_se_jacknife(), which extends the standard jackknife to produce per-period standard errors:

# Per-period jackknife SE using causalverse

se_per_jack <- causalverse::synthdid_se_jacknife(tau.hat)

print(se_per_jack)

#> [1] 0.1693245 0.1566397 0.2301585 0.1284699 0.1639381 0.8780495 0.9689758

#> [8] 1.1750561 0.9525326 1.1468373 0.8780495 0.6799381 0.6388343 0.5293119

#> [15] 0.5228316Bootstrap Method

The bootstrap method resamples control units with replacement and recomputes the estimate for each bootstrap sample.

When to use: Bootstrap can be used as an alternative to the jackknife when there are multiple treated units. It is generally more computationally intensive but can be more robust in finite samples.

Comparison of SE Methods

The table below summarizes the properties of each inference method:

| Method | Min. Treated Units | Requirement | Computational Cost | Per-Period |

|---|---|---|---|---|

| Placebo | 1 | Moderate | Yes (via causalverse) |

|

| Jackknife | 2+ (fallback to placebo if 1) | None | Low | Yes (via causalverse) |

| Bootstrap | 2+ | None | High | No (standard) |

Constructing Confidence Intervals

Once standard errors are obtained, confidence intervals can be constructed in the usual way:

For a 95% confidence interval, .

cat("=== Comparison of SE Methods (base_did data) ===\n")

#> === Comparison of SE Methods (base_did data) ===

cat("Point estimate:", round(as.numeric(tau.hat), 3), "\n\n")

#> Point estimate: 4.828

cat("Jackknife SE:", round(se_jack, 3), "\n")

#> Jackknife SE: 0.523

cat(" 95% CI: (", round(as.numeric(tau.hat) - 1.96 * se_jack, 2), ",",

round(as.numeric(tau.hat) + 1.96 * se_jack, 2), ")\n\n")

#> 95% CI: ( 3.8 , 5.85 )

cat("Bootstrap SE:", round(se_boot, 3), "\n")

#> Bootstrap SE: 0.568

cat(" 95% CI: (", round(as.numeric(tau.hat) - 1.96 * se_boot, 2), ",",

round(as.numeric(tau.hat) + 1.96 * se_boot, 2), ")\n")

#> 95% CI: ( 3.71 , 5.94 )Multiple Estimators with panel_estimate()

The causalverse::panel_estimate() function provides a unified interface for computing treatment effect estimates using multiple methods simultaneously. This is valuable for assessing the robustness of findings across different identifying assumptions.

Available Estimators

The package supports the following estimators:

| Estimator | Function | Description |

|---|---|---|

synthdid |

synthdid::synthdid_estimate() |

Synthetic Difference-in-Differences: combines unit weights, time weights, and an intercept. |

did |

synthdid::did_estimate() |

Standard Difference-in-Differences: uniform weights on all control units and all pre-treatment periods. |

sc |

synthdid::sc_estimate() |

Synthetic Control: optimized unit weights with no intercept and no time weights. |

sc_ridge |

Custom wrapper | SC with ridge-regularized unit weights. Regularization parameter scales as . |

difp |

Custom wrapper | De-meaned SynthDID: uses uniform time weights () with SynthDID-style unit weights. |

difp_ridge |

Custom wrapper | DIFP with ridge regularization on unit weights. |

Running All Estimators

setup = synthdid::panel.matrices(synthdid::california_prop99)

# Run for specific estimators

results_selected = causalverse::panel_estimate(

setup,

selected_estimators = c("synthdid", "did", "sc")

)

results_selected

#> $synthdid

#> $synthdid$estimate

#> synthdid: -15.604 +- NA. Effective N0/N0 = 16.4/38~0.4. Effective T0/T0 = 2.8/19~0.1. N1,T1 = 1,12.

#>

#> $synthdid$std.error

#> [1] 10.05324

#>

#>

#> $did

#> $did$estimate

#> synthdid: -27.349 +- NA. Effective N0/N0 = 38.0/38~1.0. Effective T0/T0 = 19.0/19~1.0. N1,T1 = 1,12.

#>

#> $did$std.error

#> [1] 15.81479

#>

#>

#> $sc

#> $sc$estimate

#> synthdid: -19.620 +- NA. Effective N0/N0 = 3.8/38~0.1. Effective T0/T0 = Inf/19~Inf. N1,T1 = 1,12.

#>

#> $sc$std.error

#> [1] 11.16422

# to access more details in the estimate object

summary(results_selected$did$estimate)

#> $estimate

#> [1] -27.34911

#>

#> $se

#> [,1]

#> [1,] NA

#>

#> $controls

#> estimate 1

#> Wyoming 0.026

#> Wisconsin 0.026

#> West Virginia 0.026

#> Virginia 0.026

#> Vermont 0.026

#> Utah 0.026

#> Texas 0.026

#> Tennessee 0.026

#> South Dakota 0.026

#> South Carolina 0.026

#> Rhode Island 0.026

#> Pennsylvania 0.026

#> Oklahoma 0.026

#> Ohio 0.026

#> North Dakota 0.026

#> North Carolina 0.026

#> New Mexico 0.026

#> New Hampshire 0.026

#> Nevada 0.026

#> Nebraska 0.026

#> Montana 0.026

#> Missouri 0.026

#> Mississippi 0.026

#> Minnesota 0.026

#> Maine 0.026

#> Louisiana 0.026

#> Kentucky 0.026

#> Kansas 0.026

#> Iowa 0.026

#> Indiana 0.026

#> Illinois 0.026

#> Idaho 0.026

#> Georgia 0.026

#> Delaware 0.026

#> Connecticut 0.026

#>

#> $periods

#> estimate 1

#> 1988 0.053

#> 1987 0.053

#> 1986 0.053

#> 1985 0.053

#> 1984 0.053

#> 1983 0.053

#> 1982 0.053

#> 1981 0.053

#> 1980 0.053

#> 1979 0.053

#> 1978 0.053

#> 1977 0.053

#> 1976 0.053

#> 1975 0.053

#> 1974 0.053

#> 1973 0.053

#> 1972 0.053

#> 1971 0.053

#>

#> $dimensions

#> N1 N0 N0.effective T1 T0 T0.effective

#> 1 38 38 12 19 19

process_panel_estimate(results_selected)

#> Method Estimate SE

#> 1 SYNTHDID -15.60 10.05

#> 2 DID -27.35 15.81

#> 3 SC -19.62 11.16Now run all six estimators (excluding mc which requires the MCPanel package):

# Run all available estimators

results_all = causalverse::panel_estimate(

setup,

selected_estimators = c("synthdid", "did", "sc", "sc_ridge", "difp", "difp_ridge")

)

# Format results into a clean table

results_table <- causalverse::process_panel_estimate(results_all)

results_table

#> Method Estimate SE

#> 1 SYNTHDID -15.60 10.05

#> 2 DID -27.35 15.81

#> 3 SC -19.62 11.16

#> 4 SC_RIDGE -21.72 11.30

#> 5 DIFP -11.10 10.19

#> 6 DIFP_RIDGE -16.12 10.17Interpreting the Results

When comparing across estimators:

- If all methods agree, this provides strong evidence for the treatment effect, as it is robust to different identifying assumptions.

- If DID and SynthDID agree but SC differs, this suggests that level matching (SC) is difficult but the parallel trends assumption may hold.

- If SC and SynthDID agree but DID differs, this suggests that parallel trends may be violated, but there is a good level match among weighted control units.

- If methods disagree substantially, investigate the assumptions of each method carefully and rely on the one whose assumptions are most plausible in your context.

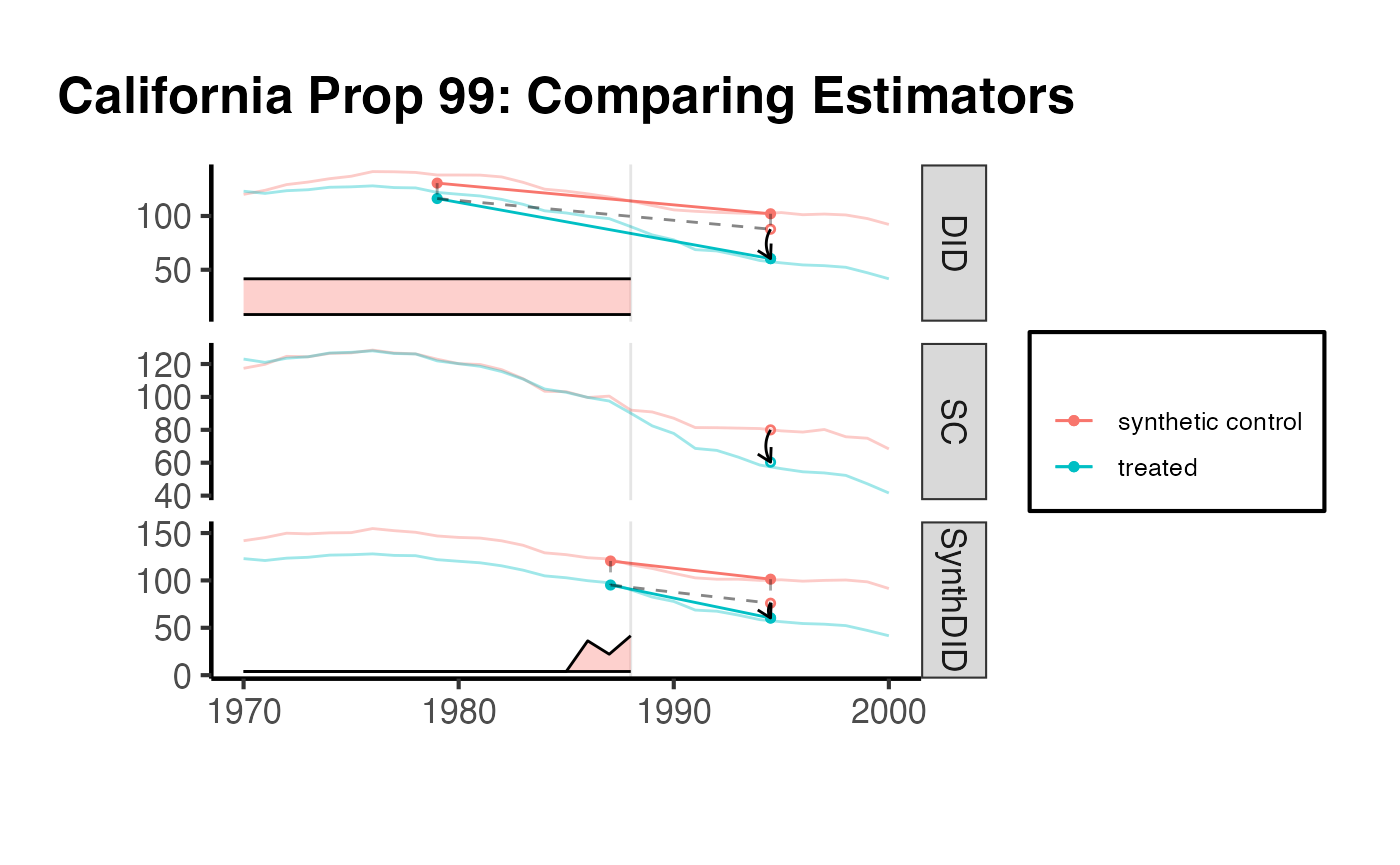

Visualizing Estimator Comparison

# Create estimates for plotting

tau.sc_ca = synthdid::sc_estimate(setup$Y, setup$N0, setup$T0)

tau.did_ca = synthdid::did_estimate(setup$Y, setup$N0, setup$T0)

tau.sdid_ca = synthdid::synthdid_estimate(setup$Y, setup$N0, setup$T0)

estimates_ca = list(tau.did_ca, tau.sc_ca, tau.sdid_ca)

names(estimates_ca) = c('DID', 'SC', 'SynthDID')

synthdid::synthdid_plot(estimates_ca) +

ama_theme() +

ggplot2::ggtitle("California Prop 99: Comparing Estimators")

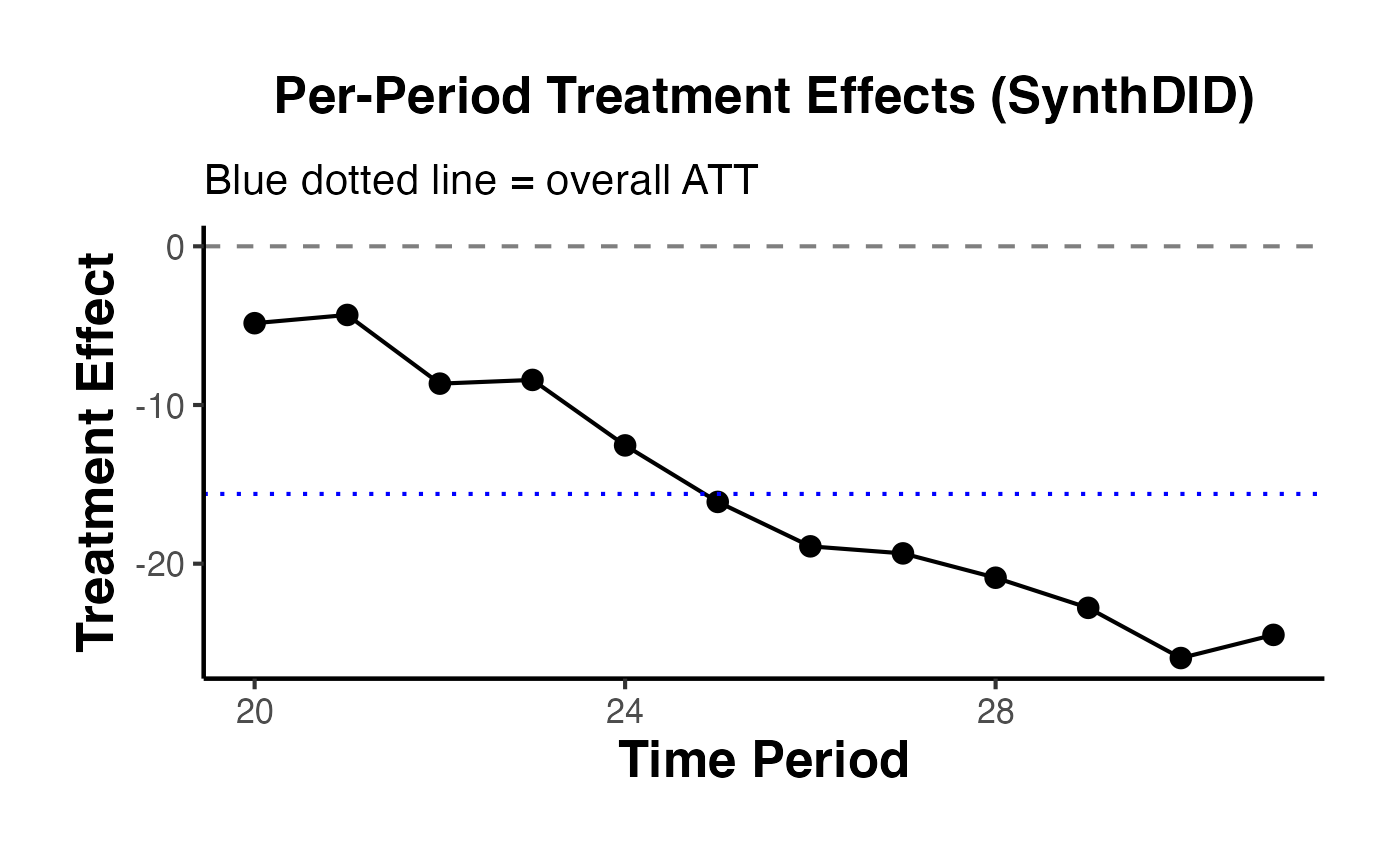

Per-Period Treatment Effects

While the overall ATT provides a single summary of the treatment effect, researchers are often interested in how the effect evolves over time. The causalverse::synthdid_est_per() function decomposes the SynthDID estimate into per-period treatment effects.

Decomposing Effects by Period

The per-period estimator computes, for each post-treatment period :

where is the synthetic control prediction for period using the estimated unit and time weights.

# Using the base_did setup from earlier

sdid_est <- synthdid::synthdid_estimate(setup$Y, setup$N0, setup$T0)

# Compute per-period effects

per_period <- causalverse::synthdid_est_per(

Y = setup$Y,

N0 = setup$N0,

T0 = setup$T0,

weights = attr(sdid_est, 'weights')

)

# View the structure

cat("Treatment effects by period:\n")

#> Treatment effects by period:

print(round(per_period$est, 3))

#> [1] 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.000

#> [10] 0.000 0.000 0.000 0.000 0.000 0.000 0.000 0.409 0.039

#> [19] -0.448 -4.845 -4.326 -8.654 -8.419 -12.545 -16.106 -18.906 -19.350

#> [28] -20.884 -22.782 -25.945 -24.485 -4.845 -4.585 -5.941 -6.561 -7.758

#> [37] -9.149 -10.543 -11.644 -12.671 -13.682 -14.796 -15.604

cat("\nNumber of treated units:", per_period$Ntr, "\n")

#>

#> Number of treated units: 1

cat("Number of control units:", per_period$Nco, "\n")

#> Number of control units: 38The output includes:

-

est: A vector of treatment effects for each period (pre-treatment periods should be near zero), followed by cumulative ATEs for post-treatment periods. -

y_obs: Observed (weighted) outcomes for treated units. -

y_pred: Predicted counterfactual outcomes for treated units. -

lambda.synth: The time weights used. -

NtrandNco: Counts of treated and control units.

Visualizing Per-Period Effects

# Extract post-treatment effects

T0_ca <- setup$T0

T_total <- ncol(setup$Y)

T1_ca <- T_total - T0_ca

# The first T_total elements are per-period, remaining are cumulative ATEs

per_period_effects <- per_period$est[1:T_total]

post_effects <- per_period_effects[(T0_ca + 1):T_total]

# Create a plot of per-period effects

effect_df <- data.frame(

Period = (T0_ca + 1):T_total,

Effect = post_effects

)

ggplot2::ggplot(effect_df, ggplot2::aes(x = Period, y = Effect)) +

ggplot2::geom_point(size = 3) +

ggplot2::geom_line() +

ggplot2::geom_hline(yintercept = 0, linetype = "dashed", color = "gray50") +

ggplot2::geom_hline(

yintercept = as.numeric(sdid_est),

linetype = "dotted",

color = "blue"

) +

ggplot2::labs(

x = "Time Period",

y = "Treatment Effect",

title = "Per-Period Treatment Effects (SynthDID)",

subtitle = "Blue dotted line = overall ATT"

) +

ama_theme()

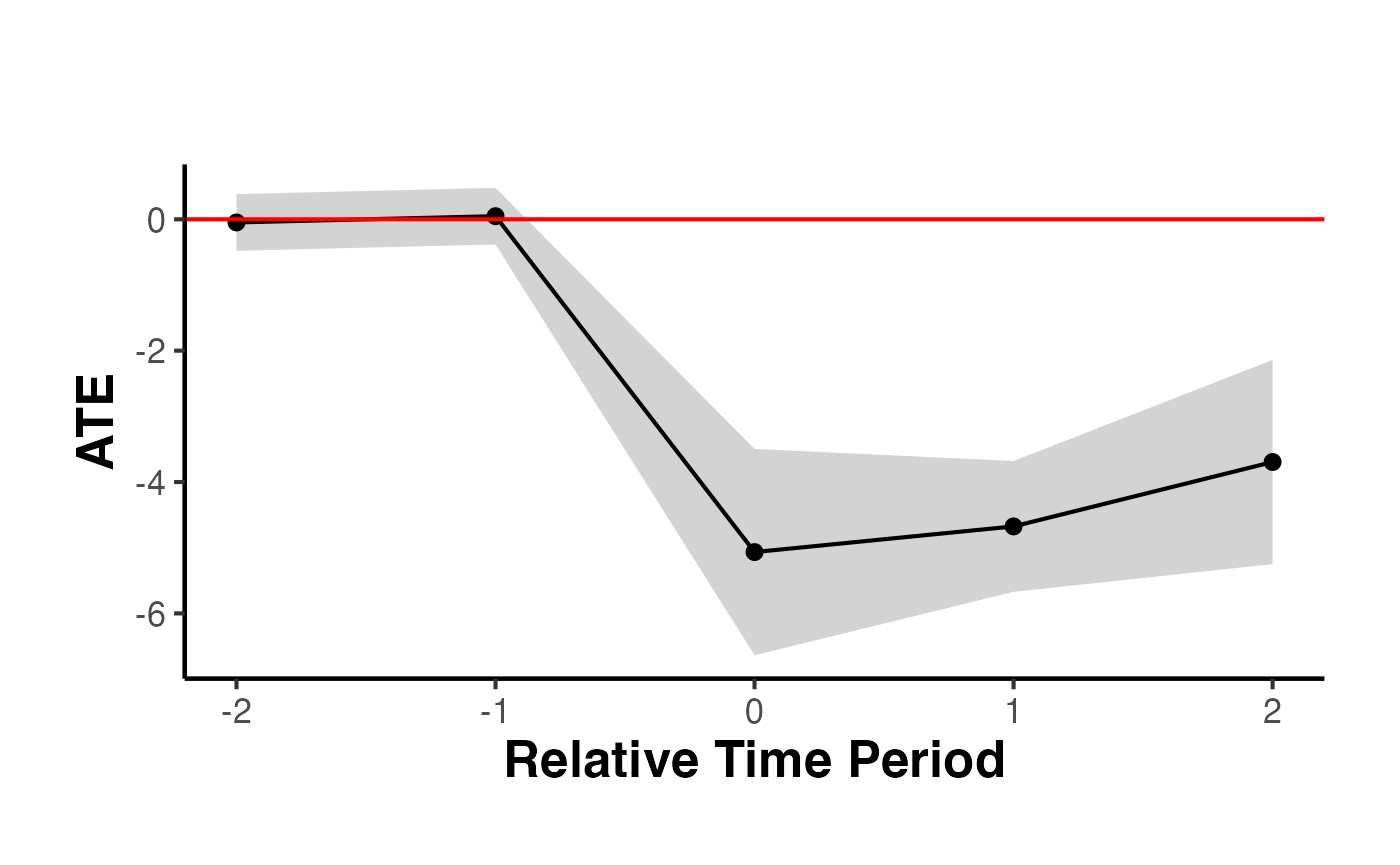

Staggered Adoptions

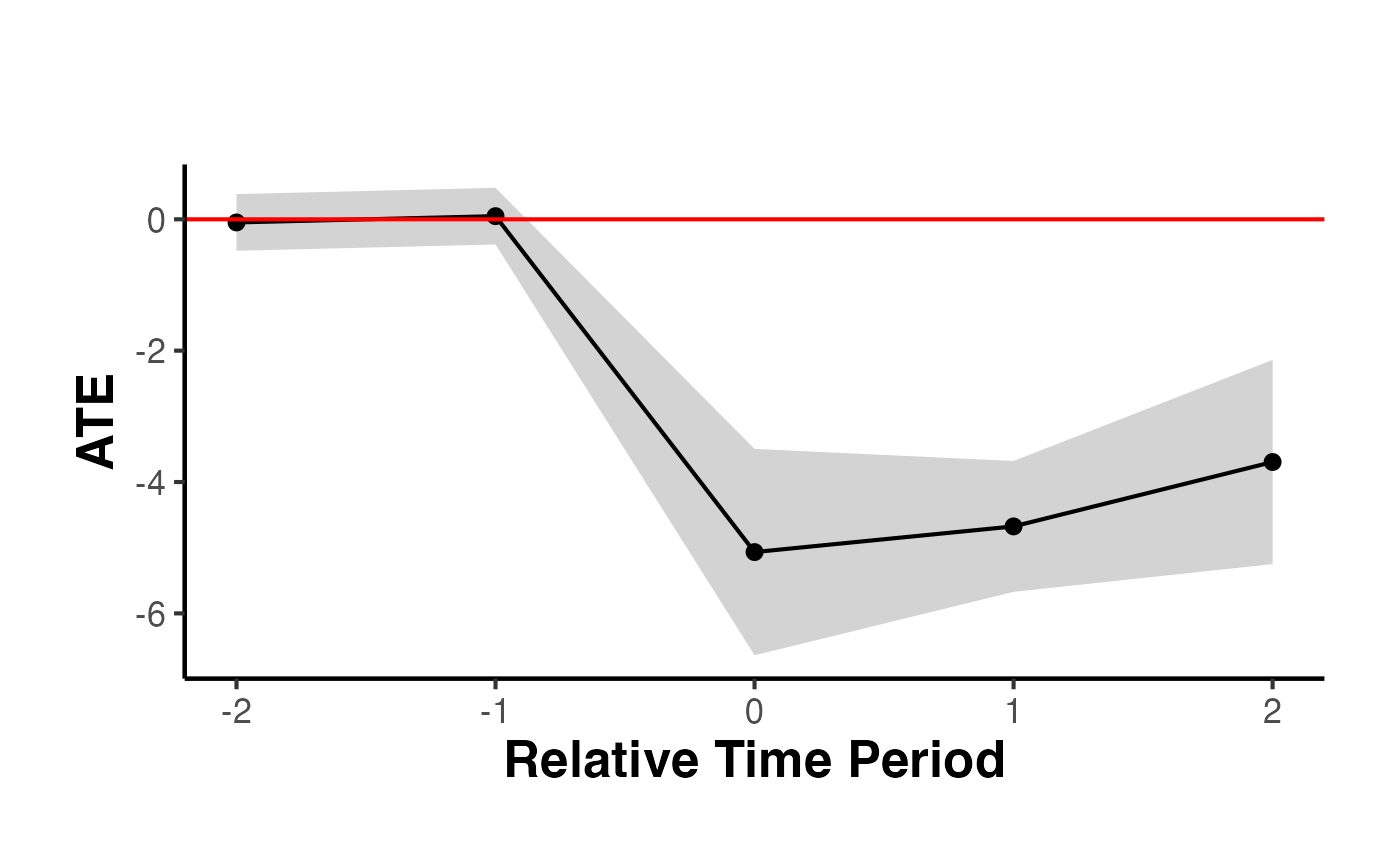

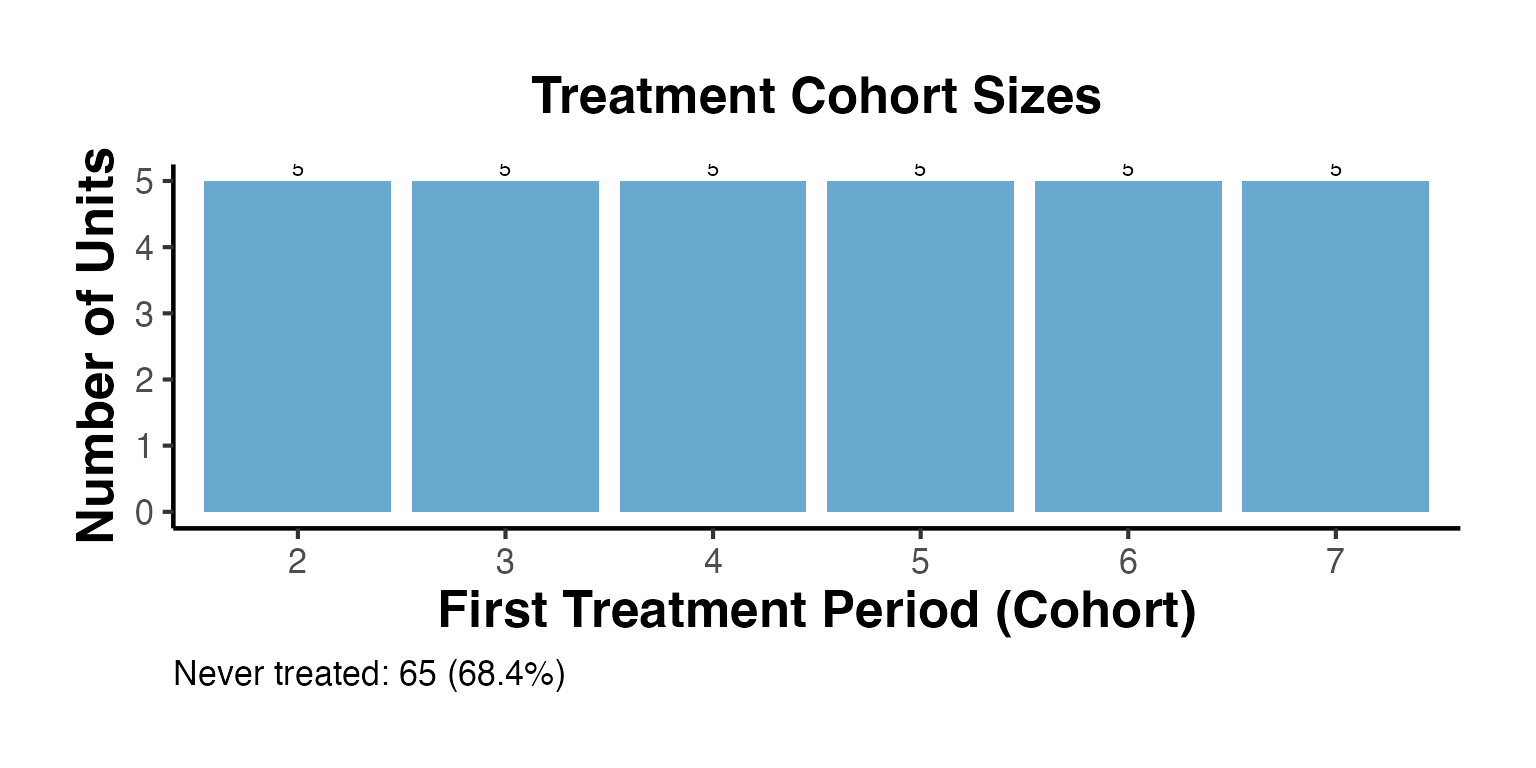

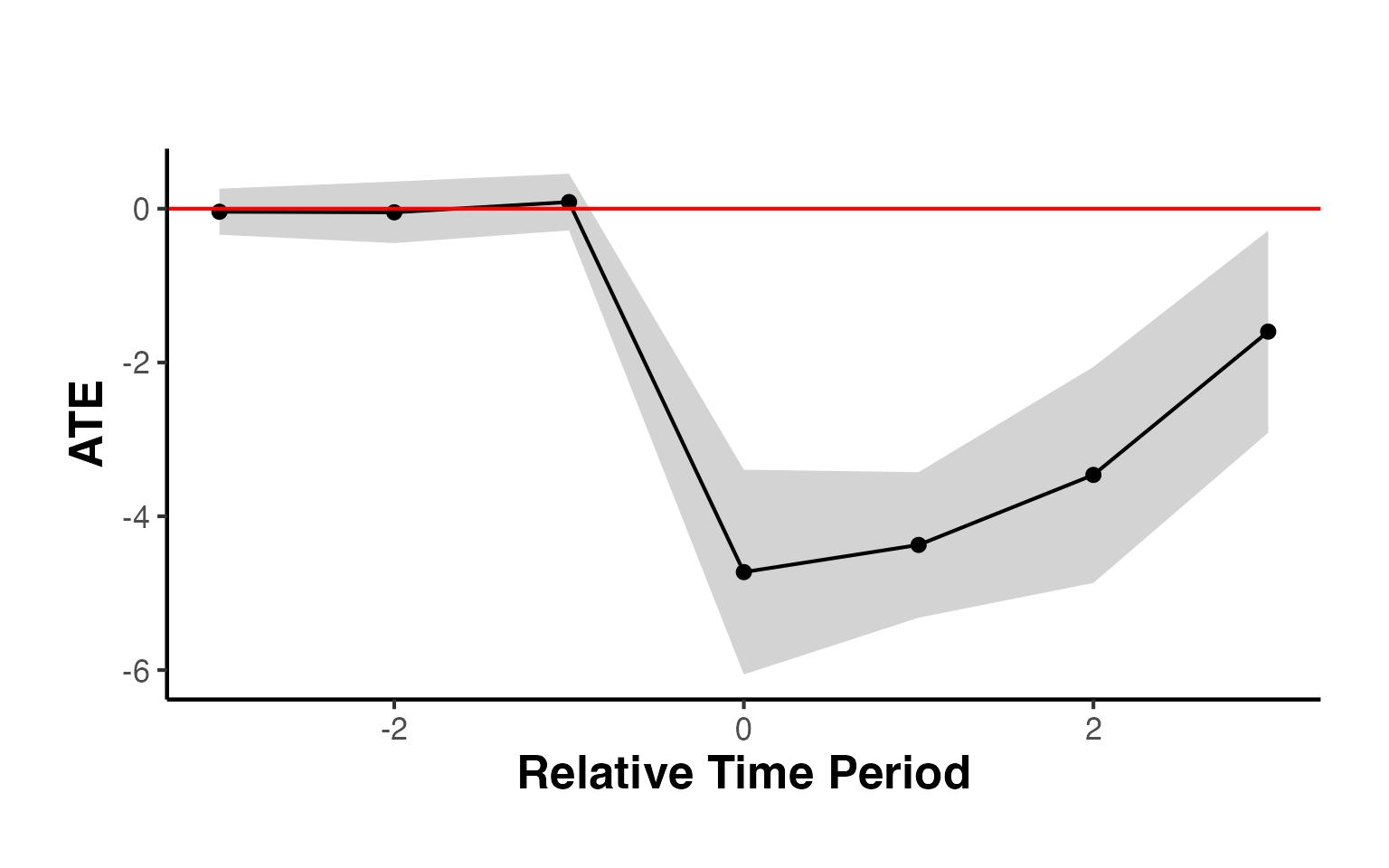

This vignette adapts the block assignment method from Arkhangelsky et al. (2021) for staggered adoption scenarios. Following the approach suggested by Ben-Michael, Feller, and Rothstein (2022), separate assignment matrices are created for each treatment period to fit the block assignment context. The synthdid estimator is then applied to each sub-sample. Finally, the Average Treatment Effect on the Treated (ATT) is calculated as a weighted average of all ATTs for all treated periods, with weights based on the proportion of treated units in each time period for each sub-sample.

df <- fixest::base_stagg |>

dplyr::mutate(treatvar = if_else(time_to_treatment >= 0, 1, 0)) |>

dplyr::mutate(treatvar = as.integer(if_else(year_treated > (5 + 2), 0, treatvar)))

est <- causalverse::synthdid_est_ate(

data = df,

adoption_cohorts = 5:7,

lags = 2,

leads = 2,

time_var = "year",

unit_id_var = "id",

treated_period_var = "year_treated",

treat_stat_var = "treatvar",

outcome_var = "y",

)

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 65

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 55

data.frame(

Period = names(est$TE_mean_w),

ATE = est$TE_mean_w,

SE = est$SE_mean_w

) |>

causalverse::nice_tab()

#> Period ATE SE

#> 1 -2 -0.05 0.22

#> 2 -1 0.05 0.22

#> 3 0 -5.07 0.80

#> 4 1 -4.68 0.51

#> 5 2 -3.70 0.79

#> 6 cumul.0 -5.07 0.80

#> 7 cumul.1 -4.87 0.55

#> 8 cumul.2 -4.48 0.53

causalverse::synthdid_plot_ate(est)

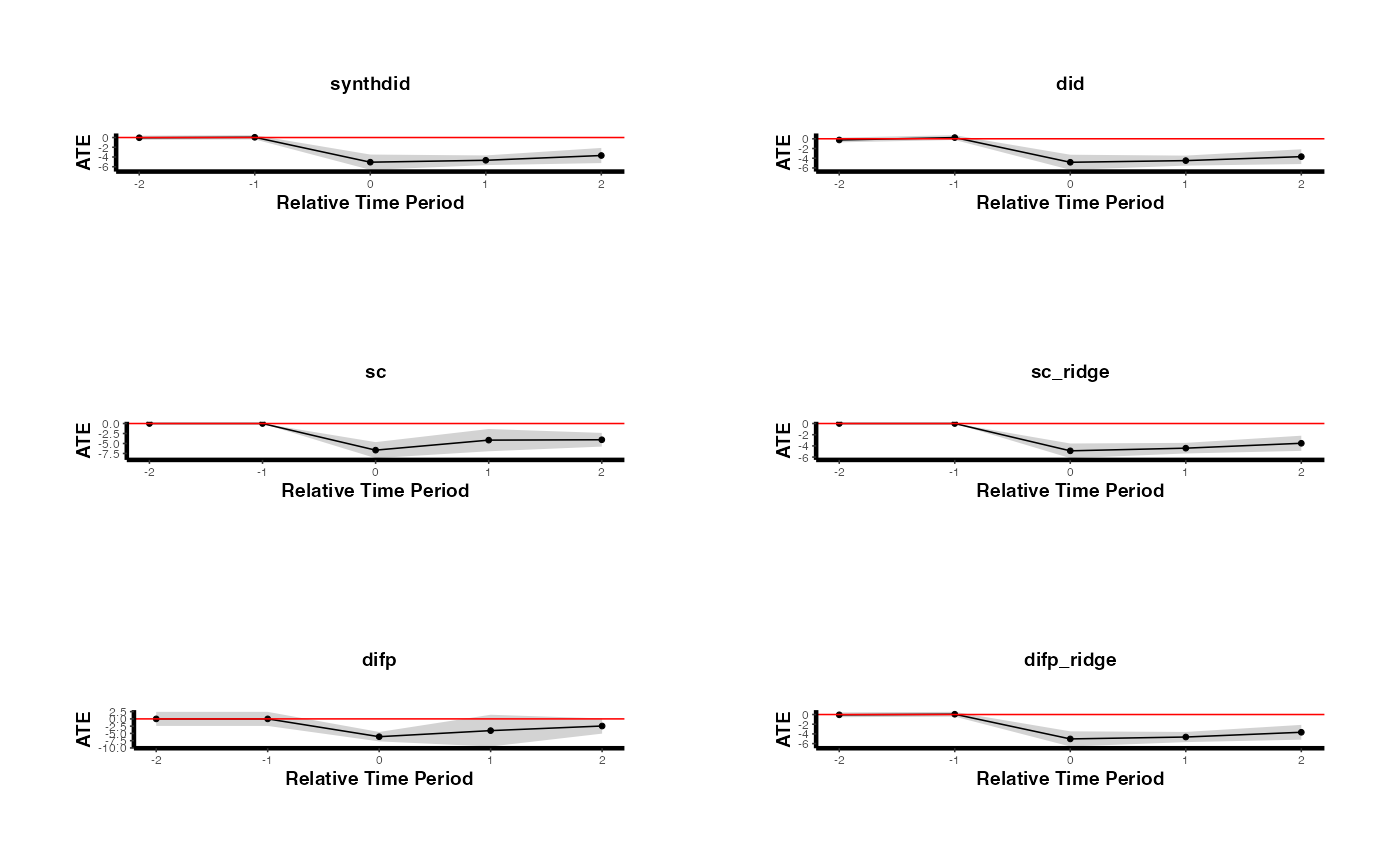

Compare to different estimators (e.g., DID and SC)

methods <- c("synthdid", "did", "sc", "sc_ridge", "difp", "difp_ridge")

estimates_methods <- lapply(methods, function(method) {

causalverse::synthdid_est_ate(

data = df,

adoption_cohorts = 5:7,

lags = 2,

leads = 2,

time_var = "year",

unit_id_var = "id",

treated_period_var = "year_treated",

treat_stat_var = "treatvar",

outcome_var = "y",

method = method

)

})

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 65

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 55

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 65

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 55

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 65

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 55

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 65

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 55

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 65

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 55

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 65

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 55

plots <- lapply(seq_along(estimates_methods), function(i) {

causalverse::synthdid_plot_ate(estimates_methods[[i]],

title = methods[i],

theme = causalverse::ama_theme(base_size = 6)) +

theme(axis.text.x = element_text(angle = 45, hjust = 1, size = 8))

})

gridExtra::grid.arrange(grobs = plots, ncol = 2)

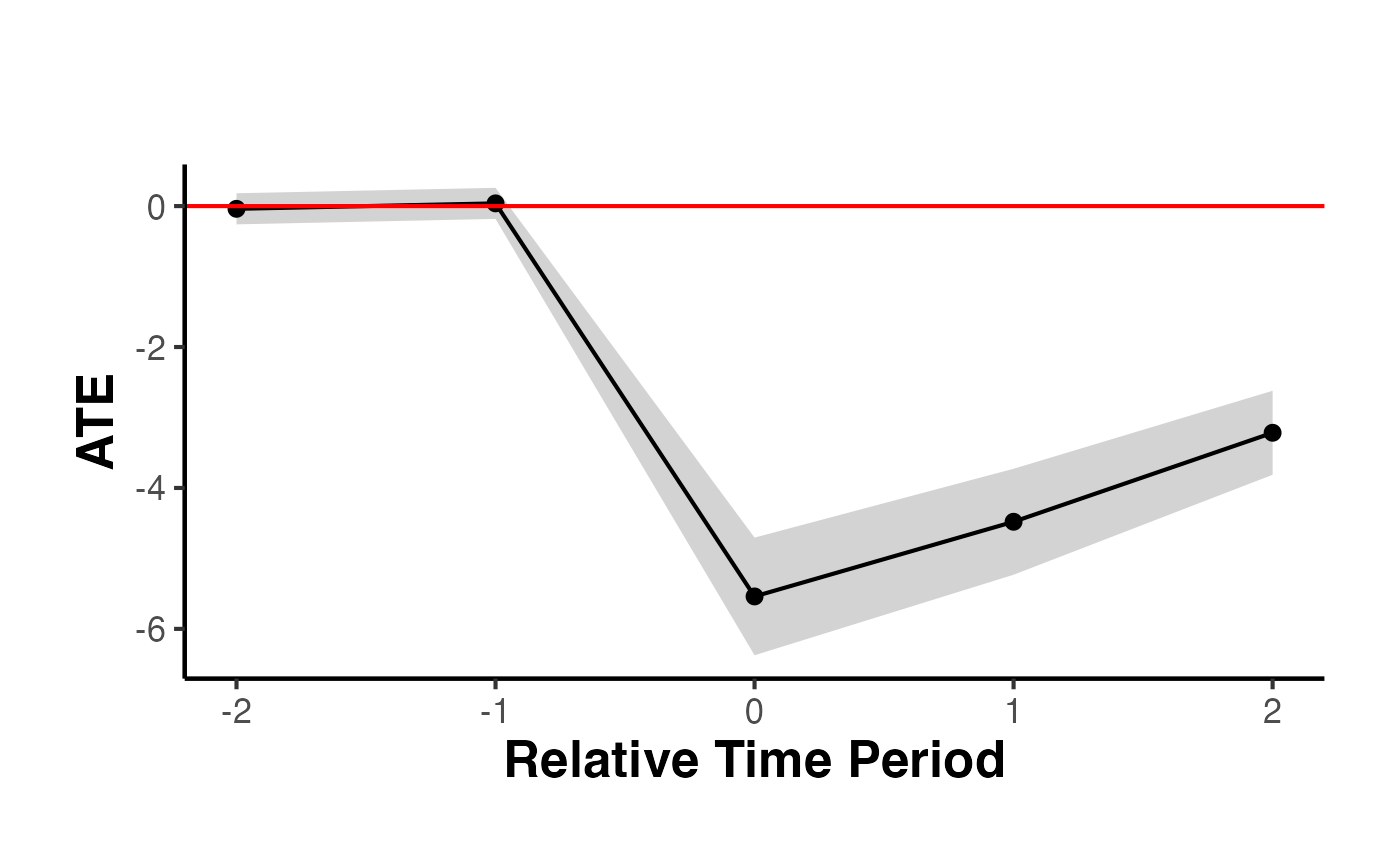

Subgroup Analysis (i.e., exploring treatment effects heterogeneity)

est_sub <- causalverse::synthdid_est_ate(

data = df,

adoption_cohorts = 5:7,

lags = 2,

leads = 2,

time_var = "year",

unit_id_var = "id",

treated_period_var = "year_treated",

treat_stat_var = "treatvar",

outcome_var = "y",

# a vector of subgroup id (from unit id)

subgroup = c(

# some are treated

"11", "30", "49" ,

# some are control within this period

"20", "25", "21")

)

#> Adoption Cohort: 5

#> Treated units: 3 Control units: 65

#> Adoption Cohort: 6

#> Treated units: 0 Control units: 60

#> Adoption Cohort: 7

#> Treated units: 0 Control units: 55

data.frame(

Period = names(est_sub$TE_mean_w),

ATE = est_sub$TE_mean_w,

SE = est_sub$SE_mean_w

) |>

causalverse::nice_tab()

#> Period ATE SE

#> 1 -2 0.32 0.44

#> 2 -1 -0.32 0.44

#> 3 0 -4.29 1.68

#> 4 1 -4.00 1.52

#> 5 2 -3.44 2.90

#> 6 cumul.0 -4.29 1.68

#> 7 cumul.1 -4.14 1.52

#> 8 cumul.2 -3.91 1.82

causalverse::synthdid_plot_ate(est)

To incorporate time-varying variables, use the residuals obtained from regressing the outcome on these variables.

df_x <- fixest::base_stagg |>

dplyr::mutate(treatvar = if_else(time_to_treatment >= 0, 1, 0)) |>

dplyr::mutate(treatvar = as.integer(if_else(year_treated > (5 + 2), 0, treatvar))) |>

mutate(y_res = residuals(lm(y ~ x1)))

est_x <- causalverse::synthdid_est_ate(

data = df_x,

adoption_cohorts = 5:7,

lags = 2,

leads = 2,

time_var = "year",

unit_id_var = "id",

treated_period_var = "year_treated",

treat_stat_var = "treatvar",

outcome_var = "y_res"

)

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 65

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 55

data.frame(

Period = names(est_x$TE_mean_w),

ATE = est_x$TE_mean_w,

SE = est_x$SE_mean_w

) |>

causalverse::nice_tab()

#> Period ATE SE

#> 1 -2 -0.04 0.11

#> 2 -1 0.04 0.11

#> 3 0 -5.54 0.43

#> 4 1 -4.48 0.38

#> 5 2 -3.22 0.30

#> 6 cumul.0 -5.54 0.43

#> 7 cumul.1 -5.01 0.36

#> 8 cumul.2 -4.41 0.29

causalverse::synthdid_plot_ate(est_x)

Best Practices and Practical Guidance

When to Use SynthDID vs. SC vs. DID

Choosing the right estimator depends on the features of your data and the plausibility of the identifying assumptions:

Use DID when:

- You have many treated and control units.

- The parallel trends assumption is credible (e.g., pre-treatment trends are visually parallel).

- Treatment is not concentrated in a single unit or a very small number of units.

- You want a simple, well-understood baseline estimate.

Use SC when:

- You have one or very few treated units (e.g., a single country, state, or firm).

- The treated unit’s pre-treatment outcome trajectory can be closely matched by a weighted combination of control units in levels.

- The number of pre-treatment periods is large relative to the number of control units.

- Parallel trends is implausible, but level matching is achievable.

Use SynthDID when:

- You want the benefits of both SC and DID without committing to one set of assumptions.

- You have a moderate number of treated units (one or more).

- You want double robustness: the estimator is consistent if either the SC-type or DID-type assumption holds.

- You want data-driven time weights that focus on the most informative pre-treatment periods.

Pre-Treatment Fit Assessment

Before reporting SynthDID results, always assess the quality of the pre-treatment fit:

Visual inspection: Use

synthdid_plot()withoverlay = 1to compare treated and synthetic trajectories in the pre-treatment period. They should track each other closely.RMSE check: Use

synthdid_rmse_plot()to compare the treated unit’s pre-treatment RMSE against placebo units. The treated unit’s RMSE should not be an outlier.Weight sparsity: Use

synthdid_controls()to inspect unit weights. A very diffuse weight distribution (many controls with small weights) may indicate a poor match.Placebo tests: Use

synthdid_placebo_plot()to check that the estimated effect is unusual compared to placebo effects. If many placebo effects are as large as the true effect, the result is not statistically compelling.

Reporting Guidelines

When reporting SynthDID results in academic papers or policy reports, include the following:

Point estimate and standard error: Report the ATT with at least one SE method. State which SE method was used and why.

Confidence interval: Report the 95% confidence interval.

Pre-treatment fit plot: Show the treated vs. synthetic control trajectory, including the pre-treatment period.

Unit weights: Report or visualize the control unit weights. Identify the top contributing control units.

Robustness across estimators: Report results from DID, SC, and SynthDID side by side. Discuss any discrepancies.

Sensitivity to specification: Consider varying the number of pre-treatment periods, the set of control units, or the covariate specification.

Placebo tests: Report the results of placebo tests (in-time or in-space placebos).

Staggered adoption: If treatment is staggered, clearly describe the cohort-by-cohort estimation strategy and how the overall ATT is aggregated.

Common Pitfalls

Avoid these common mistakes when applying SynthDID:

Too few pre-treatment periods: SynthDID requires sufficient pre-treatment data to estimate both unit and time weights reliably. As a rule of thumb, at least 10 pre-treatment periods are desirable.

Too few control units: With very few controls, the unit weights cannot achieve a good match and the placebo SE method will have low power.

Post-treatment covariates: Never residualize on variables that are themselves affected by the treatment.

Ignoring weight quality: Always inspect the unit and time weights. Extreme weights (e.g., all weight on a single control) suggest fragility.

Staggered adoption without adjustment: The base

synthdid_estimate()function assumes block adoption. For staggered designs, usecausalverse::synthdid_est_ate()which handles cohort-by-cohort estimation.Over-interpreting per-period effects: Per-period effects are noisier than the overall ATT. Focus on the overall pattern rather than individual period estimates unless you have theoretical reasons to expect time-varying effects.

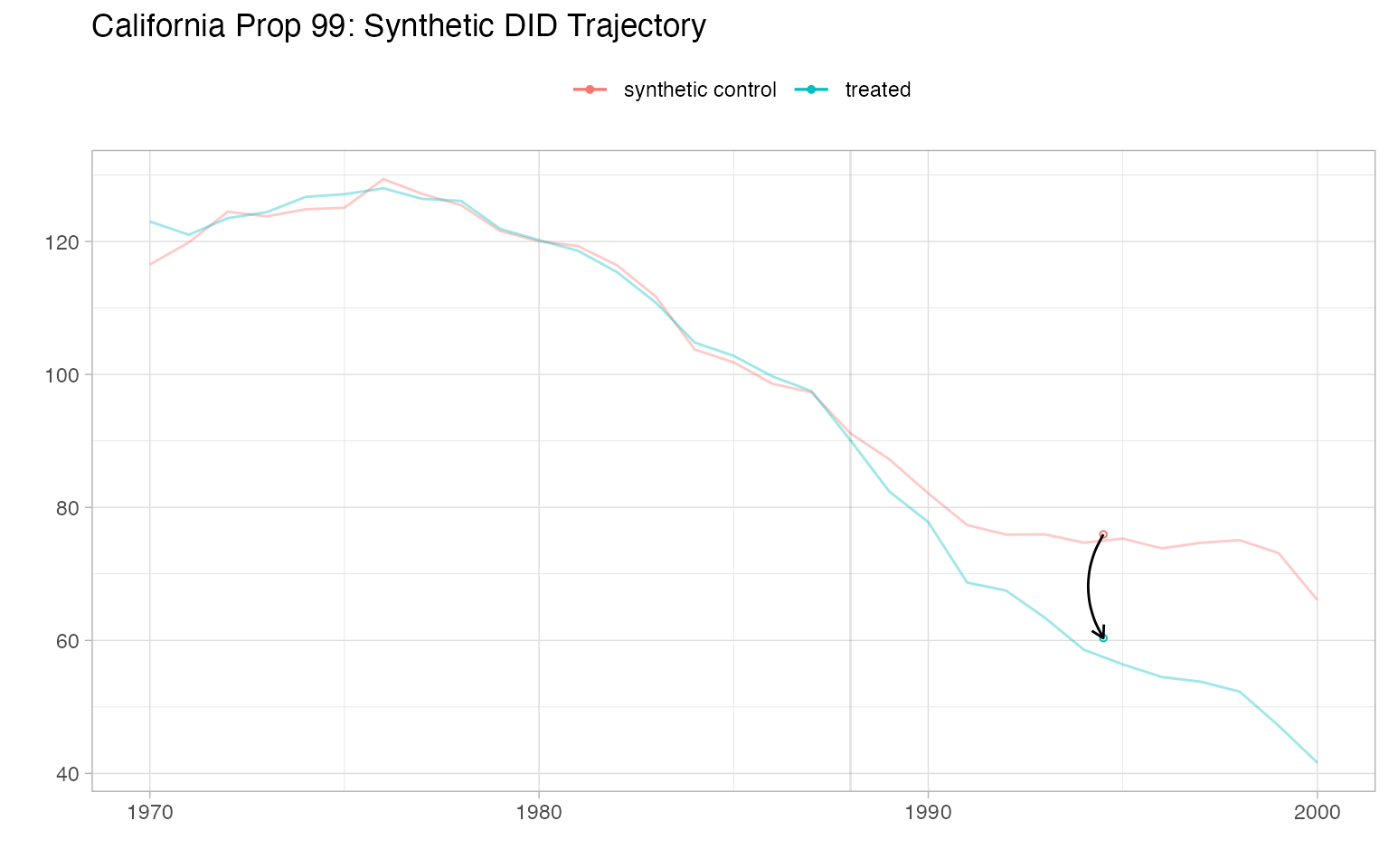

Comprehensive Visualization Suite for Synthetic DID

Treated vs. Synthetic Control Trajectory

The most important figure in any synthetic control paper is the trajectory plot showing how the treated unit and its synthetic counterpart evolved over time.

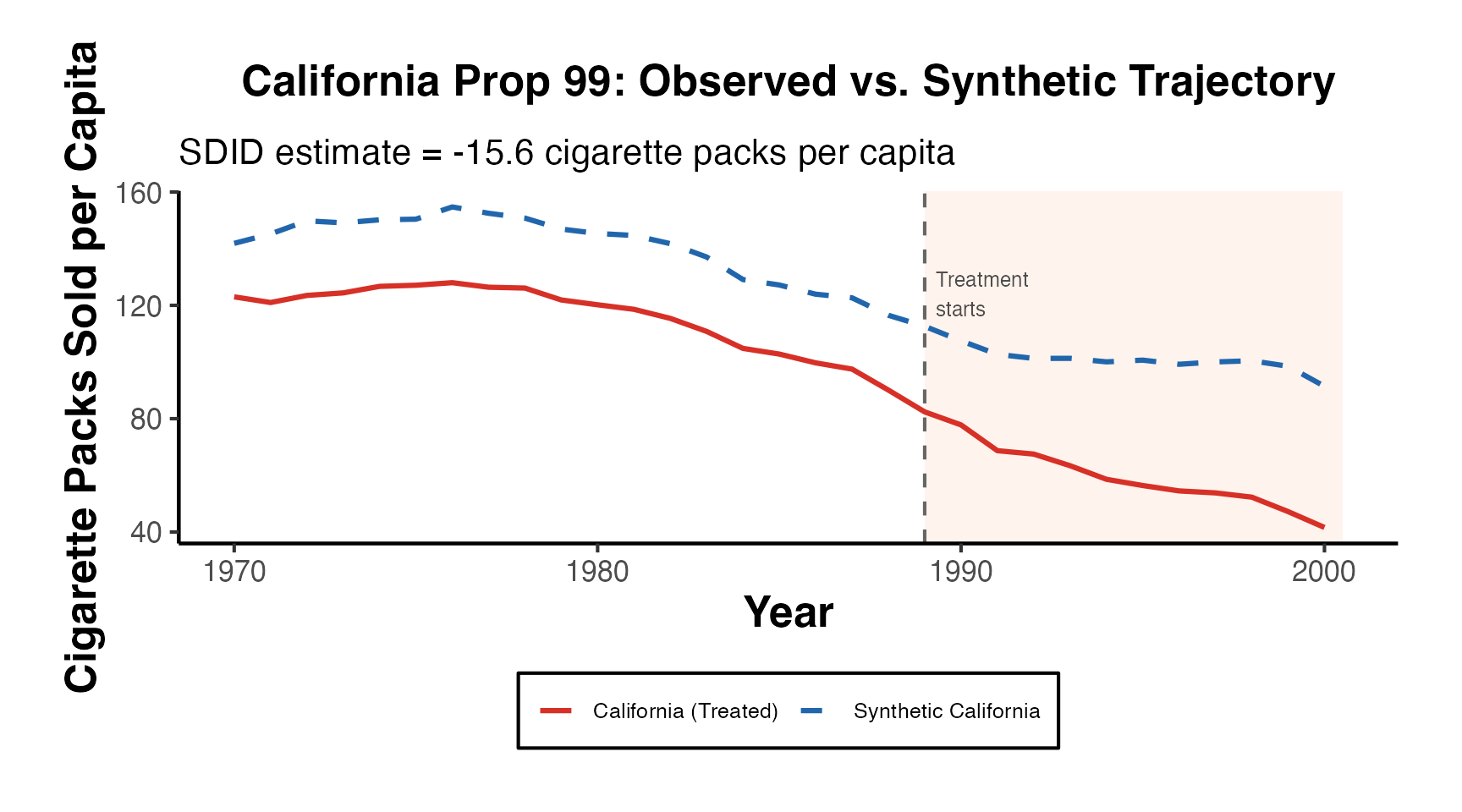

library(synthdid)

# Use the California Prop 99 data (canonical SC example)

data("california_prop99")

setup_ca <- synthdid::panel.matrices(california_prop99)

# Estimate SynthDID

est_sdid <- synthdid::synthdid_estimate(setup_ca$Y, setup_ca$N0, setup_ca$T0)

cat("SDID Estimate:", round(as.numeric(est_sdid), 3), "\n")

#> SDID Estimate: -15.604

# Built-in trajectory plot

plot(est_sdid, overlay = 1) +

ggplot2::ggtitle("California Prop 99: Synthetic DID Trajectory")

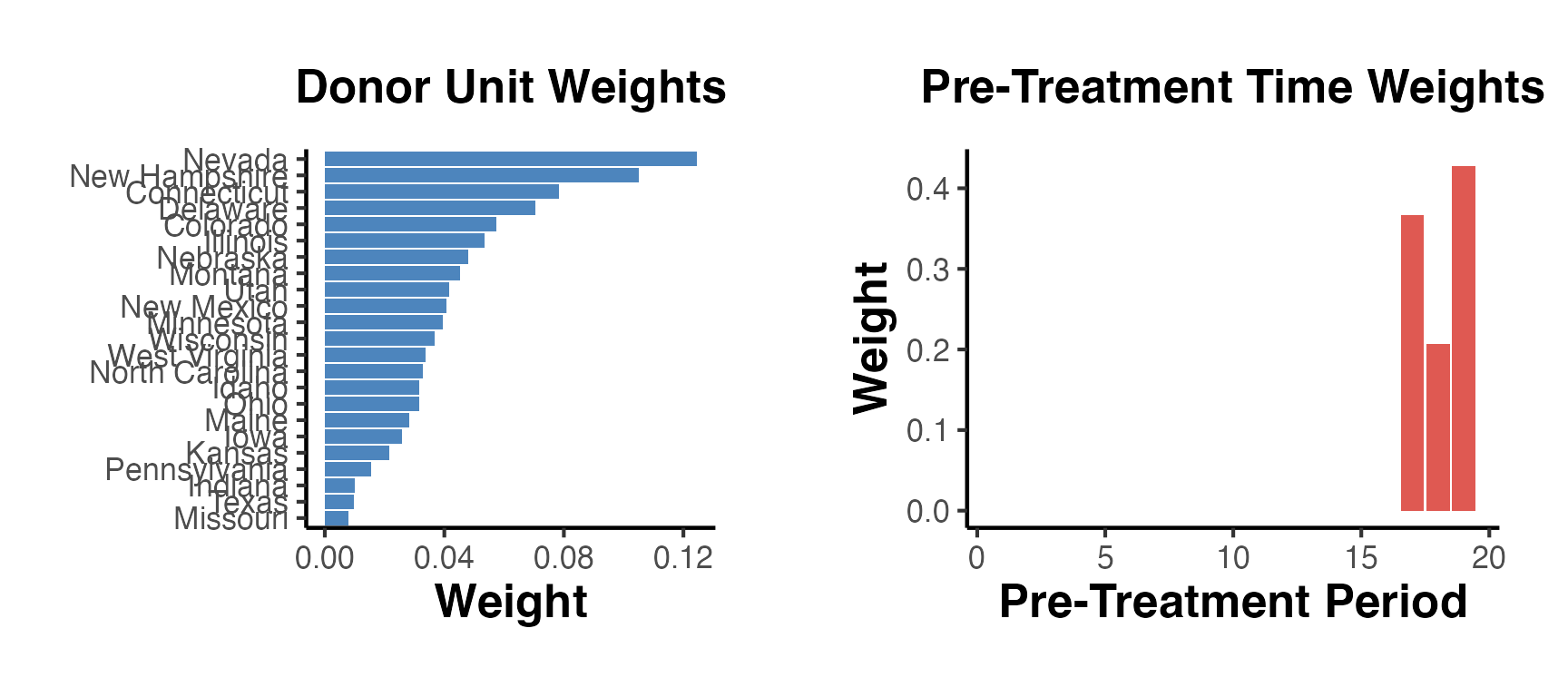

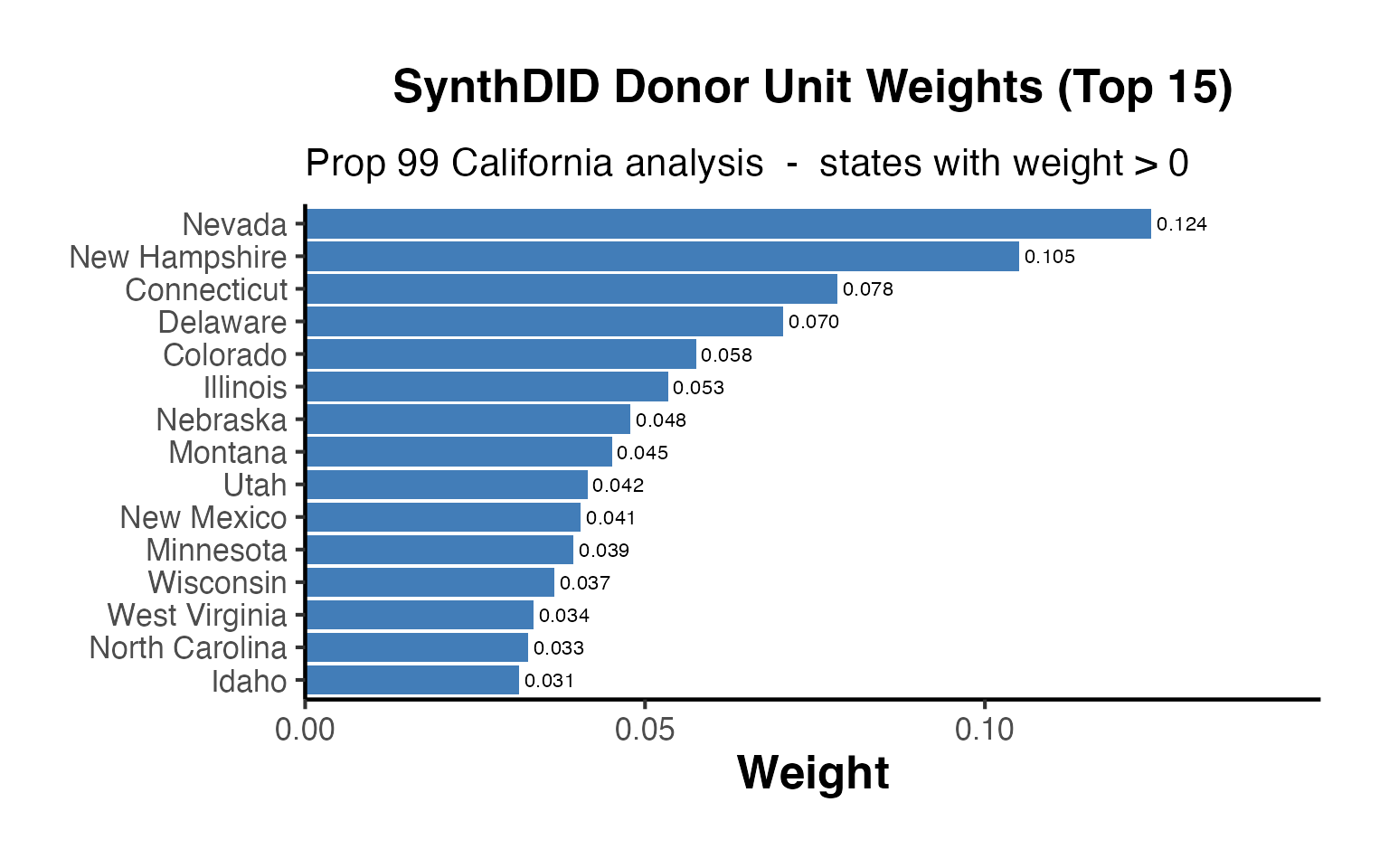

Unit and Time Weights

Understanding which donors and pre-treatment periods receive the most weight is critical for assessing credibility.

sdid_weights <- attr(est_sdid, "weights")

# Unit weights

omega_df <- data.frame(

unit = rownames(setup_ca$Y)[seq_len(setup_ca$N0)],

weight = sdid_weights$omega

)

omega_df <- omega_df[omega_df$weight > 0.005, ]

omega_df <- omega_df[order(omega_df$weight, decreasing = TRUE), ]

omega_df$unit <- factor(omega_df$unit, levels = rev(omega_df$unit))

p_omega <- ggplot(omega_df, aes(x = unit, y = weight)) +

geom_col(fill = "#2166AC", alpha = 0.8) +

coord_flip() +

labs(title = "Donor Unit Weights", x = NULL, y = "Weight") +

causalverse::ama_theme()

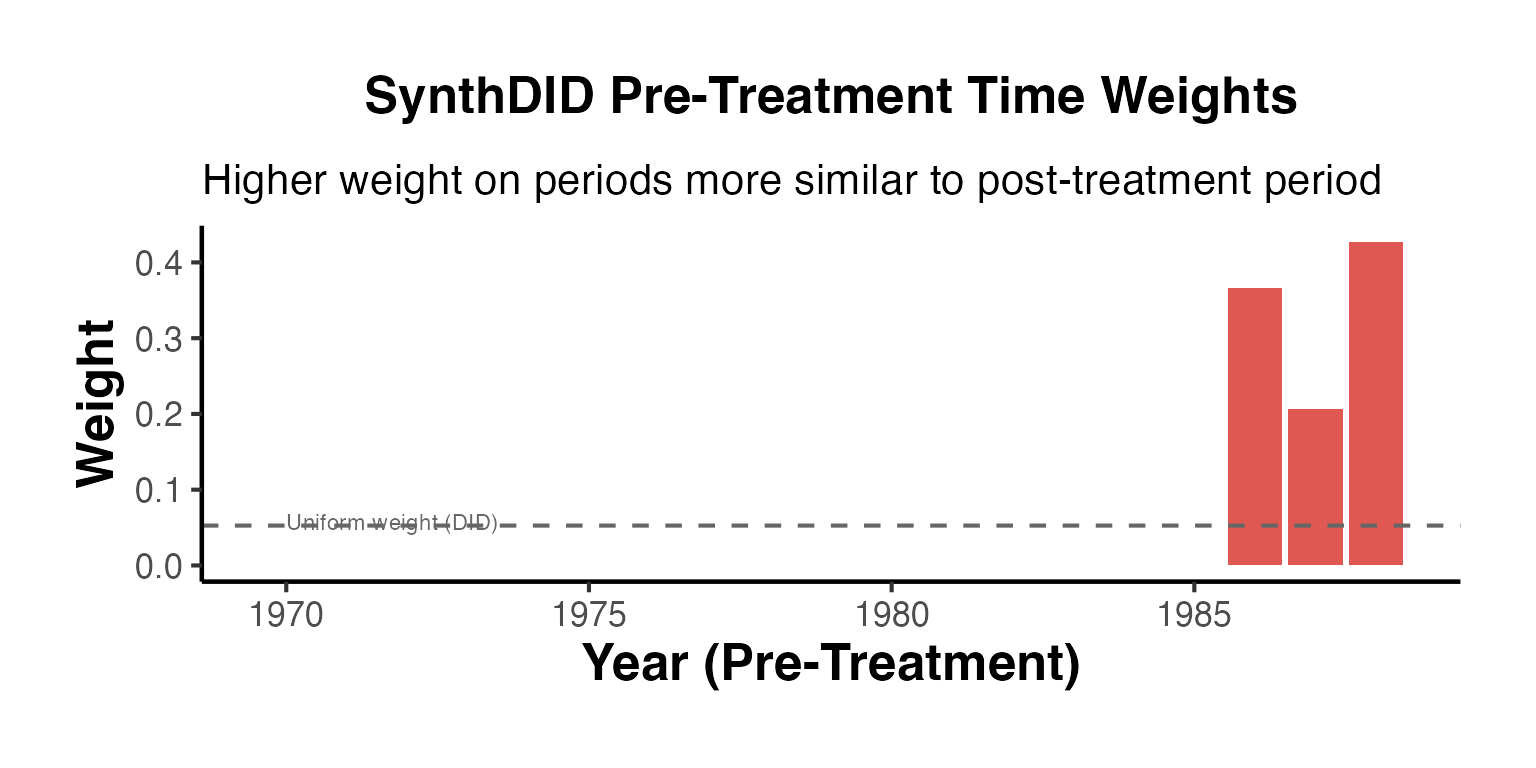

# Time weights

lambda_df <- data.frame(

time = seq_len(setup_ca$T0),

weight = sdid_weights$lambda

)

p_lambda <- ggplot(lambda_df, aes(x = time, y = weight)) +

geom_col(fill = "#D73027", alpha = 0.8) +

labs(title = "Pre-Treatment Time Weights", x = "Pre-Treatment Period",

y = "Weight") +

causalverse::ama_theme()

gridExtra::grid.arrange(p_omega, p_lambda, ncol = 2)

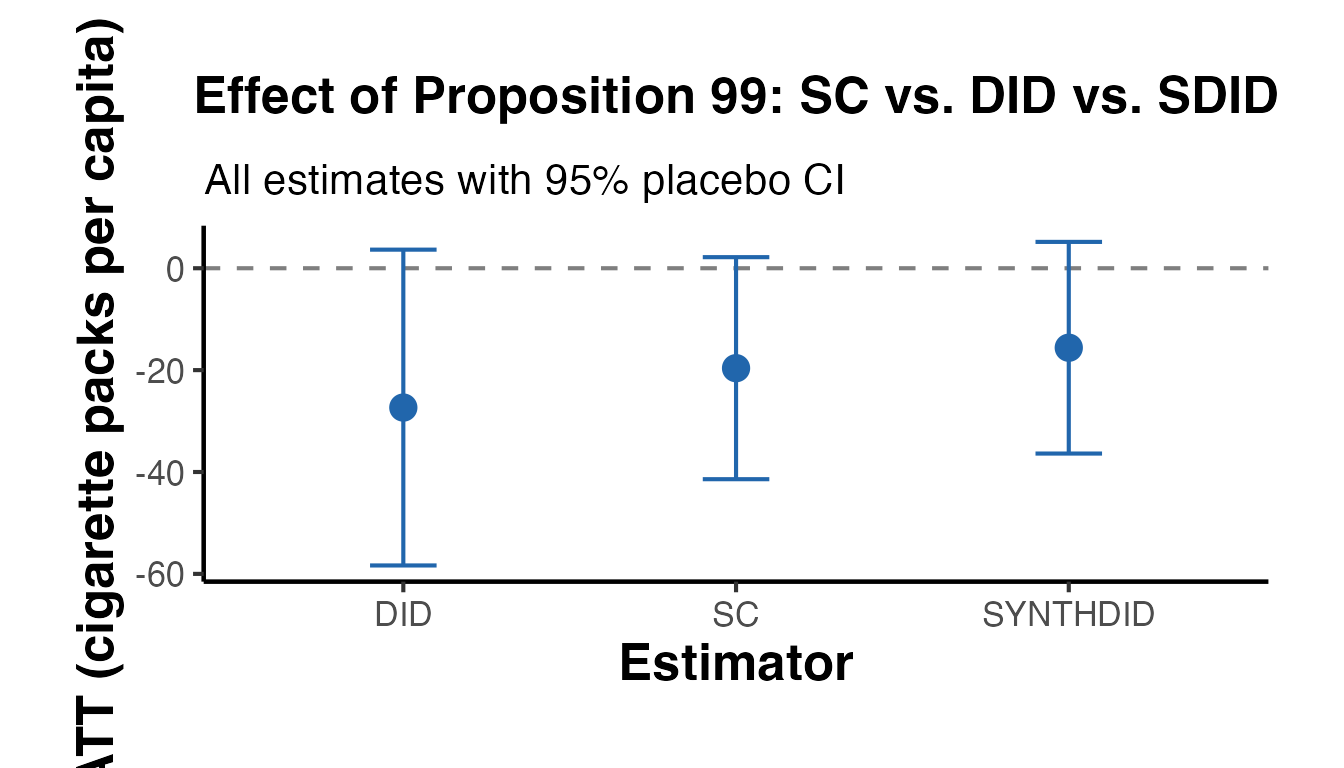

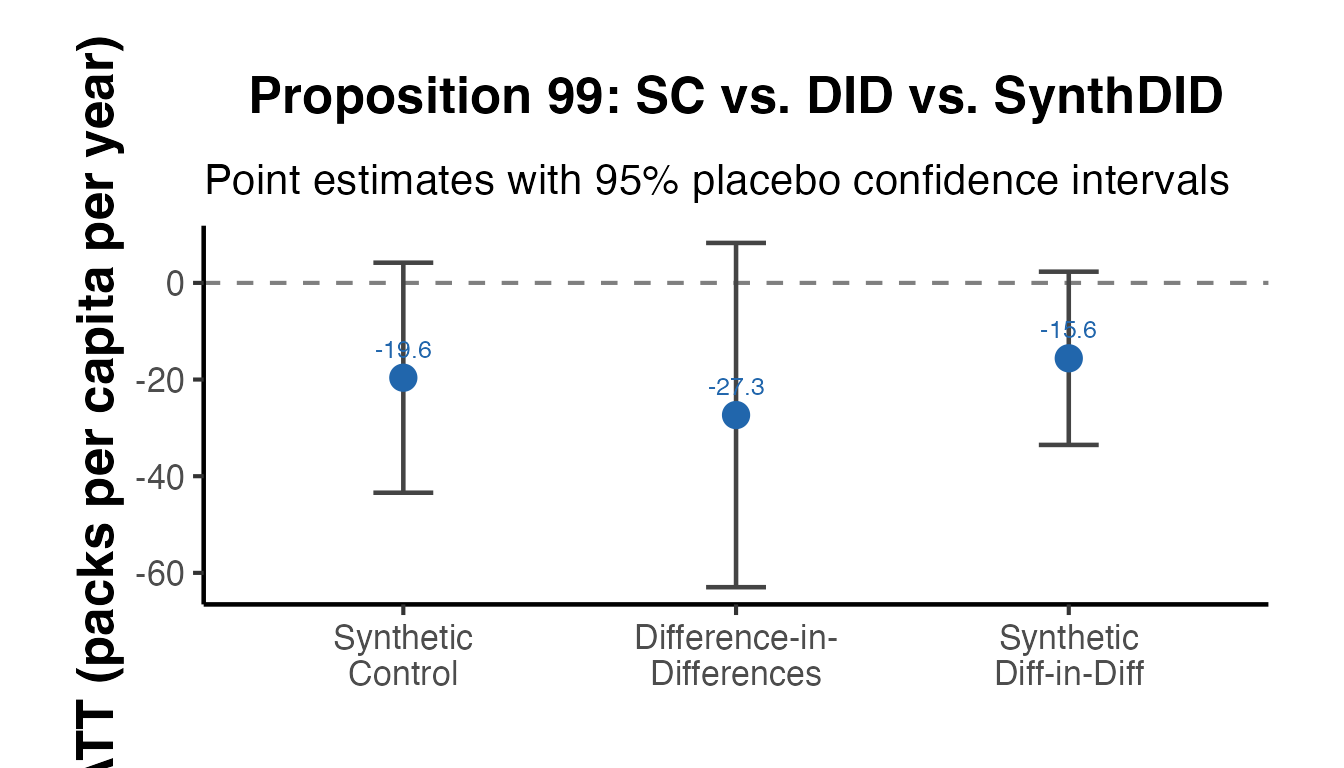

Estimator Comparison

Compare SC, DID, and SDID side-by-side:

results_compare <- causalverse::panel_estimate(

setup_ca,

selected_estimators = c("sc", "did", "synthdid")

)

cat("=== Estimator Comparison ===\n")

#> === Estimator Comparison ===

print(causalverse::process_panel_estimate(results_compare))

#> Method Estimate SE

#> 1 SC -19.62 11.12

#> 2 DID -27.35 15.81

#> 3 SYNTHDID -15.60 10.60

# Visual comparison

comp_df <- data.frame(

method = toupper(names(results_compare)),

estimate = sapply(results_compare, function(x) as.numeric(x$estimate)),

se = sapply(results_compare, function(x) as.numeric(x$std.error))

)

comp_df$ci_lo <- comp_df$estimate - 1.96 * comp_df$se

comp_df$ci_hi <- comp_df$estimate + 1.96 * comp_df$se

ggplot(comp_df, aes(x = method, y = estimate)) +

geom_hline(yintercept = 0, linetype = "dashed", color = "gray50") +

geom_point(size = 4, color = "#2166AC") +

geom_errorbar(aes(ymin = ci_lo, ymax = ci_hi),

width = 0.2, color = "#2166AC") +

labs(

title = "Effect of Proposition 99: SC vs. DID vs. SDID",

subtitle = "All estimates with 95% placebo CI",

x = "Estimator",

y = "ATT (cigarette packs per capita)"

) +

causalverse::ama_theme()

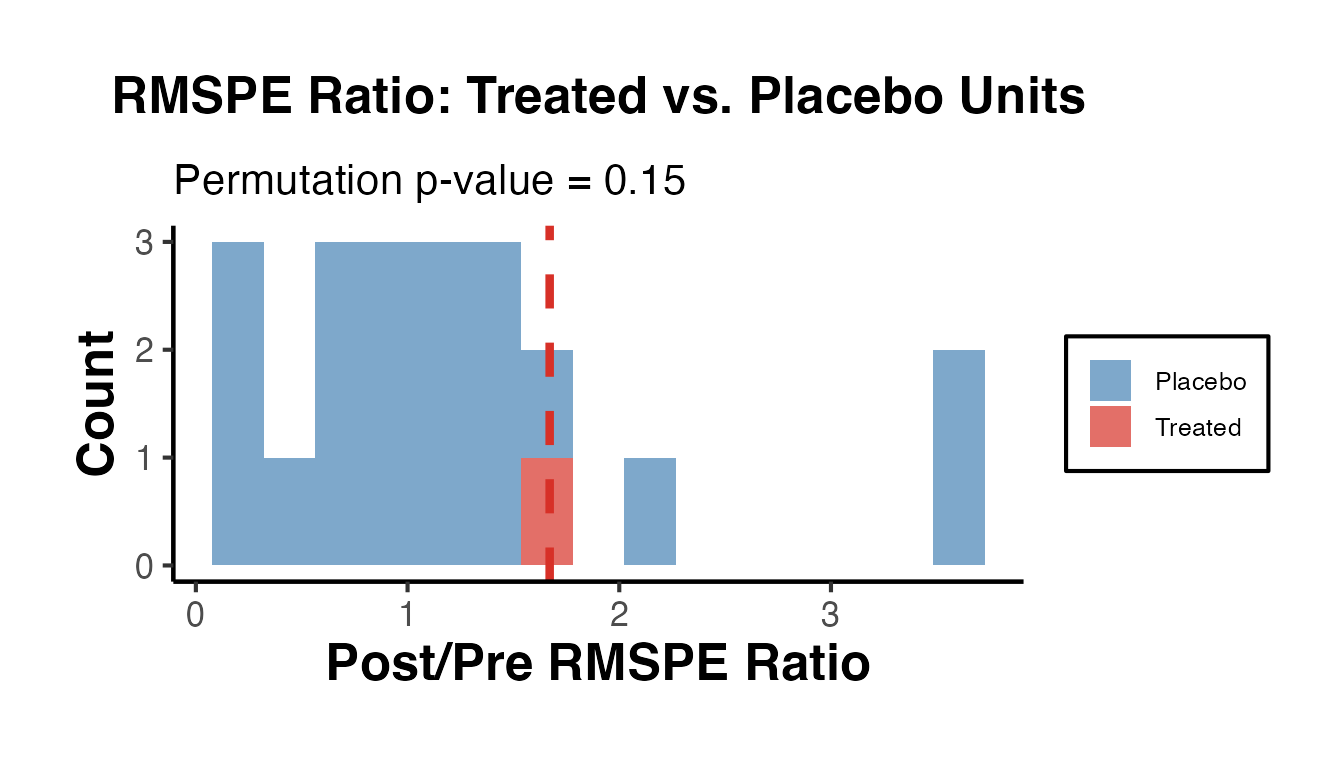

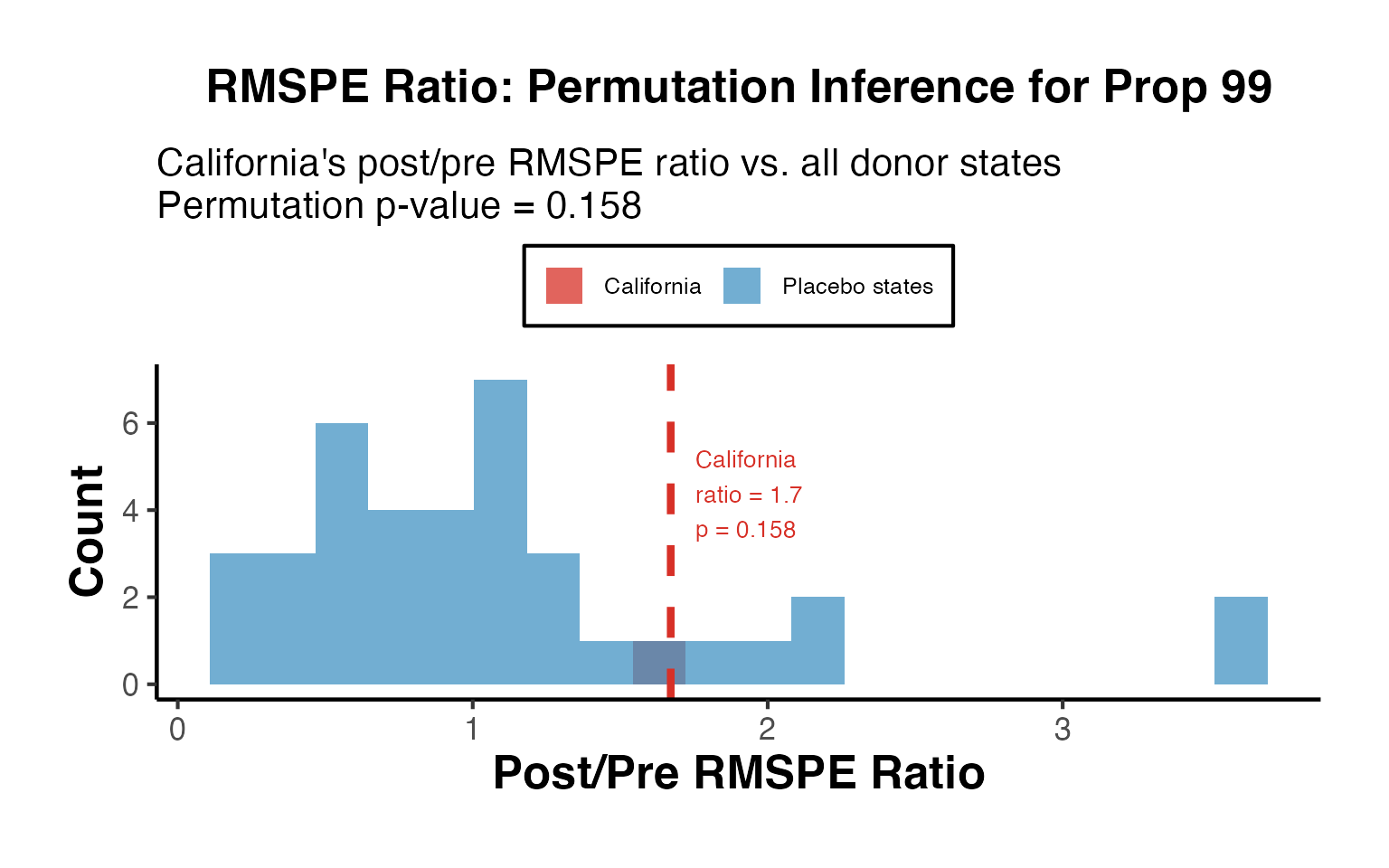

RMSPE Ratio: Placebo Inference

The RMSPE (Root Mean Squared Prediction Error) ratio compares post-treatment fit to pre-treatment fit, providing a non-parametric p-value.

# Extract gaps

synth_wts <- sdid_weights$omega

synth_Y <- as.vector(t(synth_wts) %*% setup_ca$Y[seq_len(setup_ca$N0), ])

treated_Y <- setup_ca$Y[nrow(setup_ca$Y), ]

time_labels <- as.numeric(colnames(setup_ca$Y))

treat_yr <- time_labels[setup_ca$T0 + 1]

treated_gap <- treated_Y - synth_Y

pre_mask <- time_labels < treat_yr

post_mask <- time_labels >= treat_yr

rmspe_pre <- sqrt(mean(treated_gap[pre_mask]^2))

rmspe_post <- sqrt(mean(treated_gap[post_mask]^2))

ratio_treat <- rmspe_post / rmspe_pre

# Placebo units (subsample for speed)

n_placebo <- min(20, setup_ca$N0)

placebo_ratios <- vapply(seq_len(n_placebo), function(j) {

g_pre <- setup_ca$Y[j, pre_mask] -

colMeans(setup_ca$Y[setdiff(seq_len(setup_ca$N0), j), pre_mask])

g_post <- setup_ca$Y[j, post_mask] -

colMeans(setup_ca$Y[setdiff(seq_len(setup_ca$N0), j), post_mask])

pre_r <- sqrt(mean(g_pre^2))

if (pre_r < 1e-10) return(NA_real_)

sqrt(mean(g_post^2)) / pre_r

}, numeric(1))

placebo_ratios <- na.omit(placebo_ratios)

p_val_rmspe <- mean(placebo_ratios >= ratio_treat)

cat("RMSPE ratio (treated): ", round(ratio_treat, 3), "\n")

#> RMSPE ratio (treated): 1.671

cat("Placebo p-value: ", round(p_val_rmspe, 3), "\n")

#> Placebo p-value: 0.15

ratio_df <- data.frame(

ratio = c(as.numeric(placebo_ratios), ratio_treat),

type = c(rep("Placebo", length(placebo_ratios)), "Treated")

)

ggplot(ratio_df, aes(x = ratio, fill = type)) +

geom_histogram(bins = 15, alpha = 0.7) +

scale_fill_manual(values = c("Placebo" = "steelblue",

"Treated" = "#D73027")) +

geom_vline(xintercept = ratio_treat, color = "#D73027",

linewidth = 1.5, linetype = "dashed") +

labs(

title = "RMSPE Ratio: Treated vs. Placebo Units",

subtitle = paste0("Permutation p-value = ", round(p_val_rmspe, 3)),

x = "Post/Pre RMSPE Ratio",

y = "Count",

fill = NULL

) +

causalverse::ama_theme()

Staggered Adoption: ATE Across Cohorts

ate_results <- causalverse::synthdid_est_ate(

data = fixest::base_stagg,

method = "synthdid"

)

causalverse::synthdid_plot_ate(ate_results)Extended Visualization Suite

This section demonstrates the full visualization toolkit available in causalverse for synthetic DID analyses.

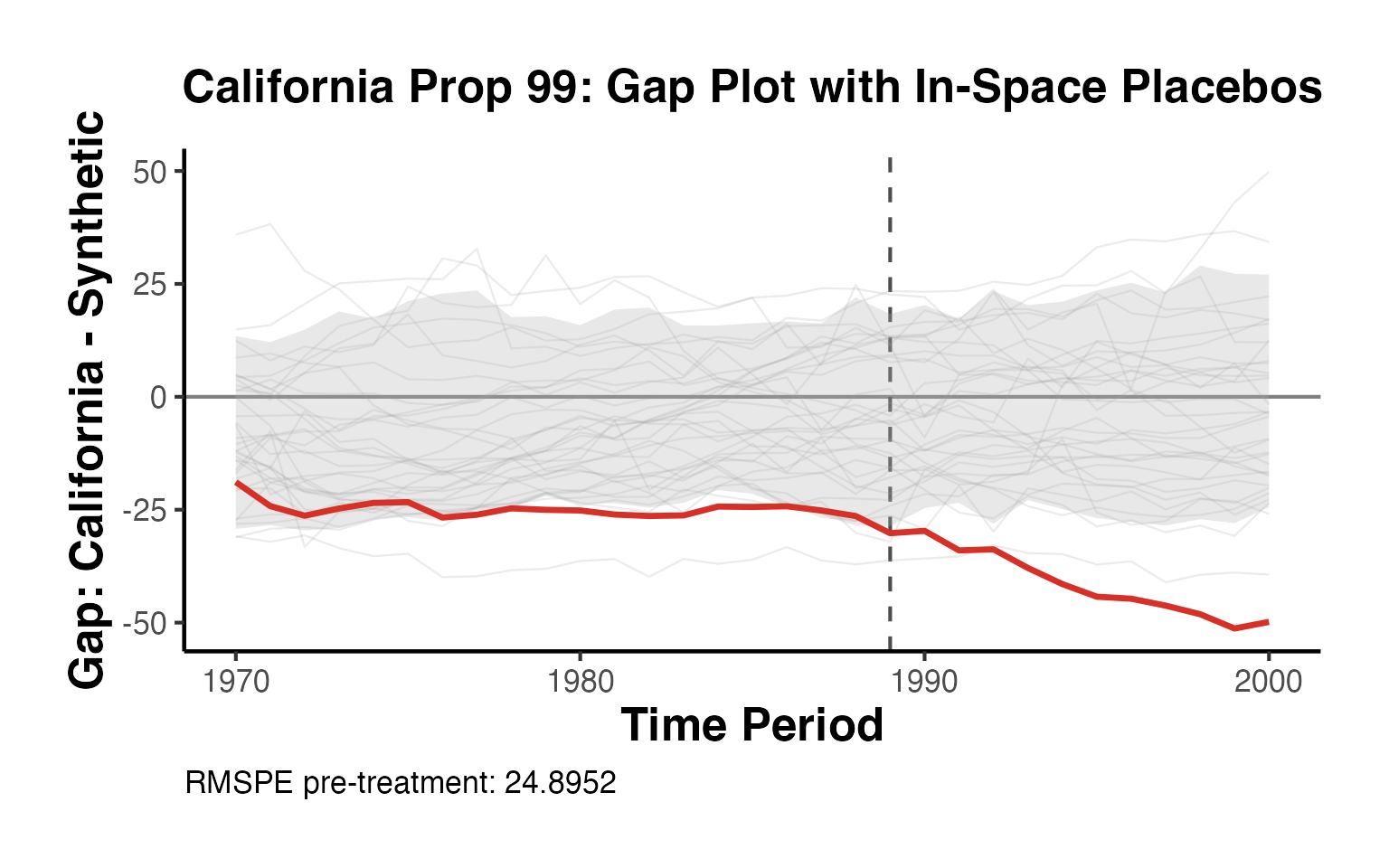

Gap Plot with In-Space Placebos

The causalverse::sc_gap_plot() function plots the treatment-synthetic gap over time and overlays donor unit gaps as in-space placebos, providing visual inference about whether the treated unit’s gap is unusual.

library(synthdid)

# California Prop 99 setup

data("california_prop99")

setup_ca <- synthdid::panel.matrices(california_prop99)

est_sdid <- synthdid::synthdid_estimate(setup_ca$Y, setup_ca$N0, setup_ca$T0)

wts <- attr(est_sdid, "weights")

# Treated unit trajectory and synthetic

Y <- setup_ca$Y

N0 <- setup_ca$N0

T0 <- setup_ca$T0

N <- nrow(Y)

T_total <- ncol(Y)

time_lbl <- as.numeric(colnames(Y))

treat_yr <- time_lbl[T0 + 1]

synth_Y <- as.vector(t(wts$omega) %*% Y[seq_len(N0), ])

treated_Y <- Y[N, ]

# Compute donor gaps (each control treated as if it were treated, with equal weights)

donor_gaps_list <- lapply(seq_len(N0), function(j) {

other_ctrl <- setdiff(seq_len(N0), j)

synth_j <- colMeans(Y[other_ctrl, , drop = FALSE])

as.numeric(Y[j, ] - synth_j)

})

causalverse::sc_gap_plot(

treated_outcome = as.numeric(treated_Y),

synthetic_outcome = synth_Y,

time_periods = time_lbl,

treatment_period = treat_yr,

donor_gaps = donor_gaps_list,

placebo_alpha = 0.20,

placebo_rmspe_filter = 2,

ci_level = 0.90,

title = "California Prop 99: Gap Plot with In-Space Placebos",

unit_label = "California"

) + causalverse::ama_theme()

The red line shows California’s gap (treated minus synthetic). Gray lines show donor state gaps estimated with equal-weight synthetic controls. Units with pre-treatment RMSPE more than twice California’s are excluded following Abadie, Diamond and Hainmueller (2010).

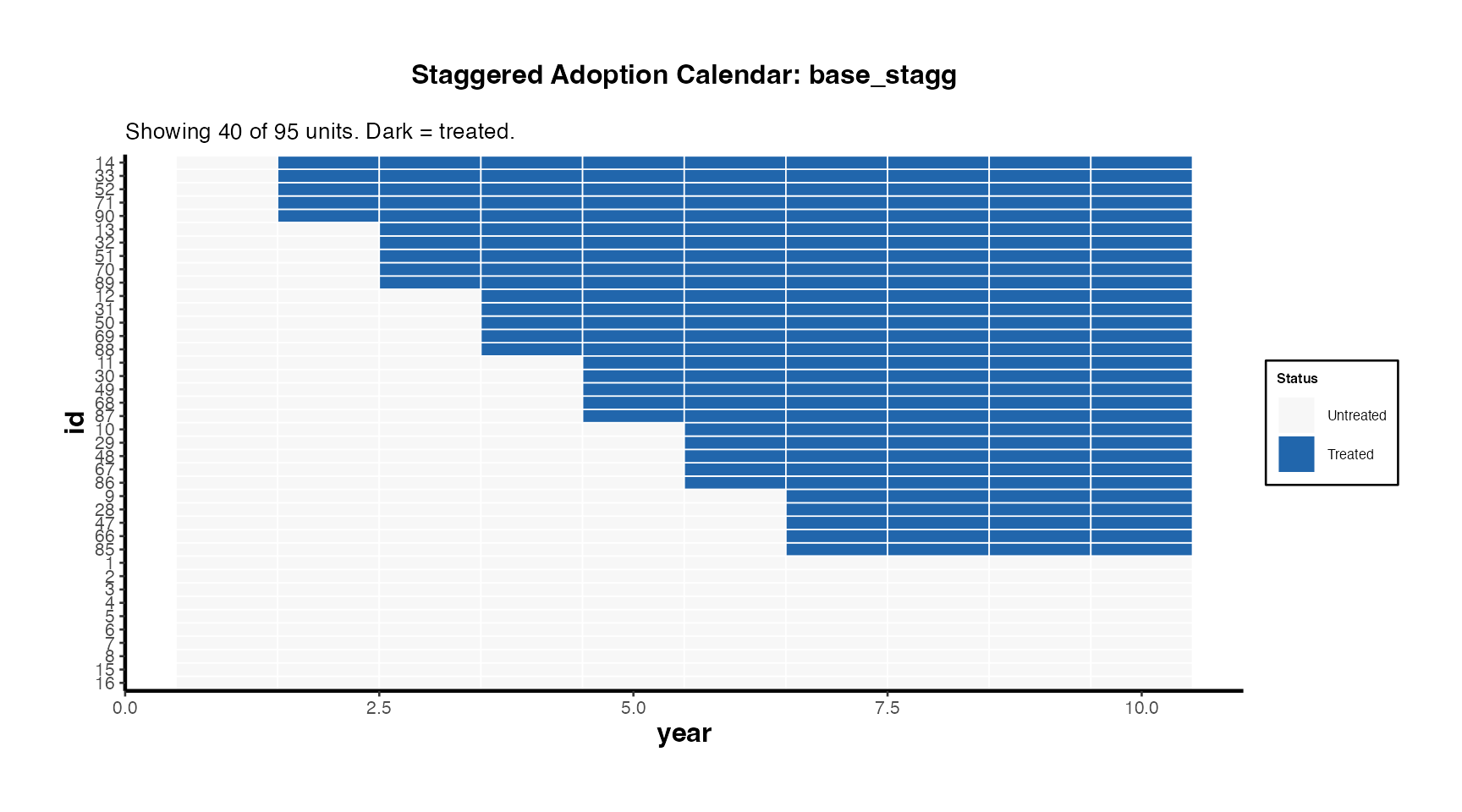

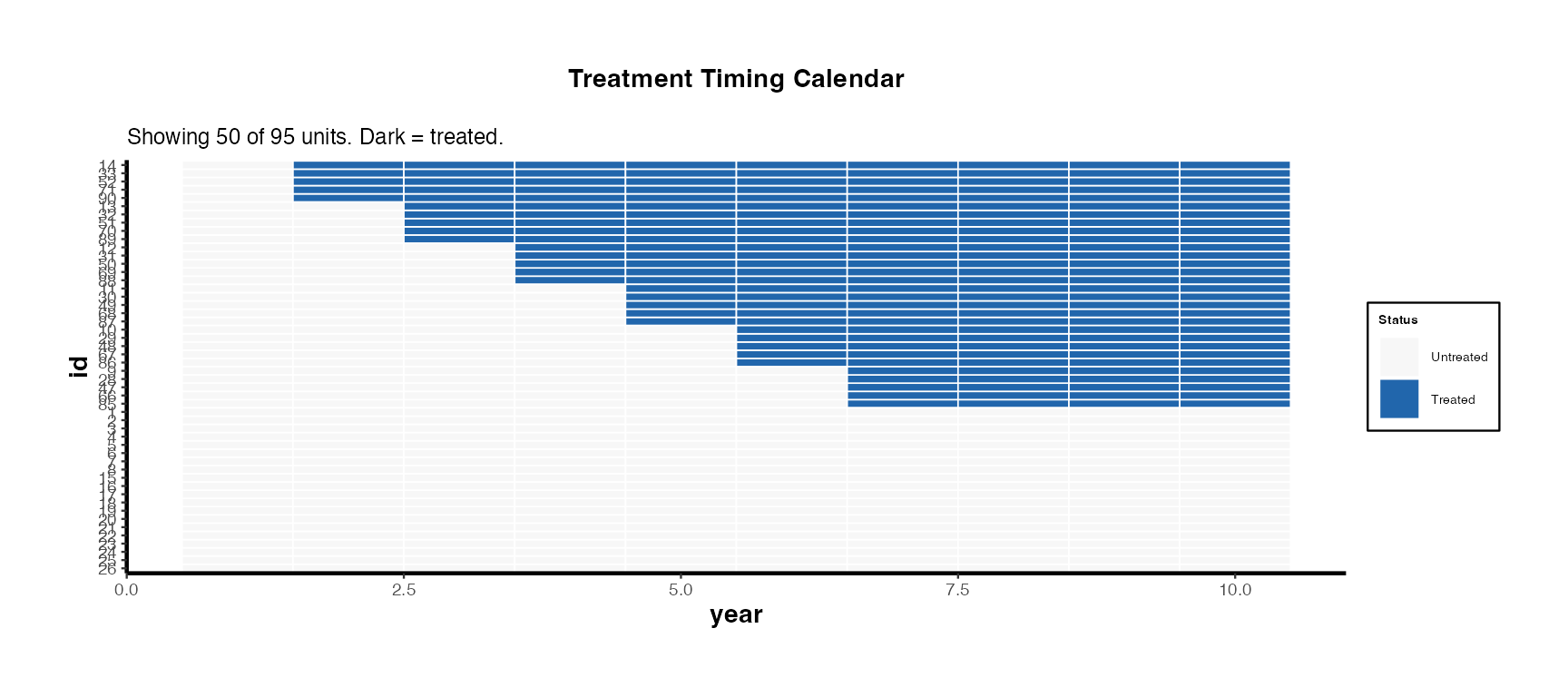

Treatment Calendar: Staggered Adoption Timing

# Prepare base_stagg: add a proper binary treatment column

df_stagg_cal <- fixest::base_stagg |>

dplyr::mutate(treatment = as.integer(time_to_treatment >= 0 &

year_treated <= (5 + 2)))

# Treatment calendar heatmap

causalverse::treatment_calendar(

data = df_stagg_cal,

unit_var = "id",

time_var = "year",

treat_var = "treatment",

max_units = 40,

title = "Staggered Adoption Calendar: base_stagg"

) + causalverse::ama_theme(base_size = 10)

The calendar heatmap communicates treatment timing heterogeneity at a glance. Units are sorted by first treatment date (earliest adopters at top). Dark cells indicate treated periods.

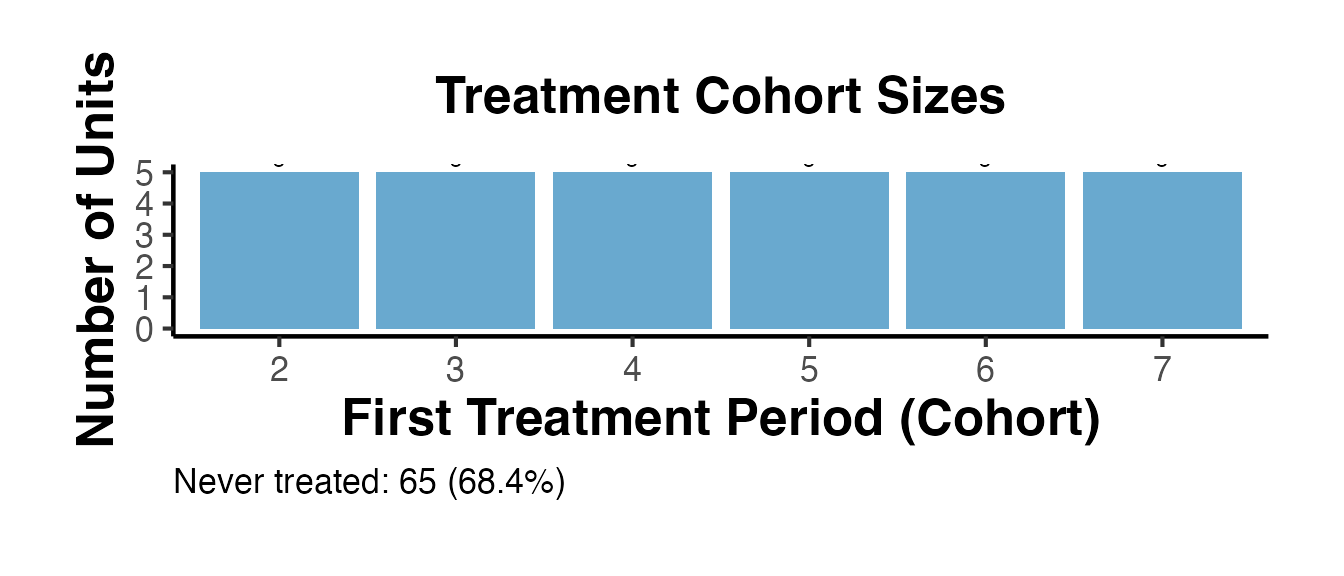

Staggered Adoption Summary Table

causalverse::staggered_summary() returns a comprehensive summary of the staggered design, including cohort sizes and the calendar plot.

summ <- causalverse::staggered_summary(

data = df_stagg_cal,

unit_var = "id",

time_var = "year",

treat_var = "treatment",

plot = TRUE

)

# Print cohort sizes table

cat("=== Cohort Sizes ===\n")

#> === Cohort Sizes ===

print(summ$cohort_sizes)

#> first_treat_period n_units share

#> 1 2 5 0.0526

#> 2 3 5 0.0526

#> 3 4 5 0.0526

#> 4 5 5 0.0526

#> 5 6 5 0.0526

#> 6 7 5 0.0526

cat("\nTotal units:", summ$n_units, "\n")

#>

#> Total units: 95

cat("Periods:", summ$n_periods, "\n")

#> Periods: 10

cat("Never-treated units:", summ$never_treated_n, "\n")

#> Never-treated units: 65

# Cohort bar chart

summ$cohort_plot + causalverse::ama_theme()

Trajectory Plot: Observed vs. Synthetic with Shaded Treatment Window

The trajectory plot is the central figure in any synthetic control paper. Here we build a fully customized version using ggplot2 and the estimated weights from SynthDID.

# California Prop 99 - full trajectory

Y_mat <- setup_ca$Y

time_lbl <- as.numeric(colnames(Y_mat))

treat_yr <- time_lbl[setup_ca$T0 + 1]

synth_Y <- as.vector(t(wts$omega) %*% Y_mat[seq_len(setup_ca$N0), ])

treated_Y <- as.numeric(Y_mat[nrow(Y_mat), ])

traj_df <- data.frame(

year = rep(time_lbl, 2),

value = c(treated_Y, synth_Y),

series = rep(c("California (Treated)", "Synthetic California"), each = length(time_lbl))

)

ggplot(traj_df, aes(x = year, y = value, color = series, linetype = series)) +

annotate("rect",

xmin = treat_yr, xmax = max(time_lbl) + 0.5,

ymin = -Inf, ymax = Inf,

fill = "#FEE0D2", alpha = 0.4

) +

geom_vline(xintercept = treat_yr, linetype = "dashed", color = "gray40") +

geom_line(linewidth = 1.1) +

annotate("text",

x = treat_yr + 0.3, y = max(treated_Y) * 0.97,

label = "Treatment\nstarts", hjust = 0, size = 3.2, color = "gray30"

) +

scale_color_manual(

values = c("California (Treated)" = "#D73027",

"Synthetic California" = "#2166AC")

) +

scale_linetype_manual(

values = c("California (Treated)" = "solid",

"Synthetic California" = "dashed")

) +

labs(

title = "California Prop 99: Observed vs. Synthetic Trajectory",

subtitle = paste0("SDID estimate = ",

round(as.numeric(est_sdid), 1),

" cigarette packs per capita"),

x = "Year",

y = "Cigarette Packs Sold per Capita",

color = NULL,

linetype = NULL

) +

causalverse::ama_theme() +

theme(legend.position = "bottom")

Unit Weight Bar Chart (Top Donors)

omega_df <- data.frame(

unit = rownames(setup_ca$Y)[seq_len(setup_ca$N0)],

weight = as.numeric(wts$omega)

)

# Keep all with positive weight

omega_df <- omega_df[omega_df$weight > 1e-4, ]

omega_df <- omega_df[order(omega_df$weight, decreasing = TRUE), ]

# Top 15

omega_top <- head(omega_df, 15)

omega_top$unit <- factor(omega_top$unit, levels = rev(omega_top$unit))

ggplot(omega_top, aes(x = unit, y = weight)) +

geom_col(fill = "#2166AC", alpha = 0.85) +

geom_text(aes(label = sprintf("%.3f", weight)), hjust = -0.1, size = 3) +

coord_flip(clip = "off") +

scale_y_continuous(expand = expansion(mult = c(0, 0.2))) +

labs(

title = "SynthDID Donor Unit Weights (Top 15)",

subtitle = "Prop 99 California analysis - states with weight > 0",

x = NULL,

y = "Weight"

) +

causalverse::ama_theme()

Time Weight Bar Chart

lambda_df <- data.frame(

period = seq_len(setup_ca$T0),

year = time_lbl[seq_len(setup_ca$T0)],

weight = as.numeric(wts$lambda)

)

ggplot(lambda_df, aes(x = year, y = weight)) +

geom_col(fill = "#D73027", alpha = 0.80) +

geom_hline(

yintercept = 1 / setup_ca$T0,

linetype = "dashed", color = "gray40"

) +

annotate("text",

x = min(lambda_df$year), y = 1 / setup_ca$T0 + 0.005,

label = "Uniform weight (DID)",

hjust = 0, size = 3, color = "gray40"

) +

labs(

title = "SynthDID Pre-Treatment Time Weights",

subtitle = "Higher weight on periods more similar to post-treatment period",

x = "Year (Pre-Treatment)",

y = "Weight"

) +

causalverse::ama_theme()

The dashed line shows the uniform weight that DID would assign (1/T0). SynthDID concentrates weight on periods that are more predictive of the post-treatment period.

Estimator Comparison with Confidence Intervals

set.seed(42)

# Compute estimates and SEs for all three methods

tau_sc_ca <- synthdid::sc_estimate(setup_ca$Y, setup_ca$N0, setup_ca$T0)

tau_did_ca <- synthdid::did_estimate(setup_ca$Y, setup_ca$N0, setup_ca$T0)

tau_sdid_ca <- synthdid::synthdid_estimate(setup_ca$Y, setup_ca$N0, setup_ca$T0)

# Use placebo SE (valid here: single treated unit, many donors)

get_placebo_se <- function(tau_obj) {

tryCatch(

sqrt(vcov(tau_obj, method = "placebo")),

error = function(e) NA_real_

)

}

est_vals <- c(

as.numeric(tau_sc_ca),

as.numeric(tau_did_ca),

as.numeric(tau_sdid_ca)

)

se_vals <- vapply(

list(tau_sc_ca, tau_did_ca, tau_sdid_ca),

get_placebo_se,

numeric(1)

)

comp_df <- data.frame(

method = c("Synthetic\nControl", "Difference-in-\nDifferences",

"Synthetic\nDiff-in-Diff"),

estimate = est_vals,

se = se_vals

)

comp_df$ci_lo <- comp_df$estimate - 1.96 * comp_df$se

comp_df$ci_hi <- comp_df$estimate + 1.96 * comp_df$se

comp_df$method <- factor(comp_df$method,

levels = comp_df$method)

ggplot(comp_df, aes(x = method, y = estimate)) +

geom_hline(yintercept = 0, linetype = "dashed", color = "gray50") +

geom_errorbar(aes(ymin = ci_lo, ymax = ci_hi),

width = 0.18, color = "#444444", linewidth = 0.8) +

geom_point(size = 4, color = "#2166AC") +

geom_text(aes(label = sprintf("%.1f", estimate)),

vjust = -1.1, size = 3.3, color = "#2166AC") +

labs(

title = "Proposition 99: SC vs. DID vs. SynthDID",

subtitle = "Point estimates with 95% placebo confidence intervals",

x = NULL,

y = "ATT (packs per capita per year)"

) +

causalverse::ama_theme()

Inference Methods Deep Dive

Overview of Inference Approaches

SynthDID standard errors cannot be computed from standard OLS formulas because the unit and time weights are estimated from the data. The synthdid package provides three resampling-based methods, each appropriate in different settings.

# Base DID data: multiple treated units - allows all three SE methods

df_did <- fixest::base_did |>

dplyr::mutate(

id = as.factor(id),

period = as.integer(period),

y = as.double(y),

treatment = as.integer(if_else(treat == 0, 0, post))

)

setup_did <- df_did |>

synthdid::panel.matrices(

unit = "id",

time = "period",

outcome = "y",

treatment = "treatment"

)

tau_did_base <- synthdid::synthdid_estimate(setup_did$Y, setup_did$N0, setup_did$T0)

cat("Point estimate:", round(as.numeric(tau_did_base), 4), "\n")

#> Point estimate: 4.8278

cat("N treated:", nrow(setup_did$Y) - setup_did$N0, "\n")

#> N treated: 55

cat("N control:", setup_did$N0, "\n")

#> N control: 53Placebo (In-Space) Standard Errors

The placebo method iterates through control units, treating each one as if it were treated, and computes the SDID estimator on the remaining controls. The standard deviation of these placebo estimates provides the SE.

# Standard placebo SE requires N_controls > N_treated

# base_did: 40 control, 10 treated → eligible

se_placebo_base <- sqrt(vcov(tau_did_base, method = "placebo"))

cat("Placebo SE:", round(se_placebo_base, 4), "\n")

# Per-period placebo SE from causalverse

set.seed(2024)

se_per_period <- causalverse::synthdid_se_placebo(tau_did_base, replications = 200)

print(se_per_period)Jackknife Standard Errors

The jackknife leaves one unit out at a time (treated or control, depending on implementation) and recomputes the estimate. The causalverse wrapper synthdid_se_jacknife() provides per-period jackknife SEs.

Bootstrap Standard Errors

Bootstrap resamples control units with replacement and recomputes estimates across B bootstrap draws. It is the most computationally intensive method but can be more robust in finite samples.

Comparing All Three SE Methods

est_val <- as.numeric(tau_did_base)

se_table <- data.frame(

Method = c("Placebo", "Jackknife", "Bootstrap"),

SE = round(c(se_placebo_base, se_jack_base, se_boot_base), 4),

CI_lower_95 = round(est_val - 1.96 *

c(se_placebo_base, se_jack_base, se_boot_base), 3),

CI_upper_95 = round(est_val + 1.96 *

c(se_placebo_base, se_jack_base, se_boot_base), 3),

Requirements = c("N_ctrl > N_treated", "N_treated >= 2 (or fallback)",

"N_treated >= 2")

)

cat("=== SE Method Comparison ===\n")

cat("Point estimate:", round(est_val, 4), "\n\n")

print(se_table)RMSPE Ratio Test: Permutation Inference

The RMSPE ratio test is a classic non-parametric inference approach from Abadie, Diamond, and Hainmueller (2010). It computes the ratio of post-treatment to pre-treatment prediction error (RMSPE) for both the treated unit and each control unit (treated as a placebo). A large ratio for the treated unit relative to the placebo distribution indicates a genuine treatment effect.

# Detailed RMSPE computation for California Prop 99

Y_ca <- setup_ca$Y

T0_ca <- setup_ca$T0

N0_ca <- setup_ca$N0

times_ca <- as.numeric(colnames(Y_ca))

treat_yr_ca <- times_ca[T0_ca + 1]

pre_idx_ca <- which(times_ca < treat_yr_ca)

post_idx_ca <- which(times_ca >= treat_yr_ca)

# Treated unit gap

synth_ca <- as.vector(t(wts$omega) %*% Y_ca[seq_len(N0_ca), ])

gap_treat <- as.numeric(Y_ca[nrow(Y_ca), ]) - synth_ca

rmspe_pre_treat <- sqrt(mean(gap_treat[pre_idx_ca]^2))

rmspe_post_treat <- sqrt(mean(gap_treat[post_idx_ca]^2))

ratio_treat <- rmspe_post_treat / rmspe_pre_treat

cat("=== RMSPE Ratio Test ===\n")

#> === RMSPE Ratio Test ===

cat("Treated unit pre-treatment RMSPE: ", round(rmspe_pre_treat, 4), "\n")

#> Treated unit pre-treatment RMSPE: 24.8952

cat("Treated unit post-treatment RMSPE: ", round(rmspe_post_treat, 4), "\n")

#> Treated unit post-treatment RMSPE: 41.6049

cat("RMSPE ratio (treated): ", round(ratio_treat, 4), "\n\n")

#> RMSPE ratio (treated): 1.6712

# Placebo RMSPE ratios for all control units

placebo_ratios_all <- vapply(seq_len(N0_ca), function(j) {

other <- setdiff(seq_len(N0_ca), j)

synth_j <- colMeans(Y_ca[other, , drop = FALSE])

gap_j <- as.numeric(Y_ca[j, ]) - synth_j

pre_r <- sqrt(mean(gap_j[pre_idx_ca]^2))

if (pre_r < 1e-10) return(NA_real_)

post_r <- sqrt(mean(gap_j[post_idx_ca]^2))

post_r / pre_r

}, numeric(1))

placebo_ratios_all <- na.omit(placebo_ratios_all)

# Permutation p-value

p_val <- mean(placebo_ratios_all >= ratio_treat)

cat("Number of placebo units: ", length(placebo_ratios_all), "\n")

#> Number of placebo units: 38

cat("Units with ratio >= treated unit: ",

sum(placebo_ratios_all >= ratio_treat), "\n")

#> Units with ratio >= treated unit: 6

cat("Permutation p-value: ", round(p_val, 4), "\n")

#> Permutation p-value: 0.1579

# Histogram

ratio_df_all <- data.frame(

ratio = c(as.numeric(placebo_ratios_all), ratio_treat),

type = c(rep("Placebo states", length(placebo_ratios_all)), "California")

)

ggplot(ratio_df_all, aes(x = ratio, fill = type)) +

geom_histogram(bins = 20, alpha = 0.75, position = "identity") +

geom_vline(xintercept = ratio_treat, color = "#D73027",

linewidth = 1.5, linetype = "dashed") +

scale_fill_manual(values = c("Placebo states" = "#4393C3",

"California" = "#D73027")) +

annotate("text",

x = ratio_treat * 1.05, y = Inf, vjust = 2,

label = paste0("California\nratio = ", round(ratio_treat, 1),

"\np = ", round(p_val, 3)),

hjust = 0, size = 3.5, color = "#D73027"

) +

labs(

title = "RMSPE Ratio: Permutation Inference for Prop 99",

subtitle = paste0("California's post/pre RMSPE ratio vs. all donor states\n",

"Permutation p-value = ", round(p_val, 3)),

x = "Post/Pre RMSPE Ratio",

y = "Count",

fill = NULL

) +

causalverse::ama_theme() +

theme(legend.position = "top")

A permutation p-value below 0.05 indicates that the treated unit’s RMSPE ratio is unusual compared to what we would expect under the null hypothesis of no treatment effect.

Staggered Adoption: A Complete Workflow

This section provides a complete, step-by-step workflow for staggered adoption using causalverse::synthdid_est_ate().

Setting Up the Staggered Data

# base_stagg: staggered DiD dataset with units treated at years 5, 6, or 7

# Never-treated units have year_treated = 10000 (a large placeholder)

df_full <- fixest::base_stagg |>

dplyr::mutate(

treatvar = as.integer(

time_to_treatment >= 0 & year_treated <= (5 + 2)

)

)

cat("Treatment cohort distribution:\n")

#> Treatment cohort distribution:

print(table(df_full$year_treated[!duplicated(df_full$id)]))

#>

#> 2 3 4 5 6 7 8 9 10 10000

#> 5 5 5 5 5 5 5 5 5 50

cat("\nPanel dimensions:\n")

#>

#> Panel dimensions:

cat("Units:", length(unique(df_full$id)), "\n")

#> Units: 95

cat("Periods:", length(unique(df_full$year)), "\n")

#> Periods: 10Cohort Structure Visualization

# Staggered summary for the treated cohorts only

summ_full <- causalverse::staggered_summary(

data = df_full,

unit_var = "id",

time_var = "year",

treat_var = "treatvar",

plot = TRUE

)

# Show calendar plot

summ_full$calendar_plot + causalverse::ama_theme(base_size = 9)

summ_full$cohort_plot + causalverse::ama_theme()

Per-Cohort SDID Estimates

The synthdid_est_ate() function estimates a separate SynthDID model for each adoption cohort, then aggregates to an overall ATE.

set.seed(42)

est_staggered <- causalverse::synthdid_est_ate(

data = df_full,

adoption_cohorts = 5:7,

lags = 3,

leads = 3,

time_var = "year",

unit_id_var = "id",

treated_period_var = "year_treated",

treat_stat_var = "treatvar",

outcome_var = "y"

)

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 55

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 50

# Per-period ATE table

ate_df <- data.frame(

Period = names(est_staggered$TE_mean_w),

ATE = round(est_staggered$TE_mean_w, 4),

SE = round(est_staggered$SE_mean_w, 4)

)

ate_df$CI_lo <- round(ate_df$ATE - 1.96 * ate_df$SE, 3)

ate_df$CI_hi <- round(ate_df$ATE + 1.96 * ate_df$SE, 3)

print(ate_df)

#> Period ATE SE CI_lo CI_hi

#> -3 -3 -0.0394 0.1527 -0.339 0.260

#> -2 -2 -0.0470 0.2042 -0.447 0.353

#> -1 -1 0.0864 0.1880 -0.282 0.455

#> 0 0 -4.7257 0.6792 -6.057 -3.394

#> 1 1 -4.3725 0.4828 -5.319 -3.426

#> 2 2 -3.4619 0.7168 -4.867 -2.057

#> 3 3 -1.5984 0.6707 -2.913 -0.284

#> cumul.0 cumul.0 -4.7257 0.6792 -6.057 -3.394

#> cumul.1 cumul.1 -4.5491 0.4488 -5.429 -3.669

#> cumul.2 cumul.2 -4.1867 0.4202 -5.010 -3.363

#> cumul.3 cumul.3 -3.5396 0.3989 -4.321 -2.758

# Plot

causalverse::synthdid_plot_ate(est_staggered) + causalverse::ama_theme()

Comparing Staggered SDID vs. DID vs. SC

methods_compare <- c("synthdid", "did", "sc")

results_staggered <- lapply(methods_compare, function(m) {

causalverse::synthdid_est_ate(

data = df_full,

adoption_cohorts = 5:7,

lags = 3,

leads = 3,

time_var = "year",

unit_id_var = "id",

treated_period_var = "year_treated",

treat_stat_var = "treatvar",

outcome_var = "y",

method = m

)

})

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 55

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 50

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 55

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 50

#> Adoption Cohort: 5

#> Treated units: 5 Control units: 60

#> Adoption Cohort: 6

#> Treated units: 5 Control units: 55

#> Adoption Cohort: 7

#> Treated units: 5 Control units: 50

names(results_staggered) <- methods_compare

# Aggregate overall ATEs for comparison

overall_ates <- lapply(names(results_staggered), function(nm) {

res <- results_staggered[[nm]]

te <- res$TE_mean_w

se <- res$SE_mean_w

# Weighted average across periods (equal weighting for display)

data.frame(

Method = nm,

Mean_ATE = round(mean(te, na.rm = TRUE), 4),

Mean_SE = round(mean(se, na.rm = TRUE), 4)

)

})

cat("=== Staggered ATE Comparison Across Estimators ===\n")

#> === Staggered ATE Comparison Across Estimators ===

print(do.call(rbind, overall_ates))

#> Method Mean_ATE Mean_SE

#> 1 synthdid -2.8327 0.4583

#> 2 did -2.7699 0.4960

#> 3 sc -3.1116 0.5626Covariates in SynthDID: Residualization and Balance

Why Residualize?

The standard SynthDID estimator uses only the outcome variable to compute weights. When time-varying covariates are available, residualizing the outcome on those covariates can improve pre-treatment fit by removing variation that the covariates explain, leaving the weights to focus on unexplained dynamics.

Step-by-Step Residualization

df_cov <- fixest::base_did |>

dplyr::mutate(

id = as.factor(id),

period = as.integer(period),

y = as.double(y),

treatment = as.integer(if_else(treat == 0, 0, post))

)

# Step 1: Conservative residualization using pre-treatment data only

beta_pre <- lm(y ~ x1, data = df_cov |> dplyr::filter(post == 0))

df_cov$y_res <- df_cov$y - predict(beta_pre, newdata = df_cov)

# Step 2: Build panel matrices for raw outcome and residualized outcome

setup_raw <- df_cov |>

synthdid::panel.matrices(

unit = "id", time = "period",

outcome = "y", treatment = "treatment"

)

setup_res <- df_cov |>

synthdid::panel.matrices(

unit = "id", time = "period",

outcome = "y_res", treatment = "treatment"

)

# Step 3: Estimate both

tau_raw <- synthdid::synthdid_estimate(setup_raw$Y, setup_raw$N0, setup_raw$T0)

tau_res <- synthdid::synthdid_estimate(setup_res$Y, setup_res$N0, setup_res$T0)

cat("=== Covariate Adjustment Comparison ===\n")

#> === Covariate Adjustment Comparison ===

cat("SynthDID (raw outcome): ", round(as.numeric(tau_raw), 4), "\n")

#> SynthDID (raw outcome): 4.8278

cat("SynthDID (residualized on x1): ", round(as.numeric(tau_res), 4), "\n")

#> SynthDID (residualized on x1): 4.8501Pre-Treatment Fit Assessment

A key diagnostic is whether the residualized outcome achieves better pre-treatment fit:

compute_prefit_rmspe <- function(setup) {

tau_obj <- synthdid::synthdid_estimate(setup$Y, setup$N0, setup$T0)

wts_obj <- attr(tau_obj, "weights")

synth_oc <- as.vector(t(wts_obj$omega) %*% setup$Y[seq_len(setup$N0), ])

treat_oc <- as.numeric(setup$Y[nrow(setup$Y), ])

gap_oc <- treat_oc - synth_oc

sqrt(mean(gap_oc[seq_len(setup$T0)]^2))

}

rmspe_raw <- compute_prefit_rmspe(setup_raw)

rmspe_res <- compute_prefit_rmspe(setup_res)

cat("Pre-treatment RMSPE (raw): ", round(rmspe_raw, 5), "\n")

#> Pre-treatment RMSPE (raw): 4.28485

cat("Pre-treatment RMSPE (residualized): ", round(rmspe_res, 5), "\n")

#> Pre-treatment RMSPE (residualized): 4.95701

cat("Improvement: ",

round(100 * (rmspe_raw - rmspe_res) / rmspe_raw, 1), "%\n")

#> Improvement: -15.7 %Covariate Balance Before and After Weighting

After computing SynthDID weights, we can assess whether the weighted control group achieves better covariate balance relative to the treated group.

# Extract unit weights

omega_raw <- attr(tau_raw, "weights")$omega

# Unit IDs for control vs. treated

unit_ids <- rownames(setup_raw$Y)

ctrl_ids <- unit_ids[seq_len(setup_raw$N0)]

treat_ids <- unit_ids[(setup_raw$N0 + 1):nrow(setup_raw$Y)]

# Pre-treatment x1 means for each unit

df_pre <- df_cov |> dplyr::filter(post == 0)

ctrl_means <- tapply(

df_pre$x1[df_pre$id %in% ctrl_ids],

df_pre$id[df_pre$id %in% ctrl_ids],

mean, na.rm = TRUE

)

treat_mean_x1 <- mean(df_pre$x1[df_pre$id %in% treat_ids], na.rm = TRUE)

# Unweighted control mean

unweighted_ctrl_mean <- mean(ctrl_means, na.rm = TRUE)

# Weighted control mean using SynthDID omega

ctrl_means_aligned <- ctrl_means[names(omega_raw)]

ctrl_means_aligned[is.na(ctrl_means_aligned)] <- 0

weighted_ctrl_mean <- sum(omega_raw * ctrl_means_aligned)

cat("=== Covariate Balance (x1) ===\n")

#> === Covariate Balance (x1) ===

cat("Treated mean: ", round(treat_mean_x1, 4), "\n")

#> Treated mean: 0.1712

cat("Unweighted control mean: ", round(unweighted_ctrl_mean, 4), "\n")

#> Unweighted control mean: 0.0458

cat("SynthDID-weighted ctrl mean:", round(weighted_ctrl_mean, 4), "\n")

#> SynthDID-weighted ctrl mean: 0

cat("\nBias (unweighted): ",

round(abs(treat_mean_x1 - unweighted_ctrl_mean), 4), "\n")

#>

#> Bias (unweighted): 0.1254

cat("Bias (weighted): ",

round(abs(treat_mean_x1 - weighted_ctrl_mean), 4), "\n")

#> Bias (weighted): 0.1712Multiple Treated Units

When multiple units are treated, SynthDID aggregates the per-unit estimates into an overall ATT. This section demonstrates setup and estimation with more than one treated unit.

Setup with Multiple Treated Units

# base_did has multiple treated and control units

# Let's also examine the structure

df_multi_t <- fixest::base_did |>

dplyr::mutate(

id = as.factor(id),

period = as.integer(period),

y = as.double(y),

treatment = as.integer(if_else(treat == 0, 0, post))

)

# Panel structure

cat("Units:", length(unique(df_multi_t$id)), "\n")

#> Units: 108

cat("Periods:", length(unique(df_multi_t$period)), "\n")

#> Periods: 10

cat("Treated units:", sum(unique(df_multi_t[, c("id", "treat")])$treat), "\n")

#> Treated units: 55

cat("Control units:",

sum(unique(df_multi_t[, c("id", "treat")])$treat == 0), "\n")

#> Control units: 53

setup_multi <- df_multi_t |>

synthdid::panel.matrices(

unit = "id", time = "period",

outcome = "y", treatment = "treatment"

)

cat("\nPanel matrix dimensions:", nrow(setup_multi$Y), "units x",

ncol(setup_multi$Y), "periods\n")

#>

#> Panel matrix dimensions: 108 units x 10 periods

cat("N0 (control):", setup_multi$N0, "\n")

#> N0 (control): 53

cat("N_treated:", nrow(setup_multi$Y) - setup_multi$N0, "\n")

#> N_treated: 55Aggregate and Per-Unit Estimates

tau_multi_t <- synthdid::synthdid_estimate(

setup_multi$Y, setup_multi$N0, setup_multi$T0

)

cat("Aggregate ATT (SynthDID):", round(as.numeric(tau_multi_t), 4), "\n")

# With multiple treated units, all three SE methods are available

se_jack_multi <- sqrt(vcov(tau_multi_t, method = "jackknife"))

se_boot_multi <- sqrt(vcov(tau_multi_t, method = "bootstrap"))

se_plac_multi <- sqrt(vcov(tau_multi_t, method = "placebo"))

cat("\n=== Standard Errors (multiple treated units) ===\n")

cat("Jackknife SE: ", round(se_jack_multi, 4), "\n")

cat("Bootstrap SE: ", round(se_boot_multi, 4), "\n")

cat("Placebo SE: ", round(se_plac_multi, 4), "\n")

# Jackknife CI

est_m <- as.numeric(tau_multi_t)

cat("\n95% CI (Jackknife): (",

round(est_m - 1.96 * se_jack_multi, 3), ",",

round(est_m + 1.96 * se_jack_multi, 3), ")\n")Using panel_estimate() for Multiple Estimators

results_multi <- causalverse::panel_estimate(

setup_multi,

selected_estimators = c("synthdid", "did", "sc", "sc_ridge", "difp")

)

cat("=== Multi-Unit Estimator Comparison ===\n")

print(causalverse::process_panel_estimate(results_multi))Per-Unit Standard Errors

With multiple treated units, per-unit treatment effects and placebo SEs can help identify heterogeneous effects:

# Per-period effects using causalverse

per_period_multi <- causalverse::synthdid_est_per(

Y = setup_multi$Y,

N0 = setup_multi$N0,

T0 = setup_multi$T0,

weights = attr(tau_multi_t, "weights")

)

cat("Per-period estimates (post-treatment):\n")

T0_m <- setup_multi$T0

T_m <- ncol(setup_multi$Y)

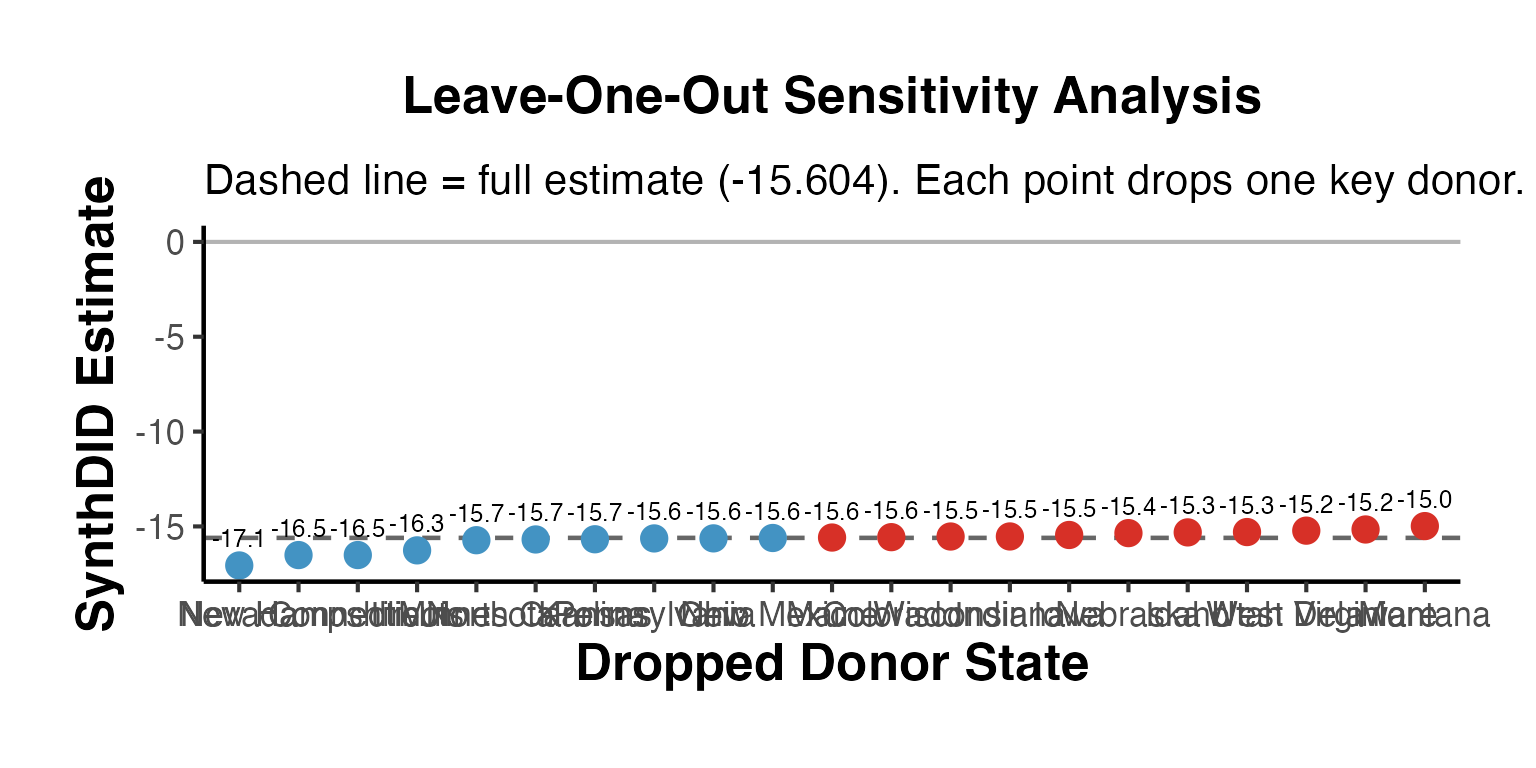

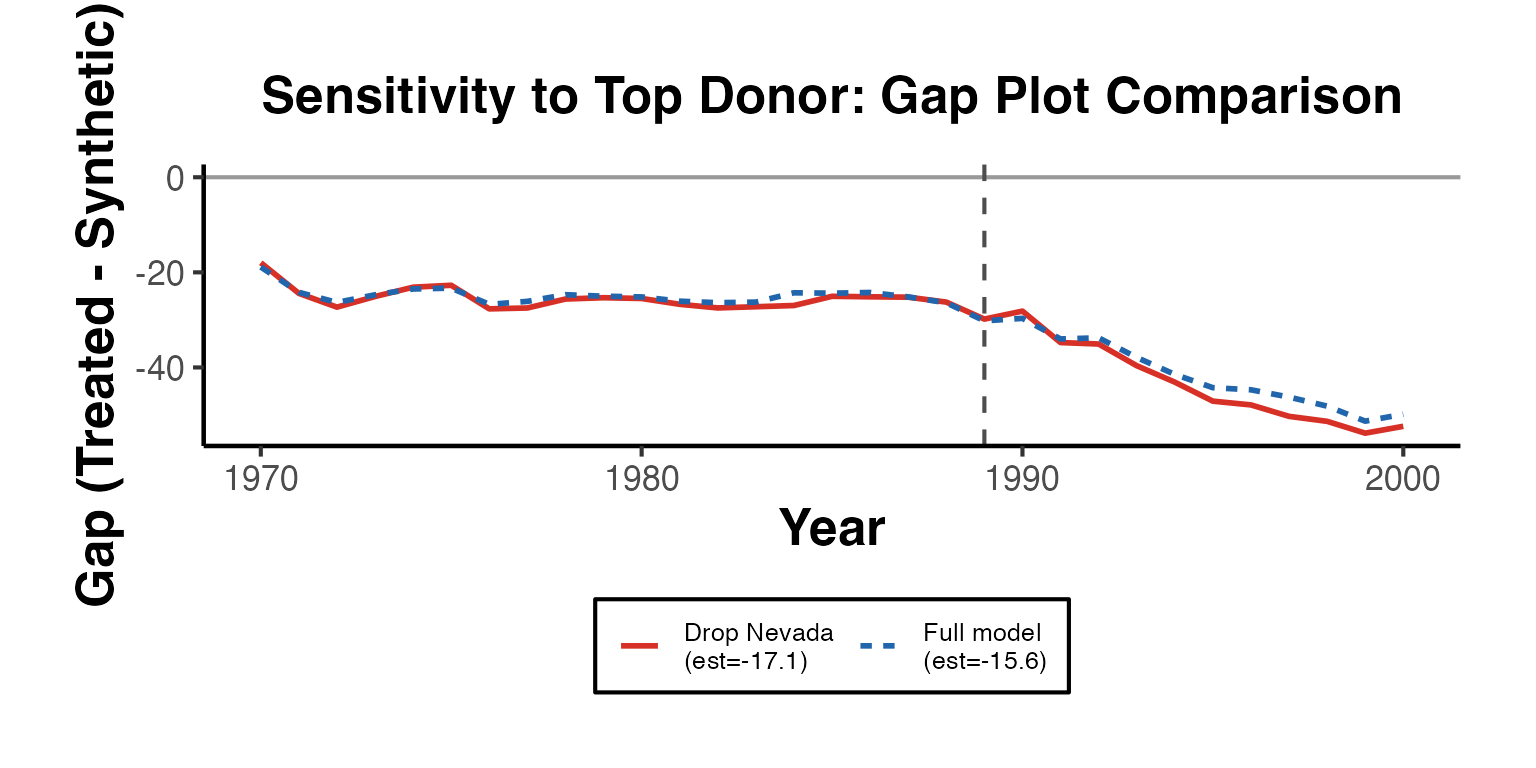

print(round(per_period_multi$est[(T0_m + 1):T_m], 4))Sensitivity Analysis: Leave-One-Out Donors

Sensitivity analysis for synthetic control methods typically involves dropping one donor unit at a time and examining whether estimates remain stable.

Leave-One-Out Estimation

set.seed(99)

Y_loo <- setup_ca$Y

N0_loo <- setup_ca$N0

T0_loo <- setup_ca$T0

# Donors with non-negligible weight (focus LOO on important donors)

omega_full <- attr(est_sdid, "weights")$omega

important_donors <- which(omega_full > 0.01)

cat("Donors with weight > 0.01:", length(important_donors), "\n")

#> Donors with weight > 0.01: 21

cat("Donor names:", rownames(Y_loo)[important_donors], "\n\n")

#> Donor names: Colorado Connecticut Delaware Idaho Illinois Indiana Iowa Kansas Maine Minnesota Montana Nebraska Nevada New Hampshire New Mexico North Carolina Ohio Pennsylvania Utah West Virginia Wisconsin

# Run LOO: exclude one important donor at a time

loo_results <- lapply(important_donors, function(j) {

keep_ctrl <- setdiff(seq_len(N0_loo), j)

Y_loo_j <- Y_loo[c(keep_ctrl, nrow(Y_loo)), ]

tryCatch({

tau_j <- synthdid::synthdid_estimate(

Y_loo_j,

N0 = length(keep_ctrl),

T0 = T0_loo

)

list(

dropped = rownames(Y_loo)[j],

est = as.numeric(tau_j),

ok = TRUE

)

}, error = function(e) {

list(dropped = rownames(Y_loo)[j], est = NA_real_, ok = FALSE)

})

})

loo_df <- data.frame(

Dropped = sapply(loo_results, `[[`, "dropped"),

Estimate = round(sapply(loo_results, `[[`, "est"), 3),

stringsAsFactors = FALSE

)

loo_df$Dropped <- factor(loo_df$Dropped,

levels = loo_df$Dropped[order(loo_df$Estimate)])

full_est_val <- round(as.numeric(est_sdid), 3)

loo_df$Delta <- loo_df$Estimate - full_est_val

cat("=== Leave-One-Out Sensitivity ===\n")

#> === Leave-One-Out Sensitivity ===

cat("Full estimate:", full_est_val, "\n\n")

#> Full estimate: -15.604

print(loo_df)

#> Dropped Estimate Delta

#> 1 Colorado -15.566 0.038